Anthropic has expanded its agentic development capabilities by turning the Claude Code SDK into the Claude Agent SDK. As of early 2026, the SDK provides native, advanced multi-agent orchestration designed for enterprise workflows. It enables developers to build systems in which a central orchestrator agent delegates complex, multi-step tasks to specialized subagents.

Key Features of the Claude Agent SDK and Multi-Agent Routing

The updated SDK supports building, deploying, and managing complex agentic workflows.

- Native Multi-Agent Orchestration: The framework supports hierarchies in which a main agent, powered by Claude Opus, delegates tasks to specialized subagents.

- Persistent and Autonomous: Unlike stateless API calls, the SDK runs a long-running process that can manage complex workflows, maintain conversational state across multiple queries, and execute commands in a persistent shell environment.

- Model Context Protocol (MCP) Integration: Agents can connect to external tools, data sources, and databases, improving interoperability in enterprise environments.

- Agent Teams and Subagents: The system supports agent teams (experimental as of early 2026), which allow multiple Claude Code sessions to collaborate on shared tasks while a lead session directs the workflow.

- Production-Grade Controls: Built-in guardrails provide granular permissions, including tool allowlisting and working-directory isolation.
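To make the permission guardrails concrete, here is a minimal sketch of what tool allowlisting and working-directory isolation check for. It is not the SDK's API: `ToolPolicy` and `is_allowed` are hypothetical names, the tool names are illustrative strings, and the real SDK exposes these controls through its own configuration options.

```python
from __future__ import annotations

from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical guardrail: a tool allowlist plus a confined working directory."""

    allowed_tools: frozenset[str]
    working_dir: Path

    def is_allowed(self, tool: str, target: str | None = None) -> bool:
        # Deny by default: only explicitly allowlisted tools may run.
        if tool not in self.allowed_tools:
            return False
        if target is None:
            return True
        # Resolve the target against the working directory and reject anything
        # that escapes it, e.g. via "../" segments or an absolute path.
        root = self.working_dir.resolve()
        resolved = (root / target).resolve()
        return resolved == root or root in resolved.parents


policy = ToolPolicy(frozenset({"Read", "Grep"}), Path("/tmp/agent-workspace"))
print(policy.is_allowed("Read", "src/main.py"))       # True
print(policy.is_allowed("Read", "../../etc/passwd"))  # False
print(policy.is_allowed("Bash", "build.sh"))          # False
```

Deny-by-default keeps the check simple: granting a capability means adding a name to the allowlist rather than remembering to block every dangerous one.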

Enterprise AI Focus

The shift to the Claude Agent SDK supports the creation of specialized agents for various business domains.

Specialized roles:

- Legal assistants

- Financial advisors and customer support bots

- Codebase automation: Coding agents that can understand and edit entire codebases, run bash commands, and manage git worktrees

- Integration with Enterprise Tools: The SDK supports integration with platforms such as GitHub and allows private plugin marketplaces for greater enterprise control.

Technical Details and Availability

- Languages: Available for both Python and TypeScript.

- Migration: Developers are encouraged to migrate from the Claude Code SDK to the Claude Agent SDK.

- Commands: New Agent SDK commands allow developers to define workflows as code and manage multi-agent systems.

Claude now has Research capabilities that allow it to search across the web, Google Workspace, and any connected integrations to accomplish complex tasks.

The journey of this multi-agent system from prototype to production taught us critical lessons about system architecture, tool design, and prompt engineering. A multi-agent system consists of multiple agents (LLMs autonomously using tools in a loop) working together. Our Research feature involves an agent that plans a research process based on the user's query and then uses tools to create parallel agents that search for information simultaneously. Systems with multiple agents introduce new challenges in agent coordination, evaluation, and reliability. This post breaks down the principles that worked for us. We hope you will find them useful when building your own multi-agent systems.
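Each agent in such a system is an LLM that repeatedly picks a tool, observes the result, and decides what to do next. The sketch below shows that loop in isolation. It is illustrative only: `scripted_model` is a hypothetical stub standing in for a real model call, and the `search` tool with its tiny index is invented for the example.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical tool: a lookup in a tiny in-memory index instead of real web search.
INDEX = {
    "agent sdk": "The Claude Agent SDK is available for Python and TypeScript.",
    "mcp": "MCP lets agents connect to external tools and data sources.",
}


def search(query: str) -> str:
    return INDEX.get(query, "no results")


TOOLS: dict[str, Callable[[str], str]] = {"search": search}


def scripted_model(history: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM call that returns (action, argument).

    The action is a tool name or "final". A real model would choose based on
    the whole history; this stub follows a fixed script keyed on progress.
    """
    prefix = "observation: "
    observations = [h.removeprefix(prefix) for h in history if h.startswith(prefix)]
    if len(observations) == 0:
        return "search", "agent sdk"
    if len(observations) == 1:
        return "search", "mcp"
    return "final", " ".join(observations)


def run_agent(task: str, max_turns: int = 10) -> str:
    history = [f"task: {task}"]
    for _ in range(max_turns):
        action, arg = scripted_model(history)
        if action == "final":
            return arg
        # Run the chosen tool and feed its result back into the next turn.
        history.append(f"observation: {TOOLS[action](arg)}")
    return "stopped: turn budget exhausted"


print(run_agent("Summarize the Agent SDK"))
```

The `max_turns` budget matters in practice: an autonomous loop needs an explicit stopping condition besides the model deciding it is done.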

Benefits of a Multi-agent System

Research work involves open-ended problems where it is very difficult to predict the required steps in advance. You can't hard-code a fixed path for exploring complex topics, because the process is inherently dynamic and path-dependent. When people conduct research, they tend to continuously update their approach based on discoveries, following leads that emerge during the investigation.

This unpredictability makes AI agents particularly well suited for research tasks. Research demands the flexibility to pivot or explore tangential connections as the investigation unfolds. The model must operate autonomously for many turns, deciding which directions to pursue based on intermediate findings. A linear, one-shot pipeline cannot handle these tasks.

The essence of search is compression: distilling insights from a vast corpus. Subagents facilitate compression by operating in parallel with their own context windows, exploring different aspects of the question simultaneously before condensing the most important tokens for the lead research agent. Each subagent also provides separation of concerns (distinct tools, prompts, and exploration trajectories), which reduces path dependency and enables thorough, independent investigations. Once intelligence reaches a threshold, multi-agent systems become a vital way to scale performance. For instance, although individual humans have become more intelligent over the last 100,000 years, human societies have become exponentially more capable in the information age because of our collective intelligence and ability to coordinate. Even generally intelligent agents face limits when operating as individuals; groups of agents can accomplish far more.
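The compression idea can be sketched with `asyncio`: each subagent explores one aspect in its own private context and hands the lead agent only a short digest. This is a toy under stated assumptions, not the production system: the corpus is hypothetical, `run_subagent` and `lead_agent` are illustrative names, and "compression" here is plain truncation, where a real subagent would ask the model to summarize its findings.

```python
from __future__ import annotations

import asyncio
from dataclasses import dataclass, field

# Hypothetical corpus standing in for search results.
CORPUS = [
    "Subagents run in parallel with their own context windows.",
    "Each subagent has distinct tools, prompts, and trajectories.",
    "The lead agent receives only condensed findings.",
    "Unrelated document about cooking pasta.",
]


@dataclass
class SubagentContext:
    aspect: str
    notes: list[str] = field(default_factory=list)  # private to this subagent


async def run_subagent(aspect: str, max_chars: int = 60) -> str:
    ctx = SubagentContext(aspect)
    await asyncio.sleep(0)  # placeholder for real I/O such as search calls
    # Explore: collect every document that mentions this aspect.
    ctx.notes.extend(doc for doc in CORPUS if aspect in doc.lower())
    # Compress: return a bounded digest instead of the full notes.
    digest = " | ".join(ctx.notes)
    return f"[{aspect}] {digest[:max_chars]}"


async def lead_agent(aspects: list[str]) -> list[str]:
    # Fan out one subagent per aspect; gather preserves the input order.
    return await asyncio.gather(*(run_subagent(a) for a in aspects))


summaries = asyncio.run(lead_agent(["subagent", "lead agent"]))
for s in summaries:
    print(s)
```

The lead agent's context grows with the number of digests, not with the volume of material each subagent read, which is the point of the separation.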

Our internal evaluations show that multi-agent research systems excel especially at breadth-first queries that involve pursuing multiple independent directions simultaneously. We found that a multi-agent system with Claude Opus 4 as the lead agent and Claude Sonnet 4 subagents outperformed single-agent Claude Opus 4 by 90.2% on our internal research eval. For example, when asked to identify all the board members of the companies in the Information Technology S&P 500, the multi-agent system found the correct answers by decomposing the task into subagent tasks, whereas the single-agent system failed to find the answer with slow, sequential searches.

Multi-agent systems work mainly because they help spend enough tokens to solve the problem. In our analysis, three factors explain 95% of the performance variance in the BrowseComp evaluation (which tests the ability of browsing agents to locate hard-to-find information). Token usage alone explains 80% of the variance, with the number of tool calls and the model choice as the two other explanatory factors. This finding validates our architecture, which distributes work across agents with separate context windows to add capacity for parallel reasoning. The latest Claude models act as large efficiency multipliers on token use: upgrading to Claude Sonnet 4 yields a larger performance gain than doubling the token budget on Claude Sonnet 3.7. Multi-agent architectures effectively scale token usage for tasks that exceed the limits of single agents.

There is a downside: in practice, these architectures burn through tokens fast. In our data, agents typically use about 4x more tokens than chat interactions, and multi-agent systems use about 15x more tokens than chats. For economic viability, multi-agent systems require tasks whose value is high enough to pay for the increased cost. Further, some domains that require all agents to share the same context or involve many dependencies between agents are not a good fit for multi-agent systems today. For instance, most coding tasks involve fewer truly parallelizable tasks than research, and LLM agents are not yet great at coordinating and delegating to other agents in real time. We've found that multi-agent systems excel at valuable tasks that involve heavy parallelization, information that exceeds single context windows, and interfacing with numerous complex tools.
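A back-of-the-envelope check of the economics, using the 4x and 15x token multipliers relative to a chat interaction. The 2,000-token baseline, the blended price per million tokens, and the task values are hypothetical placeholders, not figures from our data; substitute your own.

```python
from __future__ import annotations

# Token multipliers relative to a plain chat interaction.
MULTIPLIERS = {"chat": 1, "single_agent": 4, "multi_agent": 15}

# Hypothetical inputs: adjust to your own workload and pricing.
BASELINE_CHAT_TOKENS = 2_000
PRICE_PER_MILLION_TOKENS = 10.0  # USD, placeholder blended rate


def estimated_cost(mode: str) -> float:
    tokens = BASELINE_CHAT_TOKENS * MULTIPLIERS[mode]
    return tokens / 1_000_000 * PRICE_PER_MILLION_TOKENS


def worth_running(mode: str, task_value_usd: float) -> bool:
    # Economically viable only if the task's value covers its token cost.
    return task_value_usd >= estimated_cost(mode)


for mode, factor in MULTIPLIERS.items():
    tokens = BASELINE_CHAT_TOKENS * factor
    print(f"{mode:>12}: {tokens:>6} tokens, ${estimated_cost(mode):.2f}")
```

Under these placeholder numbers, a task worth $0.25 justifies a single agent ($0.08) but not the multi-agent system ($0.30); the comparison, not the specific prices, is what carries over.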

Architecture Overview for Research

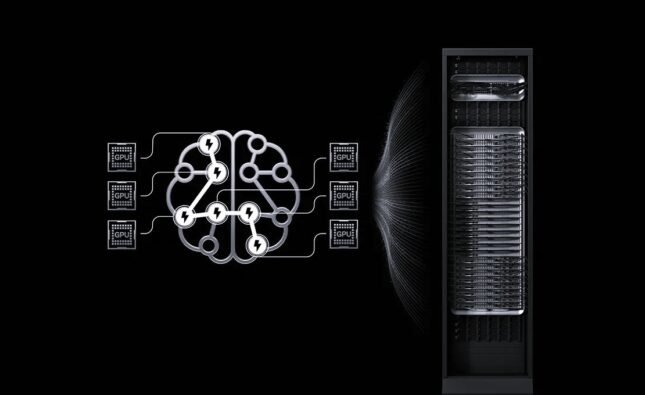

Our Research system uses a multi-agent architecture with an orchestrator-worker pattern, in which a lead agent coordinates the process while delegating to specialized subagents that operate in parallel.

When a user submits a query, the lead agent analyzes it, develops a strategy, and spawns subagents to explore different aspects simultaneously. As shown in the diagram above, the subagents act as intelligent filters: they iteratively use search tools to gather information (in this case, on AI agent companies in 2025) and then return a list of companies to the lead agent, which compiles the final answer.
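The lead-agent/subagent flow described above (plan, fan out, filter, compile) can be sketched with a thread pool. Everything here is hypothetical: `plan` returns a fixed list of segments instead of model-generated aspects, `fake_search` returns canned results with placeholder company names, and the "filter" is a keyword match rather than model judgment.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor

# Hypothetical canned search results keyed by aspect; a real subagent would
# call a search tool repeatedly and refine its queries.
FAKE_RESULTS = {
    "coding agents": ["Company A (AI agents)", "Company B (databases)"],
    "customer support agents": ["Company C (AI agents)", "Company D (AI agents)"],
    "research agents": ["Company E (AI agents)", "Company A (AI agents)"],
}


def plan(query: str) -> list[str]:
    # Lead agent: decompose the query into independent aspects (stubbed).
    return list(FAKE_RESULTS)


def fake_search(aspect: str) -> list[str]:
    return FAKE_RESULTS.get(aspect, [])


def subagent(aspect: str) -> list[str]:
    # Worker: act as a filter, keeping only relevant results and their names.
    return [r.split(" (")[0] for r in fake_search(aspect) if "AI agents" in r]


def lead_agent(query: str) -> list[str]:
    aspects = plan(query)
    with ThreadPoolExecutor(max_workers=max(1, len(aspects))) as pool:
        partial_lists = list(pool.map(subagent, aspects))
    # Compile: merge and de-duplicate the subagents' lists.
    return sorted({name for names in partial_lists for name in names})


print(lead_agent("AI agent companies in 2025"))
```

Because each worker returns an already-filtered list, the compile step stays a cheap merge; overlapping findings (Company A appears twice) are resolved by the lead agent, not by the workers.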

Traditional approaches to retrieval-augmented generation (RAG) rely on static retrieval: they fetch the set of chunks most similar to an input query and use those chunks to generate a response. In contrast, our architecture uses a multi-step search that dynamically finds relevant information, adapts to new findings, and analyzes results to formulate high-quality answers.
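To contrast the two retrieval styles, here is a toy sketch over a hypothetical four-document corpus. `static_retrieve` does one-shot top-k retrieval by word overlap (a crude stand-in for embedding similarity); `multi_step_search` builds each new query from terms found in the previous result, so it can follow a lead the original query never mentioned. The corpus, scoring, and lead-following rule are illustrative assumptions, not our production search logic.

```python
from __future__ import annotations

# Hypothetical toy corpus; names are invented for illustration.
CORPUS = [
    "acme was founded by jane doe",
    "jane doe previously led orbit labs",
    "orbit labs builds small satellites",
    "bananas are rich in potassium",
]
STOPWORDS = {"who", "was", "by", "are", "in", "previously", "led"}


def tokens(text: str) -> set[str]:
    return {w for w in text.lower().split() if w not in STOPWORDS}


def score(doc: str, terms: set[str]) -> int:
    # Word overlap: a crude stand-in for embedding similarity.
    return len(tokens(doc) & terms)


def static_retrieve(query: str, k: int = 1) -> list[str]:
    """One-shot RAG-style retrieval: top-k chunks by similarity, then stop."""
    terms = tokens(query)
    ranked = sorted(CORPUS, key=lambda d: score(d, terms), reverse=True)
    return [d for d in ranked[:k] if score(d, terms) > 0]


def multi_step_search(query: str, max_steps: int = 5) -> list[str]:
    """Iterative search: each finding supplies the terms for the next query."""
    found: list[str] = []
    terms = tokens(query)
    searched = set(terms)
    for _ in range(max_steps):
        candidates = [d for d in CORPUS if d not in found]
        best = max(candidates, key=lambda d: score(d, terms), default=None)
        if best is None or score(best, terms) == 0:
            break  # nothing relevant left to follow
        found.append(best)
        # Adapt: follow leads that first appeared in the new finding.
        terms = tokens(best) - searched
        if not terms:
            break
        searched |= terms
    return found


print(static_retrieve("who founded acme"))
print(multi_step_search("who founded acme"))
```

Static retrieval returns only the founding document; the multi-step loop follows "jane doe" to "orbit labs" and then to what Orbit Labs builds, while never touching the irrelevant document.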