Meta has introduced a new AI model, Segment Anything Model 2 (SAM2), that can identify and track any object as it moves through a video. It builds on the first SAM, which only worked with images. This upgrade creates new possibilities for editing and analyzing videos.

SAM2’s ability to segment objects in real-time is a major technical advance. It shows how AI can handle moving images and distinguish between different objects, even as they enter and exit the frame.

To put SAM2’s achievement in context, segmentation means determining which pixels in an image belong to which objects. Doing this automatically makes complex images much easier to process or edit, and it was the key feature of Meta’s first SAM. That model has been used to segment coral reef images, analyze satellite imagery for disaster relief, and even analyze cellular images to detect skin cancer.
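To make the idea of segmentation concrete, here is a minimal Python sketch. It is a toy illustration only: the image and mask are hand-written, hypothetical data, and the helper names `pixels_per_object` and `edit_object` are made up for this example. It does not use or represent SAM or SAM2.

```python
# Toy illustration only: a hand-written label mask, not output from SAM or SAM2.
from collections import Counter

# A 4x6 grayscale "image" (hypothetical pixel values).
image = [
    [10, 10, 200, 200, 10, 10],
    [10, 10, 200, 200, 10, 10],
    [10, 90,  90,  10, 10, 10],
    [10, 90,  90,  10, 10, 10],
]

# A segmentation mask has the same shape as the image. Each entry names
# the object that pixel belongs to: 0 = background, 1 and 2 = two objects.
mask = [
    [0, 0, 1, 1, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 2, 2, 0, 0, 0],
    [0, 2, 2, 0, 0, 0],
]


def pixels_per_object(mask):
    """Count how many pixels carry each label."""
    return Counter(label for row in mask for label in row)


def edit_object(image, mask, label, new_value):
    """Return a copy of the image with only one object's pixels changed."""
    return [
        [new_value if m == label else px for px, m in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


counts = pixels_per_object(mask)
edited = edit_object(image, mask, label=1, new_value=0)
```

Once every pixel has a label, an edit can target one object and leave everything else untouched, which is why a good mask makes complex images easier to process.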

Building on these image-based abilities, SAM2 extends segmentation to video, something the first SAM could not do. To launch SAM2, Meta released a database of 50,000 videos used to train the model, along with 100,000 additional videos. Real-time video segmentation also requires significant computing power, so even though SAM2 is free now, that may change in the future.

Segment Success

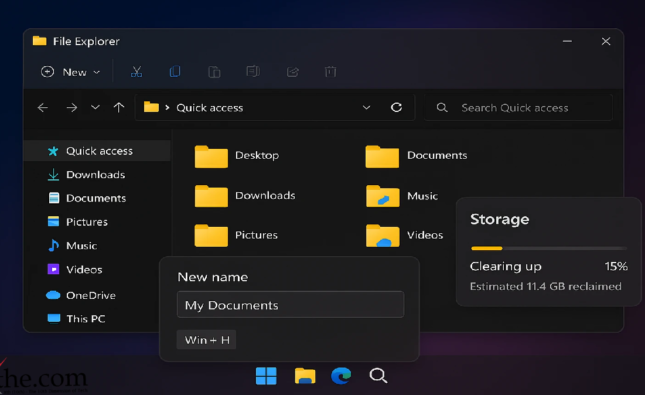

With SAM2, video editors can select and modify objects in a scene more easily than with current editing tools, without having to adjust each frame by hand. Meta also sees SAM2 changing the interactive video landscape. People can select and move objects in live videos or virtual spaces using this AI model.

Looking further ahead, Meta believes SAM2 could be important for developing and training computer vision systems, especially for self-driving cars. These systems need to track objects accurately and efficiently to understand and navigate their surroundings safely. The same capability could speed up the labeling of visual data, giving these AI systems better training material.

While much of the AI video hype centers on generating videos from text prompts, the kind of editing capabilities provided by SAM2 might play an even bigger role in embedding AI into video creation. Models like OpenAI’s Sora, Runway, and Google Veo get a lot of attention for a reason, but SAM2 offers a different type of impact.

While Meta may lead for now, other AI video developers are working on their own versions. For example, Google is testing video summarization and object recognition features on YouTube. Adobe’s Firefly AI tools also focus on photo and video editing, with features like content-aware fill and auto-reframe.

Source: Meta’s new AI model tags and tracks every object in your videos