Key Points

- Meta is testing its first AI training chip as part of its plan to rely less on suppliers like Nvidia.

- Sources say the chip is designed to help lower the costs of AI infrastructure.

- Meta plans to use these chips for recommendation systems and generative AI.

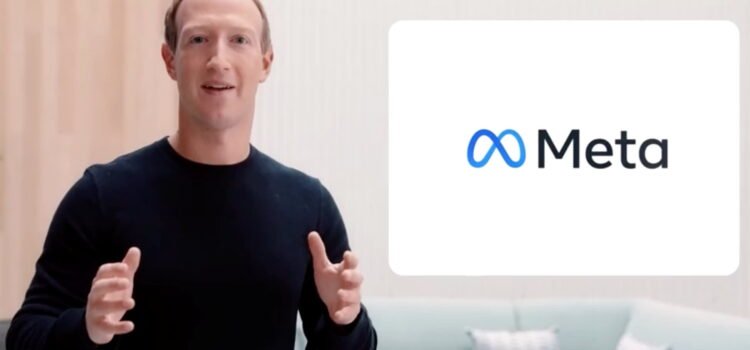

Meta, the owner of Facebook, is testing its first in-house chip for training artificial intelligence systems, two sources told Reuters. This is a key step as Meta works to design more of its own custom chips and depend less on outside suppliers like Nvidia.

The company has started a small deployment of the chip and plans to increase production for wider use if the test is successful, according to the sources.

Meta’s effort to develop its own chips is part of a long-term plan to lower its large infrastructure costs as it invests heavily in AI tools to spur growth.

Meta, which also owns Instagram and WhatsApp, expects its total expenses in 2025 to be between $114B and $119B. Up to $65B of this will go toward capital spending, mostly for AI infrastructure.

One source said Meta’s new training chip is a dedicated accelerator built to handle only AI-specific tasks. This design can make it more power-efficient than the GPUs usually used for AI workloads.

Meta is working with Taiwan-based chip manufacturer TSMC to produce the chip, according to the source.

The test deployment began after Meta completed its first tape-out of the chip, the other source said. A tape-out, in which an initial design is sent through a chip factory, is an important milestone in chip development.

A typical tape-out costs tens of millions of dollars and takes about 3 to 6 months to complete, with no guarantee of success. If it fails, Meta would need to find the problem and repeat the process.

Meta and TSMC declined to comment.

This chip is the latest in the company’s Meta Training and Inference Accelerator (MTIA) series. The program has struggled for years and once canceled a chip at a similar stage.

However, Meta has started using an MTIA chip for inference, which is the process of running an AI system as users interact with it. The chip helps power the recommendation systems that decide what content appears on Facebook and Instagram news feeds.

Meta executives have said that they want to start using their own chips for training by 2026. Training is the process of feeding large amounts of data to an AI system to teach it how to perform tasks.

As with the inference chip, the training chip will first be used for recommendation systems. Later, Meta plans to use it for generative AI products such as the Meta AI chatbot, according to executives.

Meta is working on how to train recommendation systems and, eventually, on how to think about training and inference for generative AI, Meta’s Chief Product Officer Chris Cox said at the Morgan Stanley Technology, Media and Telecom conference last week.

Cox described Meta’s chip development as a “walk/crawl/run” situation so far, but said executives see the first-generation inference chip for recommendations as a “big success.”

Meta previously canceled an in-house custom inference chip after it failed a small-scale test similar to the current training chip test. The company then switched course and ordered billions of dollars’ worth of Nvidia GPUs in 2022.

Since then, Meta has remained one of Nvidia’s biggest customers, using large numbers of GPUs to train its models. These include recommendation and ad systems, as well as the Llama series of foundation models. The GPUs also handle inference for the more than 3 billion people who use Meta’s apps daily.

This year, some AI researchers have questioned the value of these GPUs. They doubt how much more progress can be made by simply adding more data and computing power to large language models.

These doubts grew after Chinese start-up DeepSeek launched new low-cost models in late January. DeepSeek’s models improve computational efficiency by relying more heavily on inference than most existing models.

After DeepSeek’s launch, AI stocks worldwide dropped, and Nvidia shares lost about a fifth of their value. The shares later recovered most of that loss because investors still believe Nvidia’s chips will remain the industry standard for training and inference. However, they have fallen again amid broader trade concerns.

Source: Exclusive: Meta begins testing its first in-house AI training chip