The NVIDIA Blackwell B200 is a major step up from the Hopper H200. It delivers much higher AI throughput and memory bandwidth and is built for trillion-parameter models and large-scale AI training. The H200 (141 GB HBM3e), by contrast, focuses on improving memory capacity and bandwidth for existing infrastructure. The B200 (192 GB HBM3e) features a dual-die chiplet design that doubles performance in most metrics.

Key Specifications Comparison

| Feature | Hopper H200 | Blackwell B200 |
| --- | --- | --- |
| Architecture | Hopper (monolithic) | Blackwell (dual-die) |
| Transistor count | ~80 billion | ~208 billion |
| Memory capacity | 141 GB HBM3e | 192 GB HBM3e |
| Memory bandwidth | ~4.8 TB/s | ~8 TB/s |
| Interconnect | NVLink 4 (~0.9 TB/s) | NVLink 5 (1.8 TB/s) |
| Peak AI (FP8/FP4) | ~4 PF (FP8) | ~9 PF (FP8) / 18 PF (FP4) |
| TDP (power) | ~700 W | ~1000 to 1200 W+ |
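
The ratios implied by the table can be checked with a few lines of Python. This is a minimal sketch that uses only the approximate figures from the table; the `SPECS` dictionary and the `ratio` helper are illustrative names, and the B200 TDP is assumed to sit at the low end (1000 W) of the quoted range.

```python
# Approximate figures copied from the comparison table.
SPECS = {
    "H200": {"memory_gb": 141, "bandwidth_tbs": 4.8, "nvlink_tbs": 0.9,
             "fp8_pflops": 4.0, "tdp_w": 700},
    "B200": {"memory_gb": 192, "bandwidth_tbs": 8.0, "nvlink_tbs": 1.8,
             "fp8_pflops": 9.0, "tdp_w": 1000},  # assumption: low end of 1000-1200 W
}


def ratio(metric: str) -> float:
    """Return the B200-to-H200 ratio for one metric in SPECS."""
    return SPECS["B200"][metric] / SPECS["H200"][metric]


# Print every ratio, one metric per line.
for metric in SPECS["H200"]:
    print(f"{metric:>14}: {ratio(metric):.2f}x")
```

On these numbers, NVLink bandwidth doubles and peak FP8 rises by about 2.25x, while memory capacity grows by about 1.36x and memory bandwidth by about 1.67x.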

Data Center Throughput

- Training performance: The B200 can deliver up to 3 times better training performance for large models than the previous generation.

- Inference throughput: For AI inference, the B200 provides up to 2.5-3 times the throughput of the H200.

- FP4 capabilities: One key advantage of the B200 is its native support for the 4-bit floating-point (FP4) format. This enables up to 18-20 petaflops of performance, about twice the B200's own FP8 peak and more than four times the H200's FP8 peak of roughly 4 petaflops.

- Transformer engine: The B200 features a second-generation transformer engine, enabling faster, more efficient AI model processing.

Memory Bandwidth and Capacity

- Capacity: The B200 has 192 GB of HBM3e memory, while the H200 has 141 GB.

- Bandwidth: The B200 offers 8 TB/s of bandwidth, much higher than the H200’s 4.8 TB/s. This helps reduce memory bottlenecks during large model training.
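
To see what the capacity difference means in practice, the weight footprint of a model can be estimated as parameters times bytes per parameter. The sketch below is a rough illustration only: it counts weights and ignores KV cache, activations, and optimizer state, and the function names and the 1 GB = 10^9 bytes convention are assumptions made for this example.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}

# HBM3e capacities quoted in this article, in GB (1 GB = 1e9 bytes here).
CAPACITY_GB = {"H200": 141, "B200": 192}


def weights_gb(params_billions: float, precision: str) -> float:
    """Weight footprint in GB: billions of parameters x bytes per parameter."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits(gpu: str, params_billions: float, precision: str) -> bool:
    """True if the weights alone fit in one GPU's memory (no KV cache, no activations)."""
    return weights_gb(params_billions, precision) <= CAPACITY_GB[gpu]


print(fits("H200", 70, "fp16"))  # 140 GB of weights against 141 GB
print(fits("H200", 90, "fp16"))  # 180 GB of weights against 141 GB
print(fits("B200", 90, "fp16"))  # 180 GB of weights against 192 GB
```

A 70B-parameter model at FP16 needs 140 GB for weights alone, so it fits in the H200's 141 GB only on paper; real deployments need headroom for the KV cache and activations.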

Interconnect and Scaling

- NVLink 5: The B200 uses fifth-generation NVLink, providing 1.8 TB/s of inter-GPU communication bandwidth. This is twice as fast as the H200’s 900 GB/s.

- Scaling: The Blackwell architecture can support much larger and denser clusters, such as the GB200 NVL72. It allows up to 576 GPUs to work together as one unit.
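
As a rough illustration of what the doubled link speed means, the sketch below estimates the ideal time to move a buffer between GPUs, using the NVLink 4 and NVLink 5 figures from the comparison table (about 0.9 TB/s and 1.8 TB/s). It assumes peak bandwidth with no latency or protocol overhead, so real transfers will be slower; `transfer_ms` is an illustrative helper, not part of any NVIDIA API.

```python
# Per-GPU NVLink bandwidth in TB/s, from the comparison table.
NVLINK_TBS = {"H200": 0.9, "B200": 1.8}


def transfer_ms(gpu: str, gigabytes: float) -> float:
    """Ideal time in milliseconds to move `gigabytes` over NVLink at peak rate."""
    gb_per_second = NVLINK_TBS[gpu] * 1000.0  # convert TB/s to GB/s
    return gigabytes / gb_per_second * 1000.0  # convert seconds to milliseconds


for gpu in NVLINK_TBS:
    print(f"{gpu}: {transfer_ms(gpu, 18.0):.1f} ms to move 18 GB")
```

Under these assumptions, an 18 GB buffer takes about 20 ms on the H200 and about 10 ms on the B200.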

Summary of Use Cases

- Hopper H200: High-throughput inference for models under 100B parameters, upgrades to existing H100 infrastructure, and deployments with power or cooling limitations.

- Blackwell B200: Training next-generation base models, running trillion-parameter model inference, handling long-context LLMs, and equipping new liquid-cooled, high-density data centers.

NVIDIA’s latest GPUs can make choosing the right hardware for AI projects tricky. The H100 is a proven, reliable option. The H200 offers much more memory, and the new B200 promises huge performance gains. Still, with high prices and unpredictable availability, it is important to look past the marketing and understand what really sets these chips apart.

We have looked at how each GPU performs in real-world scenarios, including power consumption and actual performance, to help you decide which one fits your needs and schedule.

H200: The Memory Monster

The H200 builds on the H100 by offering much more memory. Using the NVIDIA Hopper architecture, the H200 is the first GPU to provide 141 GB of HBM3e memory with a bandwidth of 4.8 TB/s.

Key Specifications:

- Memory: 141 GB HBM3e

- Memory Bandwidth: 4.8 TB/s

- TDP: 700 W (same as H100)

- Architecture: Hopper

- Best for: larger models (100B+ parameters), long-context applications

A key advantage is that the H100 and H200 share the same 700 W TDP, so the H200 delivers higher throughput without increasing power consumption.

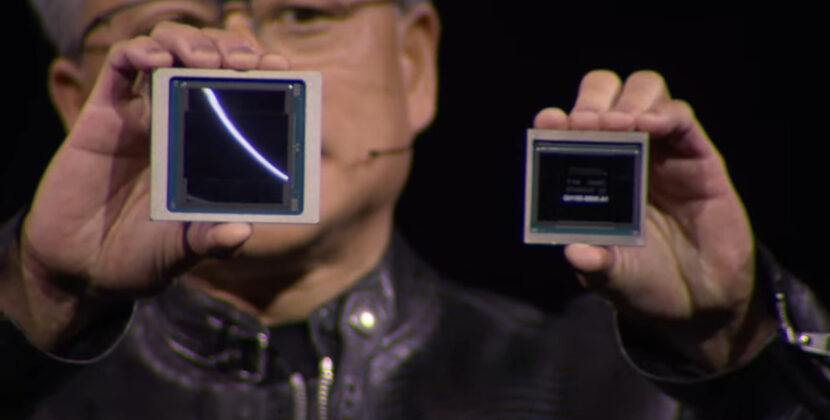

B200: The Future Unleashed

The B200 is NVIDIA’s new flagship, based on the Blackwell architecture. It has 208 billion transistors, compared to 80 billion in the H100 and H200, and brings major new features.

Key Specifications:

- Memory: 192 GB HBM3e

- Memory Bandwidth: 8 TB/s

- TDP: 1000 W

- Architecture: Blackwell (dual-die design)

- Best for: next-gen models, extremely long contexts, future-proofing

Performance Deep Dive: Where Rubber Meets the Road

Training Performance: Performance data shows that a single B200 GPU is about 2.5 times faster than a single H200 GPU, measured in tokens per second. The DGX B200 system offers three times the training performance and 15 times the inference performance of the DGX H100 system.

Inference Capabilities: For organizations focused on deployment, inference performance is often more important than training speed. The H200 can double the inference speed of the H100 when running large language models like Llama 2. The B200 offers even greater gains, with up to 15 times the inference performance of H100 systems.

Memory Bandwidth: The Unrecognized Hero

Memory bandwidth affects how quickly a GPU can supply data to its compute cores. Higher bandwidth means data moves faster, which can improve performance.

- H100: 3.35 TB/s (respectable)

- H200: 4.8 TB/s (43% improvement)

- B200: 8 TB/s (another universe)

The H200’s memory bandwidth is 4.8 TB/s, an increase from the H100’s 3.35 TB/s. This extra bandwidth is important for processing large datasets, as it helps reduce wait times for data during memory-intensive tasks. This may result in faster training.
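
The percentages follow directly from the bandwidth figures, and the same figures give a rough lower bound on how long it takes to stream a block of data from memory once. This is a minimal sketch, assuming ideal peak bandwidth with no overhead; the function names are illustrative. The read time matters because memory-bound workloads, such as single-stream LLM decoding, must read the model weights for each generated token.

```python
# Peak memory bandwidth in TB/s, as quoted in this section.
BANDWIDTH_TBS = {"H100": 3.35, "H200": 4.8, "B200": 8.0}


def improvement_pct(old: str, new: str) -> float:
    """Percentage increase in memory bandwidth going from `old` to `new`."""
    return (BANDWIDTH_TBS[new] / BANDWIDTH_TBS[old] - 1.0) * 100.0


def min_read_ms(gpu: str, gigabytes: float) -> float:
    """Lower bound, in ms, on streaming `gigabytes` from HBM once (GB / (TB/s) = ms)."""
    return gigabytes / BANDWIDTH_TBS[gpu]


print(f"H100 -> H200: {improvement_pct('H100', 'H200'):.0f}%")
print(f"H200 -> B200: {improvement_pct('H200', 'B200'):.0f}%")
print(f"140 GB on H200: {min_read_ms('H200', 140):.1f} ms")
print(f"140 GB on B200: {min_read_ms('B200', 140):.1f} ms")
```

Reading 140 GB once takes at least about 29 ms on the H200 and 17.5 ms on the B200 under these assumptions.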

Cost Analysis: What You Are Paying

Pricing on these GPUs has been all over the map this year. The H100 started 2025 at around $8 per hour on cloud platforms, but increased supply has pushed that down to as low as $1.90 per hour. Following the recent AWS price cuts of up to 44%, typical rates now range from $2 to $3.50 per hour, depending on the provider.

If you plan to buy hardware directly, expect to pay at least $25,000 for each H100 GPU. After adding costs for networking, cooling, and other infrastructure, a full multi-GPU setup can easily exceed $400,000. These are major investments.

H200 premium: Expect to pay about 20-25% more than for the H100, whether you buy the hardware or rent it in the cloud. For some workloads, the extra memory makes the higher price worthwhile.

B200 investment: The B200 will cost at least 25% more than the H200 at first, and it may be hard to get in early 2025. However, it offers excellent long-term performance and efficiency. If you want the latest technology right away, you will pay a premium.
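
Whether a price premium is worthwhile depends on how much faster the workload actually runs. The sketch below divides the price ratio by the speedup to get a relative cost per token; the helper name is hypothetical, and the 2x speedup is the best-case "up to" inference figure quoted earlier for the H200 over the H100, not a measured result.

```python
def relative_cost_per_token(price_premium: float, speedup: float) -> float:
    """Cost per token relative to the baseline GPU (1.0 means the same cost).

    price_premium: fractional price increase over the baseline, e.g. 0.25 for 25%.
    speedup: throughput relative to the baseline, e.g. 2.0 for twice as fast.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return (1.0 + price_premium) / speedup


# H200 versus H100: 25% premium, best-case 2x inference speedup (assumption).
print(relative_cost_per_token(0.25, 2.0))

# Same premium with no speedup: the extra cost is pure overhead.
print(relative_cost_per_token(0.25, 1.0))
```

The break-even point is a speedup equal to one plus the premium: at a 25% premium, any workload that runs more than 1.25 times faster costs less per token on the more expensive GPU.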

Source: H100 vs. H200 vs. B200: Choosing the Right NVIDIA GPUs for Your AI Workload