News Summary:

- NVIDIA BlueField-4 is the engine behind the NVIDIA Inference Context Memory Storage platform, a new AI-native storage system built for large-scale inference that helps accelerate and scale agentic AI.

- This new storage processor is designed for agentic AI systems that need to process long contexts, offering fast access to both long- and short-term memory.

- The Inference Context Memory Storage platform extends the long-term memory of AI agents and enables fast sharing of context across nodes. This can increase tokens per second and power efficiency by up to 5x.

- Powered by NVIDIA Spectrum-X Ethernet, extended context memory helps multi-turn AI agents respond faster, increases throughput per GPU, and makes it easier to scale agentic inference.

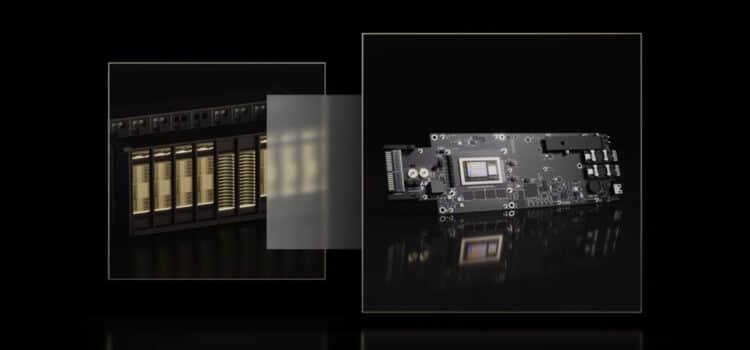

NVIDIA today announced that the NVIDIA BlueField-4 data processor, part of the full-stack NVIDIA BlueField platform, powers the NVIDIA Inference Context Memory Storage platform, a new class of AI-native storage infrastructure for the next frontier of AI.

As AI models grow to trillions of parameters and employ multi-step reasoning, they generate vast amounts of context data. The data is stored in a key-value (KV) cache, which is important for accuracy, user experience, and continuity.
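To give a rough sense of scale, the size of a KV cache can be estimated from a model's shape: each token stores one key vector and one value vector per layer and per KV head. The sketch below applies that standard estimate. The model dimensions are hypothetical, chosen only for illustration, and are not taken from the announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bytes_per_value=2):
    """Approximate KV cache size in bytes for a single sequence.

    The factor 2 accounts for storing both a key and a value vector
    per token, per layer, and per KV head. bytes_per_value=2 assumes
    16-bit (FP16/BF16) storage.
    """
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_value


# Hypothetical model shape and context length (assumptions, not from the source).
size = kv_cache_bytes(num_layers=80, num_kv_heads=8, head_dim=128, num_tokens=128_000)
size_gib = size / 2**30
print(f"{size_gib:.1f} GiB for one 128k-token context")  # prints 39.1 GiB ...
```

Under these assumptions a single long context occupies tens of gigabytes, so a fleet of agents holding many such contexts quickly exceeds what GPU memory alone can keep resident.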

Keeping a KV cache on GPUs over the long term, however, would create a bottleneck for real-time inference in multi-agent systems. AI-native applications need a new, scalable way to store and share this data.

The NVIDIA Inference Context Memory Storage platform extends GPU memory, enables fast sharing across nodes, and can boost tokens per second and power efficiency by up to 5x compared with traditional storage.

"AI is changing the entire computing stack, and now storage," said Jensen Huang, founder and CEO of NVIDIA. "AI is no longer about one-shot chatbots, but intelligent collaborators that understand the physical world, reason over long horizons, and stay grounded in facts. AI uses tools to do real work and retains both short- and long-term memory. With BlueField-4, NVIDIA and our software and hardware partners are reinventing the storage stack for the next frontier of AI."

The NVIDIA Inference Context Memory Storage platform increases KV cache capacity and speeds up context sharing across large clusters of AI systems. Persistent context for multi-turn AI agents also helps them respond faster, increases throughput, and supports efficient scaling.
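The general idea of persistent context can be sketched as a two-tier cache: a small fast tier that spills its least recently used contexts to a larger shared tier instead of discarding them, so a returning agent session restores its context rather than recomputing it. The toy below is only an illustration of that pattern. The class `TieredKVCache`, its methods, and the session names are hypothetical and do not represent any NVIDIA API or the platform's actual design.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier cache: a small fast tier backed by a larger shared tier."""

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for GPU memory, kept in LRU order
        self.shared = {}           # stands in for external context storage
        self.recomputes = 0        # how often context had to be rebuilt

    def put(self, session_id, kv_blocks):
        self.shared.pop(session_id, None)  # drop any stale spilled copy
        self.fast[session_id] = kv_blocks
        self.fast.move_to_end(session_id)
        while len(self.fast) > self.fast_capacity:
            # Spill the least recently used context instead of discarding it.
            old_id, old_blocks = self.fast.popitem(last=False)
            self.shared[old_id] = old_blocks

    def get(self, session_id, recompute):
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return self.fast[session_id]
        if session_id in self.shared:
            blocks = self.shared.pop(session_id)  # restore from the shared tier
        else:
            self.recomputes += 1
            blocks = recompute(session_id)        # expensive fallback
        self.put(session_id, blocks)
        return blocks


cache = TieredKVCache(fast_capacity=2)
for sid in ("agent-a", "agent-b", "agent-c"):
    cache.put(sid, f"kv-for-{sid}")

# "agent-a" was spilled when "agent-c" arrived; it is restored, not recomputed.
restored = cache.get("agent-a", recompute=lambda s: f"recomputed-{s}")
```

In this toy, the returning session gets its original context back and the recompute counter stays at zero, which is the behavior that lets multi-turn agents respond faster when their context persists outside the fast tier.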

The capabilities of the NVIDIA BlueField-4-powered platform include:

- NVIDIA Rubin cluster-level KV cache capacity, providing the scale and efficiency needed for long-context, multi-turn agentic inference.

- The platform delivers up to 5x the power efficiency of traditional storage.

- The platform uses the NVIDIA DOCA framework, along with the NVIDIA NIXL library and NVIDIA Dynamo software, to enable fast and smart sharing of KV cache across AI nodes. This helps maximize tokens per second, reduce time to first token, and improve responsiveness in multi-turn tasks.

- NVIDIA BlueField-4 manages hardware-accelerated KV cache placement, removing metadata overhead, reducing data movement, and ensuring secure, isolated access from GPU nodes.

- NVIDIA Spectrum-X Ethernet enables efficient data sharing and retrieval, acting as the high-performance network for RDMA-based access to AI-native KV cache.

Companies including AIC, Cloudian, DDN, Dell Technologies, HPE, Hitachi Vantara, IBM, Nutanix, Pure Storage, Supermicro, VAST Data, and WEKA are among the first to develop new AI storage platforms using BlueField-4. These platforms are expected to be available in the second half of 2026.

To find out more, watch NVIDIA Live at CES.

Source: NVIDIA BlueField-4 Powers New Class of AI-Native Storage Infrastructure for the Next Frontier of AI