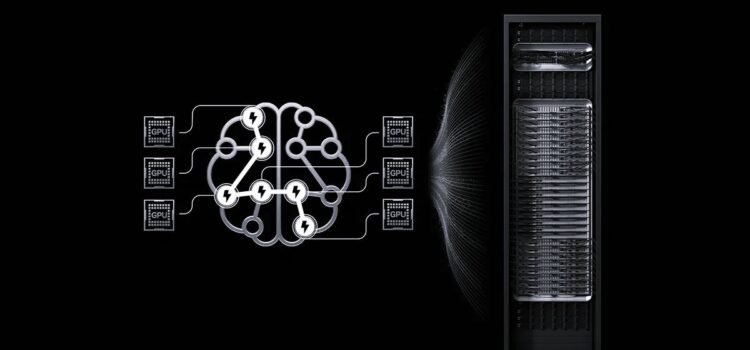

The NVIDIA CUDA Deep Neural Network library (cuDNN) is a GPU-accelerated library for deep neural networks. It provides optimized implementations of key operations, including:

- forward and backward convolution

- attention

- matrix multiplication

- pooling and normalization

How cuDNN Works

- Accelerated Learning: cuDNN uses computational kernels that leverage tensor cores when appropriate, delivering top performance for compute-bound operations. It also uses heuristics to select the optimal computational kernel for each problem size.

- Fusion Support: cuDNN fuses compute-bound and memory-bound operations. For common fusion patterns, it generates computational kernels at runtime. For specialized patterns, it uses pre-optimized computational kernels.

- Expressive Op Graph API: users represent computations as graphs of tensor operations. cuDNN provides both a direct C API and an open-source C++ front-end API, and most users start with the front-end API.
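To make the idea of fusion concrete, here is a minimal conceptual sketch in plain Python. It is not the cuDNN API: the function names `matmul`, `unfused`, and `fused` are hypothetical, and the example assumes a matrix multiplication (compute-bound) followed by a bias add and ReLU (memory-bound). The unfused version makes three passes and stores intermediate matrices; the fused version applies the bias and ReLU to each output element as soon as it is computed.

```python
# Conceptual sketch only: plain Python, not the cuDNN API.
# All function names here are hypothetical.

def matmul(a, b):
    """Multiply matrix a (m x k) by matrix b (k x n), both lists of rows."""
    return [[sum(a[i][p] * b[p][j] for p in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def unfused(a, b, bias):
    """Three separate passes: matmul, then bias add, then ReLU.
    Each pass produces a full intermediate matrix."""
    c = matmul(a, b)
    c = [[x + bias[j] for j, x in enumerate(row)] for row in c]
    return [[max(x, 0.0) for x in row] for row in c]

def fused(a, b, bias):
    """One pass: bias add and ReLU are applied to each output element
    as soon as its dot product is ready, so no intermediate matrix is stored."""
    out = []
    for i in range(len(a)):
        row = []
        for j in range(len(b[0])):
            acc = sum(a[i][p] * b[p][j] for p in range(len(b)))
            row.append(max(acc + bias[j], 0.0))
        out.append(row)
    return out

a = [[1.0, -2.0], [3.0, 4.0]]
b = [[0.5, 1.0], [1.5, -1.0]]
bias = [0.25, -0.5]

# Both versions compute the same result.
print(unfused(a, b, bias) == fused(a, b, bias))
```

On a GPU, the benefit of the fused form comes from avoiding round trips to memory for the intermediate results; this sketch only shows that the two forms are numerically equivalent.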

This Guide Covers Installing and Using the cuDNN Front End and Back End

The NVIDIA CUDA Deep Neural Network library (cuDNN) is a GPU-accelerated library for deep learning, offering optimized implementations of common deep learning operations such as:

- Scaled dot-product attention

- Convolution, including cross-correlation

- Matrix Multiplication

- Normalizations, Softmax, and Pooling

- Arithmetic, Mathematical, Relational, and Logical Point-wise Operations
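The list above distinguishes convolution from cross-correlation. As a minimal illustration in plain Python (not the cuDNN API; the function names are hypothetical and the example assumes 1-D inputs with no padding), cross-correlation slides the kernel over the signal as-is, while convolution does the same with the kernel flipped. Most deep learning "convolution" layers actually compute cross-correlation.

```python
# Conceptual sketch only: plain Python, not the cuDNN API.

def cross_correlate_1d(signal, kernel):
    """'Valid' cross-correlation: slide the kernel over the signal unchanged."""
    n = len(signal) - len(kernel) + 1
    return [sum(signal[i + k] * kernel[k] for k in range(len(kernel)))
            for i in range(n)]

def convolve_1d(signal, kernel):
    """'Valid' convolution: the same sliding sum with the kernel reversed."""
    return cross_correlate_1d(signal, kernel[::-1])

signal = [1, 2, 3, 4, 5]
kernel = [1, 0, -1]

print(cross_correlate_1d(signal, kernel))  # [-2, -2, -2]
print(convolve_1d(signal, kernel))         # [2, 2, 2]
```

For a symmetric kernel the two operations give identical results, which is why the distinction rarely matters for learned filters.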

In addition to delivering fast individual operations, cuDNN supports flexible multi-operation fusion patterns for further performance gains. This approach helps you get the most out of NVIDIA GPUs for key deep learning tasks.

cuDNN enables you to express both single-operation and multi-operation computations as operation graphs. You can build these graphs using any of the following API layers:

- Python front-end API

- C++ front-end API

- C backend API

NVIDIA cuDNN Python and C++ frontend APIs present a user-friendly, high-level programming model suitable for most scenarios, abstracting much of the complexity of lower-level GPU operations.

Select the NVIDIA cuDNN backend API if you need access to routines not supported by the front-end APIs or if your application requires a pure C interface.

Key Features

Deep Neural Networks

Deep neural networks are used in computer vision, conversational AI, and recommendation systems, and they have enabled advances such as self-driving cars and smart voice assistants. NVIDIA GPU-accelerated deep learning frameworks train these models much faster, cutting training time from days to hours.

cuDNN provides foundational building blocks for fast, low-latency inference with deep neural networks in the cloud, on embedded devices, and in self-driving cars.

- cuDNN accelerates key compute-bound operations, such as attention training, convolution, and matrix multiplication, and optimizes memory-bound operations, such as attention decode, pooling, and normalization, through advanced fusions and heuristics.

- Optimized memory-bound operations, such as:

  - Attention decode

  - Pooling

  - Softmax

  - Normalization

  - Activation

  - Pointwise

  - Tensor transformation

- Fusions of compute-bound and memory-bound operations

- Runtime Fusion Engine to generate kernels at runtime for common fusion patterns

- Optimizations for important specialized patterns like fused attention

- Heuristics to choose the right implementation for a given problem size
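The fused attention pattern in the list above is built around scaled dot-product attention, softmax(QKᵀ / √d) · V. The following is a plain Python reference sketch of that formula, not the cuDNN API and not a fused kernel; the function names are hypothetical and matrices are assumed to be lists of rows.

```python
# Conceptual sketch only: plain Python, not the cuDNN API.
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(q, k, v):
    """q: (n x d), k: (m x d), v: (m x dv). Returns an (n x dv) matrix."""
    d = len(q[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for qi in q:
        # Similarity of this query with every key, scaled by 1/sqrt(d).
        scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        weights = softmax(scores)
        # Weighted average of the value rows.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out

q = [[0.0, 0.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]

# A zero query scores every key equally, so the output is the mean of the value rows.
print(scaled_dot_product_attention(q, k, v))  # [[2.0, 3.0]]
```

A fused implementation computes the same result without writing the full score matrix to memory between steps.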

cuDNN Graph API and Fusion

The cuDNN Graph API lets you describe common deep learning computation patterns as a graph in which operations are nodes and tensors are edges, much like a data flow graph in a deep learning framework. Describing a computation as a whole graph, rather than handling operations individually, enables better performance, easier optimization, and greater flexibility for model developers.

You can use the cuDNN Graph API through the recommended Python or C++ frontend APIs, which provide high-level access. For legacy applications or when Python or C++ is not suitable, use the low-level C backend API for more direct control.

- Flexible fusions of memory-bound operations into the input and output of matmul and convolution

- Specialized fusions for patterns like attention and convolution with normalization

- Support for both forward and backward propagation

- Heuristics for predicting the best implementation for a given problem size

- Open source Python/C++ frontend API

- Serialization and deserialization support
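The graph model described in this section, together with serialization, can be sketched in a few lines of plain Python. This is a conceptual illustration, not the cuDNN Graph API: the `OPS` table, the node dictionary layout, and `run_graph` are all hypothetical, tensors are reduced to scalars, and nodes are assumed to be listed in topological order.

```python
# Conceptual sketch only: plain Python, not the cuDNN Graph API.
import json

# Hypothetical operation table: each node in the graph names one of these.
OPS = {
    "add": lambda x, y: x + y,
    "mul": lambda x, y: x * y,
    "relu": lambda x: max(x, 0.0),
}

def run_graph(nodes, inputs):
    """Execute nodes in order. Each node reads its input tensors by name
    and writes one output tensor by name (the graph's edges)."""
    tensors = dict(inputs)
    for node in nodes:
        args = [tensors[name] for name in node["inputs"]]
        tensors[node["output"]] = OPS[node["op"]](*args)
    return tensors

# y = relu(x * w + b), expressed as three nodes connected by named tensors.
graph = [
    {"op": "mul", "inputs": ["x", "w"], "output": "xw"},
    {"op": "add", "inputs": ["xw", "b"], "output": "z"},
    {"op": "relu", "inputs": ["z"], "output": "y"},
]

# Serialize the graph description, restore it, and run the restored copy.
serialized = json.dumps(graph)
restored = json.loads(serialized)
result = run_graph(restored, {"x": 2.0, "w": 3.0, "b": -1.0})
print(result["y"])  # 5.0
```

Because the whole computation is available as data before anything runs, a library can inspect it, choose an implementation, or fuse nodes, which is not possible when operations are issued one at a time.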

NVIDIA sees trustworthy AI as a shared responsibility. We have established policies and practices to support a wide range of AI applications. When using a model under our terms of service, developers should work with their teams to ensure the model meets the requirements of their industry and to prevent misuse.

Please report security vulnerabilities or NVIDIA AI concerns here.

Source: NVIDIA cuDNN