The global manufacturing sector is navigating a seismic shift in which yesterday’s static automation can no longer keep pace with the race for competitiveness. As production lines demand ever greater adaptability and precision, the backbone of industrial robotics must transform. NVIDIA has risen to this challenge with its latest breakthrough: the Isaac SDK update, which brings on-device reinforcement learning to factory robots. This leap propels industrial AI out of the data center and onto the factory floor, right at the edge.

For robotics engineers and facility managers, this update marks the end of the train-and-deploy era. Traditionally, reinforcement learning required massive external compute clusters. These clusters simulated millions of iterations before any code was deployed to a physical robot. Now, these processes run locally on NVIDIA Jetson Thor and Orin modules. NVIDIA enables a new generation of autonomous industrial machines capable of real-time self-optimization.

The Technical Evolution of Isaac SDK

The Isaac SDK (software development kit, a set of tools and resources for developing software applications) has long been the backbone of NVIDIA’s robotics ecosystem. It provides the libraries, drivers, and APIs (application programming interfaces, software bridges that let programs communicate) needed to bridge the gap between virtual simulation and tangible reality. However, previous iterations relied heavily on the sim-to-real pipeline. Developers would use NVIDIA Isaac Gym (a simulation tool for training robots) to train a policy (a set of rules or behaviors) in a high-fidelity virtual environment and then export that frozen model to the robot.

With this update, the SDK introduces a native on-device learning (ODL) framework that enables robots to continue learning long after deployment. If a factory robot meets an unexpected variable, be it shifting lighting, a novel component texture, or subtle changes in resistance, it no longer waits for a developer to step in. Instead, it taps into reinforcement learning for grasping and navigation, fine-tuning its motor control on the fly to keep production humming no matter how unpredictable the environment becomes.

Breaking The Connectivity Bottleneck

Latency has always been a challenge for advanced AI in heavy industry. Robots often send sensor data to distant cloud servers and then wait for updated instructions. Even fast 5G cannot prevent costly delays, which can lead to errors or safety issues. By embedding reinforcement learning directly on the device, NVIDIA eliminates the need for high-bandwidth connections. Robots can now update their models independently.

This local-first approach revolutionizes multi-agent coordination in smart factories. Imagine dozens of autonomous mobile robots navigating a busy floor. Each robot learns and anticipates its peers’ moves in real time. The Isaac SDK update gives these robots shared memory and peer-to-peer communication. Devices synchronize learning and build collective intelligence as the fleet grows.
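To make the idea of synchronized learning concrete, here is a minimal sketch of one way peers could pool what they have learned: each robot blends its own policy parameters with the average of its neighbors' parameters. This is an illustration under stated assumptions, not the Isaac SDK's mechanism. The function name `average_with_peers`, the parameter dictionaries, and the `local_weight` setting are all hypothetical.

```python
from typing import Dict, List

# Hypothetical representation: a policy is a flat mapping of names to weights.
Params = Dict[str, float]


def average_with_peers(local: Params, peers: List[Params],
                       local_weight: float = 0.5) -> Params:
    """Blend local parameters with the mean of the peers' parameters."""
    if not peers:
        # No neighbors in range: keep the local policy unchanged.
        return dict(local)
    merged: Params = {}
    for name, value in local.items():
        # Only peers that actually carry this parameter contribute.
        peer_values = [p[name] for p in peers if name in p]
        if not peer_values:
            merged[name] = value
            continue
        peer_mean = sum(peer_values) / len(peer_values)
        merged[name] = local_weight * value + (1.0 - local_weight) * peer_mean
    return merged


if __name__ == "__main__":
    mine = {"grip_force": 1.0}
    neighbors = [{"grip_force": 3.0}, {"grip_force": 5.0}]
    print(average_with_peers(mine, neighbors))
```

A higher `local_weight` makes a robot trust its own experience more; a lower one pulls the fleet toward a shared policy faster.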

The Mechanics of On-Device Reinforcement Learning

The Morpheus update to the Isaac SDK delivers a specialized compute kernel. This core program manages specific hardware functions. It splits the Jetson module’s GPU resources into two dedicated lanes. One lane powers real-time inference (the doing). The other runs reinforcement learning in the background (the learning).

This dual-pathway design ensures the robot’s main job never gets sidetracked by learning. Using online policy gradient optimization, the robot tweaks its behavior in careful, incremental steps. If a new move exceeds safety limits, the Isaac SDK’s built-in safety monitor steps in. It overrides risky actions and shields both the robot and its environment during experimental phases.
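The interplay between small policy-gradient steps and a safety override can be sketched in a few lines. The example below is a toy, assuming a one-dimensional Gaussian policy trained with a REINFORCE-style update whose step size is capped, plus a monitor that clamps any proposed action into an allowed range. The class names, limits, and reward function are hypothetical and do not come from the Isaac SDK.

```python
import random


class SafetyMonitor:
    """Hypothetical monitor: clamps actions into a fixed safe interval."""

    def __init__(self, low: float, high: float) -> None:
        self.low, self.high = low, high

    def filter(self, action: float) -> float:
        return min(max(action, self.low), self.high)


class GaussianPolicy:
    """1-D Gaussian policy with a capped REINFORCE-style mean update."""

    def __init__(self, mean=0.0, sigma=0.1, lr=0.05, max_step=0.02):
        self.mean, self.sigma = mean, sigma
        self.lr, self.max_step = lr, max_step
        self.baseline = 0.0  # running average of rewards

    def sample(self, rng: random.Random) -> float:
        return rng.gauss(self.mean, self.sigma)

    def update(self, action: float, reward: float) -> float:
        advantage = reward - self.baseline
        # Gradient of log N(action | mean, sigma) with respect to mean.
        grad = (action - self.mean) / (self.sigma ** 2)
        step = self.lr * advantage * grad
        # Keep each change small: the "careful, incremental steps".
        step = max(-self.max_step, min(self.max_step, step))
        self.mean += step
        self.baseline += 0.1 * (reward - self.baseline)
        return step


def run(steps: int = 200, seed: int = 0) -> float:
    rng = random.Random(seed)
    policy = GaussianPolicy()
    monitor = SafetyMonitor(low=-0.5, high=0.5)
    target = 0.3  # assumed ideal actuator setting for this toy task
    for _ in range(steps):
        proposed = policy.sample(rng)
        executed = monitor.filter(proposed)  # override risky actions
        reward = -(executed - target) ** 2
        policy.update(proposed, reward)
    return policy.mean


if __name__ == "__main__":
    print(run())
```

Capping the step bounds how far the policy can drift per update, while the monitor bounds what the actuator ever receives regardless of what the policy proposes.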

Reinforcement Learning for Robotic Grasping

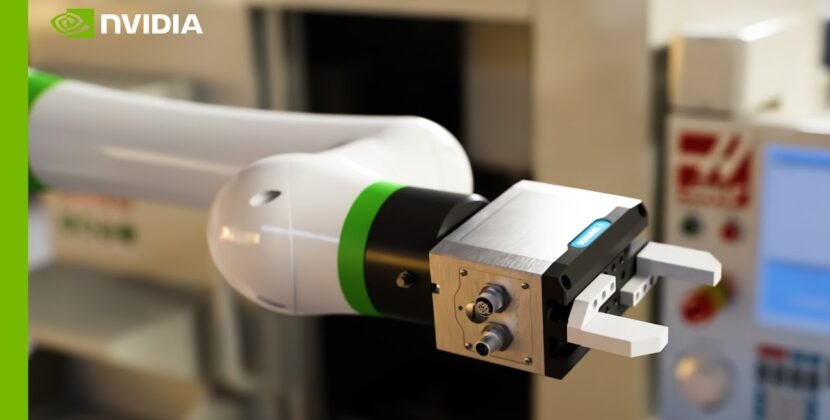

Perhaps one of the most immediate uses for this technology is in pick-and-place operations. Today’s e-commerce and pharmaceutical lines demand robots that can handle thousands of unique objects, some fragile, some translucent, many oddly shaped. Static algorithms struggle in the face of such endless variety.


A robot equipped with the new Isaac SDK can adjust its grip, pressure, and approach angle based on tactile feedback and computer vision. If a grip fails, the robot analyzes the sensor data, updates its local policy, and attempts a different strategy on the next cycle. This level of granular autonomous refinement will eventually lead to the “dark factory” vision, where human participation is required only for high-level tactical oversight rather than mechanical troubleshooting.
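The fail, update, retry cycle described above can be illustrated with a simple bandit-style selector. This is a sketch under assumptions, not the SDK's grasping algorithm: it keeps a running success rate per grasp strategy, starts every strategy with an optimistic estimate so each gets tried, and mostly picks the current best. The class `GraspSelector` and the strategy names are hypothetical.

```python
import random
from typing import Iterable, Optional


class GraspSelector:
    """Epsilon-greedy choice among grasp strategies with optimistic starts."""

    def __init__(self, strategies: Iterable[str], epsilon: float = 0.1,
                 seed: Optional[int] = None) -> None:
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        names = list(strategies)
        self.attempts = {name: 0 for name in names}
        # Optimistic initial estimate: untried strategies look promising.
        self.success_rate = {name: 1.0 for name in names}

    def choose(self) -> str:
        # Occasionally explore a random strategy.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.attempts))
        # Otherwise exploit the best estimate (first one wins ties).
        return max(self.success_rate, key=self.success_rate.get)

    def record(self, strategy: str, succeeded: bool) -> None:
        self.attempts[strategy] += 1
        n = self.attempts[strategy]
        outcome = 1.0 if succeeded else 0.0
        # Incremental mean of observed outcomes for this strategy.
        self.success_rate[strategy] += (outcome - self.success_rate[strategy]) / n


if __name__ == "__main__":
    selector = GraspSelector(["top_pinch", "side_pinch", "suction"], epsilon=0.0)
    first = selector.choose()
    selector.record(first, succeeded=False)  # the grip failed
    print(first, "->", selector.choose())    # a different strategy is tried next
```

A real system would condition the choice on vision and tactile features rather than treating every object the same; the sketch only shows the update-and-retry loop.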

Integration with Omniverse and Digital Twins

While this update focuses on on-device execution, cloud integration still plays a role. Robots that develop more efficient movements can transmit their advancements to digital twins via NVIDIA Omniverse, creating a feedback loop between real and virtual operations.

That data is validated in a rapid-fire simulation before being shared with every robot in the fleet. This sparks a global optimization cycle: robots solve local challenges, and their solutions are tested and spread worldwide. For manufacturers with plants across countries, a robot in Texas can learn from a breakthrough in Germany within hours.

Security and Governance for Autonomous Machines

Enabling autonomous machine updates brings safety and oversight considerations to the forefront. NVIDIA addresses these by aligning the Isaac SDK update with Holoscan and advanced security standards.

Every behavioral update generated through on-device reinforcement learning is logged with a cryptographic signature. This allows facility managers to perform a post-mortem audit if a robot behaves unexpectedly. Furthermore, the SDK supports policy sandboxing, allowing a robot to test a new learned behavior in a virtualized sub-process before sending actual voltage to its physical actuators.
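An auditable update log of this kind can be approximated with Python's standard library. The sketch below is an assumption-laden illustration, not the SDK's logging format: it uses HMAC-SHA256 (a keyed message authentication code) in place of a true asymmetric signature, and chains each entry to the previous one so that editing or reordering entries is detectable. The record fields and function names are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict, List


def sign_update(key: bytes, record: Dict, prev_signature: str = "") -> Dict:
    """Create a log entry whose tag covers the record and the previous tag."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    message = (prev_signature + payload).encode("utf-8")
    signature = hmac.new(key, message, hashlib.sha256).hexdigest()
    return {"record": record, "prev": prev_signature, "signature": signature}


def verify_log(key: bytes, log: List[Dict]) -> bool:
    """Recompute every tag in order; any edit or reordering fails the check."""
    prev = ""
    for entry in log:
        if entry["prev"] != prev:
            return False
        expected = sign_update(key, entry["record"], prev)["signature"]
        if not hmac.compare_digest(expected, entry["signature"]):
            return False
        prev = entry["signature"]
    return True


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # hypothetical per-robot secret
    log: List[Dict] = []
    for step, delta in enumerate([0.02, -0.01]):
        prev = log[-1]["signature"] if log else ""
        log.append(sign_update(key, {"step": step, "mean_delta": delta}, prev))
    print(verify_log(key, log))
```

In a post-mortem audit, a failed verification pinpoints the first entry that no longer matches its tag. A production system would more likely use asymmetric signatures with keys held in secure hardware, so that the party verifying the log cannot also forge it.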

The Economic Impact: Reducing the Total Cost of Ownership

On-device reinforcement learning delivers financial advantages by lowering the total cost of ownership for industrial robotics. Reduced dependence on ongoing human oversight makes robots long-term, self-improving assets, maximizing return on investment.

Looking ahead to the rest of 2026, adopting the new NVIDIA Isaac SDK is likely to become essential for any facility transitioning to Industry 5.0. By blending local AI, hardware-enforced safety, and global simulation, manufacturers can build a resilient ecosystem that withstands the shocks of today’s supply chains.

Conclusion: The New Standard For Factory Intelligence

The latest Isaac SDK update is more than a feature addition; it is a shift in how industrial machines learn. Freed from cloud dependencies, robots now learn directly from hands-on experience, moving autonomous manufacturing another step forward.

For today’s engineers, the mission shifts from programming individual robots to orchestrating entire ecosystems of learning. The machines are ready to evolve. Our role is to create an environment where evolution can prosper.

Source: NVIDIA Isaac