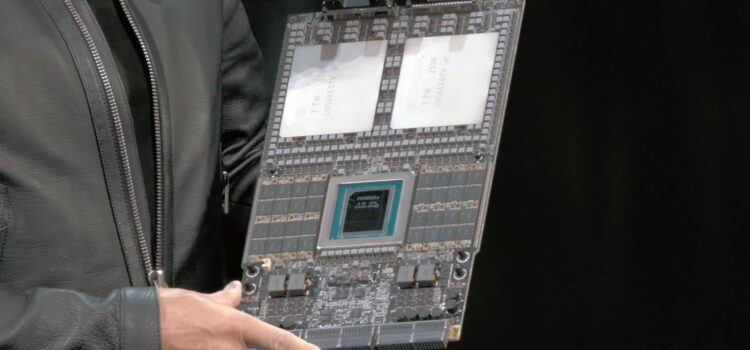

Mobile Conductor: While most of the industry spent 2025 focused on Blackwell GPU supply and HBM3e production, NVIDIA, under CEO Jensen Huang, was working behind the scenes on the chip that would transform it from a GPU supplier into a full-stack data center architect. The industry changed direction at CES 2026, when Huang reviewed the Vera CPU’s technical details.

The Vera CPU is not simply an upgrade to the base architecture. It is a purpose-built agent processor designed to remove the last bottleneck in the AI pipeline: serial processing and data management for autonomous agents. With mass production starting this quarter, Vera is set to become the core of the most advanced supercomputers in 2026.

The Architecture: 88 Olympus Cores

At the core of Vera lies the Olympus microarchitecture, a custom-designed ARMv9.4-A implementation. Unlike its predecessor, which had 72 cores, Vera features 88 high-performance cores per die. These aren’t generic, off-the-shelf ARM designs; they are AI-hardened cores with a specific focus on branch-prediction accuracy for the intricate decision trees used by agentic AI.

The Vera CPU has 512 MB of L3 cache, which is 40% more than the Grace architecture. This on-chip memory reduces the latency of KV cache lookups, which matters for long-context inference. In a dual-socket setup called the Vera-Vera Superchip, one node can use 176 cores, providing the compute needed to handle the heavy data traffic in a Rubin-class data center.
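The core and cache figures quoted above imply a few derived numbers. The short Python sketch below works them out. The constant and function names are illustrative, and the per-core cache figure is only an even split of the quoted L3 capacity, not a claim about how the cache is actually partitioned.

```python
# Figures quoted in the text above.
VERA_CORES_PER_DIE = 88
PREDECESSOR_CORES = 72
VERA_L3_MB = 512
SOCKETS_PER_SUPERCHIP = 2  # dual-socket Vera-Vera configuration


def superchip_cores(cores_per_die: int, sockets: int) -> int:
    """Total cores visible to one node."""
    return cores_per_die * sockets


def percent_increase(new: float, old: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


def l3_per_core_mb(l3_mb: float, cores: int) -> float:
    """Average L3 capacity per core, assuming an even split (an assumption)."""
    return l3_mb / cores


print("cores per node:", superchip_cores(VERA_CORES_PER_DIE, SOCKETS_PER_SUPERCHIP))
print("core count increase (%):", round(percent_increase(VERA_CORES_PER_DIE, PREDECESSOR_CORES), 1))
print("average L3 per core (MB):", round(l3_per_core_mb(VERA_L3_MB, VERA_CORES_PER_DIE), 2))
```

The dual-socket total matches the 176 cores cited above, and the move from 72 to 88 cores works out to roughly a 22% increase in core count.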

Breaking the Memory Wall: HBM4 Integration

Vera’s biggest departure from standard CPUs is its memory system. Instead of using LPDDR5X, NVIDIA has built HBM4 (high-bandwidth memory) right into the CPU. This creates a shared memory pool with the Rubin GPU and offers 2.2 TB/s of memory bandwidth.

This unified memory design is a big deal: the CPU can use the GPU’s memory directly without extra copying. When an AI agent needs to perform tasks such as database searches, API calls, and model updates, the Vera CPU manages everything without the usual PCIe slowdown. NVIDIA says this makes agentic processing five times more efficient than x86-based head nodes.
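To give a rough sense of what avoiding a copy can save, the sketch below estimates how long it takes to move a block of data at a given bandwidth. The 2.2 TB/s figure comes from the text. The PCIe figure is an assumption (roughly the theoretical peak of a PCIe 5.0 x16 link in one direction) and is not taken from the article. Real transfers would be slower than either peak.

```python
HBM4_BANDWIDTH_BYTES_PER_S = 2.2e12  # 2.2 TB/s, as quoted in the text
PCIE_BANDWIDTH_BYTES_PER_S = 64e9    # ASSUMPTION: approx. PCIe 5.0 x16 peak, one direction


def transfer_time_s(num_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Idealized time to move `num_bytes` at a sustained bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return num_bytes / bandwidth_bytes_per_s


def bandwidth_ratio(fast: float, slow: float) -> float:
    """How many times faster the `fast` link is than the `slow` one."""
    return fast / slow


working_set = 22e9  # hypothetical 22 GB working set, for illustration only
print("over assumed PCIe link (s):", transfer_time_s(working_set, PCIE_BANDWIDTH_BYTES_PER_S))
print("at quoted HBM4 bandwidth (s):", transfer_time_s(working_set, HBM4_BANDWIDTH_BYTES_PER_S))
print("peak bandwidth ratio:", bandwidth_ratio(HBM4_BANDWIDTH_BYTES_PER_S, PCIE_BANDWIDTH_BYTES_PER_S))
```

This is a back-of-the-envelope comparison of peak rates only. It does not model latency, contention, or the efficiency figure NVIDIA cites.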

The Agentic Instruction Set

Vera stands out among competitors, such as Intel’s 2026 Diamond Rapids and AMD’s Turin, thanks to its support for agent extensions. This is a special instruction set designed for the chain-of-thought processing used in models such as GPT-5.2 and Gemini 3.1.

These extensions speed up the token-to-action process. When a model needs to use a tool, such as running a web search or writing to a database, the Vera CPU sends that work to a dedicated secure logic engine on the chip. The main cores continue to focus on high-level reasoning, which helps avoid the slowdowns often seen in complex autonomous tasks.
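The offload pattern described above can be illustrated with a software analogy. The sketch below is not Vera's instruction set or any NVIDIA API. It uses Python's standard thread pool to stand in for the separate engine, and `run_tool`, `reason`, and `agent_loop` are hypothetical names invented for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def run_tool(name: str, argument: str) -> str:
    """Hypothetical tool handler standing in for a web search or database write."""
    return f"{name}({argument}) done"


def reason(step: int) -> str:
    """Hypothetical stand-in for the high-level reasoning done on the main cores."""
    return f"reasoning step {step}"


def agent_loop(tool_calls, reasoning_steps):
    """Submit tool calls to a separate worker pool, keep reasoning, then collect results."""
    with ThreadPoolExecutor(max_workers=2) as tool_engine:
        # Hand the tool work off without blocking the main loop.
        pending = [tool_engine.submit(run_tool, name, arg) for name, arg in tool_calls]
        # The main thread continues its own work while the tools run.
        thoughts = [reason(i) for i in range(reasoning_steps)]
        # Gather tool results in submission order.
        results = [future.result() for future in pending]
    return thoughts, results


thoughts, results = agent_loop([("web_search", "vera cpu"), ("db_write", "row 1")], 3)
print(thoughts)
print(results)
```

The design point is the same one the text makes: the caller does not wait on each tool call, so reasoning and tool execution overlap.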

Connectivity: NVLink 6 and CXL 3.1

Vera is the first CPU to support NVLink 6, providing a fast 3.6 TB/s connection to the Rubin GPU. It also uses CXL 3.1 for memory pooling, which lets multiple racks share a large global memory pool and enables the training of world models with more than 50 trillion parameters.
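To see why a model with 50 trillion parameters calls for pooled memory, the sketch below estimates the size of the weights alone. The 2 bytes per parameter (16-bit weights) and the 1 TB per pool member are assumptions chosen for illustration, not figures from the article. Training typically needs several times more memory than the weights because of gradients and optimizer state.

```python
import math

PARAMETERS = 50 * 10**12            # 50 trillion parameters, as quoted in the text
BYTES_PER_PARAMETER = 2             # ASSUMPTION: 16-bit weights
BYTES_PER_POOL_MEMBER = 1 * 10**12  # ASSUMPTION: hypothetical 1 TB per pool member


def weights_size_bytes(parameters: int, bytes_per_parameter: int) -> int:
    """Memory needed to hold the model weights only."""
    return parameters * bytes_per_parameter


def pool_members_needed(total_bytes: int, bytes_per_member: int) -> int:
    """Smallest number of equally sized pool members that can hold `total_bytes`."""
    return math.ceil(total_bytes / bytes_per_member)


total = weights_size_bytes(PARAMETERS, BYTES_PER_PARAMETER)
print("weights (TB):", total / 10**12)
print("pool members needed:", pool_members_needed(total, BYTES_PER_POOL_MEMBER))
```

Under these assumptions the weights alone occupy 100 TB, far more than any single node's memory, which is the motivation for sharing memory across racks.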

With BlueField DPU logic integrated into Vera, infrastructure tasks such as encryption, packet inspection, and storage virtualization are handled at the processor’s edge. This frees up about 15% of the core capacity that was previously lost to data center overhead.

Power Efficiency: The 2nm Milestone

Manufactured on a refined 2nm-class process, Vera is optimized for high-performance computing, with a TDP of about 450 W for the full superchip. It delivers three times the performance of a 2024 x86 server and uses 40% less power when idle. For data centers planned for 2026 and 2027, this power profile can be the difference between a project being viable and being cancelled due to grid constraints. NVIDIA’s green supercomputing initiative is built entirely on Vera’s ability to do more with every joule of energy.

The 2026 Supercomputer Roadmap

The first deployments of the Vera CPU will be in the Vera Rubin Superchip, which pairs one Vera CPU with one Rubin GPU. These will be installed in the NVL72 rack, which works as a single large AI processor.

Research institutions and cloud providers such as AWS, Azure, and Google Cloud have already placed priority orders for Vera-based systems. These machines are expected to lead to breakthroughs in several fields:

- Climate science: Running Earth-2 simulations with 10x higher granularity.

- Drug discovery: Simulating protein folding in real time using agentic feedback loops.

- Autonomous systems: Training the Alpamayo-R1 model for Level 3 self-driving vehicles.

Conclusion: The End of the General-Purpose Era

Vera’s specs suggest that general-purpose server CPUs are no longer sufficient for high-end AI workloads. By designing a chip with AI agents as its primary users, NVIDIA has built a specialized powerhouse that other companies will likely try to match over the next few years.

Vera is far more than a CPU: as production begins in early 2026, it takes the lead role in the AI system. Enterprises now need to focus less on which CPU to buy and more on how quickly they can adopt the Vera-Rubin architecture to remain competitive in a world driven by autonomous agentic intelligence.