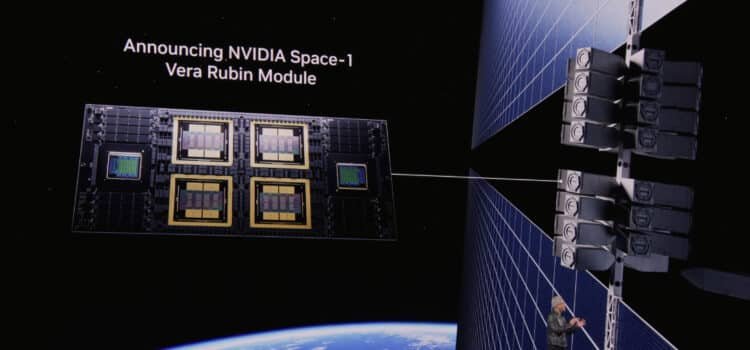

NVIDIA is unveiling the Vera Rubin platform, introducing a new era in AI as seven new chips enter full production to scale the world’s largest AI factories.

The platform combines an NVIDIA GPU, a Vera CPU, a Rubin GPU, and an NVLink 6 switch. Connectex 9, SuperNic, Bluefield 4 DPU, Spectrum 6 Ethernet switch, and the new Groq 3 LPU. These chips work together as a single AI supercomputer, powering every single stage of AI from large-scale training and testing to real-time agent tech influence.

Jensen Huang, Founder and CEO of NVIDIA, stated that Vera Rubin marks a generation with seven breakthrough chips, five racks, one large supercomputer, all built to support every phase of AI. According to Huang, the arrival of Agentic AI accelerates the latest infrastructure build-out in history.

Enterprises and developers are using the cloud for increasingly intricate, agentic workflows and mission-critical decisions. “That demands infrastructure that can keep pace,” said Dario Amadei, CEO and co‑founder of Anthropic. NVIDIA’s Vera Rubin Platform gives us the compute, networking, and system design to keep delivering as we advance the safety and reliability our customers depend on.

Sam Altman, CEO of OpenAI, affirmed that NVIDIA’s infrastructure is the foundation for continued AI advancements. With NVIDIA, Vera Rubin, and OpenAI expect to run more powerful models and agents at scale, delivering faster, more reliable systems to a broad user base.

Shift to POD Scale Systems

AI infrastructure is evolving from separate checks to servers to fully integrated rack-scale systems to POD-scale deployments to AI factories to sovereign AI. These changes deliver higher performance and make AI more cost-efficient for organizations of any size. They also enhance accessibility to powerful AI and improve energy efficiency, reducing costs for intensive workloads.

By closely integrating compute, networking, and storage and working with over 80 NVIDIA MGX partners worldwide, NVIDIA and Vera Rubin deliver the most extensive POD-scale platform. This supercomputer combines multiple AI-focused racks into a single unified system.

NVIDIA Vera Rubin NVL72, Rack

The Vera Rubin NL72 delivers key benefits by combining 72 Rubin GPUs and 36 Vera CPUs connected via NVLink 6, along with ConnectX 9, SuperNic, and Bluefield 4 DPUs. It enables streaming large mixture-of-experts models with only a quarter of the GPUs required by the NVIDIA Blackwell platform while delivering up to 10 times higher inference throughput per watt and reducing the cost per token by 90%.

NVL72 is built for large-scale AI factories worldwide. It works smoothly with NVIDIA Quantum X800 InfiniBand and Spectrum X Ethernet, keeping graphics processing utility unit clusters running efficiently while cutting training time and overall costs.

NVIDIA Vera CPU Rack

Reinforcement learning and agentic AI workloads depend on large numbers of CPU-based environments to test and validate results generated by models running on GPU systems.

The NVIDIA Vera CPU Rack features a lens liquid-cooled setup powered by NVIDIA MyMJX and 256 Vera CPUs. It delivers scalable, energy-efficient performance and top single-core performance, enabling large-scale agentic AI.

With Spectrum-X Ethernet Networking (a network infrastructure solution), Vera CPU Racks keep CPU environments in sync across the AI factory. Alongside GPU Compute Racks, they form the CPU base for large-scale agentic AI and reinforcement learning. CPUs in this system deliver results twice as efficiently and 50% faster than traditional CPUs.

NVIDIA Groq3 LPX Rack

Media, Groq3, LPX, Advances Accelerated Computing with Key Benefits. It is designed for low-latency, large-context, agentic systems and delivers up to 35 times higher inference throughput per megawatt and up to 10 times greater revenue potential for trillion-parameter models when used with Vera Rubin. When scaled up, many LPUs can work together as a single large processor for fast, predictable inference. The LPX rack has 256 LPU processors, 128 GB of on-chip SRAM, and 640 TB/s of bandwidth. Used with Vera Rubin, NVL72, Rubin GPUs, and LPUs, speeds up decoding by computing every layer of the AI model for each output token in parallel.

The LPX architecture is optimized for Trillium parameter models and million-token contexts, working with Vera Rubin to maximize power, memory, and compute. Its higher throughput per watt and better token performance open up a new level of high-end trillion-parameter inference, creating more revenue opportunities for AI providers. LPX is fully liquid-cooled, built on MGX infrastructure, and will be available in the second half of this year as part of next-generation Vera Rubin AI.3.

NVIDIA Bluefield 4STX Storage Rack

The NVIDIA Bluefield-4 STX RackScale System is a storage solution created for AI, extending GPU memory across the POD and powered by Bluefield-4, which combines the NVIDIA Vera CPU and ConnectX-9 SuperNIC. STX provides a high-bandwidth shared layer for storing and retrieving a large key-value cache derived from data from language models and agentic AI workflows.

NVIDIA DOCA Memos, a new DOCA framework that enhances BlueField for storage, allows dedicated KV Cache storage processing. This boosts inference throughput by up to 5x and greatly improves power efficiency compared to general-purpose storage. As a result, the system provides a broader context for faster multi-term interactions with AI agents, more scalable AI services, and better overall infrastructure utilization.

Timothee Lacroix, Co-Founder and Chief Technology Officer of Mistral AI, commented that the NVIDIA BlueField for STX rack-scale complex memory storage system delivers the significant performance boost required to expand agentic AI. Lacroix highlighted that by introducing a storage tier designed specifically for AI agents, primary STX helps maintain coherence and speed during complex reasoning over large datasets.

NVIDIA Spectrum 6 SPX Ethernet Rack

Spectrum-6 SPX Ethernet is designed to accelerate east–west traffic (data transfer between servers in a data center) in AI factories. It can be set up with Spectrum X Ethernet (networking technology) for NVIDIA Quantum X800 InfiniBand switches (high-speed data connections), providing fast, high-throughput connections between racks at scale.

Spectrum-X Ethernet Photonics with pro-packaged optics (advanced optical technology for networking) delivers up to 5× better optical power efficiency and 10× more resiliency than traditional pluggable transceivers.

Improving Resiliency and Energy Efficiency

NVIDIA and over 200 data center partners announced the NVIDIA DSX platform for Vera Rubin. DSX Max-Q dynamically manages power across the AI factory, enabling data centers to deploy 30% more AI infrastructure within the same power limits. The new DSX Flex software makes AI factories grid flexible, unlocking 100 gigawatts of unused grid power.

NVIDIA also released the Vera Rubin DSX AI Factory Reference Design, a blueprint for AI infrastructure that maximizes tokens per watt and overall output. This design improves system resiliency (ability to handle outages) and speeds up time to first production.

By tightly integrating compute, networking, storage, power, and cooling, this architecture improves energy efficiency and supports reliable AI factory scaling under heavy workloads while maintaining high uptime.

Broad Ecosystem Support

Vera Rubin based products will become available from partners in the second half of the year. Leading cloud providers. Amazon Web Services, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure along with NVIDIA cloud partners CoreWeave, Cursoe, Lombard, Nebius, Nscale, and Together AI will offer these products.

Global system manufacturers such as Cisco, Dell Technologies, HPE, Lenovo, and Supermicro plan to offer a variety of servers based on Vera Rubin products. Additional partners include Aivres, Asus, Foxconn, Gigabyte, Inventec, Pegatron, Quanta Cloud Technology (QCT), Wistron, and Wiwynn.

AI labs and leading model developers, including Anthropic, Meta, Mistral AI, and OpenAI, intend to use the NVIDIA Vera Rubin platform to train larger, more advanced models. Their goal is to deliver long-context, multimodal systems with reduced latency and lower costs than previous GPU generations.