As confidential computing grows, keeping generative AI secure is now a top priority for enterprise architects. When companies shift from pilot projects to full-scale use of Large Language Models (LLMs), protecting model weights and prompt data during inference becomes a major challenge. To help solve this, Amazon Web Services has released AWS Nitro Enclaves v3.4, which introduces Sealed Generative Logic Isolation.

This new feature changes how “data in use” is protected in the cloud. By building on the Nitro System, AWS now offers a secure, verifiable space where generative workloads can run without being exposed to the parent instance, system administrators, or the cloud provider.

The Architecture of Sealed Generative Logic Isolation

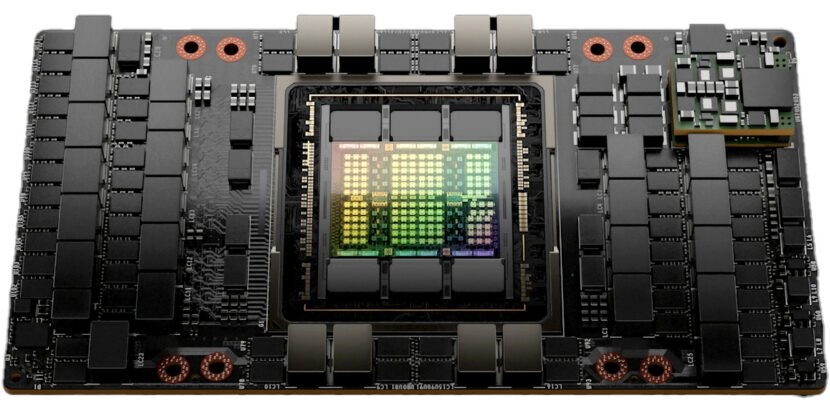

Sealed Generative Logic Isolation is a security feature designed for the high memory and compute demands of modern AI. Older confidential computing setups often struggle with large-model inference. Nitro Enclaves v3.4 addresses this by adding a hardware-based “seal” around the enclave’s memory and execution.

When a generative model runs in an enclave, Sealed Generative Logic Isolation keeps the entire inference path, from reading the prompt to producing the output, inside a secure boundary. These enclaves block interactive access, have no persistent storage, and have no external networking. Data moves only over a secure local vsock channel to the parent EC2 instance, which relays encrypted data.
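The vsock relay described above can be sketched as a small length-prefixed framing layer. This is a minimal illustration, not the article's or AWS's implementation: the port number, the 4-byte length prefix, and the function names are assumptions made for the example. The only platform facts relied on are that Python exposes `socket.AF_VSOCK` on Linux and that the parent instance is reachable at CID 3.

```python
import socket
import struct

# Illustrative values only; the port is an assumption, not from the article.
PARENT_CID = 3      # CID at which the parent instance is reachable from an enclave
RELAY_PORT = 5005   # hypothetical port for a relay service on the parent


def send_frame(sock: socket.socket, payload: bytes) -> None:
    """Send one frame: a 4-byte big-endian length followed by the payload."""
    sock.sendall(struct.pack(">I", len(payload)) + payload)


def _recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, or raise if the peer closes early."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("peer closed before the full frame arrived")
        buf.extend(chunk)
    return bytes(buf)


def recv_frame(sock: socket.socket) -> bytes:
    """Receive one length-prefixed frame and return its payload."""
    (length,) = struct.unpack(">I", _recv_exact(sock, 4))
    return _recv_exact(sock, length)


def connect_to_parent(cid: int = PARENT_CID, port: int = RELAY_PORT) -> socket.socket:
    """Open a vsock stream to the parent instance (Linux only)."""
    sock = socket.socket(socket.AF_VSOCK, socket.SOCK_STREAM)
    sock.connect((cid, port))
    return sock
```

Because the framing functions accept any stream socket, they can be exercised locally with `socket.socketpair()` before being pointed at a real vsock connection. The payloads are expected to be ciphertext already, so the parent only ever relays opaque bytes.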

Cryptographic Attestation and Model Integrity

A key update in v3.4 is the enhanced attestation document. In generative AI, proving that the correct model is running is as important as protecting the data. Nitro Enclaves v3.4 allows detailed measurement of model weights and logic using Platform Configuration Registers (PCRs).
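To make the idea of measuring weights into a PCR concrete, here is a generic hash-chain sketch in the style of a PCR "extend" operation. The article does not specify how v3.4 measures model weights, so everything here is an assumption for illustration: the function names, the choice to hash each chunk before extending, and the all-zero starting value. The only fixed point is that Nitro Enclaves PCR values are SHA-384 digests.

```python
import hashlib
from typing import Iterable

PCR_SIZE = 48  # length of a SHA-384 digest in bytes


def extend(pcr: bytes, data: bytes) -> bytes:
    """Fold new data into a running measurement: SHA-384(old value || data)."""
    return hashlib.sha384(pcr + data).digest()


def measure_chunks(chunks: Iterable[bytes]) -> bytes:
    """Measure an ordered sequence of byte chunks (e.g. weight shards).

    Starts from an all-zero register and extends it with the digest of
    each chunk, so the result depends on both content and order.
    """
    pcr = bytes(PCR_SIZE)
    for chunk in chunks:
        pcr = extend(pcr, hashlib.sha384(chunk).digest())
    return pcr
```

Because each step hashes the previous value together with the new digest, changing a single bit in any chunk, or reordering the chunks, produces a different final measurement. That property is what lets a verifier compare one short value against an expected one.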

Through integration with AWS Key Management Service (KMS), a Nitro Enclave can cryptographically prove its identity and the integrity of its “Generative Logic” before any decryption keys are released. This means that an LLM’s weights, often a company’s most valuable intellectual property, remain encrypted in Amazon S3 and are decrypted only in the enclave’s volatile memory. If the enclave’s code or the model’s signature is altered by even a single bit, the attestation fails, and the “seal” prevents the logic from being executed.
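One way to express "no key release without a matching attestation" is a KMS key policy statement conditioned on the enclave image measurement. The sketch below builds such a statement as a Python dictionary. It uses the existing `kms:RecipientAttestation:ImageSha384` condition key; whether v3.4 adds model-specific condition keys is not stated in the article, so none are assumed. The function name, the `Sid`, and the role ARN in the usage note are hypothetical.

```python
import json

HEX_DIGITS = set("0123456789abcdef")


def attested_decrypt_statement(role_arn: str, image_sha384: str) -> dict:
    """Build a key policy statement allowing kms:Decrypt only when the
    request carries an attestation document with the expected image digest.

    image_sha384 is the hex-encoded SHA-384 measurement of the enclave image.
    """
    digest = image_sha384.lower()
    if len(digest) != 96 or not set(digest) <= HEX_DIGITS:
        raise ValueError("expected a 96-character hex SHA-384 digest")
    return {
        "Sid": "AllowDecryptOnlyFromAttestedEnclave",  # illustrative label
        "Effect": "Allow",
        "Principal": {"AWS": role_arn},
        "Action": "kms:Decrypt",
        "Resource": "*",
        "Condition": {
            "StringEqualsIgnoreCase": {
                "kms:RecipientAttestation:ImageSha384": digest
            }
        },
    }


def to_policy_json(statement: dict) -> str:
    """Wrap a single statement in a policy document and serialize it."""
    return json.dumps({"Version": "2012-10-17", "Statement": [statement]}, indent=2)
```

For example, `to_policy_json(attested_decrypt_statement("arn:aws:iam::111122223333:role/example-enclave-role", measured_digest))` yields a document that can be reviewed before being attached to the key. With such a condition in place, a rebuilt or tampered image produces a different digest and the decrypt request is denied.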

Confronting the Challenges of Generative AI at Scale

Large-scale AI deployments face three primary security hurdles: prompt leakage, model weight theft, and exposure of inference telemetry. AWS Nitro Enclaves v3.4 addresses these with a layered isolation approach. Applications can be built so that the parent instance never sees the unencrypted prompt or the model’s response. This is essential for domains such as healthcare and finance, where Personally Identifiable Information (PII) must be processed by AI without being logged or stored.

- Persistent Model Protection: Since enclaves lack persistent storage, decrypted model weights exist only in memory. When the enclave shuts down, the Nitro Hypervisor securely erases the memory, so attackers cannot recover any data.

- Refined Resource Allocation: v3.4 improves the balance between memory and compute, so larger enclaves can use high-performance instances like the c7i and r7g series. This means the added security does not significantly slow down inference.

Pragmatic Deployment Workflows

To deploy Sealed Generative Logic Isolation, you follow a clear DevOps process. First, you create an Enclave Image File (EIF) with the inference engine, such as vLLM or llama.cpp, and the required startup code.

In v3.4, nitro-cli supports larger images and complex dependencies, simplifying the containerization of multimodal models. After signing and deploying the EIF, the enclave retrieves the model’s decryption key from KMS using its unique identity, keeping models isolated from the parent OS kernel.
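The build-and-run steps above can be sketched as two `nitro-cli` invocations assembled in Python. The subcommands and flags used here (`build-enclave`, `run-enclave`, `--docker-uri`, `--output-file`, `--private-key`, `--signing-certificate`, `--eif-path`, `--cpu-count`, `--memory`, `--enclave-cid`) exist in the current nitro-cli; any v3.4-specific options are not described in the article and are not assumed. The helper names and all paths and sizes in the usage note are placeholders.

```python
from typing import List, Optional


def build_enclave_cmd(
    docker_uri: str,
    eif_path: str,
    private_key: Optional[str] = None,
    signing_cert: Optional[str] = None,
) -> List[str]:
    """Assemble the argv for building (and optionally signing) an EIF."""
    cmd = [
        "nitro-cli", "build-enclave",
        "--docker-uri", docker_uri,
        "--output-file", eif_path,
    ]
    # Signing needs both the key and the certificate; skip it otherwise.
    if private_key and signing_cert:
        cmd += ["--private-key", private_key, "--signing-certificate", signing_cert]
    return cmd


def run_enclave_cmd(
    eif_path: str,
    cpu_count: int,
    memory_mib: int,
    enclave_cid: Optional[int] = None,
) -> List[str]:
    """Assemble the argv for launching an enclave from an EIF."""
    cmd = [
        "nitro-cli", "run-enclave",
        "--eif-path", eif_path,
        "--cpu-count", str(cpu_count),
        "--memory", str(memory_mib),
    ]
    if enclave_cid is not None:
        cmd += ["--enclave-cid", str(enclave_cid)]
    return cmd
```

On a parent instance with nitro-cli installed, each list can be passed to `subprocess.run(cmd, check=True)`, for example `build_enclave_cmd("inference:latest", "inference.eif")` followed by `run_enclave_cmd("inference.eif", 8, 65536)`. Returning argv lists rather than shell strings avoids quoting problems and makes the commands easy to log and review.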

The Shift Toward Sovereign and Compliant AI

This release comes as global regulations move toward Sovereign AI. Governments and international bodies now require AI processing to remain within specific legal and security boundaries. Using AWS Nitro Enclaves v3.4, organizations can demonstrate a “Zero-Trust” setup for their AI workloads.

Isolation also matters for multi-party collaboration. With v3.4, two organizations can bring sensitive data into a single enclave and run specialized analysis or LoRA-based fine-tuning there, while the raw data stays inaccessible to either party. The enclave acts as a secure, neutral “clean room” for generative logic.

Conclusion: The New Baseline for AI Trust

As generative AI transitions from novelty to a core component of enterprise infrastructure, the underlying “plumbing” must be as robust as the models themselves. The introduction of Sealed Generative Logic Isolation in AWS Nitro Enclaves v3.4 provides the technical foundation for this trust. By hardware-sealing the inference process, AWS is removing the major barriers to AI adoption in highly regulated sectors.

For organizations seeking to securely integrate LLMs into workflows, v3.4 sets a new standard. It protects model intelligence, even in cloud environments.

Source: What is Nitro Enclaves?