In high-performance computing, hardware is only as useful as the software that exposes it. The release of CUDA 13.1 in 2026 was more than a routine update: alongside it, NVIDIA confirmed full specifications for its most powerful Blackwell accelerator, the B200 Ultra, with 144 GB of HBM3e memory per GPU. Developers now have the resources needed for trillion-parameter models and advanced AI workflows.

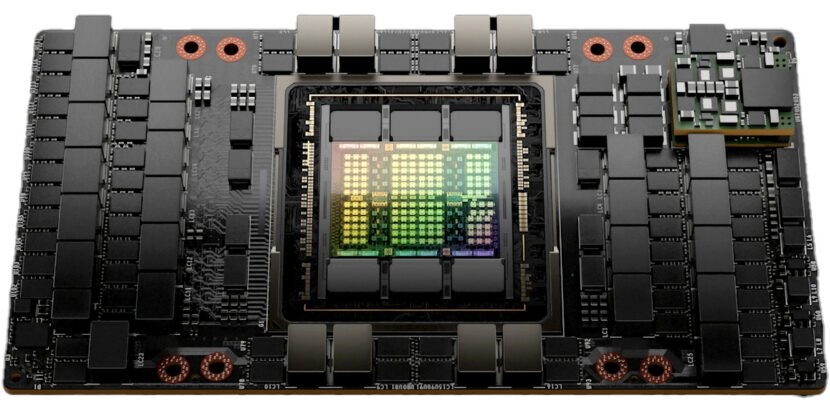

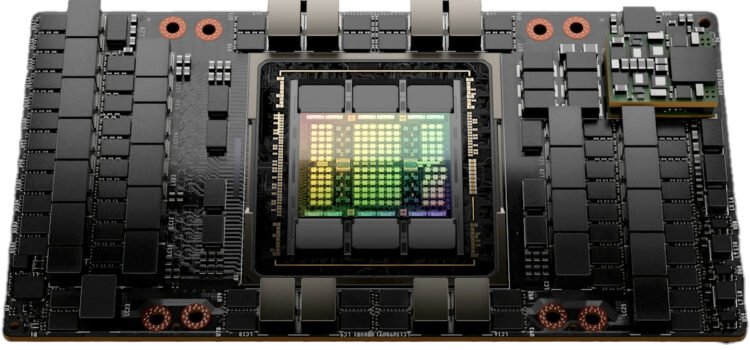

The Architecture of the B200 Ultra

The B200 Ultra is the peak of the Blackwell architecture, addressing memory bottlenecks seen in Hopper-based systems (the architecture family that preceded Blackwell). While the standard B200 was a major step, the Ultra variant targets the AI Factory era, in which model weights and KV (key-value) caches grow rapidly with each generation of AI workloads.

NVIDIA uses 12 high-bandwidth memory (HBM3e) stacks to reach 144 GB on the B200 Ultra, enabled by a dual-die design in which two chips are linked via the NVIDIA High-Bandwidth Interface (NV-HBI), a specialized high-speed connection. This 10 TB/s (terabytes per second) link joins the two dies into a single accelerator, allowing CUDA 13.1 to address the full memory pool as one device rather than two.

CUDA 13.1: The Software Enabler for 144 GB

CUDA 13.1 is the technical bridge that lets developers harness this capacity. A key addition is CUDA Tile, a programming model that abstracts Blackwell’s hardware complexity.

Previously, GPU programming required managing data at the thread and warp level under the SIMT (single instruction, multiple threads) model. As memory and hardware grow more complex, this becomes harder. CUDA Tile lets developers break work into tiles, or chunks of data, which the compiler and runtime map onto the B200 Ultra’s 144 GB of memory and its Tensor Cores. The memory bandwidth, about 8 TB/s, is used efficiently, and programmers avoid managing low-level details by hand.
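To make the tile idea concrete, here is a minimal conceptual sketch in plain Python. It is not the CUDA Tile API and does not run on a GPU; the function name `tiled_matmul` and the `tile` parameter are assumptions chosen for illustration. It only shows how a matrix multiply can be expressed as independent output tiles rather than per-thread work.

```python
# Conceptual sketch only: plain Python, NOT the CUDA Tile API.
# tiled_matmul and its "tile" parameter are hypothetical names.

def tiled_matmul(a, b, tile=2):
    """Multiply a (n x k) by b (k x m), processing one tile at a time."""
    n, k, m = len(a), len(b), len(b[0])
    c = [[0.0] * m for _ in range(n)]
    # Each (i0, j0) pair names one output tile. On a GPU, such tiles
    # would be independent units of work scheduled by compiler and runtime.
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            # Walk the shared dimension in tile-sized steps.
            for k0 in range(0, k, tile):
                for i in range(i0, min(i0 + tile, n)):
                    for j in range(j0, min(j0 + tile, m)):
                        acc = 0.0
                        for p in range(k0, min(k0 + tile, k)):
                            acc += a[i][p] * b[p][j]
                        c[i][j] += acc
    return c


if __name__ == "__main__":
    a = [[1, 2, 3], [4, 5, 6]]
    b = [[7, 8], [9, 10], [11, 12]]
    print(tiled_matmul(a, b))
```

The point of the structure is that the programmer describes what happens to a tile, while the placement of tiles onto memory and compute units is left to the toolchain.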

Memory Locality and Green Contexts

Among the most significant features in CUDA 13.1 are those that govern how data is moved, stored, and partitioned on the GPU.

Green Contexts: These let system administrators set up and manage separate partitions of GPU resources on a B200 Ultra with 144 GB of HBM3e. A single card can be split into several isolated environments, each with its own memory and streaming multiprocessors (SMs, the fundamental compute units of a GPU). This is especially useful for AI hubs and data centers that serve multiple users. For example, one B200 Ultra can run a secure government language model in one partition and a commercial AI agent in another, with the hardware ensuring that no data leaks between them.

Why 144 GB Matters: The LLM and MoE Challenge

The jump to 144 GB of HBM3e meets the specific needs of mixture-of-experts (MoE) architectures and large language models with long context windows. By 2026, models like Gemini 2.0 and GPT-5 will require substantial memory to hold millions of tokens in context simultaneously.
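A rough estimate shows why long contexts are memory-hungry. The sketch below uses the standard key-value cache sizing for a transformer (one key and one value tensor per layer). The model dimensions in the example are hypothetical and do not describe any model named above.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2):
    """Estimate KV-cache size in bytes for one sequence.

    The leading factor of 2 accounts for storing both keys and values
    in every layer. bytes_per_value=2 assumes 16-bit cache entries.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


if __name__ == "__main__":
    # Hypothetical model: 80 layers, 8 KV heads, head dimension 128,
    # with a context of one million tokens.
    size = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, tokens=1_000_000)
    print(f"{size / 1e9:.2f} GB")  # prints "327.68 GB"
```

Under these assumed dimensions, the cache for a single million-token sequence already exceeds the memory of one GPU, before any weights are loaded.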

Previously, developers relied on model sharding, splitting a model across several GPUs, which added delays because of interconnect latency. With 144 GB of memory, the B200 Ultra fits larger models on a single GPU or on fewer GPUs per cluster, cutting costs and improving real-time response.
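The effect on sharding can be sketched with simple arithmetic. The helper below is an illustration, not a sizing tool: the name `gpus_needed`, the 90% usable-memory fraction, the 500 GB model, and the 80 GB comparison GPU are all assumptions.

```python
import math


def gpus_needed(model_gb, gpu_gb, usable_fraction=0.9):
    """Minimum number of GPUs needed to hold a model of model_gb gigabytes.

    usable_fraction is an assumed allowance for memory that is not
    available to weights (activations, buffers, runtime overhead).
    """
    usable_gb = gpu_gb * usable_fraction
    return math.ceil(model_gb / usable_gb)


if __name__ == "__main__":
    model_gb = 500  # hypothetical model footprint
    print(gpus_needed(model_gb, 144))  # 144 GB per GPU -> 4
    print(gpus_needed(model_gb, 80))   # assumed 80 GB GPU -> 7
```

Fewer shards means fewer cross-GPU transfers per token, which is where the latency saving comes from.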

The B200 Ultra supports FP4 (4-bit floating-point) precision in CUDA 13.1, halving the memory each weight needs relative to 8-bit formats and effectively doubling usable memory capacity. With FP4, developers can fit models that previously required 288 GB into 144 GB with minimal accuracy loss, thanks to the Blackwell Transformer Engine’s dynamic scaling.
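The 288 GB to 144 GB figure follows directly from bits per weight. The sketch below assumes the 288 GB baseline refers to 8-bit weights and ignores the small overhead of the scaling factors that low-precision formats carry; `weights_gb` is a hypothetical helper name.

```python
# Bits used to store one weight at each precision.
BITS_PER_WEIGHT = {"fp16": 16, "fp8": 8, "fp4": 4}


def weights_gb(num_params, precision):
    """Weight storage in GB (1 GB = 1e9 bytes), excluding scale factors."""
    return num_params * BITS_PER_WEIGHT[precision] / 8 / 1e9


if __name__ == "__main__":
    params = 288_000_000_000  # hypothetical 288-billion-parameter model
    for precision in ("fp16", "fp8", "fp4"):
        print(precision, weights_gb(params, precision), "GB")
```

For this assumed model, the weights take 576 GB at FP16, 288 GB at FP8, and 144 GB at FP4.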

Interconnect and Rack-Scale Integration

The B200 Ultra is powerful on its own, but it really shines when connected with NVLink 5 (NVIDIA’s latest high-speed interconnect technology for GPUs). CUDA 13.1 improves communication techniques for the NVLink switch system, enabling up to 576 GPUs to work together over a single fast network.

A standard DGX B200 setup combines eight 144 GB units for more than 1.1 TB of HBM3e memory (8 × 144 GB = 1,152 GB). This memory density enables the development of models with trillions of parameters, something that was not possible just two years ago. CUDA 13.1 also boosts the performance of the NVIDIA Collective Communications Library (NCCL), so operations like all-reduce and all-gather run at the full 1.8 TB/s speed of NVLink 5.
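For readers unfamiliar with collectives, the sketch below shows what all-reduce and all-gather compute, using plain Python lists to stand in for per-GPU buffers. It models only the results, not NCCL's API or its communication algorithms; the function names are assumptions for illustration.

```python
def all_reduce_sum(rank_buffers):
    """Every rank ends up with the element-wise sum of all ranks' buffers."""
    total = [sum(values) for values in zip(*rank_buffers)]
    return [list(total) for _ in rank_buffers]


def all_gather(rank_buffers):
    """Every rank ends up with the concatenation of all ranks' buffers."""
    gathered = [x for buf in rank_buffers for x in buf]
    return [list(gathered) for _ in rank_buffers]


if __name__ == "__main__":
    # Three simulated GPUs, each holding a small gradient shard.
    grads = [[1, 2], [3, 4], [5, 6]]
    print(all_reduce_sum(grads))          # each rank holds [9, 12]
    print(all_gather([[1], [2], [3]]))    # each rank holds [1, 2, 3]
```

In training, all-reduce typically combines gradients across GPUs and all-gather reassembles sharded tensors, so both sit on the critical path that interconnect bandwidth determines.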

Developer Productivity and Future Proofing

Finally, NVIDIA has used the CUDA 13.1 update to modernize the developer experience. The toolkit now ships as a unified version for both Tegra embedded platforms (NVIDIA’s line for mobile and edge devices) and desktop and data-center GPUs, reducing overhead for developers building cross-platform AI applications. The addition of cuTile Python, a domain-specific language (DSL) for authoring tile-based kernels, lets data scientists write high-performance GPU code directly in Python, avoiding low-level C++ for many common optimization tasks.

The focus on productivity ensures the transition to the Blackwell Ultra platform remains as seamless as possible. Companies that have invested in the CUDA ecosystem over the last decade will find that their current codebases are forward-compatible, gaining performance boosts simply by recompiling with the CUDA 13.1 toolkit to take advantage of the B200 Ultra’s new memory resource abstractions.

Final Thoughts: The New Baseline for AI Infrastructure

With CUDA 13.1 confirming 144 GB HBM3e memory for the B200 Ultra, a new standard has emerged for enterprise and national AI infrastructure. Large memory and a software stack focused on abstraction, safety, and multi-tenant use secure NVIDIA’s place in AI.

As the B200 Ultra ships to top cloud providers and research labs in early 2026, the question will move from “How much memory is available?” to “How can we use it best?” With 144 GB, AI researchers are no longer held back by hardware, only by the challenges they decide to tackle.