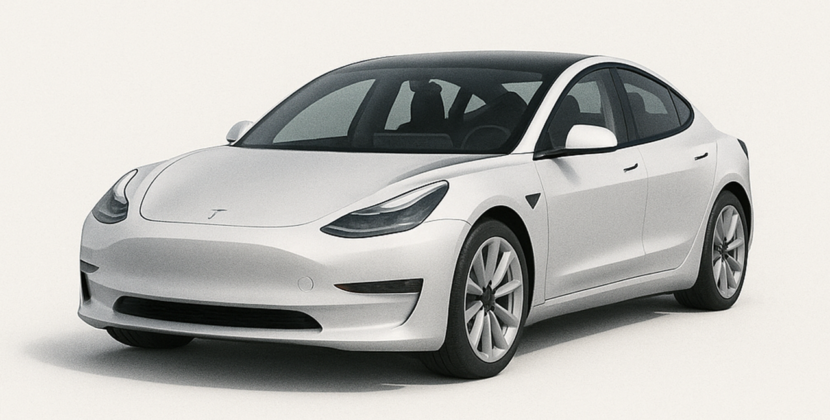

In autonomous mobility, the benchmark for full self-driving has shifted. It now requires deep semantic understanding (comprehending an environment's meaning and context), not just obstacle avoidance. In March 2026, Tesla's Gen 3 firmware introduced a paradigm-defining feature: VLM (vision language model) logic for terrain adaptation. By embedding vision language models in the reasoning stack responsible for deliberate, complex decisions, Tesla moves beyond traditional occupancy grids, which are basic maps showing only where objects are present. This approach lets its fleet interpret and navigate unstructured environments with human-like intuition.

This update is the most significant architectural change to Tesla's Neural Stack since the introduction of end-to-end neural networks in FSD V12, where a single neural network manages the entire driving process. The new update addresses the semantic gap: a system that sees only geometry cannot correctly interpret ambiguous surfaces such as wet grass, deep silt, or construction-zone debris, and only a system that understands meaning can.

The Architecture of VLM Logic in Gen 3 Firmware

The Gen 3 firmware moves from a purely geometric world model to a semantic reasoning framework. Traditional AI systems treat the world as a 3D grid of voxels, small cubes used to represent space. In this system, each voxel is marked as either occupied or empty. This method works for avoiding solid obstacles such as concrete walls; however, binary logic does not help when a Cybertruck must decide whether a muddy path is safe or whether a puddle hides a pothole.
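The limitation of binary occupancy can be sketched in a few lines of Python. This is a toy illustration, not Tesla's representation: the `OccupancyGrid` class, its methods, and the three-voxel column height are all hypothetical. It shows that a flat mud patch and a water-filled pothole leave no occupied voxels, so the grid rates them exactly like dry pavement.

```python
from dataclasses import dataclass, field


@dataclass
class OccupancyGrid:
    """Hypothetical binary voxel grid: each voxel is either occupied or empty."""
    occupied: set[tuple[int, int, int]] = field(default_factory=set)

    def mark(self, x: int, y: int, z: int) -> None:
        """Mark one voxel as occupied."""
        self.occupied.add((x, y, z))

    def column_clear(self, x: int, y: int, height: int = 3) -> bool:
        """Treat a column as drivable if no voxel above ground level (z >= 1) is occupied."""
        return all((x, y, z) not in self.occupied for z in range(1, height))


grid = OccupancyGrid()
for z in range(3):
    grid.mark(4, 0, z)  # a concrete wall at x = 4, three voxels tall

# Deep mud at x = 2 and a puddle hiding a pothole at x = 3 are both flat:
# nothing rises above ground level, so neither one marks any voxel.
print(grid.column_clear(4, 0))  # False: the wall blocks the path
print(grid.column_clear(2, 0))  # True: the mud looks like dry asphalt
print(grid.column_clear(3, 0))  # True: the pothole is invisible
```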

With VLM logic, the vehicle's onboard AI computer processes camera feeds through a multimodal transformer, a neural network model that can interpret multiple types of data. The vehicle first describes the scene in a latent linguistic space, an internal, language-like representation the AI uses to understand context before executing a command. For example, instead of seeing only a low-level descriptor such as "brown voxel at XYZ," the VLM identifies "deep, saturated mud with standing water and a high risk of traction loss." This semantic label triggers specific terrain-adaptation profiles that adjust in real time: suspension damping (how the shock absorbers respond), torque distribution (how motor power is sent to each wheel), and tire slip targets (the optimal amount of wheel spin for traction).
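A minimal sketch of the step from semantic description to adaptation profile follows. Everything in it is an assumption for illustration: the `TerrainProfile` fields, the keyword table, and the numeric values are hypothetical. A real system would presumably act on the latent representation directly rather than on keyword matches in English text.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class TerrainProfile:
    """Hypothetical terrain-adaptation profile; fields and values are illustrative."""
    name: str
    compression_damping: float  # 0.0 = softest, 1.0 = firmest
    front_torque_share: float   # fraction of drive torque sent to the front motor
    slip_target: float          # target wheel slip ratio for traction control


# Checked in order, lowest-grip surfaces first, so the most cautious match wins.
PROFILES = [
    ("ice", TerrainProfile("ultra-low-grip", 0.5, 0.50, 0.03)),
    ("mud", TerrainProfile("mud", 0.3, 0.45, 0.20)),
    ("sand", TerrainProfile("sand", 0.3, 0.40, 0.25)),
    ("gravel", TerrainProfile("gravel", 0.4, 0.45, 0.12)),
]
DEFAULT_PROFILE = TerrainProfile("road", 0.7, 0.35, 0.08)


def select_profile(scene_description: str) -> TerrainProfile:
    """Map a natural-language scene description to a terrain profile."""
    words = set(re.findall(r"[a-z]+", scene_description.lower()))
    for keyword, profile in PROFILES:
        if keyword in words:
            return profile
    return DEFAULT_PROFILE


profile = select_profile("Deep, saturated mud with standing water and a high risk of traction loss")
print(profile.name)  # mud
```

Ordering the table from lowest to highest grip is a deliberately conservative choice: if a description mentions both ice and gravel, the sketch picks the more cautious profile.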

Terrain Adaptation: The Physics of Semantic Intelligence

Terrain adaptation, powered by VLM logic, comes to the Cybertruck and Cyberbeast models with this update and activates when the firmware detects a shift from asphalt to an unstructured surface. VLM logic responds promptly, acting as a strategic planner for the vehicle's air suspension system, which controls ride height and stiffness, and for the powertrain, which manages power distribution to the wheels.

- Predictive Damping: Traditional systems respond only after the vehicle hits a bump. VLM logic instead analyzes the texture and appearance of the terrain ahead. When the model detects surfaces such as loose gravel, small shifting stones, washboarding, or uneven repair patches, the firmware softens compression damping on the Gen 3 struts. This adjustment maintains tire contact-patch integrity, keeping the tire fully in contact with the road surface.

- Dynamic Torque Vectoring: On slippery or uneven surfaces, VLM logic informs the Tri-Motor Drive Unit, which distributes power among the motors. It applies an anticipatory torque bias, shifting power to the wheels most likely to need it before traction is lost. The vehicle maintains momentum through sand or snow with less intervention from the traditional traction control system, which reacts to wheel slip after it occurs by braking or limiting power.

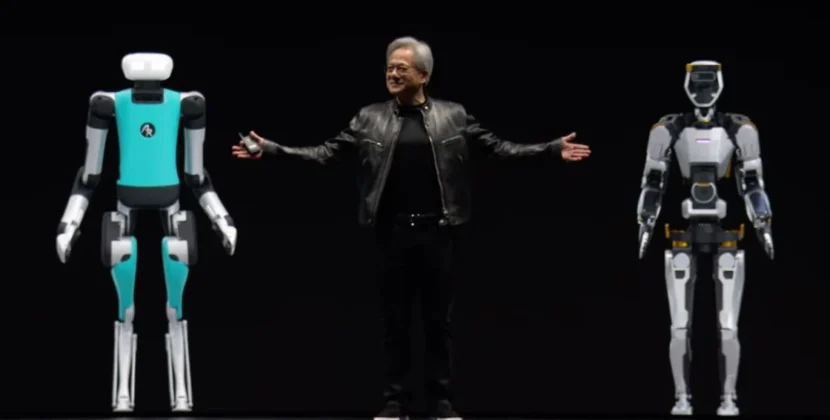

- Micro-Adjustments in Gait: This common-sense logic is not limited to vehicles. The Gen 3 firmware is a unified software platform that also powers the Optimus Gen 3 humanoid robot. With VLM terrain adaptation, the robot moves confidently across cluttered factory floors, using its vision system to detect hazards. For example, it recognizes a pile of oily rags as a slip hazard and adjusts its center of mass before its foot touches the pile.
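The two vehicle behaviors above, predictive damping and anticipatory torque bias, can be sketched as follows. This is an illustration under stated assumptions, not Tesla's control logic: the patch labels, the 50 ms damper latency, the motor names (one front and two rear motors, matching a tri-motor layout), and the rule of splitting torque in proportion to predicted grip are all hypothetical. The key idea is timing: commands are computed from what the VLM sees ahead, before the tire reaches the surface.

```python
from dataclasses import dataclass
from typing import Dict, Optional

ROUGH_LABELS = {"loose gravel", "shifting stones", "washboarding", "repair patch"}
DAMPER_LATENCY_S = 0.05  # assumed time for a damper valve to change state


@dataclass
class PreviewPatch:
    """A terrain patch the VLM has labeled ahead of the vehicle (hypothetical)."""
    distance_m: float  # distance from the front axle to the patch
    label: str


def soften_damping_at(patch: PreviewPatch, speed_mps: float) -> Optional[float]:
    """Return seconds from now at which to soften compression damping, or None.

    The command is issued one actuator latency before the tire reaches the patch,
    so the damper is already soft at contact instead of reacting afterward.
    """
    if patch.label not in ROUGH_LABELS or speed_mps <= 0:
        return None
    time_to_patch = patch.distance_m / speed_mps
    return max(0.0, time_to_patch - DAMPER_LATENCY_S)


def anticipatory_torque_split(total_nm: float, predicted_grip: Dict[str, float]) -> Dict[str, float]:
    """Split torque across motors in proportion to each one's predicted grip."""
    total_grip = sum(predicted_grip.values())
    if total_grip <= 0:
        return {motor: 0.0 for motor in predicted_grip}
    return {motor: total_nm * grip / total_grip for motor, grip in predicted_grip.items()}


# Washboarding 10 m ahead at 20 m/s: soften roughly 0.45 s from now.
print(soften_damping_at(PreviewPatch(10.0, "washboarding"), 20.0))
# The VLM predicts the front wheels reach deep sand first, so the front motor gets less torque.
print(anticipatory_torque_split(900.0, {"front": 0.3, "rear_left": 0.6, "rear_right": 0.6}))
```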

Embodied AI and the Sovereign Logic Guardrail

A critical component of this update is the concept of Sovereign AI: Tesla runs these massive vision language models entirely on-device, bypassing the need for cloud-based inference. As a result, terrain adaptation stays functional even in remote, off-grid areas where LTE or Starlink connectivity is intermittent.

To achieve this, the Gen 3 firmware uses a technique called optimized speculative decoding, together with compressed, lower-precision numbers that make AI computation more efficient. The AI computer runs a smaller, faster draft model for frequent, repetitive driving tasks. The larger vision language model, acting as the verifier, intermittently checks the meaning and context of what the car sees in its surroundings. If the VLM detects a complex terrain change that the draft model missed, it overrides the driving path with a safe-state command, directing the car to pause or take another safe action. This dual-model approach provides a safety guardrail that single-model, end-to-end systems lack.
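The draft-and-verify guardrail can be sketched as below. Note that this is an adaptation rather than speculative decoding as used for language models, where a large model checks a small model's proposed tokens in a single pass and accepts them up to the first one it rejects. Here, following the paragraph's description, a fast draft planner runs every frame and a slower verifier periodically re-reads the terrain. The function names, the `SAFE_STATE` command, and the check interval are hypothetical, and the stub models stand in for real networks.

```python
from dataclasses import dataclass
from typing import Callable, List

SAFE_STATE = "SAFE_STATE"  # hypothetical command: pause or take another safe action


@dataclass
class DraftPlan:
    command: str  # e.g. "continue"
    terrain: str  # the draft model's terrain read


def guarded_drive(frames: List[str],
                  draft: Callable[[str], DraftPlan],
                  verifier: Callable[[str], str],
                  verify_every: int = 2) -> List[str]:
    """Run the fast draft model on every frame; every `verify_every` frames,
    let the slower verifier re-read the terrain and override on disagreement."""
    commands = []
    overridden = False
    for i, frame in enumerate(frames):
        plan = draft(frame)
        if i % verify_every == 0:
            # The verifier's verdict holds until its next check.
            overridden = verifier(frame) != plan.terrain
        commands.append(SAFE_STATE if overridden else plan.command)
    return commands


# Stub models: the draft always assumes asphalt; the verifier actually reads the frame.
def fast_draft(frame: str) -> DraftPlan:
    return DraftPlan(command="continue", terrain="asphalt")


def vlm_verifier(frame: str) -> str:
    return "mud" if "mud" in frame else "asphalt"


frames = ["asphalt", "asphalt", "mud ahead", "mud ahead"]
print(guarded_drive(frames, fast_draft, vlm_verifier))
# ['continue', 'continue', 'SAFE_STATE', 'SAFE_STATE']
```

Latching the override until the verifier's next check is a design choice in this sketch: without it, frames between checks would fall back to the draft model's unverified plan.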

The Role of Generative World Models in Training

VLM logic for terrain adaptation became effective through millions of miles of synthetic (computer-generated) off-road training, made possible by Tesla's neural world simulator. This generative AI program creates hyper-realistic three-dimensional environments that teach the VLM how different terrain types behave.

By simulating the physics of mud, sand, water, and ice, Tesla's engineers exposed the VLM to corner cases too dangerous or rare to test in the real world. This training enables the VLM to reason that a dark patch on a frozen road is likely black ice and to trigger an immediate shift in the terrain-adaptation profile to ultra-low-grip mode.
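One common way to emphasize rare corner cases in synthetic data is weighted sampling, sketched below. The article does not say how Tesla's simulator selects scenarios; the sampling weights and the friction ranges (rough, approximate tire-road friction coefficients) are illustrative assumptions, and `SyntheticScene` and `sample_scenes` are hypothetical names.

```python
import random
from dataclasses import dataclass
from typing import List

# Rough, illustrative tire-road friction ranges (not Tesla data).
FRICTION_RANGES = {
    "dry asphalt": (0.7, 0.9),
    "wet asphalt": (0.4, 0.6),
    "sand": (0.3, 0.5),
    "mud": (0.2, 0.4),
    "black ice": (0.05, 0.15),
}

# Hypothetical sampling weights: rare, dangerous surfaces are deliberately oversampled.
TRAINING_WEIGHTS = {
    "dry asphalt": 1.0,
    "wet asphalt": 2.0,
    "sand": 2.0,
    "mud": 2.0,
    "black ice": 4.0,
}


@dataclass
class SyntheticScene:
    surface: str
    friction: float


def sample_scenes(n: int, seed: int = 0) -> List[SyntheticScene]:
    """Draw n synthetic scenes, choosing surfaces by weight and friction within each range."""
    rng = random.Random(seed)
    surfaces = list(TRAINING_WEIGHTS)
    weights = [TRAINING_WEIGHTS[s] for s in surfaces]
    scenes = []
    for surface in rng.choices(surfaces, weights=weights, k=n):
        low, high = FRICTION_RANGES[surface]
        scenes.append(SyntheticScene(surface, rng.uniform(low, high)))
    return scenes


scenes = sample_scenes(1000)
ice_share = sum(s.surface == "black ice" for s in scenes) / len(scenes)
print(f"black ice share: {ice_share:.0%}")
```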

Developer and Power User Implications

For the technical community, Tesla's Gen 3 firmware includes a new vision debug mode, accessible through the service menu, that displays the VLM's internal monologue. In real time, users see descriptive labels such as:

- "surface: wet cobblestone", indicating the detected surface type

- "traction est: 0.45", the estimated tire traction

- "adaptation: soft rebound active", the current suspension mode

This transparency is a significant step for AI interpretability. Instead of leaving drivers to wonder why a vehicle slowed down or changed course, VLM logic provides a clear semantic reason, which builds user trust. It also lets Tesla's fleet learning system flag cases where the VLM's reading of the terrain differs from the human driver's actions, creating a cycle of continuous improvement.
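The fleet-learning flag described above can be sketched as a simple disagreement test. The thresholds, the `FleetSnapshot` fields, and the use of driver braking as a proxy for caution are hypothetical simplifications; a real system would presumably compare far richer signals.

```python
from dataclasses import dataclass

LOW_TRACTION = 0.5   # hypothetical threshold on the VLM's traction estimate (0 to 1)
HARD_BRAKING = 3.0   # hypothetical threshold on driver deceleration, in m/s^2


@dataclass
class FleetSnapshot:
    surface: str              # VLM surface label, e.g. "wet cobblestone"
    traction_estimate: float  # VLM traction estimate, 0.0 (none) to 1.0 (full)
    driver_decel_mps2: float  # how hard the human driver is slowing down


def should_flag(s: FleetSnapshot) -> bool:
    """Flag a disagreement: the VLM is cautious while the driver is not, or vice versa."""
    vlm_cautious = s.traction_estimate < LOW_TRACTION
    driver_cautious = s.driver_decel_mps2 >= HARD_BRAKING
    return vlm_cautious != driver_cautious


print(should_flag(FleetSnapshot("wet cobblestone", 0.45, 0.5)))  # True: VLM expects low grip, driver keeps going
print(should_flag(FleetSnapshot("dry asphalt", 0.9, 0.2)))       # False: both treat the road as fine
```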

Final Thoughts

The integration of VLM logic for terrain adaptation in Tesla's Gen 3 firmware marks the end of the era of specialized systems. We are no longer looking at just a car that drives or a robot that walks, but at a unified embodied intelligence that understands the physical world semantically. As the firmware continues to roll out globally through the first half of 2026, the gap between human and machine perception will continue to close. Whether navigating a snowy mountain pass in a Cybertruck or a busy warehouse as an autonomous robot, the ability to see, think, and adapt to terrain is the final piece of the autonomy puzzle.

Source: Firmware Version 23.8.2 for the Tesla Gen 3 Wall Connector