We are launching Meta AI Support Assistant worldwide on Facebook and Instagram. This AI-powered tool provides 24/7 automated assistance for account issues, such as real-time password updates and profile settings adjustments.

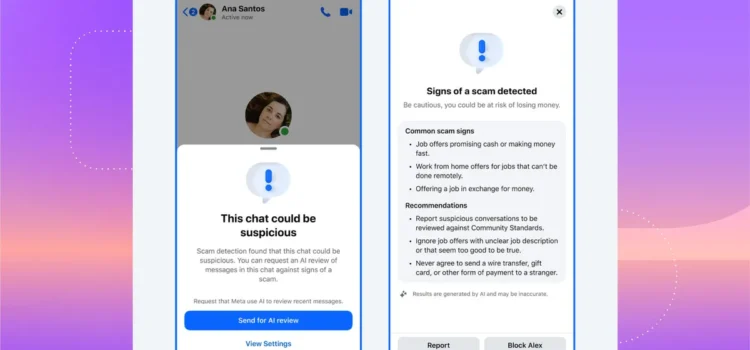

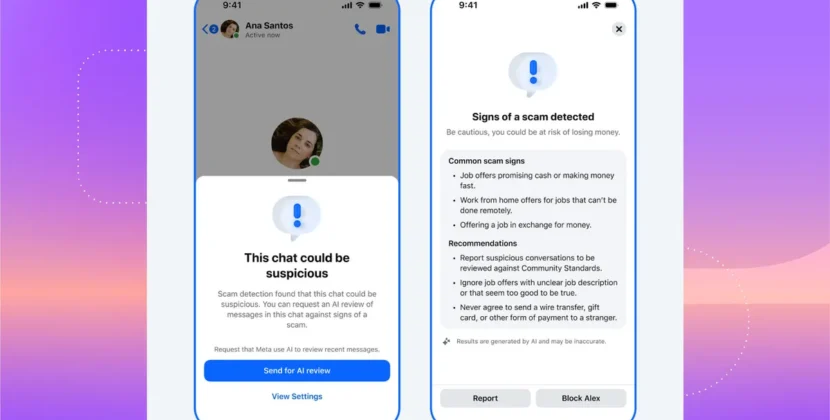

We plan to introduce progressively advanced AI systems across our apps to strengthen our enforcement capabilities. These systems are designed to quickly identify and remove serious content violations, such as scams and illegal material, by analyzing patterns, behaviors, and content for signs of misuse.

Today, we are launching new AI tools for support and content enforcement to improve your experience on our apps as technology advances. We are using AI to provide you with reliable help when you need it and to detect serious violations, such as scams, more quickly and accurately, while making fewer mistakes.

Introducing the Meta AI Support Assistant On Facebook And Instagram

In December, we previewed the Meta AI Support Assistant. This tool provides reliable 24/7 support for nearly any issue. Now we are rolling it out wherever Meta AI is available, in the Facebook and Instagram apps for iOS and Android and in the desktop Help Centers. We’ve added even more capabilities and ways to help. (Updated March 20, 2026, at 1:30 p.m. PT.)

When you have an issue, you need a solution, not just a suggestion. The new Meta AI Support Assistant is designed to help you resolve account problems from start to finish. It offers answers to any question, such as how notification settings or new features work, and it can also take action for you on a growing set of requests directly within Facebook and, in the future, on Instagram, including:

- Reporting scams, impersonation accounts, or problematic content

- Seeing why your account was removed, managing appeals, and tracking updates

- Managing your privacy settings

- Resetting passwords

- Updating profile settings

Getting support should be simple. The Meta AI Support Assistant is built into Facebook and Instagram, so support is just a tap away, with responses in under five seconds—dramatically reducing wait times compared to traditional Help Center searches or seeking answers on external websites.

The Meta AI Support Assistant marks progress in our app support. Most feedback has been positive. It’s now available in all languages supported by Facebook and Instagram.

We started rolling out the Support Assistant to help people who have trouble logging into their Facebook and Instagram accounts. Right now, it’s available in select cases in the US and Canada. We plan to expand to more countries and support more account types and scenarios soon. We are continuing to invest in AI-powered tools to make support more accessible, reliable, and effective. As more people use the Meta AI Support Assistant and the technology improves, we will continue to refine it. Learn more about the Meta AI Support Assistant here.

Improving Content Enforcement With More Advanced AI

Last year, we shared positive results from changes we made to reduce mistakes and focus our proactive enforcement on the most serious illegal content, such as terrorism, child exploitation, drugs, fraud, and scams. We also explained that we’re testing more advanced AI systems for content enforcement. These new systems can catch more violations accurately, stop more scams, and respond faster to real-world events, all while making fewer mistakes.

Early tests of these systems have been promising. They can:

- Increase detection rates for scam attempts that trick people into revealing login details. AI algorithms have helped identify and address up to 5,000 previously undetected scam attempts daily.

- Identify and prevent additional accounts from impersonating celebrities and other high‑profile people, helping us reduce reports about the most impersonated celebrities by over 80%.

- Double the detection of violent or explicit sexual solicitation using AI models, while also reducing missed violations by more than 60% through improved accuracy.

- Prevent account takeovers by monitoring access patterns. If an account is accessed from an unusual location, or if the password or profile is changed unexpectedly, AI flags this behavior as potentially harmful.

- Detect fake websites imitating legitimate ones by analyzing suspicious URLs, logos, and pricing patterns. After broader testing, AI helped reduce user exposure to scam ads and other severe violations by 7%, providing stronger user and brand protection.

These advanced AI systems can do all of this in languages spoken by 98% of people online. This is much more than the previous coverage of about 80 languages. They can also increase capacity in any language as needed. They adapt to understand cultural traits, including niche subcultures, rapidly changing and regionally specific code words, emoji meanings, and slang.

A Smarter Approach

In the next few years, we’ll start using these advanced AI systems across our apps once they consistently outperform our current content enforcement methods. Our approach will change as we make this shift. We will rely less on third-party vendors for content enforcement and focus on strengthening our own systems and teams. We will still have people reviewing content, but these AI systems will handle tasks better suited to technology, such as repetitive reviews of graphic content or areas where bad actors are constantly changing their tactics, like illicit drug sales or scams.

Even as we use new technology to expand what we can do, people will stay at the center of our approach. AI helps us move faster and work at a larger scale, but it does not replace human decision-making. Instead, it helps us apply that judgment more consistently across billions of pieces of content. Experts will design, train, oversee, and evaluate our AI systems, measure their performance, and make the most complex and important decisions. For example, people will still play a key role in making the highest-risk and most critical decisions, such as appeals of account disablement or reports to law enforcement.

We thoroughly test each AI system, install safeguards, and evaluate performance to minimize bias and ensure consistency and accuracy. With AI-driven tools like the Meta AI Support Assistant, people can more easily report violations and appeal errors, letting AI handle scalable detection while experts manage nuanced decisions. This combined approach leverages both advanced AI and human expertise for optimal support and safety.

Source: Boosting Your Support and Safety on Meta’s Apps With AI