Google Cloud has launched the Vertex AI Evaluation Suite. This toolkit measures how well digital agents perform and how reliable they are. As more businesses use autonomous digital agents for complex customer and internal tasks, solid benchmarking is needed. The suite offers a standard way to test how well these digital agents follow instructions and use external data. By uniting these evaluation tools in the cloud, Google Cloud aims to replace subjective impressions with objective, repeatable technical audits.

Quantifying Digital Performance and Precision

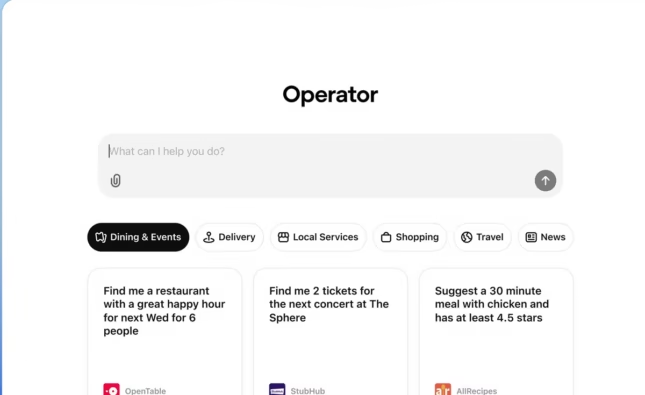

The main feature of the new evaluation suite is “instruction following”. It measures how accurately a system completes multi-step requests. Previously, engineers had to check these systems manually, which took a lot of time. Now, the suite automates this process. It compares the system’s results to a set of predefined “ground truth” datasets. It gives a score based on relevance, accuracy, and safety. This helps developers pinpoint where a process fails during a complex task.
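As a rough illustration of comparing results against ground truth, the sketch below scores a multi-step run and reports which step failed. It is not the suite's API: `StepResult`, `score_steps`, and the sample steps are hypothetical names invented for this example, and it covers only exact-match accuracy, not the relevance or safety dimensions mentioned above.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    """One step of a multi-step request (hypothetical structure)."""
    step: str
    expected: str  # ground-truth outcome for this step
    actual: str    # what the agent actually produced


def score_steps(results):
    """Return (fraction of steps matching ground truth, names of failed steps)."""
    failed = [
        r.step
        for r in results
        if r.actual.strip().lower() != r.expected.strip().lower()
    ]
    score = 1.0 - len(failed) / len(results) if results else 0.0
    return score, failed


# Illustrative data only; not taken from any real evaluation run.
run = [
    StepResult("look_up_order", "order 1042 found", "Order 1042 found"),
    StepResult("check_refund_policy", "eligible", "eligible"),
    StepResult("issue_refund", "refund issued", "ticket escalated"),
]
score, failed = score_steps(run)
print(f"score={score:.2f} failed={failed}")
```

Reporting the failed step names alongside the score is what lets a developer see where in the task the process broke, rather than only that it did.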

The suite also adds “contextual recall” testing. This ensures systems use the right information from their knowledge base. This is critical in areas such as finance or legal services, where rules change frequently. The tools check whether a digital assistant is using the latest policy documents and flag cases where it pulls from outdated sources by mistake. Finding defects early helps companies avoid spreading wrong information. This detailed oversight acts as a safety net for large enterprise projects.
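One way to picture a contextual recall check is as set arithmetic over document identifiers. The sketch below is an assumption about how such a check could work, not a description of the suite itself; the function name `contextual_recall` and the document IDs are hypothetical.

```python
def contextual_recall(retrieved, expected, superseded):
    """Return (share of expected documents retrieved, outdated documents used).

    retrieved:  document IDs the assistant actually drew on
    expected:   document IDs it should have used (ground truth)
    superseded: document IDs known to be out of date
    """
    retrieved_set = set(retrieved)
    expected_set = set(expected)
    recall = (
        len(retrieved_set & expected_set) / len(expected_set)
        if expected_set
        else 1.0
    )
    outdated = sorted(retrieved_set & set(superseded))
    return recall, outdated


# Illustrative IDs only.
recall, outdated = contextual_recall(
    retrieved=["refund-policy-v3", "fees-v1"],
    expected=["refund-policy-v3", "fees-v2"],
    superseded=["refund-policy-v2", "fees-v1"],
)
print(recall, outdated)
```

Here the assistant found one of the two expected documents and relied on one superseded fee schedule, which is the kind of case the paragraph above describes as needing a flag.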

Rapid Prototyping and Iterative Benchmarking

The Vertex AI Evaluation Suite speeds up the move from development to production. By enabling repeated benchmarking during testing, it lets developers run thousands of simulated interactions much faster than manual reviews would allow. These tests check how well the system handles unusual situations and tricky problems. The platform gives a detailed side-by-side comparison of different software versions. This makes it easier for teams to choose the best setup for speed and accuracy.

Integration with existing continuous integration and deployment (CI/CD) workflows is a key feature of the April 2026 update. Now, whenever a developer updates the code, the evaluation suite automatically runs a new set of tests. If the performance score falls below a set level, the system can block the update from going live. This automated gatekeeping ensures only stable, verified versions are deployed, reducing the risk of errors that could upset customers or compromise data integrity.
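The gating logic can be sketched in a few lines. This is a generic threshold gate under assumed values, not the suite's actual integration: `gate_exit_code`, the score of 0.82, and the threshold of 0.90 are all hypothetical.

```python
def gate_exit_code(score: float, threshold: float) -> int:
    """Return a process exit code: 0 lets the deployment proceed, 1 blocks it."""
    return 0 if score >= threshold else 1


# Assumed values for illustration; a real pipeline would read the score
# from the evaluation run and the threshold from its own configuration.
exit_code = gate_exit_code(score=0.82, threshold=0.90)
print("blocked" if exit_code else "allowed")
```

Most CI systems treat a non-zero exit code as a failed step, so a pipeline script could pass this value to `sys.exit` to stop the release.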

Governance and Observability in the Cloud

The release focuses on visibility, introducing the new agentic health dashboard. This tool provides a real-time view of how virtual assistants are working across the company’s network. It tracks reasoning latency, the time a system takes to process a request and generate a plan. If an agent starts acting strangely or slows down, administrators get instant alerts. This kind of observability helps teams fix faults early before small problems become bigger ones.
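A slowdown alert of the kind described could, in principle, compare recent reasoning latency with a known baseline. The sketch below assumes a simple rolling-average rule; `latency_alert`, the window of five samples, and the factor of 2 are hypothetical choices, not documented dashboard behavior.

```python
from statistics import mean


def latency_alert(samples_ms, baseline_ms, factor=2.0, window=5):
    """Return True when the average of the last `window` samples
    exceeds `factor` times the baseline latency."""
    recent = samples_ms[-window:]
    if len(recent) < window:
        return False  # not enough data to judge yet
    return mean(recent) > factor * baseline_ms


# Illustrative latencies in milliseconds.
steady = [100, 110, 90, 105, 95]
slowing = [100, 300, 320, 310, 290, 305]
print(latency_alert(steady, baseline_ms=100))
print(latency_alert(slowing, baseline_ms=100))
```

Averaging over a window rather than reacting to a single sample keeps one slow request from triggering an alert.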

This suite also comes with bias and safety guardrails built into its evaluation process. These tools can automatically scan for outputs that could break company policies or ethical rules. They mark anything unusual or possibly harmful for people to review. This keeps the digital agents aligned with the organization’s values. By delivering a clear audit trail for every automated decision, Google Cloud helps businesses meet new international transparency rules.

Scaling Infrastructure for Universal Intelligence

To handle the heavy computing needs of these evaluations, the suite uses the latest TPU and GPU hardware clusters. This lets it run even the most elaborate digital agent simulations almost instantly. The evaluation suite can adjust its testing power to fit each project. A small business might run a few hundred tests daily, while a global retailer could simulate millions of digital agent interactions every hour.

The rollout features a Library of Templates with ready-made testing scenarios for different industries. There are special modules for retail, healthcare, and telecommunications, each having its own key performance indicators. This speeds up project start-ups, so teams can begin auditing their systems right away. By standardizing these metrics, Google Cloud is helping the industry speak the same language about software reliability. This supports partners and customers in building a better-connected and more reliable digital infrastructure.

The Crystalline Watchman of Integrity

As we watch these digital synapses fire faster across our screens, we are witnessing the rise of a new kind of quiet protector: the cloud, whose increasingly attentive architecture keeps up tirelessly with our need for certainty. We are moving toward a future where errors are simply technical challenges handled by clear logic. Over time, worries about mistakes or hallucinations may fade, replaced by trust in systems that handle complex tasks with integrity. One day, we may realize that much of our world is managed by reliable and unseen technology. The machine is learning to monitor itself, serving as a steady and dependable partner.

Source: Vertex AI offers new ways to build and manage multi-agent systems