News Summary

- The NVIDIA Vera Rubin platform is leading the way into the next AI era with:

- Vera Rubin NVL72 GPU racks

- Vera CPU racks, Groq 3 LPX racks for faster AI answers, BlueField-4 STX storage racks for data, and Spectrum-6 SPX Ethernet racks for connecting everything.

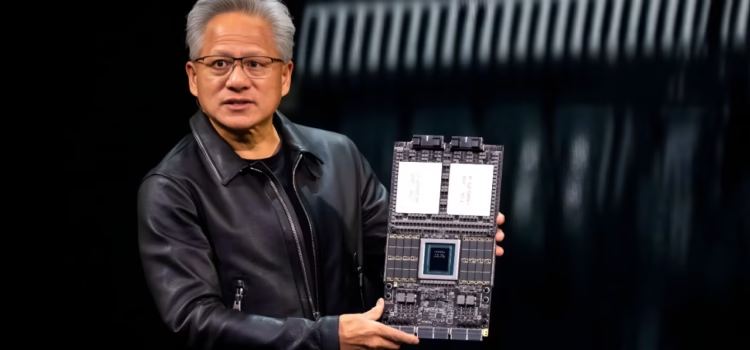

At GTC, NVIDIA announced that the NVIDIA Vera Rubin platform is launching the next phase of agentic AI, with seven new chips now in full production to help scale the world’s largest AI factories.

The platform brings together the Vera CPU, the Rubin GPU, the NVLink 6 switch, the ConnectX-9 SuperNIC, the BlueField-4 DPU, the Spectrum-6 Ethernet switch, and the new Groq 3 LPU for fast inference. All these parts work together as one powerful computer for every task, from training to giving instant answers.

“Vera Rubin is a generational leap: seven breakthrough chips, five racks, one giant supercomputer, all built to power every phase of AI,” said Jensen Huang, founder and CEO of NVIDIA. “The agentic AI inflection point has arrived, with Vera Rubin kicking off one of the greatest infrastructure buildouts in history.”

“Enterprises and developers are using Claude for more intricate reasoning, agentic workflows, and mission-critical decisions that require infrastructure that can keep up,” said Dario Amodei, CEO and co-founder of Anthropic. “NVIDIA’s Vera Rubin platform provides the compute, networking, and system design we need to continue delivering safe and reliable solutions for our customers.”

“NVIDIA infrastructure is the foundation that lets us keep advancing AI,” said Sam Altman, CEO of OpenAI. “With NVIDIA Vera Rubin, we’ll run more powerful models and agents at scale, delivering faster, more reliable systems to hundreds of millions of people.”

Shift To Pod-Scale Systems

AI tools are evolving rapidly, moving from separate components to integrated systems and large-scale AI centers. These changes make AI faster and cheaper for all types of organizations. They also make AI easier to use and less power-hungry.

With close integration among computing, networking, and storage, and support from more than 80 partners, Vera Rubin is a platform for large-scale AI systems: it brings many AI racks together into a single system.

NVIDIA Vera Rubin NVL72

The Vera Rubin NVL72 combines 72 Rubin GPUs and 36 Vera CPUs connected by NVLink 6, plus ConnectX-9 SuperNICs and BlueField-4 DPUs. This setup trains large mixture-of-experts models using only a quarter of the GPUs needed on the NVIDIA Blackwell platform, and delivers up to 10× higher inference throughput per watt at one-tenth of the cost per token.

NVL72 is built for large-scale AI factories worldwide. It works smoothly with NVIDIA Quantum-X800 InfiniBand and Spectrum-X Ethernet to keep GPU clusters highly utilized while reducing training time and overall costs.
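The rack figures above lend themselves to a quick back-of-the-envelope check. The following is a minimal sketch, not a sizing tool: the per-rack counts and the "one quarter of the GPUs" ratio come from the text, while the 4,608-GPU Blackwell job and all function names are hypothetical and chosen only for illustration. The one-quarter ratio is a vendor claim for large mixture-of-experts training, so treat the result as a best case.

```python
import math

# Per-rack counts quoted in the text for one Vera Rubin NVL72 rack.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36


def gpus_per_cpu() -> float:
    """Ratio of Rubin GPUs to Vera CPUs inside one NVL72 rack."""
    return GPUS_PER_RACK / CPUS_PER_RACK


def rubin_gpus_for_training(blackwell_gpus: int) -> int:
    """GPU count if a job needs one quarter of the Blackwell GPUs (claimed ratio)."""
    return math.ceil(blackwell_gpus / 4)


def racks_needed(gpus: int) -> int:
    """Whole NVL72 racks required to host the given number of GPUs."""
    return math.ceil(gpus / GPUS_PER_RACK)


# Hypothetical example: a training job sized at 4,608 Blackwell GPUs.
hypothetical_blackwell_gpus = 4608
rubin_gpus = rubin_gpus_for_training(hypothetical_blackwell_gpus)  # 1152
racks = racks_needed(rubin_gpus)                                   # 16
print(f"{rubin_gpus} Rubin GPUs in {racks} NVL72 racks, "
      f"{gpus_per_cpu():.0f} GPUs per CPU")
```

Rounding up in both helpers reflects that GPUs and racks come in whole units, so a job that needs 73 GPUs occupies two racks.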

NVIDIA Vera CPU Rack

Testing AI often requires many CPUs to verify results from GPU systems.

The Vera CPU rack is compact and uses liquid cooling. It has 256 CPUs, making it both powerful and energy-saving for running big AI projects.

With Spectrum-X networking, CPU racks stay in sync across the AI center. Together with GPU racks, they help AI run faster and more efficiently than with older CPUs.

NVIDIA Groq 3 LPX Rack

NVIDIA Groq 3 LPX makes AI much faster. LPX works with Vera Rubin to give up to 35% more output for the same power and up to 10× more business value with huge models.

Many LPUs together act as one big processor for fast answers. The LPX rack has 256 LPUs, ample built-in memory, and high data speeds. Used with NVL72, the LPUs and Rubin GPUs share the job of solving each part of AI tasks.

LPX is designed for large AI models with substantial data. It makes computing more efficient and lets providers offer better AI services. It is fully liquid-cooled and will be part of the new Vera Rubin AI centers later this year.

NVIDIA BlueField-4 STX Storage Rack

The BlueField‑4 STX system is made for AI storage, helping expand GPU memory. Powered by BlueField‑4, it provides a fast way to store and find large amounts of AI data.

NVIDIA DOCA Memos is a new DOCA framework that enhances BlueField for storage, allowing dedicated KV cache storage processing. This boosts inference throughput by up to 5x and greatly improves power efficiency compared to general-purpose storage. As a result, the system provides pod-wide context to enable faster multi-turn interactions with AI agents, more scalable AI services, and better overall infrastructure utilization.

“The BlueField-4 STX rack-scale context memory storage system will enable a critical performance boost needed to exponentially scale our agentic AI efforts,” said Timothee Lacroix, co-founder and chief technology officer of Mistral AI. “By delivering a new storage tier purpose-built for AI agents and memory, STX is ideally positioned to ensure our models can maintain coherence and speed when reasoning across large datasets.”

NVIDIA Spectrum-6 SPX Ethernet Rack

Spectrum-6 SPX Ethernet moves data quickly inside AI centers. It can be used with other fast network switches to provide quick, reliable connections between systems.

Spectrum-X Ethernet Photonics uses light to transmit data, making it five times more energy efficient and ten times more powerful than conventional methods.

Improving Resiliency and Energy Efficiency

NVIDIA and its partners announced the DSX platform for Vera Rubin. DSX Max-Q enables an AI center to run 30% more systems in the same power envelope. DSX Flex helps AI centers use unused grid power.

By closely integrating compute, networking, storage, power, and cooling, the architecture boosts energy efficiency and helps AI factories scale up under constant dense workloads with maximum uptime.
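The DSX Max-Q figure can be read as a statement about average power per system. The sketch below works through that arithmetic under a simplifying assumption not made in the source: that the power envelope is fixed and divided evenly across systems. The function names and the 100-system baseline are hypothetical.

```python
def systems_in_envelope(baseline_systems: int, uplift_percent: int = 30) -> int:
    """Systems that fit in the same power envelope after the quoted uplift.

    Integer arithmetic avoids floating-point rounding surprises.
    """
    return baseline_systems * (100 + uplift_percent) // 100


def implied_power_fraction(uplift_percent: int = 30) -> float:
    """Average per-system power relative to baseline, assuming a fixed envelope
    shared evenly across systems (an assumption, not a published figure)."""
    return 100 / (100 + uplift_percent)


# Hypothetical example: a site whose power envelope holds 100 baseline systems.
print(systems_in_envelope(100))             # 130
print(round(implied_power_fraction(), 3))   # 0.769
```

Under that assumption, fitting 30% more systems into the same envelope means each system averages roughly 77% of its baseline power draw.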

Broad Ecosystem Support

Vera Rubin-based products will be available from partners in the second half of this year. This includes top cloud providers like Amazon Web Services, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure, as well as NVIDIA Cloud Partners such as CoreWeave, Crusoe, Lambda, Nebius, Nscale, and Together AI.

Global systems manufacturers like Cisco, Dell Technologies, HPE, Lenovo, and Supermicro are expected to offer a wide range of servers based on Vera Rubin products. Other partners include Aivres, ASUS, Foxconn, GIGABYTE, Inventec, Pegatron, Quanta Cloud Technology (QCT), Wistron, and Wiwynn.

AI labs and leading model developers such as Anthropic, Meta, Mistral AI, and OpenAI plan to use the NVIDIA Vera Rubin platform to train larger, more advanced models. They intend to deliver long-context multimodal systems with lower latency and lower cost than previous GPU generations.