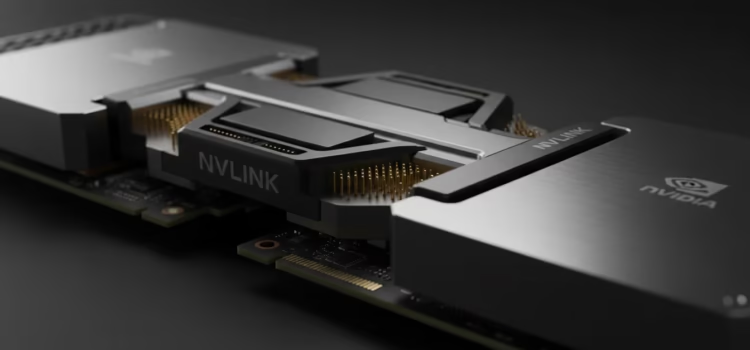

NVIDIA has introduced a new high-performance interconnect standard that links multiple graphics processors into a single, powerful system for local workstations. Launched in April 2026, the hardware and software solution pools the memory and processing cores of several cards, giving professionals substantial parallel processing power without relying on cloud data centers. As a result, a single workstation can handle datasets that were previously too large for a standard desktop. The development meets a growing need for detailed simulations and complex data processing at the network’s edge, and it signals a return to decentralized, high-performance computing for researchers, engineers, and digital artists.

Overcoming The Bottlenecks Of Traditional Bus Architecture

One of the main technical challenges for local multiprocessor systems is communication latency between processors. Standard motherboard slots often can’t move data quickly enough to keep several high-end chips working together efficiently. NVIDIA’s new unified memory bridge addresses this with a dedicated high-speed connection that bypasses the usual system bus. Two or more processors can then share their memory as if it were one large pool, so data no longer has to be copied between cards and each calculation cycle completes much faster.
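To make the idea of a single memory pool concrete, here is a minimal Python sketch of how several cards’ memory could be presented as one flat address space. It assumes identical 24-gigabyte cards and a simple linear layout (card 0’s bytes first, then card 1’s, and so on). The class `PooledMemory` and its methods are hypothetical illustrations, not NVIDIA’s driver interface, and the real bridge may map memory quite differently.

```python
from dataclasses import dataclass

GB = 10**9  # decimal gigabytes, matching the article's units


@dataclass(frozen=True)
class PooledMemory:
    """Hypothetical model: several identical cards exposed as one address space."""

    per_card_bytes: int
    num_cards: int

    @property
    def total_bytes(self) -> int:
        # The pool is simply the sum of every card's memory.
        return self.per_card_bytes * self.num_cards

    def fits(self, request_bytes: int) -> bool:
        # An allocation only needs to fit in the pool, not on any one card.
        return 0 <= request_bytes <= self.total_bytes

    def locate(self, global_offset: int) -> tuple[int, int]:
        """Translate a pooled byte offset into (card index, offset on that card)."""
        if not 0 <= global_offset < self.total_bytes:
            raise IndexError("offset is outside the pooled address space")
        # Linear layout assumption: card i owns bytes [i*per_card, (i+1)*per_card).
        return divmod(global_offset, self.per_card_bytes)


single_card = PooledMemory(per_card_bytes=24 * GB, num_cards=1)
four_cards = PooledMemory(per_card_bytes=24 * GB, num_cards=4)

print(single_card.fits(48 * GB))     # False: 48 GB exceeds one 24 GB card
print(four_cards.fits(48 * GB))      # True: the pool holds 96 GB
print(four_cards.total_bytes // GB)  # 96
print(four_cards.locate(30 * GB))    # (1, 6000000000): 6 GB into the second card
```

Because an offset just past one card’s boundary maps onto the next card, software can treat the pool as contiguous while the hardware routes each access to the right card.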

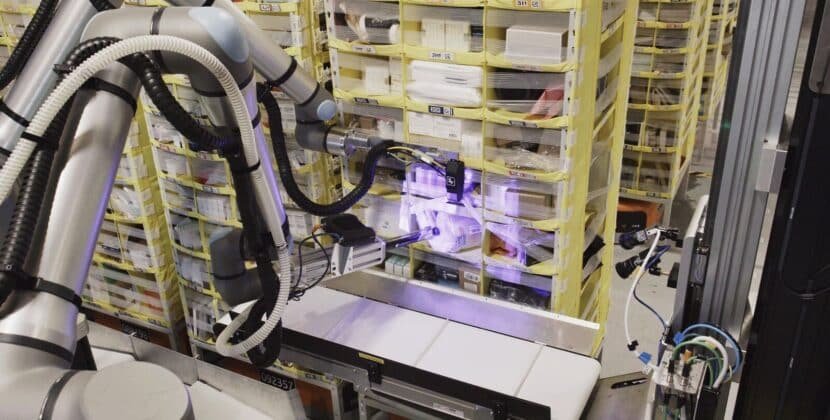

The architectural shift is supported by a new “Dynamic Load Balancer” built into the driver stack. It monitors each core’s workload in real time and automatically shifts tasks so that no single processor holds back the group: if one unit finishes early, it pulls more work from the shared queue to help the others. As a result, performance scales nearly linearly, so adding a second card almost doubles the system’s output and a third almost triples it. This efficiency matters most for tasks such as real-time 3D rendering or processing large genomic datasets.

The bridge also effectively merges the video memory of all linked units. In the past, if a single task required 48 gigabytes of memory but each card had only 24, the task could not run locally. Pooling removes this physical boundary, allowing the software to see a single 96-gigabyte or 192-gigabyte memory space (four or eight 24-gigabyte cards, respectively). For anyone working with high-resolution 3D environments or large-scale statistical models, this allows more complex textures and more detailed physics simulations without running out of memory or crashing.
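The shared-queue behavior described above resembles classic greedy list scheduling, in which an idle worker pulls the next task as soon as it finishes its current one. The Python sketch below compares that pull model with a fixed, up-front split of the same tasks, assuming one card runs at half the speed of the other (for example, because it is throttled). The function names and speed figures are hypothetical; the article does not describe the actual algorithm inside NVIDIA’s driver.

```python
import heapq
from typing import Sequence


def shared_queue_schedule(
    task_costs: Sequence[float], worker_speeds: Sequence[float]
) -> tuple[float, list[int]]:
    """Pull model: whichever worker becomes free first takes the next task.

    Returns (makespan, number of tasks completed by each worker).
    """
    # Min-heap of (time the worker becomes free, worker index).
    free_at = [(0.0, w) for w in range(len(worker_speeds))]
    heapq.heapify(free_at)
    done = [0] * len(worker_speeds)
    makespan = 0.0
    for cost in task_costs:
        start, w = heapq.heappop(free_at)
        end = start + cost / worker_speeds[w]
        done[w] += 1
        makespan = max(makespan, end)
        heapq.heappush(free_at, (end, w))
    return makespan, done


def static_split_makespan(
    task_costs: Sequence[float], worker_speeds: Sequence[float]
) -> float:
    """Baseline: tasks dealt round-robin up front, with no rebalancing."""
    loads = [0.0] * len(worker_speeds)
    for i, cost in enumerate(task_costs):
        w = i % len(worker_speeds)
        loads[w] += cost / worker_speeds[w]
    return max(loads)


tasks = [1.0] * 12
speeds = [2.0, 1.0]  # hypothetical: card 0 is twice as fast as card 1

print(shared_queue_schedule(tasks, speeds))  # (4.0, [8, 4])
print(static_split_makespan(tasks, speeds))  # 6.0
```

With the shared queue, the faster card ends up completing twice as many tasks and the job finishes in 4.0 time units instead of 6.0, which is the kind of gap a pull-based balancer is meant to close.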

To make use of this larger memory pool, NVIDIA has added Predictive Data Prefetching, a feature that anticipates which data will be needed next and loads it into the high-speed cache (temporary memory that holds frequently accessed data) before the cores request it. This way, the processing cores are rarely left waiting for data from slower storage devices such as hard drives or SSDs. By keeping the compute pipeline (the sequence of processing stages) full, the system reaches speeds that once required liquid-cooled server racks (large industrial computer setups). Now, a single professional workstation can match the performance of a mid-sized server cluster (a group of connected servers) from just a few years ago.
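Prefetching works by overlapping data loading with computation. The Python sketch below assumes the simplest possible prediction, that chunks are read in a known sequential order, and keeps a few loads in flight on background threads while the caller processes earlier chunks. `prefetching_reader` and `pipeline_time` are hypothetical helpers for illustration; NVIDIA has not described how its predictor decides what to fetch.

```python
from collections import deque
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterator, Sequence, TypeVar

K = TypeVar("K")
V = TypeVar("V")


def prefetching_reader(
    keys: Sequence[K], load: Callable[[K], V], depth: int = 2
) -> Iterator[V]:
    """Yield load(key) for each key in order, keeping up to `depth` loads in flight.

    The "prediction" here is simply the next keys in the sequence.
    """
    if depth < 1:
        raise ValueError("depth must be at least 1")
    with ThreadPoolExecutor(max_workers=depth) as pool:
        # Start the first loads before any processing begins.
        in_flight = deque(pool.submit(load, key) for key in keys[:depth])
        next_idx = len(in_flight)
        while in_flight:
            # Wait for the oldest request; later ones keep loading meanwhile.
            chunk = in_flight.popleft().result()
            # Immediately request the next predicted chunk to keep the pipeline full.
            if next_idx < len(keys):
                in_flight.append(pool.submit(load, keys[next_idx]))
                next_idx += 1
            yield chunk


def pipeline_time(n_chunks: int, load_s: float, compute_s: float, prefetch: bool) -> float:
    """Idealized runtime of a load-then-compute loop, with and without overlap."""
    if n_chunks == 0:
        return 0.0
    if not prefetch:
        # Each chunk is loaded, then processed, strictly one after another.
        return n_chunks * (load_s + compute_s)
    # Two-stage pipeline: after the first load, the slower stage sets the pace.
    return load_s + (n_chunks - 1) * max(load_s, compute_s) + compute_s


print(list(prefetching_reader(range(5), lambda k: k * k)))  # [0, 1, 4, 9, 16]
print(pipeline_time(10, 1.0, 1.0, prefetch=False))          # 20.0
print(pipeline_time(10, 1.0, 1.0, prefetch=True))           # 11.0
```

In this idealized model, overlapping equal-length load and compute steps nearly halves the runtime; in practice, the gain depends on which stage is slower.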

Thermal Management in High-Density Workstations

Packing several high-power processors into a single case creates significant thermal challenges, because chips that run too hot reduce their clock speeds to protect themselves. The new linking standard addresses this with a synchronized cooling protocol that coordinates all system fans, so the hardware works together to direct airflow, draw heat away from the chips, and push it out of the case. If one card runs hotter than the others, the system can lower its speed slightly and raise a cooler neighbor’s speed to keep overall performance steady. This thermal load-sharing prevents any single component from overheating.
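The clock-shifting behavior described above can be sketched as a single control step. The Python below assumes a hypothetical temperature limit, clock step, and clock range, and treats the sum of clock speeds as a rough proxy for total throughput; none of these numbers or function names come from NVIDIA, whose actual control loop is not described in the announcement.

```python
from typing import Sequence


def rebalance_clocks(
    temps_c: Sequence[float],
    clocks_mhz: Sequence[int],
    limit_c: float = 83.0,  # hypothetical temperature limit
    step_mhz: int = 50,  # hypothetical adjustment per control step
    min_mhz: int = 1000,  # hypothetical clock floor
    max_mhz: int = 2500,  # hypothetical clock ceiling
) -> list[int]:
    """One control step: move clock speed from the hottest card to the coolest.

    The total clock across all cards is kept constant, so overall throughput
    (approximated here by that total) stays steady while the hot card cools.
    """
    clocks = list(clocks_mhz)
    hottest = max(range(len(temps_c)), key=lambda i: temps_c[i])
    coolest = min(range(len(temps_c)), key=lambda i: temps_c[i])
    # Nothing to do if the hottest card is within the limit or all temps are equal.
    if hottest == coolest or temps_c[hottest] <= limit_c:
        return clocks
    # Never push either card outside its allowed clock range.
    shift = min(step_mhz, clocks[hottest] - min_mhz, max_mhz - clocks[coolest])
    if shift > 0:
        clocks[hottest] -= shift
        clocks[coolest] += shift
    return clocks


print(rebalance_clocks([86.0, 70.0, 74.0], [2000, 2000, 2000]))  # [1950, 2050, 2000]
print(rebalance_clocks([75.0, 70.0, 74.0], [2000, 2000, 2000]))  # [2000, 2000, 2000]
```

Run repeatedly, a step like this nudges work away from a hot card a little at a time, matching the article’s description of lowering one card’s speed slightly while raising a neighbor’s.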

For people working in quiet offices, the system includes an Acoustic Optimization mode, which runs all fans together at lower speeds so that they still move enough air without producing high-pitched noise. This reduces the sound typically produced by powerful cooling units, so the workstation stays cool and quiet even during long processing sessions. By paying attention to the physical environment, the company shows it understands that noise and heat matter in real-world workspaces.

Security and Data Sovereignty at the Local Edge

One of the main reasons for the shift to local hardware is the growing concern about data sovereignty and the safeguarding of intellectual property in the cloud. Many organizations are reluctant to upload proprietary designs or sensitive customer data to remote servers. By keeping large workloads local, NVIDIA helps create a stronger barrier against unauthorized access or the exposure of confidential data. The new multi-unit bridge uses hardware-based encryption for all data moving between processors, ensuring information remains secure even as it travels within the computer.

Beyond security and data control, a local system also avoids the cost of moving large amounts of data to and from a cloud provider. A research lab that processes daily satellite images or medical scans, for example, can save significant money. Local hardware also offers predictable performance: users are not affected by fluctuating internet speeds or by other users competing for shared cloud resources. This gives professionals full control over their computing environment and helps ensure that important deadlines are met even if a remote service goes down.

The Return of the Local Workstation

As workstations around the world become more connected and capable, digital labs are quietly changing. Offices are adapting to creative needs, and computing power is shifting away from central data centers, so each desk can now be a productive hub. Over time, the line between what the machine does and what we create may blur, allowing us to work more smoothly. We may soon find our work supported by reliable systems that respect both our intentions and our data. The workstation is becoming more than just equipment; it is now a dependable part of our daily work.

Source: NVIDIA AI Ecosystem Expands as Marvell Joins Forces Through NVLink Fusion