The new chip design promises to transform artificial intelligence, with a reported 100-fold performance improvement from an architecture that operates independently of cloud services. The research combines memristor-based in-memory computing with secure processing methods so that AI models can run directly on devices while consuming minimal power. As a result, smartphones, Internet of Things (IoT) devices, and edge systems could perform advanced AI tasks locally, improving processing speed, user data protection, and environmental sustainability.

Redefining AI Hardware

In a typical AI deployment, vast amounts of cloud computing power are required to handle the immense volume of data that AI applications generate. This introduces latency from long-distance data movement, high energy consumption, and privacy concerns when sensitive information moves across networks. The memristor-based chip offers a different architecture that combines computation and memory in a single integrated unit.

Because this new architecture eliminates the need to move large amounts of data back and forth between RAM and the CPU and GPU for AI computation, it addresses a key bottleneck in AI implementations. Additionally, the memristor-enabled chip enables AI algorithms to be executed directly on the device, creating opportunities for new real-time AI applications across robotics, autonomous vehicles, wearables, smart sensors, and more.
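The data-movement saving described above can be illustrated with a simple count. The sketch below is a simplified model, not a measurement of the chip: it assumes a single matrix-vector product (the core operation of a neural network layer), assumes no caching, and uses a hypothetical helper name, `values_moved`. In the conventional case every weight is fetched from memory; in the in-memory case the weights stay where they are stored and only inputs and outputs move.

```python
def values_moved(rows: int, cols: int) -> dict:
    """Count values crossing the memory/processor boundary for one
    matrix-vector product with a rows x cols weight matrix.

    Simplified model (assumption): no caching and no weight reuse.
    """
    weights = rows * cols   # stored weight matrix
    inputs = rows           # one input value per row
    outputs = cols          # one output value per column
    return {
        # Conventional: weights, inputs and outputs all travel.
        "conventional": weights + inputs + outputs,
        # In-memory: weights stay put; only inputs and outputs travel.
        "in_memory": inputs + outputs,
    }


counts = values_moved(1024, 1024)
ratio = counts["conventional"] / counts["in_memory"]
print(counts, ratio)
```

Under these assumptions the gap grows with layer size, because the weight count scales with the product of the dimensions while inputs and outputs scale only with their sum.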

Energy Efficiency and Sustainability

A major benefit of the new chip is its energy efficiency. In general, AI processing consumes a lot of electrical power, mostly in massive data centres. With in-memory computing, data moves much less, and memristor switching consumes very little energy.

The lower electrical cost of AI processing, enabled by energy-efficient chips, also means a lower carbon footprint. With rapid growth in AI use across sectors, energy-efficient hardware solutions will be key to ensuring large-scale AI implementations are environmentally sustainable.

On-Device AI and Privacy

By enabling AI to run locally on IoT devices, the chip also helps address growing concerns about data privacy. Sensitive information of all kinds (e.g., personal health data, financial transactions, and proprietary business information) can be processed on the device without ever being transmitted off it.

In addition, on-device processing reduces response latency, so AI models can respond in real time. This capability is crucial for scenarios like autonomous navigation, real-time translation, and augmented/virtual reality (AR/VR), where speed and immediacy are critical to the user experience and operational dependability.

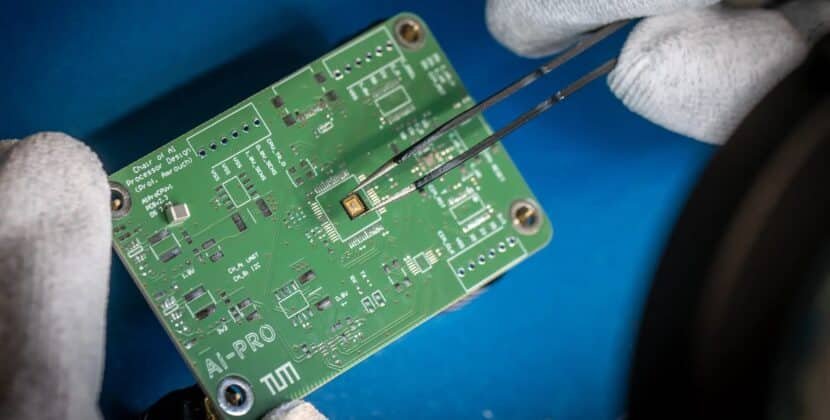

Memristor-Based In-Memory Computing

Memristor technology lies at the core of this work. Memristors are memory devices that both store data and process it in place. Computation therefore happens where the data is stored, unlike a standard CPU-GPU architecture, which separates memory from processing.

The chip organises many memristors into arrays that perform AI computations in parallel. This parallelism lets it handle large neural networks, improving performance without increasing energy consumption or physical size.
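How a memristor array computes in parallel can be sketched in software. The article does not describe this chip's circuit, so the following assumes the commonly described crossbar arrangement: each memristor's conductance stores one weight, input voltages are applied to the rows, and each column wire sums the resulting currents (Ohm's law per device, Kirchhoff's current law per column). The function name `crossbar_mvm` and the example values are illustrative only.

```python
def crossbar_mvm(conductances, voltages):
    """Simulate an idealised memristor crossbar.

    conductances[i][j]: conductance (siemens) of the device at row i, column j.
    voltages[i]: voltage (volts) applied to row i.
    Returns the current (amperes) collected on each column.
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need one input voltage per row")

    currents = [0.0] * n_cols
    for i in range(n_rows):
        for j in range(n_cols):
            # Ohm's law for one device; the column wire adds the currents.
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Illustrative 2x2 array; the values are placeholders, not device data.
G = [[1e-6, 2e-6],
     [3e-6, 4e-6]]
V = [0.5, 1.0]
I = crossbar_mvm(G, V)
print(I)
```

In hardware, all of the multiply-and-add steps in the two loops happen at once as currents flow through the array, which is where the parallelism comes from. This idealised model ignores wire resistance, device noise, and the handling of negative weights.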

Security and Trust

The chip pairs its performance with multiple security features. It performs calculations on a physically secure medium, reducing exposure to external threats and data loss. This kind of security-focused design will be crucial for AI applications in sensitive sectors such as healthcare, banking, the military, and autonomous systems.

Combining high-performance AI processing with strong security on a single chip represents a significant advancement, giving businesses and end users a more complete AI solution.

Implications for Edge Computing

The new technology is likely to accelerate the adoption of edge computing by bringing AI functionality closer to where data is generated and collected. Instead of sending work to cloud servers, edge applications perform computations locally. As a result, they can respond more quickly than cloud-based systems, with greater reliability and substantially lower operating costs.

Manufacturers, logistics companies, smart cities, and autonomous systems all stand to benefit from this technology. Edge computing will enable real-time analytics, predictive maintenance, and adaptive control systems to operate more efficiently than they would by relying continuously on the cloud.

Transforming AI Applications

With this new chip, more complex AI models can run on smaller, more portable devices, and developers can build complex neural networks directly into on-device apps. This opens up a much larger pool of potential applications, such as computer vision, natural language processing, and reinforcement learning, whether through hosted or offline processing.

On-device AI also gives small and mid-sized companies, start-ups, and researchers room to innovate. In this way AI will be democratised, becoming more accessible to smaller organisations that no longer need to deploy large amounts of infrastructure.

Competitive Advantage in AI Hardware

The increasing demand for AI worldwide has made hardware efficiency and speed key competitive advantages. Major companies, including NVIDIA, Intel, and others, continue to invest in AI acceleration; however, a memristor-based chip offers a fundamentally different approach to AI processing by combining memory and computation, along with added security. This combination provides value to those seeking high-performance, low-power AI applications.

Many market analysts believe that innovations such as this will change the requirements for AI infrastructure, reduce reliance on traditional cloud-based solutions, and alter how companies economically deploy AI.

Future Directions and Development

The researchers are investigating how to continue scaling this technology by increasing memristor density, improving fabrication processes, and integrating the chip into a wide range of devices and platforms. Additional refinements will enable even larger neural networks to support enhanced AI capabilities and broader use in consumer and enterprise devices.

Moreover, the technology provides an avenue for hybrid AI systems that allow some processing to occur locally, while more complex or aggregated processing can leverage cloud resources, creating a flexible and efficient AI ecosystem.
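One way to picture such a hybrid arrangement is a small routing rule that decides where each task runs. The sketch below is purely illustrative: the `Task` fields, the `choose_target` function, and the capacity threshold are hypothetical and are not taken from the research. It assumes a task goes to the cloud only if it needs data aggregated from many devices or if its model does not fit on the device.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    model_size_mb: float          # hypothetical size of the model to run
    needs_aggregated_data: bool   # True if it combines data from many devices


def choose_target(task: Task, device_capacity_mb: float) -> str:
    """Return "device" or "cloud" for a task (illustrative rule only)."""
    if task.needs_aggregated_data:
        return "cloud"    # aggregated processing lives off the device
    if task.model_size_mb > device_capacity_mb:
        return "cloud"    # model too large to hold locally
    return "device"       # default: keep data and computation local


tasks = [
    Task("wake-word detection", 2.0, False),
    Task("fleet-wide analytics", 1.0, True),
    Task("large language model", 5000.0, False),
]
targets = [choose_target(t, device_capacity_mb=64.0) for t in tasks]
print(targets)
```

Defaulting to the device keeps sensitive data local wherever possible, and the cloud acts as a fallback for the heavier or aggregated work.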

Potential Challenges

Although memristor-based AI chips show great promise, many challenges must be overcome before they can be fully adopted in everyday consumer settings: manufacturing them at scale, developing compatible software, and optimising how AI models use in-memory processing. Researchers and engineers will need to solve all of these problems to make memristor-based AI chips commercially viable and ready for widespread use.

However, reports from research laboratories indicate significant potential for memristor-based AI chips, and partnerships between chip manufacturers and AI developers may help accelerate the transition from laboratory prototypes to commercially available products.

Broader Implications

The breakthrough's impact will reach beyond AI performance alone: it could usher in new standards for energy-efficient computing, secure processing at the device level, and the rapid deployment of intelligent systems. Reducing reliance on cloud infrastructure could make systems more resilient, cut costs, and widen global access to artificial intelligence.

Individuals and businesses will soon be able to work with smart devices that are efficient, respect privacy, and provide quicker insights into their operations than ever before, changing the way artificial intelligence becomes a part of everyday life.

Conclusion: A New Era of AI Efficiency

The latest memristor-based chip marks a major step forward for artificial intelligence hardware. By integrating in-memory processing, security, and energy efficiency, it enables devices to run high-performance AI without relying on cloud-based services.

The advantages of this memristor chip will let AI applications operate more quickly and efficiently than before while prioritising user privacy. They will also create new opportunities across a variety of industry sectors, leading to entirely new methods of deploying AI. With continued development, this chip could change our view of the AI landscape, providing powerful, efficient, and secure AI solutions for many more people and devices.

Source: https://phys.org/