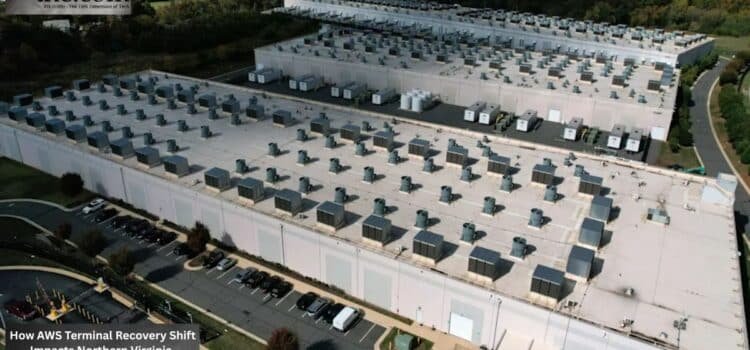

Ashburn, VA.

Atomic Answer: Amazon Web Services (AMZN) has finalized its “Thermal Stability Protocol” following the resolution of the May 2026 Northern Virginia outage. The technical shift mandates a new cooling capacity buffer (CCB) across US-EAST-1, requiring a 15% reduction in peak rack density until secondary cooling unit loops are verified.

At 2:17 a.m., enterprise dashboards across the East Coast stopped updating. Financial apps slowed down, retail checkouts stalled, and Slack alerts started coming in waves. For many companies using Amazon’s cloud, the AWS Northern Virginia outage was a reminder that even the best cloud systems still rely on physical power that can fail unexpectedly.

The subsequent thermal event recovery operation did more than restore workloads. It revealed how hyperscale cloud operations now manage electrical isolation, cooling resilience, and infrastructure compartmentalization under growing compute demand.

For AWS and its parent company Amazon, listed on the stock market as AMZN, the impact goes beyond just fixing services. Northern Virginia is the heart of global cloud activity, so any disruption there affects banks, hospitals, logistics companies, SaaS platforms, and government contractors simultaneously.

Why Did the AWS Northern Virginia Outage Carry Broader Significance?

Northern Virginia is home to one of the world’s largest clusters of cloud computing infrastructure. Analysts say the region handles a large share of global internet traffic every day. This concentration is efficient, but it also means that a single local failure can disrupt many workloads at once.

The recent AWS Northern Virginia outage was reportedly caused by a heat-related infrastructure issue that affected several systems. Even though AWS is built for redundancy and separation, this incident showed that local failures can still spread across connected business environments.

The phrase “thermal event resulting in loss of power” sounds technical, but the effects are simple. If cooling systems fail in crowded data centers, operators often have to shut down parts of the infrastructure to prevent hardware damage, electrical issues, or larger failures.

This approach protects the infrastructure in the long run, but it causes short-term problems for customers. During recovery, many companies faced delays in storage syncing and compute scheduling, along with partial app interruptions. Some developers saw slow instance launches while backend engineering teams monitored EC2 restoration timelines.

The Engineering Pressure Behind Modern Cloud Resilience

Cloud outages rarely begin with a software anomaly.

Most outages start with a chain reaction involving power, cooling, backup systems, and environmental controls. Today’s massive computing setups push these systems to their limits.

Modern AI, real-time analytics, and automation tools make data centers much denser. More density means more heat, so cooling has become one of the most important parts of cloud operations.

Ten years ago, most enterprise data centers used much less power per rack. Now, large operators run clusters that use several times more. Even small changes in airflow, humidity, or cooling can quickly become big problems in these dense environments.

The latest thermal event recovery process highlighted how aggressively cloud providers now work to isolate operational risks.

Instead of allowing disturbances to propagate across interconnected systems, providers increasingly rely on infrastructure isolation strategies. These designs separate workloads, networking paths, cooling domains, and electrical systems into compartmentalized operational zones.

This isolation helps contain failures, but it can also make recovery more complicated.

To safely bring systems back online, teams must check thermal stability, power, and network synchronization in steps before allowing workloads to run at full scale.
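A minimal sketch of that kind of stepwise gating is shown below. It is an illustration only, not AWS's actual procedure: the `Gate` class, the `staged_recovery` function, the load steps, and the sensor readings are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Gate:
    """One recovery check that must pass before load is increased."""
    name: str
    check: Callable[[], bool]


def staged_recovery(gates: List[Gate], load_steps: List[int]) -> Tuple[int, List[str]]:
    """Raise the allowed load one step at a time, re-running every gate first.

    Returns the last approved load percentage and a log of decisions.
    Stops at the first failing gate instead of pushing on to full scale.
    """
    approved = 0
    log: List[str] = []
    for step in load_steps:
        failed = [g.name for g in gates if not g.check()]
        if failed:
            log.append(f"hold at {approved}%: failed {', '.join(failed)}")
            return approved, log
        approved = step
        log.append(f"approved {step}%")
    return approved, log


# Hypothetical readings; real checks would query facility telemetry.
readings = {"intake_c": 27.0, "power_ok": True, "network_synced": True}
example_gates = [
    Gate("thermal", lambda: readings["intake_c"] < 40.0),
    Gate("power", lambda: readings["power_ok"]),
    Gate("network", lambda: readings["network_synced"]),
]
final_load, decisions = staged_recovery(example_gates, [10, 25, 50, 100])
```

Re-running every gate before each step is the point of the design: a cooling loop that looked stable at 10% load may not hold at 50%, so the sketch holds at the last approved level rather than assuming earlier checks still apply.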

Availability Zones Were Built For Moments Like This

Amazon Web Services has promoted using multiple zones to protect against local disruptions. This setup mostly worked as planned during the recent recovery.

Still, many customers discovered an uncomfortable truth: deploying applications across multiple availability zones does not automatically guarantee resilience if dependencies remain concentrated inside a single regional architecture.

For example, a healthcare software provider might run patient scheduling in two zones but depend on a single shared authentication system in the same region. Even with zone redundancy, these shared parts can still create risks during outages.
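One way to surface this kind of hidden concentration is to compare where each service runs with where its dependencies run. The sketch below assumes a hand-maintained inventory; the function name `concentrated_dependencies`, the service names, and the zone labels are hypothetical, and a "failure domain" can stand for a zone or a region depending on how the inventory is written.

```python
from typing import Dict, List, Set


def concentrated_dependencies(
    placement: Dict[str, Set[str]],
    depends_on: Dict[str, List[str]],
    min_domains: int = 2,
) -> Dict[str, List[str]]:
    """Map each service to the dependencies that run in too few failure domains.

    placement: component name -> set of failure domains (zones or regions).
    depends_on: service name -> list of components it needs to function.
    A dependency missing from placement is treated as running nowhere,
    so it is also reported.
    """
    findings: Dict[str, List[str]] = {}
    for service, deps in depends_on.items():
        weak = sorted(
            dep for dep in deps
            if len(placement.get(dep, set())) < min_domains
        )
        if weak:
            findings[service] = weak
    return findings


# Hypothetical inventory mirroring the healthcare example above.
placement = {
    "patient-scheduling": {"us-east-1a", "us-east-1b"},
    "auth": {"us-east-1a"},
    "database": {"us-east-1a", "us-east-1b"},
}
depends_on = {"patient-scheduling": ["auth", "database"]}
report = concentrated_dependencies(placement, depends_on)
```

Here `report` flags `auth` as the weak point for `patient-scheduling`, even though the scheduling service itself spans two zones. Writing the inventory with region names instead of zone names turns the same check into a regional-concentration audit.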

The recent AWS outage in Northern Virginia has reignited conversations about spreading systems across regions, not just zones.

This shift in thinking also affects company finances.

Running active-active deployments across multiple regions costs significantly more than localized redundancy. Many companies previously accepted regional concentration risks to reduce operating expenses and latency. Incidents tied to thermal event recovery may push executives to reconsider those trade-offs.

Why EC2 Restoration Matters to Enterprise Confidence

For most customers, outages hit home when their compute instances stop working.

Cloud infrastructure can seem distant until production apps stop responding. That’s why people pay close attention to EC2 recovery times during outages.

AWS engineers reportedly used a step-by-step recovery process to avoid more problems. This method slows down the initial recovery but lowers the risk of more outages from starting workloads too soon. From an infrastructure perspective, taking a careful approach to recovery makes sense.

From a business angle, every extra minute of downtime costs money.

If a retail platform experiences payment processing issues during busy periods, it can lose millions of dollars. A logistics company that relies on real-time data may see shipment delays across its whole network. Even short outages show how much businesses depend on reliable cloud services.

For AMZN, keeping customer trust during outages is just as important as fixing the infrastructure. AWS generates significant revenue for Amazon, so keeping businesses confident is crucial.

The Hidden Infrastructure Battle: Power Versus Density

The cloud industry now faces competing demands.

Customers want faster computing, lower delays, and bigger AI workloads. Providers respond by adding more servers and packing them more densely, but each step adds more heat and complexity.

This challenge is why companies are investing more in data center cooling. Operators now use liquid cooling, improved airflow systems, predictive analytics, and AI monitoring to reduce risk.

The recent AWS thermal event, which led to a loss of power and a staged recovery, underscores a broader reality: future cloud computing may rely as much on electrical engineering as on software design.

Northern Virginia is at the heart of this challenge. Its huge concentration of large data centers already puts pressure on utility planning, land use, and power distribution.

Cloud providers now compete not just for business customers, but also for steady electricity, cooling, and transmission capacity.

The Next Phase of Cloud Resilience Will Become More Physical

For years, cloud computing talks were mostly about software. That focus is now changing.

Now, infrastructure debates are about transformers, substations, cooling, backup power, and thermal systems. The recent AWS outage in Northern Virginia showed that digital businesses still depend a lot on physical engineering behind the scenes.

The companies that will do best in the next decade are likely those that pair strong software with reliable infrastructure. Fast recovery is important, but the ability to contain problems, keep facilities cool, and isolate infrastructure may matter even more as cloud use grows.

Northern Virginia will continue to be the main testing ground for this future.

Enterprise Procurement Checklist

- Operational Consequence: Enterprises in US-EAST-1 must audit “Availability Zone” distribution to avoid single-facility thermal risks.

- Procurement Risk: Expect temporary limits on high-density GPU instance provisioning in specific Ashburn nodes.

- Infrastructure Redesign: Shift critical workloads to “Liquid-Verified” AZs to ensure uptime during high-ambient-temperature weeks.

- Deployment Impact: Automated traffic-shifting protocols now trigger at lower thermal thresholds (104°F/40°C facility intake).

- Action Step: Review EBS volume snapshots; AWS reports a 2% “impairment” rate on older volumes post-thermal reset.
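For capacity planning, the figures quoted in this article (a 15% reduction in peak rack density and a 104°F/40°C facility intake threshold) reduce to simple arithmetic. The sketch below is a rough planning aid under those stated numbers; the function names and the sample 40 kW rack are hypothetical and do not come from AWS.

```python
def capped_rack_density_kw(peak_kw: float, reduction: float = 0.15) -> float:
    """Peak rack density allowed after applying a fractional reduction."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return peak_kw * (1.0 - reduction)


def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert a Fahrenheit reading to Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def should_shift_traffic(intake_c: float, threshold_c: float = 40.0) -> bool:
    """True once facility intake temperature reaches the shift threshold."""
    return intake_c >= threshold_c


# Hypothetical rack that was provisioned for a 40 kW peak.
allowed_kw = capped_rack_density_kw(40.0)

# A sensor that reports in Fahrenheit, checked against the Celsius threshold.
shift_now = should_shift_traffic(fahrenheit_to_celsius(104.0))
```

Under these assumptions, a rack planned for 40 kW would be limited to roughly 34 kW while the buffer is in effect, and an intake reading of 104°F sits exactly at the threshold.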

Source: AWS News Blog