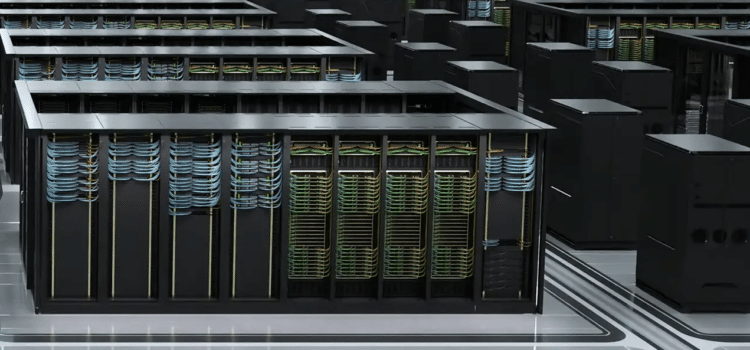

NVIDIA has launched Project Rio, an engineering effort announced in April 2023 to improve how high-density data centers handle heat. The project addresses the rising heat output of new Blackwell and Rubin architecture clusters, which have outgrown traditional air-cooling methods. As demand for ever more powerful computing systems grows, cooling these large server arrays has become a major challenge for operators and for environmental protection alike. Project Rio pairs a modular liquid-cooling system with predictive telemetry, helping data center operators move from reactive cooling to a workload-aware, chip-level approach that removes heat directly from the processors. The project aims to lower overall power use and keep the hardware reliable over time.

The Shift to Direct-to-Chip Liquid Cooling

Project Rio replaces standard perimeter CRAC (computer room air conditioning) units with an integrated liquid cooling system. Instead of relying on fans to push chilled air over heat sinks, which become less effective as rack power exceeds 100 kilowatts, Project Rio employs a closed-loop cold plate that sits directly on top of the processors. This direct-to-chip approach uses a safe, non-conductive fluid to absorb heat at its point of origin and transfer it away through stainless steel coolant pipes.

By eliminating the need for powerful fans, Project Rio cuts the energy spent merely moving air rather than processing data. The design also allows components to be packed more closely together, doubling computing power in the same amount of space. For businesses, this means data centers can be smaller and quieter, with less wear on server components.

Predictive Telemetry and Dynamic Flow Control

Project Rio goes beyond physical plumbing by adding a smart management system called Dynamic Flow Control. It uses thousands of tiny sensors in the server backplane to track temperature changes as they happen. Unlike older systems that kept coolant flowing at the same rate no matter the workload, Project Rio can predict when temperatures will spike based on the tasks coming in. If a group of processors is about to take on a heavy job, the system increases coolant flow to those chips before they start to heat up.
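The idea of raising flow before a heat spike can be illustrated with a minimal feedforward sketch. This is not NVIDIA's implementation: the names `ScheduledJob`, `required_flow`, and `plan_flow`, the water-like specific heat, the 10 K allowed temperature rise, and the minimum flow are all assumptions chosen for illustration. The sketch sizes the flow for each chip group from the heat load predicted by the job queue, using the steady-state relation flow = power / (specific heat × temperature rise).

```python
from __future__ import annotations

from dataclasses import dataclass

# All constants are illustrative assumptions, not Project Rio values.
SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # water; a dielectric coolant would differ
TARGET_DELTA_T_K = 10.0            # assumed allowed coolant temperature rise
MIN_FLOW_KG_PER_S = 0.01           # assumed floor so the loop never stalls


@dataclass
class ScheduledJob:
    """Hypothetical queue entry: where a job will run and how much heat it adds."""
    chip_group: str
    expected_power_w: float


def required_flow(power_w: float) -> float:
    """Mass flow (kg/s) that carries power_w away at the target temperature rise."""
    flow = power_w / (SPECIFIC_HEAT_J_PER_KG_K * TARGET_DELTA_T_K)
    return max(flow, MIN_FLOW_KG_PER_S)


def plan_flow(idle_power_w: dict[str, float],
              queue: list[ScheduledJob]) -> dict[str, float]:
    """Feedforward step: size flow from predicted load, before temperatures rise."""
    predicted = dict(idle_power_w)
    for job in queue:
        predicted[job.chip_group] = (
            predicted.get(job.chip_group, 0.0) + job.expected_power_w
        )
    return {group: required_flow(power) for group, power in predicted.items()}


if __name__ == "__main__":
    idle = {"gpu-tray-0": 200.0, "gpu-tray-1": 200.0}
    queue = [ScheduledJob("gpu-tray-1", 4000.0)]
    for group, flow in plan_flow(idle, queue).items():
        print(f"{group}: {flow:.4f} kg/s")
```

In this sketch only the tray with a queued job receives extra flow, while the idle tray stays at the minimum. A real controller would presumably combine such a prediction with feedback from the temperature sensors.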

Predicting temperature changes is key to maintaining thermal balance across a facility. When chips stay at a steady temperature, they experience fewer thermal cycles, the repeated heating and cooling events that strain materials. This reduces physical stress, so the hardware lasts longer and tiny cracks in the semiconductor packaging are less likely to occur. Facility managers can use the Project Rio dashboard to see thermal health data for every rack. This visibility helps them plan maintenance during less busy times.

Environmental Impact and Heat Recovery

This focus on efficiency ties directly to environmental goals. One of Project Rio’s main objectives is to improve power usage effectiveness (PUE), a metric that measures a data center’s energy efficiency as the ratio of total facility energy to the energy used by the computing equipment. By eliminating the need for energy-intensive refrigeration in air-cooling systems, NVIDIA believes facilities using Project Rio can achieve a PUE as low as 1.05. This means nearly all the electricity goes to computing rather than to running the building. Such efficiency is important for meeting the strict carbon-neutrality rules set by governments in North America and Europe.
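To make the 1.05 figure concrete, the short sketch below applies the standard definition of PUE (total facility energy divided by IT equipment energy). The function names and the comparison value of 1.5 are illustrative assumptions, not figures from NVIDIA.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def it_share(pue_value: float) -> float:
    """Fraction of the facility's electricity that reaches the IT equipment."""
    return 1.0 / pue_value


def overhead_kwh(pue_value: float, it_equipment_kwh: float) -> float:
    """Energy spent on cooling, power conversion, lighting and other overhead."""
    return (pue_value - 1.0) * it_equipment_kwh


if __name__ == "__main__":
    # 1.05 is the target quoted in the article; 1.5 is an assumed comparison point.
    for value in (1.05, 1.5):
        print(f"PUE {value}: {it_share(value):.1%} of electricity reaches IT, "
              f"{overhead_kwh(value, 1000.0):.0f} kWh overhead per 1000 kWh of IT load")
```

At a PUE of 1.05, about 95.2% of the electricity reaches the computing equipment, and every 1000 kWh of IT load carries roughly 50 kWh of overhead.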

Furthermore, the project studies the potential for “waste heat valorization,” the reuse of heat that would otherwise be discarded. Because liquid cooling captures heat more effectively than air, the warm water leaving the data center can be put to use elsewhere. Project Rio includes standard heat-exchange connections, allowing data centers to connect to city heating systems or greenhouses. In colder areas, a data center can act as a carbon-free heat source for the community, turning excess heat into useful energy. This helps data centers become active participants in the local energy system rather than just consumers.
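How much heat a warm-water loop could hand to a district heating network follows from the basic relation Q = flow × specific heat × temperature drop. The sketch below is a back-of-the-envelope estimate only; the function name, flow rate, and temperatures are assumed example values, not Project Rio specifications.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water


def recoverable_heat_kw(flow_kg_per_s: float,
                        supply_temp_c: float,
                        return_temp_c: float) -> float:
    """Thermal power (kW) released when water cools from supply to return temperature."""
    delta_t = supply_temp_c - return_temp_c
    if flow_kg_per_s < 0 or delta_t < 0:
        raise ValueError("flow and temperature drop must be non-negative")
    return flow_kg_per_s * WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t / 1000.0


if __name__ == "__main__":
    # Assumed example: 10 kg/s of water leaves at 45 °C and comes back at 30 °C.
    print(f"{recoverable_heat_kw(10.0, 45.0, 30.0):.1f} kW of heat available")
```

With these assumed values the loop delivers about 628 kW of heat. In practice the usable amount would be lower once heat-exchanger and distribution losses are taken into account.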

The Crystalline Pulse of the Machine

Project Rio is backed by an ecosystem of cooling-ready partners, including suppliers of pumps, manifolds, and leak-detection sensors. By open-sourcing certain mechanical specifications for the Rio Manifold, NVIDIA is encouraging a standardized approach to liquid cooling across the industry. Such interoperability is vital for large-scale co-location providers that host hardware from multiple vendors. If every manufacturer used a proprietary cooling hookup, managing a large facility would become unsustainably difficult. Project Rio provides a common language for thermal management, ensuring that as computational needs grow, the infrastructure supporting them remains manageable and efficient.

Data center infrastructure is entering a new era. Facilities once characterized by noisy fans are becoming quieter, more efficient, and more tightly controlled with liquid cooling. Machines now manage their heat in a measured, predictable manner, with cooling matched to the workload. In the future, overheated servers may become a thing of the past as coordinated cooling enables scalable, reliable operations, even as data centers handle ever larger volumes of data.

Source: NVIDIA News Archive