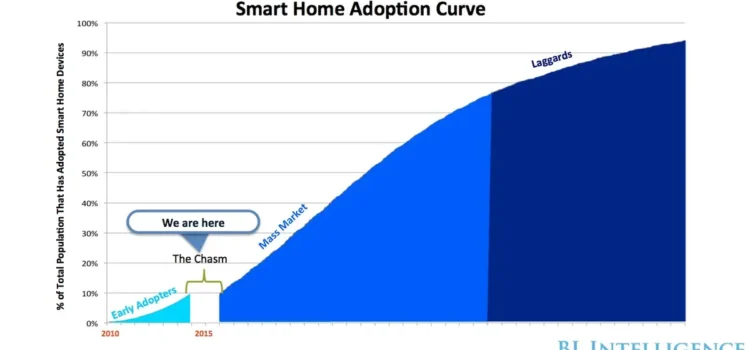

The United States is now entering a new phase of smart home technology adoption, driven by artificial intelligence. What began with voice-controlled lighting and thermostats has advanced to sophisticated systems capable of predictive automation, real-time monitoring, and contextual decision-making. The three leading platforms driving this transformation come from Amazon, Google, and Apple.

The three platforms take different approaches to smart home artificial intelligence, varying in system design, user security, device compatibility, and long-term efficiency. Choosing among them is increasingly difficult for consumers, in part because switching systems later can be costly and technically troublesome.

The Evolution of Smart Home AI Platforms

Smart home platforms have shifted from reactive systems to proactive environments powered by AI. Rather than waiting for user commands, modern systems analyze behavior patterns to automatically operate functions such as lighting, temperature control, and security.

Google has focused on predictive intelligence, using AI to anticipate user needs, while Amazon emphasizes broad device integration and voice-driven control. Apple, meanwhile, is prioritizing privacy and on-device AI processing.

The divergence among these three approaches reflects a major trend: platforms must balance convenience, intelligence, and security.

Amazon Alexa: Scale and Device Compatibility

The Alexa platform, developed by Amazon, has become one of the most popular smart home systems because it offers excellent compatibility with a wide range of third-party devices.

Alexa enables users to create automations ranging from basic routines to complex operations involving multiple devices. The system relies primarily on cloud processing, which enables advanced functionality but also introduces latency and security risks.

Amazon designed its system for broad accessibility and wide device support, which makes it well suited to households that mix devices from different manufacturers.

Google Nest: Predictive Intelligence and Integration

Google’s Nest ecosystem focuses on integrating AI with its broader data and services platform. The system provides highly personalized automation capabilities by learning user preferences over time.

Nest devices, for example, adjust temperature settings based on occupancy detection and recommend energy-saving measures. Google’s strength lies in its ability to leverage data for predictive insights.

This data-driven approach raises privacy and data-use concerns, which consumers increasingly need to weigh.

Apple Home: Privacy and On-Device AI

Apple’s smart home platform, built around its Home ecosystem, takes a different approach by prioritizing privacy through local processing. Apple processes most data on the device itself, so the system functions without relying extensively on cloud servers.

Avoiding the need to send sensitive data to external servers delivers two benefits: faster response times and better protection of personal information. The platform is also tightly integrated with Apple’s own hardware, delivering a seamless user experience.

This approach provides excellent privacy protection, but it restricts users’ ability to connect third-party devices, imposing more limitations than competing systems.

AI Capabilities: Reactive vs Predictive Systems

The performance of a smart home system depends heavily on its artificial intelligence capabilities. Google uses predictive intelligence to analyze data and deliver automation that meets user needs before they become apparent.

Amazon’s AI is geared toward responding immediately when users issue voice commands or make other requests. Apple combines the two methods to create a system that understands user context while protecting personal information.

The decision for users is whether to choose systems that act automatically without their input or systems that respond immediately to their commands.
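The difference between the two styles can be illustrated with a small Python sketch. It is a toy illustration only, not how any of the three platforms is actually implemented: the command table, the device names, the `reactive` and `predictive` functions, and the `min_count` threshold are all hypothetical.

```python
from collections import Counter

# Hypothetical command table: a reactive system does nothing until a command arrives.
COMMANDS = {
    "lights on": "living_room_light:on",
    "lights off": "living_room_light:off",
}


def reactive(command):
    """Return the action for an explicit user command, or None if it is unknown."""
    return COMMANDS.get(command.lower().strip())


def predictive(history, hour, min_count=3):
    """Suggest the action most often taken at this hour, if it was seen often enough.

    history is a list of (hour, action) pairs recorded from past behavior.
    """
    counts = Counter(action for h, action in history if h == hour)
    if not counts:
        return None
    action, count = counts.most_common(1)[0]
    return action if count >= min_count else None


# Made-up usage history: lights turned on at 19:00 four times, off at 23:00 twice.
history = [(19, "living_room_light:on")] * 4 + [(23, "living_room_light:off")] * 2

print(reactive("Lights On"))     # explicit command, handled immediately
print(predictive(history, 19))   # enough evidence, so the action is suggested
print(predictive(history, 23))   # too little evidence, so nothing is suggested
```

The reactive path is simple and predictable but never acts on its own. The predictive path can act without input, but only after it has collected behavioral data, which is where the privacy trade-off comes from.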

Privacy and Data Security Considerations

Privacy is a major concern for people adopting smart home technology. Cloud-based systems, such as those used by Amazon and Google, require data to be transmitted and processed remotely.

This model provides advanced capabilities, but it creates security vulnerabilities that can jeopardize user information. Apple’s local processing model reduces these risks by keeping data within the home environment.

Growing awareness of data privacy is becoming a key differentiator among platforms.

Cost and Long-Term Value

The total expenses of a smart home system begin with device purchases but continue to increase through subscription fees, system limitations, and maintenance needs.

Amazon allows users to connect multiple devices through its wide compatibility, helping them save money. Google offers advanced functions that some users will find worthwhile despite its higher price tag.

Apple users pay more upfront to enter the ecosystem, but they may see lasting benefits from its integrated features and security protections.
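The way ongoing costs add to the purchase price can be sketched with simple arithmetic. The `total_cost` function and every figure below are made-up placeholders for illustration, not real prices for any platform.

```python
def total_cost(device_cost, monthly_subscription, yearly_maintenance, years):
    """Estimate the total cost of ownership over a number of years.

    All inputs are in the same currency; the one-time device cost is added
    to the recurring subscription and maintenance costs.
    """
    recurring = monthly_subscription * 12 * years + yearly_maintenance * years
    return device_cost + recurring


# Placeholder figures only: 300 upfront, 5 per month, 20 per year, over 5 years.
print(total_cost(300, 5, 20, 5))  # 300 + 300 + 100 = 700
```

Even with these modest placeholder values, recurring costs exceed the initial hardware outlay over five years, which is why comparing upfront prices alone can be misleading.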

Ecosystem Lock-In and Flexibility

The primary danger people face when selecting a smart home system is the risk of lock-in to its specific ecosystem. Users face high costs and difficulties when they try to switch systems after making their initial choice.

Amazon provides its users greater flexibility by supporting a wide range of devices, whereas Apple creates a controlled ecosystem that offers seamless system integration. Google occupies a middle position between these two extremes because it provides users with both system integration and product interoperability.

Understanding these trade-offs before committing is important, because they largely determine which platform is the right fit.

The Future of Smart Home AI Platforms

The smart home market will continue to develop as AI advances create more advanced automation systems and integration methods. The future will see increased adoption of hybrid models that combine local and cloud processing to deliver optimal performance and scalability.

Amazon, Google, and Apple will dominate future development by competing to create the most effective and user-friendly solutions.

The upcoming technological progress will make platforms more similar to one another, yet their fundamental design principles will remain different.

Conclusion: Choosing the Right Smart Home Platform

Selecting the right smart home AI platform requires careful consideration of compatibility, privacy, cost, and long-term value. Amazon offers flexibility and broad device support, Google offers predictive capabilities, and Apple focuses on privacy and tight system integration.

Each platform has its strengths and limitations, and the best choice depends on individual needs and priorities. As AI in smart homes continues to develop, it is essential to choose a platform that is efficient, secure, and designed to remain useful in the future.

Sources: Apple Newsroom