An open source model has just outperformed GPT 5.4 and Claude Opus 4.6 on one of AI’s toughest coding benchmarks. A year ago, that would have seemed impossible.

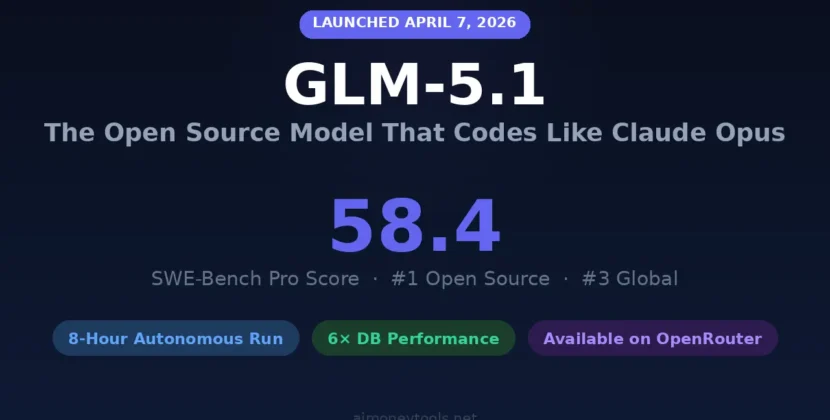

On April 7, 2026, Z.ai (previously Zhipu AI) released GLM 5.1, which scored 58.4 on SWE Bench Pro. This put it at the top of the global leaderboard, just ahead of GPT 5.4 at 57.7 and Claude Opus 4.6 at 57.3. The model weights are hosted on Hugging Face and are free to use under the MIT license.

I’ve watched the open source AI gap shrink over the past three years. In 2023, open models lagged by about two years. By 2024, they were one year behind. In 2025, it was just six months. Now only a single benchmark point separates them from the best proprietary models. This is the moment the idea that open source is always behind finally ended.

Before diving into details, it’s helpful to understand what makes GLM 5.1 notable.

What Is GLM 5.1?

GLM 5.1 is Z.ai’s main open-source AI model, released on April 7, 2026. It is designed for agentic engineering and long-running software development. This version builds on the GLM 5 base model, keeping the 744-billion-parameter mixture-of-experts (MoE) architecture, but with much stronger coding and autonomous execution.

Z.ai, formerly known as Zhipu AI, is a Tsinghua University spin-off. It became the world’s first publicly traded foundation model company after its Hong Kong IPO on January 8, 2026, which raised about HKD 4.35 billion (around $558 million) and valued the company at HKD 52.83 billion. The IPO funding accelerated its releases: GLM 5 on February 11, GLM 5 Turbo on March 15, the GLM 5.1 API on March 27, and the open-source weights on April 7.

The model isn’t limited to single code runs; it keeps improving its output. It plans, executes, tests, fixes, and tunes autonomously for up to 8 hours. This isn’t just marketing: in Z.ai’s demonstrations, GLM 5.1 built a Linux-style desktop environment over 655 autonomous iterations and raised vector database query throughput 6.9× over baseline.

In my view, Z.ai’s strategy is smart. They aren’t trying to win on chat quality. Instead, they focus on developer productivity: specifically, how long their model can run before needing human help. That’s a better competition to be in.

GLM 5.1 Benchmark Results Versus GPT 5.4, Claude Opus 4.6, and Gemini 3.1 Pro

As of April 9, 2026, GLM 5.1 is the top open source model worldwide and ranks third overall across the SWE Bench Pro, Terminal Bench, and NL2Repo benchmarks. Here’s how it compares to the top proprietary models:

GLM 5.1’s margin over Claude Opus 4.6 on SWE Bench Pro is 1.1 points, a difference that highlights how close the top models are. For a clearer comparison, look at the broader coding composite (which includes Terminal Bench 2.0 and NL2Repo): Claude Opus 4.6 scores 57.5 points, and GLM 5.1 scores 54.9 points. This means that while GLM 5.1 outperforms Claude on SWE Bench Pro, Claude Opus 4.6 remains ahead overall across all tested benchmarks. According to independent reviewers, GLM 5.1 achieves about 94.6% of Claude Opus 4.6’s combined coding performance.

The CyberGym score of 68.7 is notable. This benchmark encompasses 1,507 real-world tasks. GLM 5.1 improved by nearly 20 points relative to the prior GLM 5 release. Such progress within a single version is uncommon.

The 8-Hour Autonomous Coding Capability Explained

GLM 5.1 can run a continuous experiment, analyze, and optimize loop for up to 8 hours without human involvement, making it the first open-source system to be evaluated at that level of autonomy. In practical terms, this means handing off a complex software project at 9 a.m. and returning to production-ready output by 5 p.m.

To see why this is important, look at what Z.ai’s leader Lowe posted on X after the launch: agents could do about 20 steps by the end of last year, and GLM 5.1 can do 1,700 right now. Autonomous work time may be the most important curve after scaling laws.

A key demonstration involved GLM 5.1 autonomously constructing a complete Linux-style desktop environment inside a browser window, featuring functional components such as a file browser, terminal, text editor, system monitor, and games. This was accomplished through 655 autonomous iterations. The model also optimized a vector database, delivering a 6.9× increase in throughput compared to baseline performance. Progress from 20 to 1,700 steps in four months underscores how quickly this capability is developing.

Z.ai is upfront about the limitations. Reliably evaluating tasks without explicit metrics remains a challenge. The model can also get stuck when further tuning doesn’t help. While this is still an early-stage feature, it’s the most convincing open-source example of long-running agentic work I’ve seen in 2026.
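Both limitations are easier to see in a toy version of an iterate-and-measure loop. The sketch below is not Z.ai’s agent: `optimize`, `measure`, `propose`, and the `patience` threshold are hypothetical names chosen for illustration, and the 655 default is borrowed from the article’s iteration count purely as a cap. It shows why such a loop needs an explicit metric and how it stalls once changes stop helping.

```python
import random
from typing import Callable, List, Tuple


def optimize(
    measure: Callable[[float], float],   # explicit metric; higher is better
    propose: Callable[[float], float],   # produces a modified candidate
    initial: float,
    max_iters: int = 655,                # cap only, borrowed from the article
    patience: int = 25,                  # hypothetical plateau threshold
) -> Tuple[float, float, List[float]]:
    """Keep a candidate only when it scores better than the current best.

    Stops early after `patience` consecutive iterations without improvement,
    which is the "stuck when further tuning doesn't help" case.
    """
    best, best_score = initial, measure(initial)
    history = [best_score]
    stale = 0
    for _ in range(max_iters):
        candidate = propose(best)
        score = measure(candidate)
        if score > best_score:
            best, best_score, stale = candidate, score, 0
        else:
            stale += 1
        history.append(best_score)
        if stale >= patience:
            break
    return best, best_score, history


# Toy usage: tune one number toward the peak of a made-up "throughput" curve.
rng = random.Random(0)
throughput = lambda x: 100.0 - (x - 3.0) ** 2
tweak = lambda x: x + rng.uniform(-0.5, 0.5)

best, score, history = optimize(throughput, tweak, initial=0.0)
print(f"{len(history) - 1} iterations, best score {score:.2f}")
```

Without a `measure` function there is nothing for the loop to compare against, which is the evaluation problem described above in miniature.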

GLM 5.1 Architecture: 754B Parameters, MoE, and No NVIDIA

GLM 5.1 is built on a 754-billion-parameter mixture-of-experts (MoE) architecture in which only 40 billion parameters are active per token, rather than the full count being used each time. Its 200,000-token context window allows it to process and reference large amounts of information, and the model can produce up to 120. Other leading models may use different architectures or different active parameter counts during inference.

With the MoE design, the full 754 billion parameters aren’t used at once. Only the most relevant 40 billion are active per token, which helps keep inference costs reasonable for such a large model. Z.ai also uses DeepSeek Sparse Attention (DSA) to reduce deployment costs while maintaining strong long-context performance.
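To make the sparse-activation idea concrete, here is a minimal sketch. The 754B and 40B figures come from this article; `top_k_route` is a generic textbook top-k gate, not GLM 5.1’s actual router, and the four-expert example with k=2 is purely hypothetical.

```python
import math
from typing import Dict, List

TOTAL_PARAMS_B = 754   # total parameters in billions (from the article)
ACTIVE_PARAMS_B = 40   # parameters active per token in billions (from the article)


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters used for a single token."""
    return active_b / total_b


def top_k_route(router_logits: List[float], k: int) -> Dict[int, float]:
    """Generic top-k gating: pick the k highest-scoring experts and
    softmax-normalize their scores into mixing weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)          # for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


print(f"Active share per token: {active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B):.1%}")

# Hypothetical router scores for four experts; only two are used for this token.
weights = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)
print(sorted(weights))  # indices of the selected experts
```

Only the selected experts run for a given token, which is why compute per token tracks the active count rather than the total.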

One of the most important technical points is that GLM 5.1 was trained entirely on Huawei Ascend 910B chips, with no Nvidia hardware involved, due to US export restrictions on advanced GPUs to China. This isn’t simply a technical detail: it shows that China can train top-level models using its own computing resources.

Is GLM 5.1 Truly Open Source? License and Access Details

GLM 5.1 uses the permissive MIT license. You can download, review, modify, fine-tune, and use it commercially without restriction. Find the model weights at huggingface.co/zai-org/GLM-5.1 (in standard and FP8 quantized formats).

This differs from some open-weight models that restrict commercial use or require extra licenses. With MIT, the only real obligation is to keep the copyright and license notice. Build, use, and deliver to your customers.

GLM 5.1 works with tools like Claude Code, OpenCode, Kilo Code, Roo Code, Cline, and Droid. Add it to your AI stack with a simple config change.
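In practice, a config change of this kind usually means pointing a tool at a different base URL and model name. The sketch below assumes an OpenAI-style chat completions endpoint, which this article does not confirm; the base URL is a placeholder, and the `glm-5.1` model id and the `build_chat_request` helper are hypothetical. Take the real values from the provider’s documentation.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request.

    Assumption: the provider exposes a compatible /chat/completions route.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values: swap in the real base URL, key, and model id.
req = build_chat_request(
    base_url="https://api.example.invalid/v1",
    api_key="YOUR_API_KEY",
    model="glm-5.1",
    prompt="Refactor this function to remove the nested loops.",
)
print(req.full_url)
# Sending it would be: urllib.request.urlopen(req)
```

Keeping the base URL and model id as parameters is the point: switching providers touches two strings, not the calling code.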

Z.ai also offers GLM 5 Turbo, a closed-source model for fast inference and supervised agent tasks. Turbo focuses on speed. GLM 5.1 targets longer, more complex jobs. Each has its own pricing and target users.

GLM 5.1 API Pricing And How To Get Access

As of April 2026, the GLM 5.1 API costs $1.40 per million input tokens and $4.40 per million output tokens. Repeated input served from cache drops to $0.26 per million tokens.
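A quick way to see what these rates mean for a real workload is to plug token counts into them. This is a minimal sketch using the three prices quoted above; `estimate_cost` is a hypothetical helper, and how the provider actually counts cache hits is an assumption.

```python
# USD per million tokens, as quoted in this article for April 2026.
INPUT_PER_M = 1.40
OUTPUT_PER_M = 4.40
CACHED_INPUT_PER_M = 0.26


def estimate_cost(input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> float:
    """Estimate the USD cost of a request.

    `cached_input_tokens` is the part of `input_tokens` served from cache
    (assumed to be billed at the cached rate instead of the input rate).
    """
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_input_tokens
    total = (fresh * INPUT_PER_M
             + cached_input_tokens * CACHED_INPUT_PER_M
             + output_tokens * OUTPUT_PER_M) / 1_000_000
    return round(total, 6)


# Example: a 200k-token agent turn with 20k tokens of output.
print(estimate_cost(200_000, 20_000))                               # no cache hits
print(estimate_cost(200_000, 20_000, cached_input_tokens=150_000))  # 75% cached
```

For long agentic sessions that resend the same context each turn, the cached rate is what dominates the bill.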

Between 14:00 and 18:00 Beijing time, the model consumes quota three times faster, a policy in effect through April 2026. Outside that window, you can use the model at off-peak rates. Now is a good time to try it.

Most developers will find it practical to use the API for both prototyping and production, as it is affordable. If you need data privacy and have the right GPU setup, you can self-host. For companies working with sensitive code, self-hosting such a powerful model under MIT terms is a new and desirable option.

You can run GLM 5.1 locally, but it’s rarely practical. With 754 billion parameters, it needs enterprise GPUs: at least eight H100s or similar. If you have the hardware, vLLM and SGLang let you run it locally. FP8 quantization halves memory use while maintaining similar performance, which helps advanced users.
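The memory claim is simple arithmetic on the parameter count. The sketch below assumes the standard weights are stored at 16 bits (2 bytes) per parameter, which the article does not state, and it counts weights only: KV cache, activations, and runtime overhead come on top. `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes).

    Billions of parameters times bytes per parameter gives GB directly.
    """
    return params_billions * bytes_per_param


PARAMS_B = 754  # total parameters in billions (from the article)

standard_gb = weight_memory_gb(PARAMS_B, 2)  # assumption: 16-bit weights
fp8_gb = weight_memory_gb(PARAMS_B, 1)       # FP8: one byte per parameter

print(f"16-bit weights: {standard_gb:,.0f} GB")
print(f"FP8 weights:    {fp8_gb:,.0f} GB")
print(f"FP8 / 16-bit:   {fp8_gb / standard_gb:.0%}")
```

Either way the weights alone run to hundreds of gigabytes, which is why this is a multi-GPU server workload rather than a desktop one.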

My honest read: if you’re asking, can I run this on my gaming PC, the answer is no. GLM 5.1 is not a 7B model you can pull with Ollama. For 99% of the developer community, API access is the path. The MIT license is for enterprises and researchers with serious compute budgets, not for individual tinkerers.

My Honest Take: What GLM 5.1 Gets Right (and What It Doesn’t)

GLM 5.1 impresses. SWE Bench Pro scores are verified. The 8-hour autonomous run and MIT license are confirmed. Huawei-only training is notable and geopolitically relevant.

I want to be clear: GLM 5.1 only outperforms Claude Opus 4.6 on one benchmark, so it’s misleading to suggest otherwise. Claude Opus 4.6 still leads in most coding, reasoning, and creative tasks. GLM 5.1’s 1.1-point edge on SWE Bench Pro is impressive, but it doesn’t change the overall ranking. The real story is that open-source AI is no longer second-tier. A model trained entirely on domestic Chinese chips and released for free under MIT terms just set a global benchmark record, and that matters no matter where you stand in the AI debate.

The progress in long-running autonomous coding is what interests me most. In just four months, the model went from 20 to 1,700 autonomous steps, demonstrating rapid improvement. If future versions, such as GLM 6.0 or GLM 5.2, can run for 24 or 72 hours without losing coherence, this could change how software is developed.

For open source coding or agentic tasks, GLM 5.1 should be a top choice. The API is competitively priced. The MIT license offers full control, and benchmarks rank it among the top open-source models as of April 9, 2026.

Source: GLM-5.1: #1 Open Source AI Model? Full Review (2026)