Google is rolling out Search Live in the Google app. It takes real-time camera and voice input and runs on the Gemini 3.1 Flash Live model. Users can now show their surroundings to the AI in Google Search and ask questions in a back-and-forth dialogue.

Important Details

- Real-time communication: users benefit from seamless voice conversations with AI, enabling faster, more natural searches.

- Camera and voice integration: with instant camera and voice activation, users can quickly get answers about any object or place they encounter.

- Location: the feature is in the Google app (Android and iOS), accessible via the live icon under the search bar.

- Availability: Search Live is expanding to 200+ countries and adds support for several Indian languages.

How Search Live Works

- Open live mode: in the Google app, tap the Live button, or access it via Google Lens (a tool for searching by images captured from a camera).

- Point and ask: enable the camera and ask questions aloud.

- The AI gives audio feedback. It also shows relevant web links.

- Continuous conversation: the feature permits follow-up questions for natural interaction.

- Background operation: users can keep interacting with the AI while multitasking, although camera sharing pauses in the background.

Use Cases

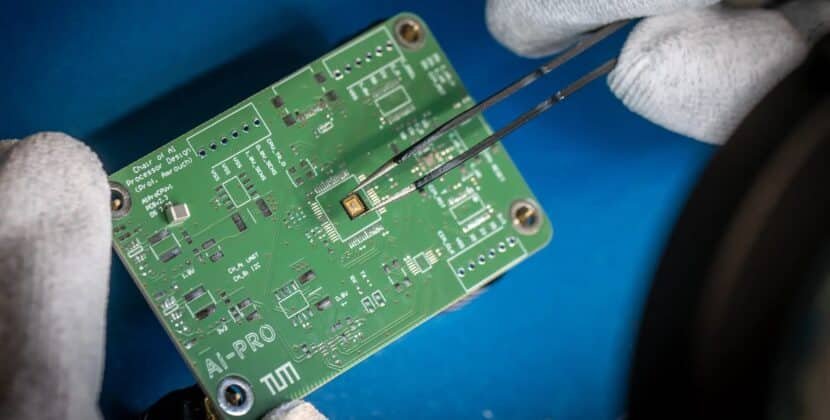

- Troubleshooting: users can point the camera at electronics to ask how to connect specific cables.

- Travel: users can identify landmarks.

- Hobbies and learning: users can request explanations for items in a matcha set or about educational experiments.

- Shopping: users can get product details and reviews.

This is part of a shift toward multimodal search where imagery, visual cues, and speech replace text input.

Google has launched Gemini 3.1 Flash Live, a real-time audio and voice AI model for faster, more natural conversations. It reduces latency, improves reliability, and enhances dialogue quality for advanced, voice-first, multimodal AI applications.

Gemini 3.1 Flash Live

Gemini 3.1 Flash Live manages real-time conversations with enhanced responsiveness and context awareness. It supports natural dialogue flow, multi-turn interactions, extended conversations, and dynamic user inputs.

The model delivers reliable, natural-sounding conversations and completes complex tasks, with benchmark results that show significant improvements over previous versions. For example:

- ComplexFuncBench Audio: Gemini 3.1 Flash Live achieves 90.8% on multi-step function calling with various constraints, outperforming earlier models.

- Scale AI's Audio MultiChallenge: it scores 36.1% with thinking enabled on a test of complex instruction following and long-horizon reasoning amid the interruptions and hesitations typical of real-world audio.

Key Features And Improvements

- Lower latency: the model delivers faster responses and maintains fluid, near-instant interactions.

- Better reliability in real-life conditions: Gemini 3.1 Flash Live executes tasks more reliably in noisy environments by filtering out background noise such as traffic or television, so agents remain responsive to instructions.

- It closely follows complex instructions and guardrails, ensuring dependable performance even as conversations shift.

- The model accurately interprets pitch, tone, and pace, adapting responses to user sentiment and enabling more natural dialogue.

- More natural dialogue flow: the model maintains conversation threads for longer periods, preserving context throughout extended interactions and idea-generation sessions.

- It enables real-time conversations in over 90 languages for global accessibility and consistent performance.

Developers can use the Gemini Live API (a platform for building features using real-time data) to build real-time conversational agents that process voice and video inputs and respond instantly. Key capabilities include:

- Handling real-time audio and multimodal input

- Function calling and external tool integration

- Session management for long-running conversations

- Ephemeral tokens for secure interactions

- Building interactive voice-first AI agents
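To make the function-calling capability above more concrete, here is a minimal sketch of how an agent might route a model-issued function call to local code. It does not use the Gemini Live API itself. The tool names (`get_store_hours`, `check_stock`), the `{"name": ..., "args": {...}}` call shape, and the `dispatch` helper are all hypothetical and chosen only for illustration.

```python
from typing import Any, Callable, Dict

# Hypothetical local tools; a real agent would wrap actual services here.
def get_store_hours(store_id: str) -> Dict[str, Any]:
    return {"store_id": store_id, "opens": "09:00", "closes": "21:00"}

def check_stock(sku: str, store_id: str) -> Dict[str, Any]:
    return {"sku": sku, "store_id": store_id, "in_stock": True}

# Registry mapping the tool name the model asks for to a Python callable.
TOOLS: Dict[str, Callable[..., Dict[str, Any]]] = {
    "get_store_hours": get_store_hours,
    "check_stock": check_stock,
}

def dispatch(call: Dict[str, Any]) -> Dict[str, Any]:
    """Route one function call to a registered tool.

    Assumed (hypothetical) call shape: {"name": str, "args": dict}.
    Returns a dict that could be sent back to the model as the tool result.
    """
    name = call.get("name")
    tool = TOOLS.get(name)
    if tool is None:
        return {"name": name, "error": f"unknown tool: {name}"}
    try:
        return {"name": name, "response": tool(**call.get("args", {}))}
    except TypeError as exc:  # missing or unexpected arguments
        return {"name": name, "error": str(exc)}

if __name__ == "__main__":
    print(dispatch({"name": "check_stock",
                    "args": {"sku": "A1", "store_id": "42"}}))
    print(dispatch({"name": "unknown_tool", "args": {}}))
```

Returning an error dict instead of raising keeps the conversation going: the agent can report the failure to the model and let it retry or ask the user for the missing detail.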

In addition to these foundational capabilities, the Google Gen AI SDK (a software toolkit for building generative AI features) enables asynchronous connections to audio sessions and supports instant interaction.
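The asynchronous session pattern can be sketched with Python's standard `asyncio` library. This example does not call the real SDK: `FakeLiveSession`, `send`, `receive_turn`, and `run_turns` are hypothetical stand-ins that only illustrate the shape of an async send/receive loop over a long-lived session, using text chunks in place of audio.

```python
import asyncio
from typing import AsyncIterator, List

class FakeLiveSession:
    """Hypothetical stand-in for a real-time session (not an SDK class)."""

    def __init__(self) -> None:
        self._inbox: asyncio.Queue = asyncio.Queue()
        self.history: List[str] = []  # context kept across turns

    async def __aenter__(self) -> "FakeLiveSession":
        return self

    async def __aexit__(self, *exc_info) -> None:
        self.history.clear()  # drop session state on close

    async def send(self, text: str) -> None:
        """Record the user turn and queue a fake streamed reply."""
        self.history.append(text)
        for word in text.split():
            await self._inbox.put(word.upper())
        await self._inbox.put(None)  # end-of-turn marker

    async def receive_turn(self) -> AsyncIterator[str]:
        """Yield reply chunks until the end-of-turn marker arrives."""
        while (chunk := await self._inbox.get()) is not None:
            await asyncio.sleep(0)  # yield control, as network I/O would
            yield chunk

async def run_turns(prompts: List[str]) -> List[str]:
    """Send each prompt in one session and collect all streamed chunks."""
    replies: List[str] = []
    async with FakeLiveSession() as session:
        for prompt in prompts:
            await session.send(prompt)
            async for chunk in session.receive_turn():
                replies.append(chunk)
    return replies

if __name__ == "__main__":
    print(asyncio.run(run_turns(["hello there", "what is this"])))
```

Keeping a single session open across prompts is what lets follow-up questions reuse earlier context; a real client would stream audio or video frames instead of words.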

Search Live Expansion And Use Cases

Search Live now works in 200+ countries and territories with AI Mode, using Gemini 3.1 Flash Live for real-time voice and camera queries. AI Mode is available in Bengali, Gujarati, Kannada, Malayalam, Marathi, Odia, Tamil, Telugu, Urdu, and more.

Key features of Search Live include:

- Voice-activated conversation through the Google app

- Follow-up questions in ongoing sessions

- Camera input for context-aware queries

- Google Lens integration for visual interaction

- Helpful audio responses with supporting web links

This allows users to perform tasks that require real-time interaction, such as troubleshooting, learning, or investigating real-world objects.

Ecosystem And Integrations

Gemini 3.1 Flash Live delivers scalable infrastructure and partner integration for production environments:

- WebRTC-based systems for live voice and video

- Global edge routing for distributed applications

- Partner integrations for handling diverse input systems

Companies such as Verizon, LiveKit, and The Home Depot report positive results using the model in conversational workflows.

Safety And Content Authenticity

All generated audio includes a SynthID watermark imperceptibly embedded in the output. This enables the detection of AI-produced content, supporting transparency and reducing misinformation.

Availability

Gemini 3.1 Flash Live is available across multiple Google platforms.

- Developers: preview access via Gemini Live API in Google AI Studio

- Enterprises: Gemini Enterprise for Customer Experience Applications

- End users: Gemini Live and Search Live

- Global reach: Search Live is available in 200+ countries and territories with AI Mode.

- Languages: real-time conversation support in more than 90 languages

- Platforms: accessible via the Google app on Android and iOS, as well as through Google Lens for camera-based interactions.