A change in how developer tools handle user data is creating uncertainty across the technology community. The update to the GitHub Copilot policy has prompted many companies to re-evaluate their use of AI-assisted coding tools, particularly regarding the handling of sensitive material and intellectual property.

As AI tools become more deeply integrated into the development workflow, it is getting harder for companies to ignore the potential risk to their business. What was once perceived as a productivity enhancement has now become a compliance and security concern.

What the Change in Policy Means

The updated GitHub Copilot policy changes how data is processed, stored, and possibly reused by AI systems. While these changes are intended to enhance functionality and improve the model, they also raise concerns about how user data is used internally.

For businesses that rely on proprietary code, the mere possibility of data exposure may be enough of a concern. Developers routinely handle sensitive logic, confidential algorithms, and internally developed tools that could be exposed if appropriate safeguards are not in place.

The Growing Importance of AI Governance

The development of artificial intelligence (AI) has produced many new technologies that can significantly improve organizational efficiency. With this increased reliance on AI comes a growing need for governance: a set of guidelines and rules for how an organization uses AI.

Organizations must develop clear guidelines for using AI tools and have a monitoring plan in place. The key components of AI governance include:

1. Data Guidelines

2. Monitoring AI Output

3. Transparency of Operations

4. Compliance with all regulations governing the use of software

5. Control over how the organization processes data

Without these foundations in place, organizations risk losing all control over how their data is used and shared.

The Second Concern of AI Governance: Code Privacy

As AI adoption grows, code privacy has become one of the primary governance concerns. Developers are using AI tools like GitHub Copilot to write new code faster and more easily than ever before. However, most of these tools require developers to send code snippets to the service before it can generate suggestions.

If an AI service stores or reuses snippets provided by a developer or organization, sensitive data could be released inadvertently. Companies and developers who build proprietary software or handle client data face the highest risk of code privacy exposure.

Code privacy is important for protecting an organization’s intellectual property and for complying with data protection regulations.

Why Businesses Should Pay Attention

Many companies adopt artificial intelligence (AI) tools in a rush, focusing on improving productivity without understanding the associated risks. The recent changes to GitHub Copilot’s policy illustrate that convenience can come at the expense of security.

Organizations that do not audit their use of AI tools risk:

• Data leakage

• Compliance violations

• Loss of intellectual property assets

• Harm to their reputation

The risks are especially acute in sectors such as finance, healthcare, or technology, where data is highly sensitive and confidential.

Finding a Balance Between Productivity and Risk

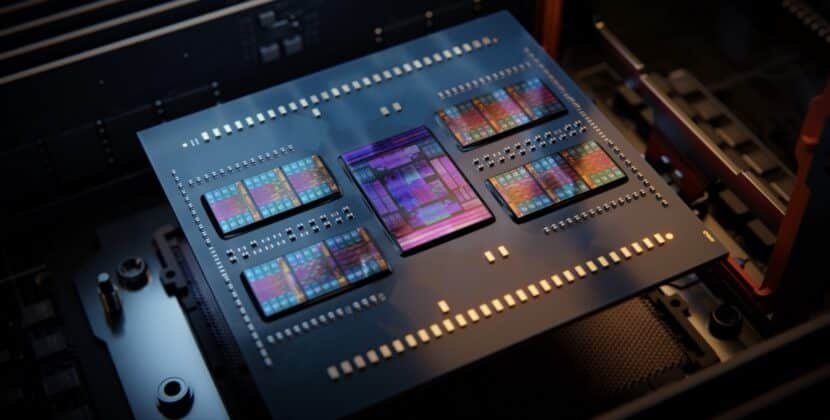

AI-powered tools offer undeniable benefits, including shorter development cycles and greater efficiency, but they also pose potential risks.

Organizations must put controls in place to gain the benefits of AI while minimizing the risk of exposure. Controls that need to be implemented include setting limits on which types of data should be used with AI tools and ensuring employees are educated on the proper usage policy.
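One way to picture a limit on which types of data may be used with AI tools is a small check that runs before any file content leaves the developer's machine. The sketch below is illustrative only: the blocked path patterns, the credential regexes, and the `may_share` function are hypothetical and not part of GitHub Copilot or any other tool, and a real policy would need a much broader rule set.

```python
import re
from fnmatch import fnmatch

# Hypothetical policy: path patterns that must never be shared with an AI tool.
BLOCKED_PATHS = ["secrets/*", "*.pem", "*.env", "clients/*"]

# Illustrative patterns for credential-like strings; not an exhaustive list.
SECRET_PATTERNS = [
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    re.compile(
        r"(?i)\b(api[_-]?key|password|secret|token)\b\s*[:=]\s*['\"][^'\"]+['\"]"
    ),
]


def may_share(path: str, content: str) -> tuple[bool, str]:
    """Return (allowed, reason) for sending a file's content to an AI tool."""
    # Rule 1: refuse anything located under a blocked path pattern.
    for pattern in BLOCKED_PATHS:
        if fnmatch(path, pattern):
            return False, f"path matches blocked pattern {pattern!r}"
    # Rule 2: refuse content that looks like it embeds a credential.
    for regex in SECRET_PATTERNS:
        if regex.search(content):
            return False, "content looks like it contains a credential"
    return True, "no policy rule matched"


if __name__ == "__main__":
    print(may_share("src/utils.py", "def add(a, b):\n    return a + b\n"))
    print(may_share("src/app.py", 'API_KEY = "abc123"'))
```

A check like this only catches what its rules describe, so it complements employee training rather than replacing it.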

Transparency’s Impact on AI Adoption

When it comes to the adoption of artificial intelligence (AI), transparency is becoming a critical piece of the puzzle. Companies want insight into how their data is being used, where it is stored, and who has access to it.

The present situation makes it clear that companies expect tool vendors to provide greater clarity around their data management practices. Trust in an AI system can be lost quickly if there is no transparency related to its operations.

A Larger Trend Throughout the Industry

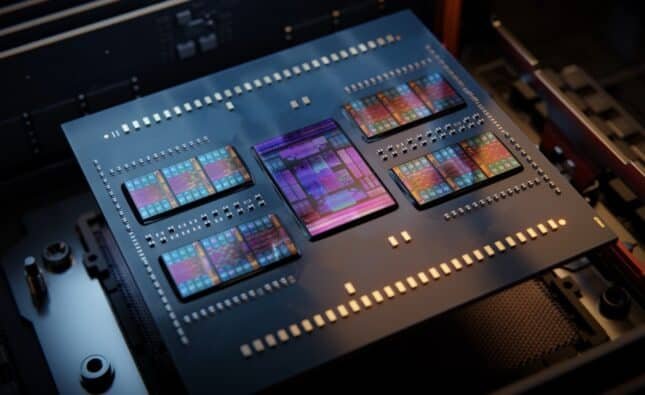

Concerns about the policy change are another example of a larger trend in technology. As AI becomes more capable, that added power raises further questions about oversight and regulation.

Both governments and organizations are taking steps to develop frameworks for overseeing AI use. These frameworks include rules for using data, protecting individual privacy, and holding users accountable.

How well organizations strike a balance between innovation and responsibility will determine the future of AI in software development.

Moving forward, some key steps include:

• Conducting periodic audits of how AI tools are operated

• Creating a comprehensive governance framework

• Developing best practices for employee training related to data security

• Staying current on regulatory changes impacting AI

The recent GitHub Copilot update may serve as a warning sign for the whole industry: although AI tools can be very powerful, they should be used cautiously and with oversight.
