Mountain View, CA,

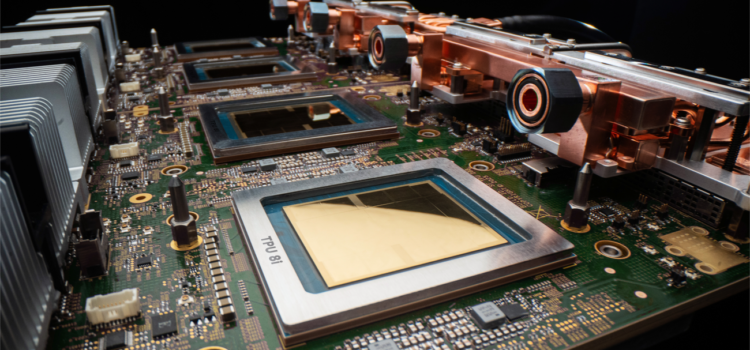

Atomic Answer: Fresh performance data for Google’s TPU 8i shows an 80% performance-per-dollar advantage for agentic workloads. This purpose-built architecture lets enterprise developers run autonomous reasoning workloads at a fraction of the cost of traditional general-purpose GPUs, specifically targeting the high cache footprint of training trillion-parameter reasoning models.

A major retail platform found that its AI customer service agents were costing more in infrastructure than they brought in during busy seasons. The models worked well, but the real issue was deeper: GPU clusters built for training couldn’t efficiently handle thousands of simultaneous inference requests from autonomous agents. The company’s review focused on one key metric: Google TPU 8i benchmarks. For companies running always-on AI agents, the cost of infrastructure is now just as important as the models’ performance. More businesses are paying attention to agentic inference OpEx because they realize that as AI systems spread across customer service, compliance, analytics, and automation, inference costs can quickly surpass training budgets.

Why Agentic AI Is Rewriting Infrastructure Economics

Traditional inference workloads were predictable. A user asked a question, the model responded, and compute demand spiked briefly before dropping again.

Agentic systems work differently. Autonomous agents are always retrieving data, handling subtasks, checking permissions, monitoring results, and starting new jobs without waiting for people. This constant activity changes the way hardware is used.

This is why Google TPU 8i benchmarks are now so important for enterprise procurement teams. Companies are looking beyond peak performance. They want steady efficiency across thousands of fast, simultaneous inference tasks.

Take a financial services firm that uses AI for fraud detection, regulatory checks, and client onboarding. Each task alone doesn’t max out the system, but together they put constant pressure on inference clusters. There are no idle cycles, so infrastructure efficiency directly affects operating margins.

As a result, executives are now focusing more on agentic inference OpEx than on headline benchmark scores.

The TPU Economics Shift

For years, companies saw GPUs as the standard for AI computing. But as inference-heavy workloads grow, that assumption is changing.

Now, the debate about TPU versus GPU costs is less about theoretical speed and more about how consistently each performs in real operations. GPUs are still flexible for training, but large-scale inference often requires uniform performance, reliable orchestration, and less interconnect overhead between components.

Google built TPU architectures specifically for tensor-heavy machine learning tasks. The TPU 8i generation is especially suited for agentic workloads, where inference requests arrive continuously rather than in short bursts.

A healthcare network illustrates this difference well. Its AI scheduling assistants manage tens of thousands of appointments every day. GPU arrays worked fine during busy times, but used too much energy during normal workloads because resources weren’t balanced. When the network tested a move to TPU infrastructure, it reportedly reduced wasted compute while still meeting response-time goals.

This type of stability directly affects how companies calculate the ROI of Google Cloud AI. More businesses are now asking whether specialized accelerators offer better long-term efficiency than general GPU fleets.
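The performance-per-dollar framing above can be made concrete with a little arithmetic. The sketch below is illustrative only: the hourly price and throughput are hypothetical inputs, not measured values, and it assumes an 80% performance-per-dollar advantage is read as 1.8x the work for the same spend.

```python
def cost_per_million_requests(hourly_price_usd: float, requests_per_second: float) -> float:
    """Cost in USD to serve one million requests at a sustained throughput."""
    if requests_per_second <= 0:
        raise ValueError("requests_per_second must be positive")
    requests_per_hour = requests_per_second * 3600
    return hourly_price_usd / requests_per_hour * 1_000_000


def relative_cost(perf_per_dollar_advantage: float) -> float:
    """Fraction of the baseline cost needed to do the same amount of work.

    An advantage of 0.80 means 1.8x the work per dollar, so the same
    work costs 1 / 1.8 of the baseline.
    """
    return 1.0 / (1.0 + perf_per_dollar_advantage)


# Hypothetical inputs, not measured values.
baseline = cost_per_million_requests(hourly_price_usd=10.0, requests_per_second=500.0)
print(f"baseline: ${baseline:.2f} per million requests")
print(f"same work at 80% advantage: {relative_cost(0.80):.1%} of baseline cost")
```

Under that reading, an 80% advantage corresponds to roughly a 44% lower bill for the same work, not an 80% lower one, which is worth keeping in mind when comparing vendor figures.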

How Vertex AI Optimization Changes Enterprise Deployment

Hardware is important, but it’s the orchestration software that decides if companies actually save money.

That’s why there’s more focus on Vertex AI optimization in enterprise AI operations. Companies using multi-agent systems need centralized workload balancing, automated deployment, and lifecycle management to keep inference costs under control. Without orchestration controls, even the best hardware can get expensive. A logistics company saw this during a recent demand spike. Their autonomous supply chain agents kept sending duplicate forecasting requests across separate business units, increasing inference traffic by almost 30%.

After consolidating workloads through Vertex AI optimization, the company reduced duplicate model calls and stabilized compute allocation during high-volume operation windows.
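One common way to cut duplicate model calls is to fingerprint each request and reuse the result when an identical request arrives again. The sketch below shows that general idea in plain Python. It is not the Vertex AI API, and `DedupingClient` and `fake_forecast` are hypothetical names used only for illustration.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class DedupingClient:
    """Wraps a model-call function and reuses results for identical requests."""

    def __init__(self, call_model: Callable[[Dict[str, Any]], Any]) -> None:
        self._call_model = call_model
        self._cache: Dict[str, Any] = {}
        self.calls_made = 0
        self.calls_saved = 0

    @staticmethod
    def _fingerprint(request: Dict[str, Any]) -> str:
        # Sorting keys makes logically identical requests hash the same.
        payload = json.dumps(request, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def predict(self, request: Dict[str, Any]) -> Any:
        key = self._fingerprint(request)
        if key in self._cache:
            self.calls_saved += 1
            return self._cache[key]
        self.calls_made += 1
        result = self._call_model(request)
        self._cache[key] = result
        return result


def fake_forecast(request: Dict[str, Any]) -> Dict[str, Any]:
    # Stand-in for a real inference call (hypothetical).
    return {"sku": request["sku"], "forecast": 100}


client = DedupingClient(fake_forecast)
client.predict({"sku": "A1", "horizon_days": 7})
client.predict({"horizon_days": 7, "sku": "A1"})  # same request, different key order
client.predict({"sku": "B2", "horizon_days": 7})
print(client.calls_made, client.calls_saved)
```

A production version would also need cache expiry, since forecasts go stale, and a shared store so that separate business units hit the same cache.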

This operational discipline increasingly separates profitable AI deployments from expensive experiments.

Why MoE Architectures Matter for TPU Performance

The rise of mixture-of-experts systems adds another layer to planning infrastructure.

Modern agentic environments now often use specialized reasoning pipelines in which only parts of a model activate for specific tasks. This makes things more efficient, but also adds complexity to hardware orchestration.
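The idea that only parts of a model activate per task is usually implemented with a gating step that picks a few experts for each input. The following is a minimal sketch of top-k routing in plain Python. The gate scores are hypothetical, and real systems run this on accelerators with batched tensors rather than lists.

```python
import math
from typing import List, Tuple


def softmax(scores: List[float]) -> List[float]:
    """Convert raw gate scores to probabilities (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores: List[float], k: int = 2) -> List[Tuple[int, float]]:
    """Return (expert_index, weight) for the k highest-scoring experts.

    Weights are renormalized so the selected experts sum to 1.
    """
    if not 1 <= k <= len(gate_scores):
        raise ValueError("k must be between 1 and the number of experts")
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    selected_mass = sum(probs[i] for i in top)
    return [(i, probs[i] / selected_mass) for i in top]


# Hypothetical gate scores for one token across four experts.
routes = route_top_k([0.1, 2.0, -1.0, 1.5], k=2)
print(routes)
```

Only the selected experts run for that input, which saves compute but means different inputs touch different weights. That is the source of the memory and communication pressure on the hardware.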

This challenge has prompted enterprises to pay closer attention to MoE model hardware performance. AI administrators want accelerators that can route workloads on the fly without causing memory bottlenecks or slowdowns.

TPU architectures seem particularly well-suited to these distributed inference patterns because they focus on efficient tensor communication across connected compute systems. Companies running customer service agents, cybersecurity monitors, and multilingual assistants simultaneously now see hardware design as a strategic issue, not just a technical one.

The phrase “Google TPU 8i performance per dollar for agentic workflows 2026” sums up this broader procurement shift. Companies are starting to judge infrastructure based on ongoing inference economics, not just one-off benchmark marketing.

The Enterprise Push Toward Predictable AI ROI

In the past year, executives have grown more cautious about AI infrastructure spending. Boards no longer sign off on unlimited accelerator expansion just for long-term AI potential. They want to see clear, measurable results.

This pressure is why Google Cloud AI ROI is now a key topic in infrastructure talks. CIOs are asking practical questions: How much revenue does each autonomous agent bring in for every dollar spent on inference? Which workloads really need top-tier acceleration? How much waste is hidden in orchestration pipelines?
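The first of those questions reduces to a simple ratio per workload. The sketch below ranks a few workloads by revenue per inference dollar. All names and figures are hypothetical placeholders, and a real analysis would also need an agreed way to attribute revenue to each agent.

```python
from dataclasses import dataclass


@dataclass
class AgentWorkload:
    name: str
    monthly_revenue_usd: float
    monthly_inference_cost_usd: float

    def revenue_per_inference_dollar(self) -> float:
        if self.monthly_inference_cost_usd <= 0:
            raise ValueError("inference cost must be positive")
        return self.monthly_revenue_usd / self.monthly_inference_cost_usd


# Hypothetical workloads and figures.
workloads = [
    AgentWorkload("customer_service", 120_000.0, 40_000.0),
    AgentWorkload("fraud_detection", 90_000.0, 15_000.0),
    AgentWorkload("forecasting", 20_000.0, 25_000.0),
]

# List the weakest workloads first so they get reviewed first.
for w in sorted(workloads, key=lambda w: w.revenue_per_inference_dollar()):
    ratio = w.revenue_per_inference_dollar()
    flag = "below break-even" if ratio < 1.0 else "ok"
    print(f"{w.name}: {ratio:.2f} ({flag})")
```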

More and more, the answers point toward infrastructure strategies that focus on steady inference efficiency rather than just maximum training scale.

This trend is also changing how companies negotiate cloud purchases. Businesses are starting to set aside specialized inference accelerator pools while keeping training environments in separate buying categories. It’s similar to how companies once split transactional databases from analytics systems during earlier cloud adoption.

Why TPU Benchmarks Will Shape AI Procurement Strategy

The bigger picture goes beyond just Google hardware. Enterprise AI is now in a phase where running things efficiently matters more than chasing new experiments.

Organizations that run large numbers of autonomous agents need infrastructure that manages speed, latency, energy use, and governance simultaneously. This makes Google TPU 8i benchmarks more important, as procurement leaders now judge hardware by long-term operational economics rather than one-off speed tests.

The discussion about agentic inference OpEx, TPU vs GPU costs, Vertex AI optimization, and MoE hardware indicates a shift in enterprise tech priorities. AI infrastructure is now less about raw computing power and more about reliable business efficiency.

In the coming years, the companies with the biggest AI advantage may not be the ones with the largest compute clusters. Instead, they’ll likely be the ones who know exactly how much it should cost to run intelligent automation at scale.

Executive Procurement List:

- The article explains how Google TPU 8i benchmarks improve enterprise AI inference efficiency.

- The article explores why Agentic Inference OpEx is becoming a major concern for enterprises.

- The article compares TPU vs GPU cost models for large-scale AI workloads.

- The article highlights how Vertex AI optimization reduces duplicate inference operations.

- The article examines how MoE model hardware influences long-term Google Cloud AI ROI.

Source: News, tips, and inspiration to accelerate your digital transformation