Redmond, WA

Atomic Answer: Microsoft’s new “Secure and administer” protocols for Microsoft 365 Copilot agents establish an AI governance framework for autonomous “digital labor”. This shift pushes IT procurement to prioritize “agent-ready” GPU clusters that can handle the bursty inference demands of many concurrent autonomous agents.

A Fortune 500 infrastructure team found that almost 40% of its GPUs were unused during peak licensing renewals. The problem wasn’t a lack of demand for AI, but administrative fragmentation. Different business units set up their own AI Copilots, duplicated inference pipelines, and allocated too much compute to governance tasks that rarely used full capacity. This mismatch is now a key reason why Microsoft 365 Copilot agents are changing how companies approach hardware strategy.

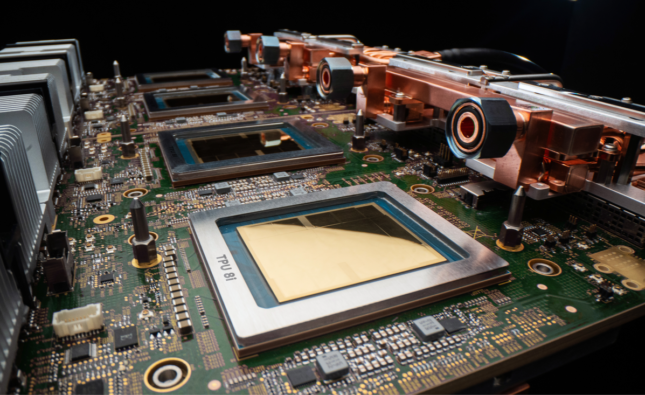

With the agentic AI admin model, procurement decisions have moved from asking how many GPUs are needed to deciding which workloads should get the best acceleration. This matters because companies now buy AI infrastructure not just for training models, but also to run dozens of connected software agents that handle compliance, productivity, cybersecurity, and workflow automation.

The Administrative Layer Is Becoming the Real AI Bottleneck

At first, companies viewed AI spending mainly in terms of model performance—faster inference, wider context windows, and more parameters. Now, CIOs face a new challenge. AI agents need ongoing orchestration, policy enforcement, audit logging, and identity checks, all of which use significant compute resources.

This is where Microsoft 365 Copilot agents have changed how companies plan their AI and IT infrastructure. By promoting agent-driven workflows, Microsoft encourages businesses to run ongoing AI systems that handle tasks such as scheduling meetings, summarizing legal documents, automating ticket routing, and monitoring for issues simultaneously.

In the past, companies bought GPUs mainly for training clusters or analytics pipelines. Now, with the agentic AI admin approach, there’s a continuous management layer. This change raises the baseline compute demand, even if user-facing AI activity seems low.

A multinational bank shows this in action. Its internal assistants used less computing power for regulatory paperwork than the governance systems did for checking access permissions and monitoring data risks. The oversight systems ended up costing more than the applications themselves.

Why GPU Procurement Is Moving Toward Administrative Priorities

Hardware vendors used to focus on raw performance. Now, enterprise buyers care more about how efficiently systems can orchestrate tasks, support governance, and balance workloads across many agents.

This shift explains the growing relevance of GPU orchestration in enterprise procurement strategy. Companies deploying thousands of AI agents cannot tolerate fragmented allocation policies that leave expensive accelerators stranded in isolated departments.

Instead, enterprises increasingly consolidate compute pools under centralized AI administration teams. These teams operate much like cloud financial management divisions did during the early era of public cloud expansion.
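
The effect of consolidation can be illustrated with a small calculation. The sketch below is only an illustration: the business units, their GPU counts, and the 20% headroom are hypothetical figures chosen to mirror the roughly 40% idle rate mentioned earlier, and the pooled estimate conservatively assumes every unit peaks at the same time.

```python
from dataclasses import dataclass


@dataclass
class BusinessUnit:
    name: str
    allocated_gpus: int  # GPUs reserved for this unit (hypothetical figure)
    peak_busy_gpus: int  # GPUs actually busy at the unit's peak (hypothetical figure)


def stranded_fraction(units):
    """Share of allocated GPUs that sit idle when every unit keeps its own pool."""
    allocated = sum(u.allocated_gpus for u in units)
    busy = sum(min(u.peak_busy_gpus, u.allocated_gpus) for u in units)
    return (allocated - busy) / allocated


def pooled_requirement(units, headroom_pct=20):
    """GPUs a shared pool needs if all peaks coincide, plus a safety margin.

    Integer arithmetic is used so the result is rounded up exactly.
    """
    total_peak = sum(u.peak_busy_gpus for u in units)
    return (total_peak * (100 + headroom_pct) + 99) // 100


units = [
    BusinessUnit("legal", allocated_gpus=40, peak_busy_gpus=20),
    BusinessUnit("hr", allocated_gpus=30, peak_busy_gpus=18),
    BusinessUnit("security", allocated_gpus=30, peak_busy_gpus=22),
]

print(f"stranded today: {stranded_fraction(units):.0%}")
print(f"shared pool size: {pooled_requirement(units)} GPUs")
```

With these made-up numbers, 40 of the 100 allocated GPUs are idle, while a shared pool of 72 GPUs would cover the combined peak with 20% headroom.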

The emergence of secure AI agents intensifies this trend. Security teams require uninterrupted monitoring, encrypted inference handling, and real-time compliance validation. These safeguards increase GPU utilization because security processes now run alongside primary AI workloads rather than as separate, after-the-fact checks.

The pressure becomes even more pronounced during large-scale rollout phases. A healthcare network deploying AI-assisted patient scheduling across two hundred facilities may trigger thousands of simultaneous agent interactions each day. Each interaction generates authentication checks, governance reviews, and contextual retrieval operations.

This operational reality has accelerated the demand for AI-centric scaling models in which enterprises treat AI infrastructure as a continuously optimized production system rather than a collection of isolated projects.

How Microsoft Is Positioning AI Administration

Microsoft understands that enterprise AI adoption depends less on flashy demos and more on operational trust. That strategy explains the company’s investment in governance tooling surrounding Microsoft 365 Copilot agents.

The wider ecosystem is adapting as well. MSFT Virtual Training programs now focus more on AI administration, compliance automation, and agent governance than on prompt engineering alone. Companies are looking for administrators who can manage connected AI systems across departments, not just data scientists and software developers.

Traditional DevOps teams optimized for development speed. Modern AI operations require lifecycle oversight for continuously adaptive agents. As a result, agentic DevOps is emerging as a dedicated discipline focused on monitoring behavior drift, identifying anomalies, and orchestrating dependencies.

Administrative workloads now directly affect how companies buy infrastructure. CIOs are choosing GPUs based on how well they support governance, not just on top performance numbers.

The Economics Behind Enterprise AI Governance

The economics are simple. Every additional AI agent costs money, and poorly managed agents can expose a company to legal risk. As a result, companies now value reliable governance performance more than theoretical maximum compute. This shift changes how procurement processes judge hardware vendors and cloud providers.

For example, a manufacturing company using AI for supply chain forecasting might reserve its top GPU resources for governance monitoring during peak demand cycles or times of political uncertainty. These tasks require quick responses, as delays in compliance checks can stall important decisions.
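
One way to picture this kind of reservation is a priority queue in which governance work always runs before other jobs. The sketch below is a minimal illustration, not a description of any Microsoft or vendor scheduler: the three priority classes, the `GpuQueue` name, and the task names are all hypothetical.

```python
import heapq
from itertools import count

# Hypothetical priority classes: a lower number is served first.
GOVERNANCE, INTERACTIVE, BATCH = 0, 1, 2


class GpuQueue:
    """Hands out queued tasks in priority order, FIFO within each class."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves submission order

    def submit(self, priority, task):
        heapq.heappush(self._heap, (priority, next(self._arrival), task))

    def next_task(self):
        """Return the most urgent task, or None when the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


queue = GpuQueue()
queue.submit(BATCH, "forecast-retrain")
queue.submit(GOVERNANCE, "compliance-check")
queue.submit(INTERACTIVE, "planner-query")
queue.submit(GOVERNANCE, "access-audit")

while (task := queue.next_task()) is not None:
    print(task)
```

Here both governance tasks are dispatched before the interactive query and the batch retraining job, even though the batch job was submitted first. A production scheduler would also need preemption and quotas, which this sketch leaves out.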

The term “enterprise secure administration of Microsoft 365 Copilot Agents 2026” is starting to capture this new operational need. Companies want centralized oversight systems that can scale across regions without slowing governance.

That objective influences more than hardware purchases. It also shapes energy strategies, network design, and vendor choices. Since AI governance tasks often run nonstop, companies have to consider how they consume and manage power in computing environments where administrative efficiency determines infrastructure value as much as raw processing capability.

Why the AI Procurement Conversation Will Continue to Shift

The market now sees AI agents less as separate software tools and more as digital labor systems. This new view changes the entire approach.

Companies rolling out Microsoft 365 Copilot agents at scale need ongoing orchestration systems that manage permissions, compliance, workflow escalation, and real-time teamwork between agents. These needs make the agentic AI admin a key part of enterprise strategy, not just a support role.

This also explains why enterprises increasingly pursue GPU orchestration, secure AI agents, and AI-centric scaling initiatives together rather than independently. The technologies depend on one another operationally.

In the coming years, procurement leaders will likely judge GPU investments based on governance-focused metrics rather than performance alone. Things like how well infrastructure is used, how quickly policies are enforced, and how stable multi-agent systems are may matter more than traditional speed tests.
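
As a rough illustration, the three metrics named above could be computed from operational logs along these lines. Everything in this sketch is an assumption made for the example: the `GovernanceWindow` fields, the choice of a nearest-rank 95th percentile for policy latency, and the definition of stability as the share of multi-agent runs that completed without failure.

```python
from dataclasses import dataclass


@dataclass
class GovernanceWindow:
    """Hypothetical measurements collected over one reporting window."""
    gpu_hours_available: float
    gpu_hours_used: float
    policy_latencies_ms: list  # time taken to enforce each policy decision
    agent_runs: int            # multi-agent workflows started
    agent_runs_failed: int     # workflows that did not complete cleanly


def p95(values):
    """Nearest-rank 95th percentile, using integer math for the rank."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n)
    return ordered[rank - 1]


def scorecard(window):
    """Summarize utilization, policy enforcement latency, and stability."""
    return {
        "utilization": window.gpu_hours_used / window.gpu_hours_available,
        "policy_p95_ms": p95(window.policy_latencies_ms),
        "stability": 1 - window.agent_runs_failed / window.agent_runs,
    }


window = GovernanceWindow(
    gpu_hours_available=1000.0,
    gpu_hours_used=750.0,
    policy_latencies_ms=list(range(10, 210, 10)),  # 10, 20, ..., 200 ms
    agent_runs=200,
    agent_runs_failed=5,
)
print(scorecard(window))
```

A scorecard like this lets two hardware options be compared on how they behave under governance load rather than on peak throughput alone.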

This change has broader consequences for tech leaders. Companies that treat AI administration as a minor issue may end up spending too much on compute and not enough on governance. On the other hand, those that adopt agentic DevOps and strong oversight will likely get more value from their hardware.

The companies that benefit most from AI won’t always be the ones with the most GPUs. Instead, they’ll be the ones with the best systems for managing them.

Executive Procurement Checklist:

- Examines how Microsoft 365 Copilot agents are changing GPU procurement priorities.

- Explains why AI governance now consumes major compute resources.

- Explores the rise of secure AI agents and GPU orchestration.

- Highlights the growing role of agentic DevOps and MSFT Virtual Training.

- Discusses how enterprises are scaling AI infrastructure for long-term operational efficiency.

Source: Go deep on real code and real systems at Microsoft Build