A one-second delay in a factory safety system can mean halted production or worse, yet many enterprises still rely on centralized processing that introduces latency at the worst possible moment. Microsoft is addressing this gap by extending Azure AI capabilities into environments where decisions must be made instantly, pushing intelligence closer to devices through edge computing and strengthening the foundation for distributed AI systems.

Why Real-Time AI Demands New Architecture

Moving Beyond Centralized Models

Conventional cloud processing sends data from devices to central servers, which then return insights. This process works for analytics, but is too slow for situations where timing is critical. Industrial robots, self-driving cars, and live video analysis need responses in just milliseconds.

Microsoft’s solution creates a cloud-edge infrastructure that handles data locally while still working with central systems. This combination enables real-time AI without giving up the scalability of the cloud. It also saves bandwidth by filtering and summarizing data at the edge before sending it on.
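To make the bandwidth point concrete, here is a minimal sketch of reducing a batch of raw readings on the device before upload. The function name `summarize_at_edge`, the thresholds, and the sample values are illustrative assumptions, not part of any Azure API.

```python
from statistics import mean


def summarize_at_edge(readings, low, high):
    """Reduce a batch of raw readings to a compact summary.

    Only the summary and any out-of-range values would be uploaded;
    the raw batch stays on the device. (Hypothetical helper.)
    """
    if not readings:
        raise ValueError("readings must not be empty")
    outliers = [r for r in readings if r < low or r > high]
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": mean(readings),
        "outliers": outliers,
    }


# Illustrative temperature readings; the bounds are assumed values.
batch = [20.1, 20.3, 19.9, 35.2, 20.0]
summary = summarize_at_edge(batch, low=15.0, high=30.0)
print(summary)
```

In this sketch, five readings collapse into one small record, and only the single out-of-range value is forwarded in full.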

Microsoft Azure AI and Edge Computing AI

Bringing Intelligence Closer to Action

Microsoft has made Azure AI work smoothly in edge environments. This means it can connect to IoT devices, on-site servers, and locations with unreliable internet access. The main goal is to let edge AI work on its own when needed while still staying connected for updates and management.
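One common way to let an edge device keep working through an unreliable connection is a store-and-forward buffer. The sketch below is a generic illustration under that assumption; the class `StoreAndForwardBuffer` and the `send` callbacks are hypothetical and do not represent how Azure services implement this.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Queue messages locally and deliver them when the link is available."""

    def __init__(self, max_items):
        # A bounded deque drops the oldest message once the buffer is full.
        self._pending = deque(maxlen=max_items)

    def record(self, message):
        self._pending.append(message)

    def flush(self, send):
        """Send queued messages in order; stop at the first failure.

        `send` is a callable that returns True on success.
        Returns the number of messages delivered.
        """
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._pending)


delivered = []


def offline_send(message):
    return False  # simulates a dropped connection


def online_send(message):
    delivered.append(message)
    return True


buffer = StoreAndForwardBuffer(max_items=3)
for seq in range(5):
    buffer.record({"seq": seq})

sent_offline = buffer.flush(offline_send)  # nothing leaves the device
sent_online = buffer.flush(online_send)    # backlog drains once connected
print(sent_offline, sent_online, delivered)
```

The bounded buffer is a deliberate trade-off: on a device with limited storage, losing the oldest messages is usually preferable to running out of memory during a long outage.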

Take a logistics company with a fleet of delivery vehicles. Rather than sending all data to the cloud, the vehicles’ systems analyze routes, traffic, and driver habits right on board. This allows for instant decisions, making things safer and more efficient. At the same time, summary data is sent to central systems for bigger picture analysis.

Distributed AI Systems in Practice

Coordinating Intelligence Across Locations

Distributed AI systems are maturing, and decisions are increasingly made in many places rather than one. Instead of relying on a single processing center, intelligence is spread across many nodes. Each node handles its own task while also contributing to the whole system.

The model is particularly effective for low-latency systems. For example, in smart manufacturing, sensors detect anomalies and trigger immediate responses without waiting for cloud confirmation. At the same time, data flows to centralized platforms for long-term optimization.
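The smart manufacturing pattern can be sketched as a detector that runs entirely on the device and acts before anything is sent upstream. The rolling z-score used here is one simple technique chosen for illustration; the class name, window size, threshold, and the `stop_line` action are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class LocalAnomalyDetector:
    """Flag readings that deviate strongly from a rolling baseline."""

    def __init__(self, window=20, threshold=3.0, min_samples=5):
        self.history = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def check(self, value):
        """Return True if `value` is anomalous relative to recent history."""
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep anomalies out of the baseline so they do not mask later ones.
            self.history.append(value)
        return is_anomaly


detector = LocalAnomalyDetector()
actions = []      # immediate local responses
cloud_queue = []  # readings forwarded later for long-term optimization

for reading in [50.0, 50.2, 49.8, 50.1, 49.9, 50.0, 72.5, 50.1]:
    if detector.check(reading):
        actions.append(("stop_line", reading))  # no cloud round trip needed
    cloud_queue.append(reading)

print(actions)
```

The local action and the cloud upload are decoupled: the response happens in the loop, while the queue can be drained whenever connectivity allows.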

Microsoft’s architecture supports this balance by enabling consistent model deployment across environments. Developers can build once and deploy across the cloud and edge with minimal changes, simplifying enterprise AI deployment.

Cloud Edge Infrastructure and Performance Gains

Reducing Latency Without Losing Scale

Latency remains the defining challenge for real-time applications. By extending cloud edge infrastructure, Microsoft reduces the physical and network distance between data and computation. This directly improves response times.
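A rough calculation shows why physical distance matters. Light travels through optical fiber at roughly 200,000 km/s, about two-thirds of its speed in a vacuum, which sets a floor on round-trip time. The distances below are assumed for illustration, and the result ignores routing, queuing, and processing delays, so real latency will be higher.

```python
# Approximate signal speed in optical fiber (about two-thirds of c).
FIBER_SPEED_KM_PER_S = 200_000


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Illustrative distances, not measurements of any real deployment.
scenarios = [
    ("regional cloud, 1500 km away", 1500),
    ("on-site edge node, 1 km away", 1),
]
for label, km in scenarios:
    print(f"{label}: {min_round_trip_ms(km):.2f} ms")
```

Under these assumptions the cloud round trip costs at least 15 ms before any computation happens, while the on-site node adds about 0.01 ms.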

The advantages go beyond speed. Processing data locally means systems don’t always need to be online, which is important for remote or mobile settings. It also keeps sensitive data closer to where it’s generated, which improves security by reducing how much of it travels over the network.

Industries like healthcare and finance, where privacy is crucial, can use this method to comply with regulations while still performing well. Combining local processing with central control makes systems more resilient and reliable.

Gaming Infrastructure AI as a Test Case

Real-Time Demands at Scale

Few workloads demand faster responses than gaming. Multiplayer environments require synchronization across thousands of players, often in real time. Microsoft’s investment in gaming infrastructure AI highlights how edge capabilities can support these requirements.

When AI models are placed closer to players, systems can better predict player actions, reduce lag, and improve the experience. This also helps create content that adapts to what players do.

What works in gaming can also help other fields. Any application that needs instant feedback can use the same architecture, including augmented reality, live streaming, and interactive training.

AI Compute and Resource Optimization

Balancing Power and Efficiency

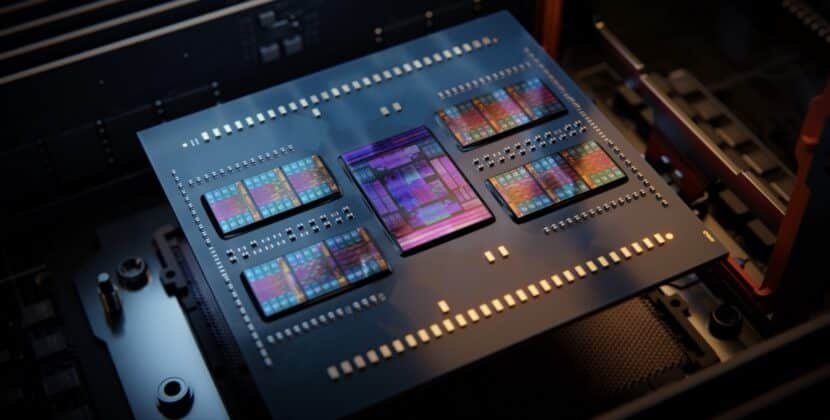

Deploying AI at the edge introduces new challenges around AI compute. Devices must process complex models without the resources of large data centers. Microsoft addresses this by optimizing models for efficiency and enabling flexible deployment options.

Companies can decide where to run each workload based on its needs. Time-critical tasks can run locally while less urgent ones go to the cloud. This way, cloud resources are used effectively, and edge devices aren’t overloaded.

Managing resources well also cuts costs. By forwarding only the data that is needed, companies avoid unnecessary data transfer and storage fees.
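The placement decision described above can be sketched as a simple rule: a task whose deadline is shorter than the cloud round trip must run at the edge, and everything else goes to the cloud. The `Task` fields, the capacity model, and the numbers are hypothetical; a real scheduler would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    max_latency_ms: float  # deadline the task must meet
    est_edge_load: float   # assumed fraction of edge capacity used (0 to 1)


def place_tasks(tasks, cloud_round_trip_ms, edge_capacity=1.0):
    """Assign each task to "edge", "cloud", or "unplaced"."""
    placement = {}
    remaining = edge_capacity
    # Tightest deadlines first, so they get edge capacity before others.
    for task in sorted(tasks, key=lambda t: t.max_latency_ms):
        needs_edge = task.max_latency_ms < cloud_round_trip_ms
        if needs_edge and task.est_edge_load <= remaining:
            placement[task.name] = "edge"
            remaining -= task.est_edge_load
        elif needs_edge:
            # Deadline cannot be met with the capacity left; needs attention.
            placement[task.name] = "unplaced"
        else:
            placement[task.name] = "cloud"
    return placement


tasks = [
    Task("safety_interlock", max_latency_ms=10, est_edge_load=0.3),
    Task("defect_detection", max_latency_ms=50, est_edge_load=0.5),
    Task("weekly_report", max_latency_ms=60_000, est_edge_load=0.4),
]
placement = place_tasks(tasks, cloud_round_trip_ms=80)
print(placement)
```

With an assumed 80 ms cloud round trip, the two time-critical tasks stay on the device and the report runs in the cloud, leaving spare edge capacity.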

Enterprise AI Development Strategies

Integrating Edge and Cloud

Successful enterprise AI development requires a clear strategy that integrates edge and cloud environments. Microsoft provides tools that simplify this process, allowing organizations to manage models, monitor performance, and update systems from a central platform.

Key considerations include:

- Identifying workloads that require real-time processing.

- Determining data residency and compliance requirements.

- Balancing cost, performance, and scalability.

By addressing these factors, enterprises can build systems that deliver consistent performance across environments. The integration of edge and cloud also supports future scalability as workloads evolve.

The Role of Low-Latency Systems in Competitive Advantage

Speed as a Differentiator

Speed is no longer just a technical metric; it is a business differentiator. Companies that deploy low-latency systems can respond to events faster, improve customer experiences, and reduce operational risks.

For example, in retail, real-time inventory tracking can prevent stockouts and optimize supply chains. In energy, immediate analysis of grid data can prevent outages. These capabilities depend on the ability to process data at the edge while maintaining coordination with centralized systems.

Microsoft’s focus on real-time AI positions it to support these use cases effectively. By combining edge and cloud capabilities, the company enables organizations to act on data as it is generated.

Resources and Further Reading

For those evaluating edge AI strategies, the following resources provide deeper insights:

- Microsoft Azure AI documentation and product updates.

- Azure IoT Edge and hybrid cloud deployment guides.

- Case studies on edge AI in manufacturing and healthcare.

- Research papers on distributed AI systems and latency optimization.

Together, they offer practical guidance on adopting and operating edge AI solutions.

Looking Ahead: The Future of Real-Time Intelligence

Microsoft’s move into edge computing reflects a broader shift in how AI systems are built and used. The focus is now on making systems faster, more resilient, and more flexible.

As distributed AI systems mature and cloud-edge infrastructure evolves, organizations will increasingly rely on intelligence that operates at the edge, where data is generated. Those who align their strategies with this direction will gain an operational edge, not through larger models, but through faster, more precise decisions.

Source: Azure Updates