SANTA CLARA, Calif. —

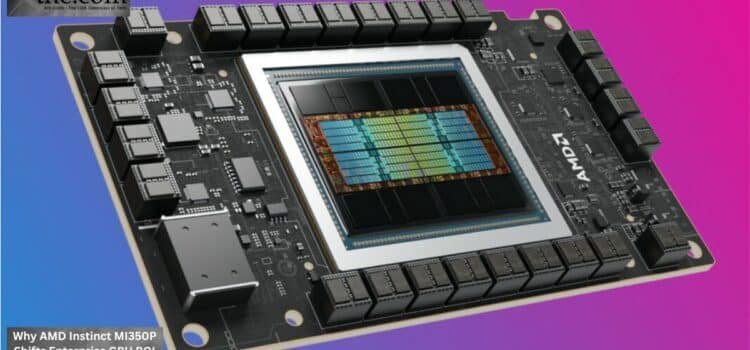

Atomic Answer: AMD has launched the Instinct MI350P PCIe GPU, positioned as a “drop-in” upgrade for existing enterprise racks. The MI350P pairs HBM3e memory with the ROCm 7.0 software stack, letting companies expand their inference clusters without the major cooling changes that high-wattage OAM modules require.

The AMD Instinct MI350P PCIe accelerator gives companies a scalable way to expand AI capabilities without upgrading their current rack infrastructure. Built on HBM3e memory and the ROCm 7.0 software environment, it allows enterprises to build inference clusters on standard PCIe platforms without expensive liquid cooling. Enterprise AI buyers currently place greater emphasis on efficient infrastructure, cost predictability, and rapid time-to-deployment. That shift has created strong demand for accelerators that integrate into standard enterprise IT environments without extensive data center changes.

The AMD Instinct MI350P arrives in this segment at a strategic time. Rather than focusing solely on hyperscale model training, AMD is aiming the accelerator at practical enterprise AI workloads for companies that run standard x86 server farms.

The Importance of Deploying PCIe

Compatibility with existing hardware infrastructure has been a key factor keeping traditional companies from adopting AI. Many high-performance accelerators require liquid cooling, power redesign, and custom rack configurations, all of which greatly raise implementation costs.

A PCIe card avoids much of that overhead. The MI350P supports PCIe Gen5 deployment in air-cooled racks, making adoption easier for enterprises that cannot redesign their data centers from scratch.

Main Benefits of PCIe GPUs

- Simpler integration into legacy enterprise racks

- Significantly lower cooling costs

- More rapid procurement and setup

- Higher compatibility with existing x86 platforms

This approach can be very useful for banks, telecoms, healthcare institutions, logistics companies, and other critical sectors where even brief downtime can cause serious disruption.

The Transition to Inference Clusters

Corporate demand is shifting from foundational model training towards inference. Most firms use AI copilots, automation, customer service, and analytics solutions that rely on steady inference clusters running 24/7.

As a result, enterprises are evaluating accelerators differently. The AMD Instinct MI350P is designed around sustained inference scalability rather than peak training benchmarks.

Requirements for Enterprise Inference

- Fast response generation

- Continuous 24/7 performance reliability

- Efficient energy consumption by AI servers

- Multi-user scalability

- Long-term operational cost predictability

In contrast to previous designs, the AMD Instinct MI350P targets all of these factors. Rather than optimizing for extreme training capability, AMD aims to provide sustainable inference scalability for enterprise AI deployments.

HBM3e and Performance Efficiency

Memory bandwidth is emerging as a key bottleneck for inference tasks. Modern large language models and multimodal architectures must have fast access to memory to keep latency levels low even under high inference loads.

AMD addresses this challenge by pairing HBM3e memory with the ROCm 7.0 software stack in a drop-in PCIe card.

Advantages of HBM3e Memory

- Increased bandwidth for accelerating inference

- Reduced latency when executing AI tasks

- High energy efficiency per workload

- Effective handling of simultaneous enterprise queries

- Superior performance per watt characteristics

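Because higher bandwidth and better performance per watt let each card serve more queries for the same power, HBM3e can also help enterprises reduce operational costs.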
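The bandwidth bottleneck described in this section can be illustrated with a rough back-of-envelope estimate. The sketch below assumes a simplified decode model in which generating each token requires reading every model weight from memory once, so memory bandwidth divided by model size gives an upper bound on single-stream token rate. The bandwidth values, model size, and precision are hypothetical figures chosen for illustration and are not MI350P specifications.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float,
                            params_billion: float,
                            bytes_per_param: float) -> float:
    """Upper bound on single-stream decode rate under the simplifying
    assumption that each generated token reads all weights once."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB = size in GB
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


# Hypothetical inputs for illustration only; not vendor specifications.
for bandwidth in (1000.0, 2000.0, 4000.0):
    rate = max_decode_tokens_per_s(bandwidth, params_billion=70, bytes_per_param=1.0)
    print(f"{bandwidth:.0f} GB/s -> at most {rate:.1f} tokens/s per stream")
```

Under this model the bound scales linearly with bandwidth, which is why memory technology matters so much for inference latency. Real deployments batch requests and cache intermediate state, so measured throughput will differ.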

How ROCm 7.0 Changes AMD’s Software Perspective

In the past, Nvidia maintained its leading position thanks to its well-developed CUDA ecosystem. Many companies were hesitant to move away from their existing GPU infrastructure because of potential software compatibility issues.

ROCm 7.0 changes this conversation. The open software stack now offers improved framework support and stronger enterprise deployment capabilities, including compatibility with standard x86 servers.

ROCm 7.0 Advantages

- Expanded capabilities in PyTorch and TensorFlow

- Higher compatibility with AI open-source frameworks

- Better deployment capabilities for enterprise users

- More optimized performance on inference clusters

- Greater flexibility for developers

ROCm 7.0's improved compatibility with standard x86 servers makes AMD an increasingly viable option for organizations running conventional enterprise server infrastructure.
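As a first step in checking software readiness, a team might confirm which backend its PyTorch build targets. The sketch below assumes PyTorch is the framework in use. It relies on two behaviors of PyTorch: ROCm builds expose AMD GPUs through the `torch.cuda` namespace, and `torch.version.hip` is set on ROCm builds and is `None` otherwise. The helper name `describe_accelerator_backend` is hypothetical, and nothing here is specific to ROCm 7.0 or the MI350P.

```python
def describe_accelerator_backend() -> dict:
    """Report whether PyTorch is installed, whether it is a ROCm (HIP)
    build, and whether any GPU is visible to it."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed in this environment.
        return {"torch_installed": False, "hip_version": None, "gpu_visible": False}

    return {
        "torch_installed": True,
        # A version string on ROCm builds, None on CUDA or CPU-only builds.
        "hip_version": getattr(torch.version, "hip", None),
        # ROCm builds report AMD GPUs through the torch.cuda API.
        "gpu_visible": bool(torch.cuda.is_available()),
    }


print(describe_accelerator_backend())
```

A check like this only shows that the stack is installed and sees a device. Validating a real migration from CUDA still requires running the actual models and comparing outputs and performance.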

The comparison between the AMD MI350P and the Nvidia L40S on inference total cost of ownership (TCO) is therefore becoming more relevant for enterprise procurement teams evaluating long-term AI deployment economics.

Enterprise ROI and Cost Optimization

The economics of AI adoption are evolving quickly. Many companies first adopted cloud AI solutions because they offered instant scalability without upfront investment. But per-token pricing is now becoming very costly for enterprises that require constant processing power.

Dedicated inference clusters provide more stable economics over extended periods.

Possible ROI Advantages

- Decreased monthly inference expenses

- Less reliance on cloud GPU rentals

- Increased ownership of resources

- Enhanced workload reliability

- Higher data sovereignty and compliance

Industry discussions increasingly focus on how the AMD Instinct MI350P PCIe drop-in upgrade could deliver 35% lower TCO for enterprise inference than cloud-based GPU token rental, as enterprises compare local inference infrastructure with recurring cloud AI costs.

This will be especially useful for companies that use AI-powered agents within their operations on a daily basis.
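The cloud-versus-local comparison above comes down to simple arithmetic, sketched below. Every input is a hypothetical placeholder: the token volume, per-token price, hardware cost, amortization period, and monthly operating cost were chosen so that the result lands on the 35% figure cited in this article. They are not AMD data or real price quotes, and the function names are illustrative.

```python
def monthly_cloud_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Recurring cost of renting inference by the token."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_local_cost(hardware_cost: float, amortization_months: int,
                       monthly_opex: float) -> float:
    """Amortized hardware cost plus power, cooling, and staffing per month."""
    return hardware_cost / amortization_months + monthly_opex


def savings_percent(cloud: float, local: float) -> float:
    """Percentage saved by running locally instead of in the cloud."""
    return (cloud - local) / cloud * 100


# Hypothetical inputs, selected only to illustrate a 35% saving.
cloud = monthly_cloud_cost(tokens_per_month=20_000_000_000, price_per_million_tokens=2.0)
local = monthly_local_cost(hardware_cost=720_000, amortization_months=36, monthly_opex=6_000)

print(f"cloud: ${cloud:,.0f}/month, local: ${local:,.0f}/month")
print(f"saving: {savings_percent(cloud, local):.0f}%")
```

The result is highly sensitive to utilization. If token volume drops, the cloud bill falls with it while the amortized hardware cost does not, so the saving shrinks and can turn negative.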

Infrastructure Challenges Remain

Despite the MI350P's streamlined deployment, businesses should not expect scaling AI to become trivial. High-density GPU configurations still draw substantial power and generate substantial heat.

Typical Infrastructure Challenges

- Thermal buildup at the rack level

- PSU constraints in legacy server models

- Airflow optimization requirements

- Requirement for rear-door cooling systems

- Higher power usage due to constant workloads

AMD advises auditing server power supplies before deployment to ensure each GPU slot supports at least 450W.

Overlooking such considerations may lead to operational instability as enterprises scale their inference clusters across different sites.
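A power audit of this kind can start as a simple inventory check. The sketch below flags any GPU slot whose available power falls below the 450W floor mentioned above. The `Slot` record, the server names, and the wattage values are hypothetical. In practice the figures would come from vendor documentation or a hardware management tool.

```python
from dataclasses import dataclass

MIN_SLOT_WATTS = 450  # per-GPU-slot floor cited in this article


@dataclass
class Slot:
    server: str             # hypothetical server identifier
    slot: int               # PCIe slot index within the server
    watts_available: float  # power the slot can deliver to a card


def failing_slots(slots, minimum=MIN_SLOT_WATTS):
    """Return the slots that cannot supply the minimum power envelope."""
    return [s for s in slots if s.watts_available < minimum]


# Hypothetical inventory for illustration only.
inventory = [
    Slot("rack1-node01", 0, 600),
    Slot("rack1-node01", 1, 300),
    Slot("rack1-node02", 0, 450),
]

for s in failing_slots(inventory):
    print(f"{s.server} slot {s.slot}: {s.watts_available:.0f}W is below {MIN_SLOT_WATTS}W")
```

A per-slot check is only the first pass. The total draw of all populated slots also has to fit within the server's PSU capacity and the rack's power and cooling budget.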

Industry-Wide Implications

The launch of the AMD Instinct MI350P reflects a broader transformation in enterprise AI purchasing behavior.

This shift favors businesses that want to deploy AI in production rather than experiment with computational scale.

Impacts at the Market Level

- Increasing enterprise PCIe accelerator utilization

- Higher emphasis on air-cooled AI infrastructures

- Regional growth in inference deployments

- Growing preference for efficient enterprise AI servers

- Increased competitiveness against hyperscale cloud economics

Server vendors and colocation companies have already begun catering to this movement by developing infrastructure tailored for enterprise inference, not just hyperscale training clusters.

Conclusion

The AMD Instinct MI350P is not just another enterprise GPU introduction. It embodies a broader industry shift toward infrastructure-efficient enterprise AI deployments, driven by operational ROI rather than sheer computational horsepower. With its PCIe form factor, HBM3e memory, ROCm 7.0 compatibility, and drop-in design, AMD is targeting companies that want scalable inference clusters without redesigning their current data center architectures. As enterprises pursue cost-effective AI deployments, the MI350P may become one of the most important accelerators shaping enterprise inference infrastructure in 2026.

Executive Procurement Checklist: AMD Instinct MI350P Deployment

- Procurement Shift: Transition from OAM modules to PCIe Gen5 form factors for mid-tier enterprise AI “Agent” servers to avoid custom rack redesign.

- ROI Benchmark: Target a 35% reduction in OpEx by migrating high-volume inference from cloud-token models to local dedicated clusters.

- Software Readiness: Verify stack compatibility with ROCm 7.0 to ensure seamless PyTorch and TensorFlow migration from legacy CUDA environments.

- Infrastructure Risk: Despite air-cooling compatibility, high-density configurations (4+ GPUs per node) may necessitate Rear Door Heat Exchangers (RDHx) to manage rack-level thermal buildup.

- Operational Action Step: Conduct a mandatory PSU audit; each target server slot must support a minimum 450W power envelope to ensure GPU stability under 24/7 inference loads.

Source: AMD Instinct™ MI350 Series GPUs