News Summary

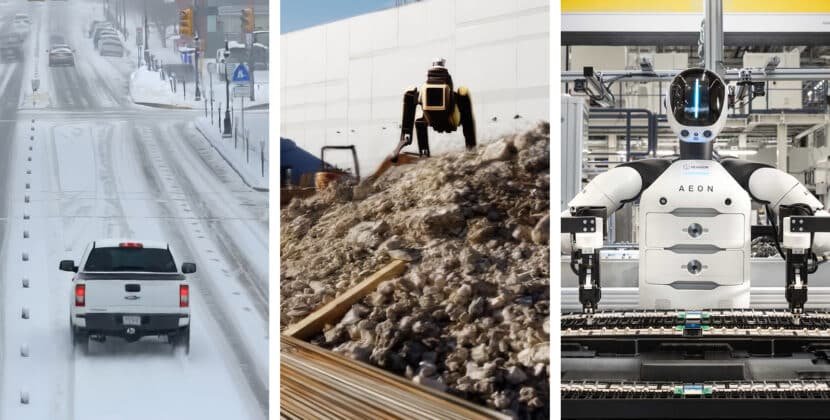

- The blueprint enables developers to process and organize large amounts of data, generate computer-created (synthetic) data, apply decision-making algorithms (reinforcement learning), and test physical artificial intelligence (AI) models for vision AI agents, robotics systems, and self-driving vehicles.

- Cloud providers use the blueprint to turn large-scale computing power into agent-driven data production tools.

- Top physical AI developers are using the blueprint to speed up work on robotics, vision AI agents, and self-driving vehicles.

At GTC, NVIDIA announced the NVIDIA Physical AI Data Factory Blueprint, an open reference design that automates how training data is generated, refined, and validated (checked for quality and accuracy). This makes it cheaper, faster, and simpler to train physical AI systems at scale.

With the blueprint, developers can use NVIDIA Cosmos open world foundation models and leading coding agents to turn small training datasets into large, varied ones, including rare and unusual cases that are hard or costly to collect in real life.

NVIDIA is partnering with Microsoft Azure and Nebius to bring the open blueprint to their cloud platforms, giving developers access to large-scale computing to generate big training datasets. Companies such as Field AI, Hexagon Robotics, Linker Vision, Voxel51, Milestone Systems, RoboForce, Skild AI, Teradyne Robotics, and Uber are already adopting the blueprint to accelerate robotics, vision AI, and autonomous vehicle development.

"Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data," said Rev Lebaredian, Vice President of Omniverse and Simulation Technologies at NVIDIA. "Together with cloud leaders, we are providing a new kind of agentic engine that transforms compute into high-quality data, enabling the next generation of self-governing systems and robots to come to life. In this new era, compute is data."

A Unified Engine for Physical AI

Physical AI improves with more data, more computing power, and larger models. The Physical AI Data Factory Blueprint provides a single reference design for converting raw data into model-ready training sets through modular, automated workflows:

- Curate and search: NVIDIA Cosmos Curator processes, refines, and labels large datasets that include both real-world and synthetic (artificially generated) data.

- Augment and multiply: Cosmos Transfer expands and diversifies the curated dataset, combining real-world and computer-generated data to better represent rare and unusual scenarios across a range of environments and lighting conditions.

- Evaluate and validate: NVIDIA Cosmos Evaluator, which uses Cosmos Reason along with evaluation algorithms, is now available on GitHub. It automatically checks, scores, and filters data so that only accurate, training-ready samples are kept.
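To make the curate, augment, and evaluate flow concrete, here is a toy sketch of how the three stages chain together. It is an illustration under stated assumptions, not the blueprint's code: `Sample`, `curate`, `augment`, `evaluate`, and `toy_scorer` are hypothetical names, and simple Python rules stand in for Cosmos Curator, Cosmos Transfer, and Cosmos Evaluator, whose actual APIs are not described in this announcement.

```python
from dataclasses import dataclass, replace
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Sample:
    """One training clip. All fields are hypothetical stand-ins."""
    clip_id: str
    source: str     # "real" or "synthetic"
    lighting: str   # e.g. "day", "night", "fog"
    quality: float  # 0.0-1.0 quality signal, assumed to exist for illustration


def curate(samples: Iterable[Sample], min_quality: float = 0.5) -> List[Sample]:
    """Curate (hypothetical stand-in): drop low-quality clips and duplicate IDs."""
    seen = set()
    kept = []
    for s in samples:
        if s.quality >= min_quality and s.clip_id not in seen:
            seen.add(s.clip_id)
            kept.append(s)
    return kept


def augment(samples: List[Sample], conditions: List[str]) -> List[Sample]:
    """Augment (hypothetical stand-in): keep the originals and add one synthetic
    variant of each clip for every lighting condition it was not recorded in."""
    out = list(samples)
    for s in samples:
        for cond in conditions:
            if cond != s.lighting:
                out.append(replace(s, clip_id=f"{s.clip_id}-{cond}",
                                   source="synthetic", lighting=cond))
    return out


def evaluate(samples: List[Sample], scorer: Callable[[Sample], float],
             threshold: float = 0.7) -> List[Sample]:
    """Evaluate (hypothetical stand-in): keep samples whose score meets the threshold."""
    return [s for s in samples if scorer(s) >= threshold]


def toy_scorer(s: Sample) -> float:
    """Toy replacement for a learned evaluator: discounts synthetic clips by 10%."""
    return s.quality * (0.9 if s.source == "synthetic" else 1.0)


raw = [
    Sample("c1", "real", "day", 0.9),
    Sample("c1", "real", "day", 0.9),    # duplicate, removed by curate()
    Sample("c2", "real", "day", 0.4),    # low quality, removed by curate()
    Sample("c3", "real", "night", 0.75),
]
curated = curate(raw)
augmented = augment(curated, ["day", "night", "fog"])
final = evaluate(augmented, toy_scorer)
print([s.clip_id for s in final])  # ['c1', 'c3', 'c1-night', 'c1-fog']
```

In this sketch, augmentation only multiplies data that has already passed curation, and evaluation runs last so that synthetic variants face the same filter as real clips. Here the two fog and day variants of `c3` score 0.675 and are dropped.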

NVIDIA is using the Physical AI Data Factory Blueprint to train and test NVIDIA Alpamayo, the first open reasoning-based vision-language-action model for rare autonomous driving situations. Skild AI and Uber are also using the blueprint to advance robot models and self-driving vehicle development.

Agent-Driven Orchestration at Scale

Many robotics developers lack the resources to set up and manage the complex AI systems needed to generate data at scale.

NVIDIA OSMO is an open-source orchestration tool that unifies workflows across diverse computing environments. By reducing manual tasks, it lets developers focus on building models. OSMO integrates with coding agents such as Claude Code, OpenAI Codex, and Cursor, enabling AI agents to manage resources, resolve bottlenecks, and accelerate model deployment at scale.

Powering the Global Physical AI Ecosystem

Cloud service providers play a key role in offering the AI infrastructure, machine learning tools, and orchestration services that developers need to build and launch physical AI at scale.

Microsoft Azure is integrating the Physical AI Data Factory Blueprint, a set of guidelines and reference architectures for building, training, and validating AI systems that interact with the physical world, into an open physical AI toolchain now available on GitHub. The blueprint integrates with Azure services such as Azure IoT Operations (a platform for managing and analyzing Internet of Things data), Microsoft Fabric (a data and analytics platform), Real-Time Intelligence (a service for processing streaming data), and Microsoft Foundry (a suite of development tools), enabling enterprise-grade, agent-driven workflows for training and validating physical AI systems quickly and at scale.

Early adopters use the Azure Physical AI toolchain to accelerate data generation, refinement, and testing for perception, mobility, and reinforcement learning projects.

Nebius has added OSMO to its AI cloud, enabling developers to use the blueprint to build production-ready data pipelines customized to their needs. Nebius's platform supports the full physical AI stack, combining NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs with fast storage, built-in data management and labeling, serverless execution, and managed inference.

Early users such as Milestone Systems, Voxel51, and RoboForce (all among the companies named earlier) are using the blueprint on Nebius infrastructure to speed up model development for video analytics, AI agents, self-driving vehicles, and humanoid robots.

The NVIDIA Physical AI Data Factory Blueprint is expected to be available on GitHub in April.

You can watch the GTC keynote from NVIDIA founder and CEO Jensen Huang and check out the sessions.