News Summary

- The Newton Physics Engine, now in NVIDIA Isaac Lab, helps researchers and developers build more capable and adaptable robots.

- The new open Isaac GR00T foundation model enables robots to reason more like humans: it breaks complex tasks into simpler steps and uses prior knowledge and common sense to complete them.

- NVIDIA Cosmos world foundation models let developers generate diverse data to accelerate the training of physical AI models at scale.

- Researchers at top universities such as Stanford, ETH Zurich, and the National University of Singapore are using NVIDIA’s accelerated computing and software to advance robotics research.

- Leading robot developers, including Agility Robotics, Boston Dynamics, Disney Research, Figure AI, Franka Robotics, Hexagon, Skild AI, Solomon, and Techman Robot, are now using NVIDIA Isaac and Omniverse technologies.

NVIDIA today announced that the open-source Newton Physics Engine is now available in NVIDIA Isaac Lab, along with the open NVIDIA Isaac GR00T N1.6 reasoning vision-language-action (VLA) model for robot skills and new AI infrastructure. Together, these technologies give developers and researchers an open robotics platform that speeds up iteration, standardizes testing, unifies training with on-robot inference, and helps robots transfer skills safely and reliably from simulation to the real world.

According to Rev Lebaredian, vice president of Omniverse and Simulation Technology at NVIDIA, humanoids are the next frontier of physical AI, requiring the ability to reason, adapt, and act safely in an unpredictable world. With these latest updates, he said, developers now have the three components needed to bring robots from research into everyday life:

- Isaac GR00T as the robot's brain

- Newton as its body

- NVIDIA Omniverse as its training ground

Newton Sets a New Standard for Physics Simulation in Robotics

Robots learn faster and more safely in simulation, but humanoid robots, with their complex joints, balance requirements, and movements, push today's physics engines to their limits. More than a quarter of a million robotics developers worldwide need accurate physics so that the skills they teach robots in simulation transfer safely and reliably to the real world.

NVIDIA today announced the beta release of Newton, an open-source, GPU-accelerated physics engine managed by the Linux Foundation. Built on the NVIDIA Warp and OpenUSD frameworks and co-developed by Google DeepMind, Disney Research, and NVIDIA, Newton is available now.

Thanks to Newton's flexible design and support for multiple physics solvers, developers can simulate highly complex robot behaviors, such as walking through snow or gravel and handling cups and fruit, and then deploy those skills successfully in the real world.

Recent adopters of Newton include leading research labs and universities such as ETH Zurich's Robotic Systems Lab, the Technical University of Munich, and Peking University, as well as robotics company Lightwheel and simulation engine company Style3D.

Cosmos Reason Enhances Robot Thinking in the New Open Isaac GR00T N1.6 Model

Robots need to interpret ambiguous instructions and adapt to unfamiliar situations to handle real-world tasks.

The latest Isaac GR00T N1.6 robot foundation model, coming soon to Hugging Face, will integrate NVIDIA Cosmos Reason, an open, customizable reasoning vision-language model (VLM) designed for physical AI. Cosmos Reason acts as the robot's brain, turning vague instructions into step-by-step plans. It draws on prior knowledge, common sense, and physics to help robots handle new situations and perform a wide range of tasks.

Cosmos Reason has been downloaded more than 1 million times and currently tops the physical reasoning leaderboard on Hugging Face. It can also curate and annotate large sets of real and synthetic data for model training. Cosmos Reason 1 is now available as an NVIDIA NIM, an easy-to-use microservice for deploying AI models.

Isaac GR00T N1.6 also enables humanoid robots to move and manipulate objects at the same time, giving them more freedom of movement in the torso and arms and making demanding tasks, such as opening heavy doors, easier.

Developers can post-train GR00T models with the open-source NVIDIA Physical AI Dataset on Hugging Face. The dataset has been downloaded more than 4.8 million times and now includes thousands of synthetic and real-world examples.

Leading robot makers, including AeiRobot, Franka Robotics, LG Electronics, Lightwheel, Mentee Robotics, Neura Robotics, Solomon, Techman Robot, and UCR, are evaluating Isaac GR00T N models to build general-purpose robots.

New Cosmos World Foundation Models for Physical AI Development

NVIDIA also announced updates to its open Cosmos world foundation models (WFMs), which have been downloaded more than 3 million times. The updates let developers use text, image, and video prompts to generate diverse data that speeds up the training of physical AI models at scale.

- Cosmos Predict 2.5, coming soon, combines the capabilities of three Cosmos WFMs in a single model, reducing complexity, saving time, and improving efficiency. It generates longer videos, up to 30 seconds, and supports multi-view camera outputs for world simulation.

- Cosmos Transfer 2.5, also coming soon, is 3.5x smaller than its predecessor and delivers faster, higher-quality results. It generates photorealistic synthetic data, meaning lifelike computer-generated images and scenes, from 3D simulation scenes and spatial control inputs such as depth, segmentation, edges, and high-definition maps.

A New Workflow for Teaching Robots to Grasp Objects

Teaching a robot to pick up an object is one of the hardest challenges in robotics. It takes more than moving an arm: the robot must turn an intention into precise physical actions, a skill it learns through trial and error.

The new Dexterous Grasping workflow, included in the developer preview of Isaac Lab 2.3 and built on the NVIDIA Omniverse platform, trains robots with multi-fingered hands and articulated arms in simulation. Through an automated curriculum of progressively harder training tasks, the workflow varies physical parameters such as gravity (which affects how objects fall), friction (which determines how surfaces slide against each other), and object weight, helping robots learn skills that hold up in unpredictable settings.
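
The automated curriculum described above can be illustrated with a short, generic Python sketch of curriculum-based domain randomization. It is not the Isaac Lab API: the `PhysicsParams` class, the `sample_params` and `next_level` helpers, the nominal values, the spread percentages, and the 0.8 success threshold are all hypothetical. Each curriculum level widens the range from which gravity, friction, and object mass are sampled, and the policy moves to a harder level only after it succeeds often enough.

```python
import random
from dataclasses import dataclass

# Hypothetical sketch of curriculum-based domain randomization; not the Isaac Lab API.


@dataclass
class PhysicsParams:
    gravity: float      # m/s^2, downward acceleration applied to objects
    friction: float     # dimensionless friction coefficient between surfaces
    object_mass: float  # kg


# Assumed nominal values and maximum relative perturbation per parameter.
NOMINAL = PhysicsParams(gravity=9.81, friction=0.8, object_mass=0.2)
MAX_SPREAD = {"gravity": 0.10, "friction": 0.50, "object_mass": 0.75}


def sample_params(level: int, num_levels: int, rng: random.Random) -> PhysicsParams:
    """Sample physics parameters; the randomization range widens as the level rises."""
    if num_levels < 2:
        raise ValueError("the curriculum needs at least two levels")
    scale = level / (num_levels - 1)  # 0.0 at the easiest level, 1.0 at the hardest

    def perturb(name: str) -> float:
        spread = MAX_SPREAD[name] * scale
        return getattr(NOMINAL, name) * rng.uniform(1.0 - spread, 1.0 + spread)

    return PhysicsParams(perturb("gravity"), perturb("friction"), perturb("object_mass"))


def next_level(level: int, success_rate: float, num_levels: int, threshold: float = 0.8) -> int:
    """Move to a harder level only once the policy succeeds often enough at the current one."""
    if success_rate >= threshold and level < num_levels - 1:
        return level + 1
    return level


if __name__ == "__main__":
    rng = random.Random(0)
    level, num_levels = 0, 4
    # Placeholder success rates standing in for real training rollouts.
    for success_rate in [0.5, 0.85, 0.9, 0.6, 0.95]:
        params = sample_params(level, num_levels, rng)
        print(f"level {level}: {params}")
        level = next_level(level, success_rate, num_levels)
    print(f"final curriculum level: {level}")  # prints: final curriculum level: 3
```

Widening the ranges gradually, rather than randomizing fully from the start, is one common design choice: early training stays tractable, while later levels still expose the policy to varied physics.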

Boston Dynamics' Atlas robot used this workflow to learn grasping, which significantly improved its ability to manipulate objects. Leading robot developers, including Agility Robotics, Boston Dynamics, Figure AI, Hexagon, Skild AI, Solomon, and Techman Robot, are now using NVIDIA Isaac and Omniverse technologies.

Testing Learned Robot Skills in Simulation

Teaching a robot a new skill, such as picking up a cup or walking across a room, is difficult, and testing that skill on a physical robot is slow and costly.

Simulation offers a solution by making it possible to test a robot's learned skills across countless scenarios, tasks, and environments. But even in simulation, developers often build fragmented, simplified tests that do not reflect the real world. A robot that learns to navigate a perfect, simple simulation will fail the moment it faces real-world complexity.

NVIDIA and Lightwheel are collaborating to help developers run complex, large-scale simulation tests without starting from scratch. Isaac Lab Arena, their freely available framework for scalable experimentation and standardized testing, is coming soon.
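
Standardized testing at scale can be pictured as a fixed evaluation grid: every policy runs the same tasks, scenes, and random seeds, so success rates are comparable between runs. The Python sketch here is a generic, hypothetical harness, not the Isaac Lab Arena API; `evaluate`, `toy_runner`, and the task and scene names are illustrative assumptions, and `toy_runner` merely stands in for a real simulated rollout.

```python
import itertools
import random
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical evaluation harness; not the Isaac Lab Arena API.
# An episode runner maps (task, scene, seed) to True if the robot succeeded.
EpisodeRunner = Callable[[str, str, int], bool]


def evaluate(run_episode: EpisodeRunner, tasks: List[str], scenes: List[str],
             seeds: List[int]) -> Dict[str, float]:
    """Run every (task, scene, seed) combination and return the success rate per task."""
    if not tasks or not scenes or not seeds:
        raise ValueError("tasks, scenes, and seeds must all be non-empty")
    successes: Dict[str, int] = defaultdict(int)
    trials: Dict[str, int] = defaultdict(int)
    for task, scene, seed in itertools.product(tasks, scenes, seeds):
        trials[task] += 1
        if run_episode(task, scene, seed):
            successes[task] += 1
    return {task: successes[task] / trials[task] for task in tasks}


def toy_runner(task: str, scene: str, seed: int) -> bool:
    """Stand-in for a simulated rollout: deterministic for a given (task, scene, seed)."""
    rng = random.Random(f"{task}|{scene}|{seed}")
    failure_chance = 0.3 if scene == "cluttered_kitchen" else 0.1  # assumed difficulty
    return rng.random() >= failure_chance


if __name__ == "__main__":
    results = evaluate(toy_runner, ["pick_cup", "open_drawer"],
                       ["empty_table", "cluttered_kitchen"], list(range(20)))
    for task, rate in results.items():
        print(f"{task}: {rate:.0%} success")
```

Fixing the seeds makes each evaluation repeatable, so a change in success rate reflects a change in the policy rather than in the sampled scenarios.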

New NVIDIA AI Infrastructure Enables Robotics Workloads Anywhere

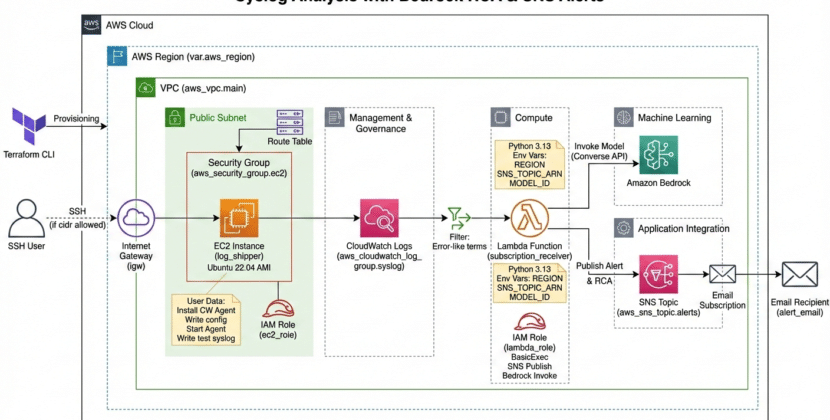

NVIDIA also announced AI infrastructure designed for the most demanding robotics workloads, giving developers access to advanced hardware and software libraries:

- NVIDIA GB200 NVL72 is a rack-scale system with 36 NVIDIA Grace CPUs and 72 NVIDIA Blackwell GPUs. Major cloud providers are using it to accelerate AI training and inference, including complex reasoning and physical AI tasks.

- NVIDIA RTX PRO Servers offer a single hardware and software architecture for every robot development workload, including model training (teaching robots to perform tasks using data), synthetic data generation (creating simulated data for development and testing), robot learning (enabling robots to improve at tasks through algorithms), and simulation (virtually testing robots before real-world deployment). The RAI Institute has adopted RTX PRO Servers.

- NVIDIA Jetson Thor, powered by a Blackwell GPU, lets robots run multiple AI workflows, such as intelligent interaction, and performs real-time inference on the robot itself. This is a major step forward for high-performance physical AI applications such as humanoid robotics. Partners including Figure AI, Galbot, Google DeepMind, Mentee Robotics, Meta, Skild AI, and Unity have adopted Jetson Thor.

NVIDIA Advances Progress in Robotics Research

NVIDIA technologies, including GPUs, simulation frameworks, and CUDA-accelerated libraries, were referenced in nearly half of the accepted papers at the Conference on Robot Learning (CoRL), and are used across leading research labs and institutions such as Carnegie Mellon University, the University of Washington, ETH Zurich, and the National University of Singapore.

CoRL also featured BEHAVIOR, a robot learning benchmark from the Stanford Vision and Learning Lab, and Taccel, a high-performance simulation platform for vision-based tactile robotics developed by Peking University.

Learn more about NVIDIA's robotics research at CoRL, taking place September 27 to October 2 in Seoul.

Source: NVIDIA Accelerates Robotics Research and Development With New Open Models and Simulation Libraries