The risks posed by autonomous artificial intelligence systems that control essential services are growing as quickly as those systems are deployed in critical areas. The Cybersecurity and Infrastructure Security Agency (CISA) has recently highlighted emerging AI security concerns, warning that expanding attack surfaces could expose organizations to large-scale breaches.

The warning comes as businesses and government organizations increasingly depend on AI systems to run their operations, automate decisions, and improve productivity. These technologies provide major benefits, but they also create new security weaknesses that existing security systems cannot adequately protect against.

Expanding Attack Surfaces in AI Systems

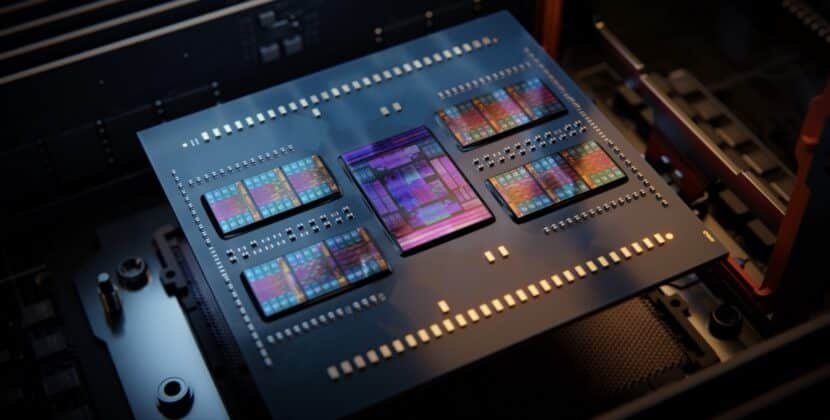

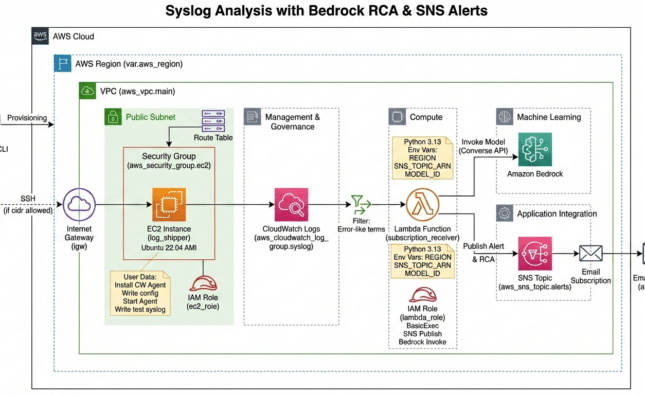

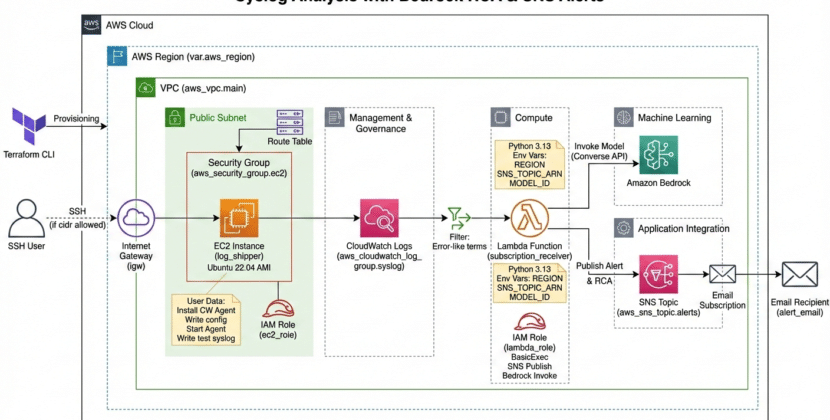

The main security issue CISA identified is the rapid growth of digital attack surfaces. AI systems span four distinct layers: data inputs, algorithms, cloud infrastructure, and user interfaces. Malicious actors can use any of these layers as an entry point into the system.

AI systems differ from traditional software because they continue to learn and adapt after deployment. This makes them harder to secure, because new weaknesses can emerge over time. Weaknesses in training data, for example, allow attackers to manipulate model outputs and obtain unauthorized access to system controls.

Autonomous systems compound the problem. Because they act with limited human involvement, AI systems need human supervision to operate safely: a breach can have serious consequences for financial systems and essential infrastructure.

The Rise of Identity-Based Threats

CISA now recognizes identity as an essential security boundary that requires increased protection. AI-based systems operate in environments that no longer rely on traditional network perimeters, so system security depends on identity verification and access control.

An identity firewall is an essential security component in this setting. This identity-based method controls access to AI systems by allowing only authorized users, devices, and applications to connect.

By implementing an identity firewall, organizations can enforce access control in real time, track system entry points, and guard against unauthorized access. This approach is vital for AI systems that operate across multiple platforms and services.
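The idea of admitting only authorized users, devices, and applications can be illustrated with a minimal Python sketch. It is not drawn from CISA guidance or from any specific product: the `Identity` and `IdentityFirewall` classes, the resource name, and the identity names are all hypothetical, and a real deployment would sit on top of an authentication system rather than trusting plain names.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Identity:
    """A caller of the AI system (hypothetical model for illustration)."""
    kind: str  # "user", "device", or "application"
    name: str


@dataclass
class IdentityFirewall:
    """Default-deny gate: only explicitly granted identities reach a resource."""
    # Maps a resource name to the identities allowed to use it.
    allowed: dict[str, set[Identity]] = field(default_factory=dict)
    # Every decision is recorded so that entry points can be tracked.
    audit_log: list[tuple[str, Identity, str, bool]] = field(default_factory=list)

    def grant(self, identity: Identity, resource: str) -> None:
        self.allowed.setdefault(resource, set()).add(identity)

    def check(self, identity: Identity, resource: str) -> bool:
        # Anything not explicitly granted is refused.
        permitted = identity in self.allowed.get(resource, set())
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((timestamp, identity, resource, permitted))
        return permitted


firewall = IdentityFirewall()
analyst = Identity("user", "analyst-01")
firewall.grant(analyst, "model-inference-api")

print(firewall.check(analyst, "model-inference-api"))  # True
print(firewall.check(Identity("device", "unknown-sensor"), "model-inference-api"))  # False
```

The sketch defaults to denial and logs both allowed and refused attempts, which reflects the two goals named above: restricting access and keeping track of who tried to enter.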

Data Integrity and Model Manipulation

AI security faces another major challenge: protecting both the training data and the operational data that models use. AI systems depend on extensive datasets, which creates risk because attackers can compromise those datasets through data poisoning.

In a data poisoning attack, attackers insert malicious data into training datasets so that the resulting model produces incorrect or biased results. The effects can be severe in critical fields such as healthcare, finance, and national security.

Model manipulation is another rising threat. Attackers exploit weaknesses in AI algorithms to alter system behavior, leading to incorrect decisions and system crashes.

CISA urges organizations to establish robust data verification and monitoring procedures to reduce these threats.
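One simple form of data verification is to fingerprint each training record when it is reviewed and to re-check those fingerprints before every training run. The Python sketch below assumes that records are available as bytes keyed by an ID. The function names and sample records are hypothetical, and the approach only detects changes made after the manifest was built, not data that was already poisoned at the source.

```python
import hashlib


def fingerprint(record: bytes) -> str:
    """SHA-256 digest of a single record."""
    return hashlib.sha256(record).hexdigest()


def build_manifest(records: dict[str, bytes]) -> dict[str, str]:
    """Record the digest of every trusted record, keyed by record ID."""
    return {record_id: fingerprint(data) for record_id, data in records.items()}


def verify_dataset(records: dict[str, bytes], manifest: dict[str, str]) -> list[str]:
    """Return IDs of records that were modified, injected, or removed."""
    suspicious = []
    for record_id, data in records.items():
        expected = manifest.get(record_id)
        # Unknown IDs are injected records; digest mismatches are tampering.
        if expected is None or expected != fingerprint(data):
            suspicious.append(record_id)
    # Records listed in the manifest but absent from the dataset were removed.
    suspicious.extend(rid for rid in manifest if rid not in records)
    return sorted(suspicious)


trusted = {
    "row-1": b"age=34,label=benign",
    "row-2": b"age=51,label=malignant",
}
manifest = build_manifest(trusted)

incoming = dict(trusted)
incoming["row-2"] = b"age=51,label=benign"  # tampered label
incoming["row-3"] = b"age=29,label=benign"  # injected record

print(verify_dataset(incoming, manifest))  # ['row-2', 'row-3']
```

In practice the manifest itself would need to be stored somewhere attackers cannot modify, otherwise the check offers little protection.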

Challenges in Securing Autonomous Systems

The transition to autonomous AI systems creates new security risks. These systems operate with a high degree of autonomy, which makes it difficult for human operators to monitor their behavior.

Security must be built into the system design from the start. An identity firewall provides strong access control, but it needs to be combined with additional protection measures to keep the system secure.

A complete security system requires three main components: continuous monitoring, anomaly detection, and automated response. Together, these measures help organizations identify threats and respond before they escalate into incidents.
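The three components can be sketched together in a few lines of Python. This is an illustrative example under simple assumptions, not a recommended detector: it watches a single numeric metric, flags values more than three standard deviations from a rolling baseline, and calls a response hook. The `AnomalyMonitor` class, the `quarantine` callback, and the traffic figures are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Callable


class AnomalyMonitor:
    """Rolling z-score detector that triggers a callback on outliers."""

    def __init__(self, on_anomaly: Callable[[float, float], None],
                 window: int = 20, threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self.history = deque(maxlen=window)  # recent normal observations
        self.on_anomaly = on_anomaly         # automated response hook
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Continuous monitoring: feed each new measurement through here."""
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            # With zero spread no z-score exists, so nothing is flagged.
            if sigma > 0:
                z_score = abs(value - mu) / sigma
                if z_score > self.threshold:
                    is_anomaly = True
                    self.on_anomaly(value, z_score)
        if not is_anomaly:
            # Only normal values update the baseline.
            self.history.append(value)
        return is_anomaly


blocked = []


def quarantine(value: float, z_score: float) -> None:
    """Stand-in for a real response such as revoking a session."""
    blocked.append(value)


monitor = AnomalyMonitor(on_anomaly=quarantine)
for requests_per_minute in [100, 102, 98, 101, 99, 103, 97, 100, 950, 101]:
    monitor.observe(requests_per_minute)

print(blocked)  # [950]
```

Keeping flagged values out of the baseline prevents a single attack burst from shifting what the monitor treats as normal.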

Regulatory and Policy Implications

Policymakers and regulatory bodies are paying closer attention to artificial intelligence as its risks to society continue to grow. CISA warns that organizations need to develop new frameworks to address the specific challenges posed by AI systems.

Governments now understand that standard cybersecurity rules do not provide adequate protection for environments that use artificial intelligence technology. New policies must consider factors such as algorithm transparency, data governance, and accountability.

Organizations must implement AI security measures that keep them compliant with new standards and best practices. This means conducting routine risk assessments and establishing security protocols that protect the entire AI development process.

The Role of Collaboration and Information Sharing

CISA emphasizes the importance of collaboration between the public and private sectors in addressing AI-related risks. Countering cyber threats requires organizations to work together, because threats evolve continually and no single entity can handle them alone.

Information sharing is vital to both threat identification and threat mitigation. Sharing insights and best practices enables organizations to build stronger defenses and respond more effectively to new security threats.

Government agencies, technology companies, and research institutions need to establish partnerships to develop new security solutions. These collaborations can also improve existing frameworks for protecting AI.

Balancing Innovation and Security

The fast development of AI technologies forces organizations to balance competing priorities. They want to use AI to gain a competitive edge, yet they must also acknowledge the risks these technologies entail.

CISA recommends that organizations incorporate security measures throughout their entire artificial intelligence development process. This means addressing security during system design, security testing, and performance evaluation.

Identity firewalls offer powerful security benefits, but organizations still need comprehensive security systems built around them.

Impact on Businesses and Critical Infrastructure

The security risks associated with AI systems extend beyond individual organizations. AI systems have become essential to critical infrastructure sectors such as energy, transportation, and healthcare.

A breach of these systems could have far-reaching consequences, disrupting essential services and endangering public safety. Organizations in these sectors need to prioritize AI security and build robust defenses.

Businesses also need to weigh the financial and reputational damage that security incidents cause. Data breaches and system failures can lead to significant losses and damage public trust.

The Future of AI Security

According to CISA’s warning, security practices need to keep pace with technological advancements.

Future AI security research is expected to focus on three main areas: advanced threat detection systems, automated defense technologies, and enhanced identity management frameworks. Safeguarding AI-driven environments will depend on progress in these areas.

Organizations that invest in these capabilities will be better positioned to navigate the complexities of AI adoption and protect their systems from emerging threats.

Conclusion: Addressing a Growing Risk Landscape

The Cybersecurity and Infrastructure Security Agency has warned that advances in artificial intelligence bring serious threats that organizations need to take into account. The need for advanced AI security has become critical as autonomous technologies become increasingly prevalent.

The adoption of strategies such as identity-based access control and the implementation of an identity firewall can help mitigate these risks, but they are only part of the solution. Organizations must take a comprehensive approach to address vulnerabilities across the entire AI ecosystem.

The future of artificial intelligence will depend on both technological advancements and the effectiveness of the security measures that protect these systems. Maintaining both operational resilience and security will be a major challenge of the digital era.

Sources: CISA and NCSC-UK Release Malware Analysis Report on FIRESTARTER Backdoor