A major advance in AI hardware is shifting how companies view performance and cost. Google’s new TPU 8i reduces inference latency, helping AI systems respond faster and work more efficiently at scale. Real-time applications are now central to digital experiences, so latency is a major business concern. Companies that do not address it may end up with slower systems and higher costs.

Why Inference Speed Is Critical

Inference latency is the time a model takes to process input and give output, and it is crucial for today’s AI systems. Lower latency improves user experience, especially in conversational AI, fraud detection, and recommendation engines.
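To make the definition concrete, here is a minimal Python sketch of how per-request latency could be measured and summarized. It is not tied to any TPU or Google API: `predict` is a hypothetical stand-in for whatever model call a team actually uses, and the choice of median, 95th percentile, and maximum is an assumption about which figures matter for a real-time service.

```python
import statistics
import time
from typing import Callable, Dict, List, Sequence


def measure_latency_ms(predict: Callable[[object], object],
                       inputs: Sequence[object]) -> List[float]:
    """Time each call to `predict` and return the latencies in milliseconds."""
    latencies = []
    for item in inputs:
        start = time.perf_counter()
        predict(item)  # hypothetical model call; swap in a real client here
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize(latencies_ms: Sequence[float]) -> Dict[str, float]:
    """Report the median, nearest-rank 95th percentile, and worst-case latency."""
    if not latencies_ms:
        raise ValueError("need at least one measurement")
    ordered = sorted(latencies_ms)
    # Nearest-rank p95: the smallest sample with at least 95% of samples
    # at or below it. Integer arithmetic avoids floating-point rounding.
    rank = (95 * len(ordered) + 99) // 100
    return {
        "p50_ms": statistics.median(ordered),
        "p95_ms": ordered[rank - 1],
        "max_ms": ordered[-1],
    }


if __name__ == "__main__":
    def fake_model(x):
        time.sleep(0.002)  # simulated inference work, for illustration only
        return x

    print(summarize(measure_latency_ms(fake_model, list(range(50)))))
```

Tail figures such as the 95th percentile are usually more telling than the average for conversational or fraud-detection workloads, because a small share of slow responses is what users and downstream systems notice.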

Google’s TPU 8i helps cut processing delays, allowing systems to run faster and more efficiently. This is especially important for businesses that handle large volumes of real-time data, where even small delays matter.

How AI Infrastructure Strategies Are Changing

Traditionally, AI systems were scaled by adding more hardware, typically very large GPU clusters. This approach works, but costs rise and each additional unit of hardware delivers diminishing returns.

As AI moves toward more specialized hardware, organizations are shifting their infrastructure strategies to focus on efficiency and performance rather than raw capacity alone.

With the new Google TPU 8i, organizations can aim for better inference performance without simply adding more hardware. As AI technology advances, this shift from brute-force scaling to intelligently designed systems is likely to accelerate.

The TPU versus GPU Debate Resurfaces

With new AI technologies and the rise of specialized chips, the debate between TPUs and GPUs has resurfaced. GPUs remain among the most effective options for training AI models because of their flexibility and parallel processing power.

TPUs, however, are specialized for tensor-based computation, which makes them well suited to inference. They are becoming more popular as organizations run their training and deployment workloads in separate computing environments.

Organizations therefore do not need to phase out GPUs altogether. Instead, they have more tools available for the different stages of the AI lifecycle and can pick the right one for each stage, which makes their overall infrastructure strategy more workload-specific.

Changing the Way Businesses Build and Scale Their AI Infrastructure

In the past, businesses typically built their AI systems by adding more compute power, usually large GPU clusters, until they reached the machine learning capability they wanted. This worked, but over time the cost of that compute has risen while the return on it has dropped.

The rise of high-performance, purpose-built AI hardware like the Google TPU 8i is prompting businesses to rethink how they build and scale the infrastructure needed to run AI models. Instead of simply adding compute capacity, they are looking to optimize for both efficiency and performance.

In particular, businesses using the TPU 8i are positioned to get better inference performance than they would by simply adding more GPUs. In effect, the TPU 8i lets businesses move from a brute-force approach of adding compute resources to one based on intelligently designed systems.

New Perspective on TPU vs. GPU

With the emergence of advanced specialized AI hardware, the debate over GPUs versus TPUs is gaining renewed momentum. GPUs have traditionally been the de facto choice for training AI models because of their flexibility and their ability to run many tasks in parallel.

Conversely, TPUs are designed to execute tensor-based operations efficiently, which suits inference workloads. As companies continue to separate training from deployment, understanding the strengths of both types of hardware will become critical.

TPUs are not a replacement for GPUs. Rather, by using TPUs alongside GPUs, companies can choose the best tool for each stage of an AI project’s lifecycle. The ongoing TPU vs GPU discussion shows that companies are adopting a more deliberate, workload-specific approach to AI hardware planning.

Enhancing Agentic AI Systems

As artificial intelligence (AI) systems become increasingly autonomous, their performance requirements are also growing. Agentic systems make decisions, interact with users, and carry out tasks continuously and in real time.

The latency improvements in the Google TPU 8i benefit agentic AI performance directly, with faster response times and more reliable interactions. This matters most for applications such as virtual assistants, automated workflows, and intelligent monitoring systems.

Because an agent typically chains many inference calls to complete a single task, the delay on each call adds up. Lower per-call latency means these systems can complete more complex tasks without noticeable delays, leading to higher-quality outcomes and greater user satisfaction.

Enterprise Cost Implications

Minimizing inference latency directly affects operating costs. Because the infrastructure behind an organization’s AI services is typically billed by compute time, processing each request faster lowers the cost of serving it, which improves the organization’s bottom line.
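The link between latency and cost can be sketched with simple arithmetic. The Python example below assumes an accelerator billed at a flat hourly rate and kept fully busy, with a fixed number of requests in flight at once. The function name, the hourly rate, and the latency figures are all hypothetical illustrations, not published TPU 8i pricing or benchmarks.

```python
def cost_per_million_requests(hourly_rate_usd: float,
                              latency_ms: float,
                              concurrency: int = 1) -> float:
    """Estimate serving cost per million requests on one accelerator.

    Assumes flat hourly billing and full utilization, with `concurrency`
    requests processed at the same time (a simplifying assumption).
    """
    if hourly_rate_usd < 0 or latency_ms <= 0 or concurrency < 1:
        raise ValueError("rate must be >= 0, latency > 0, concurrency >= 1")
    # One hour is 3,600,000 ms, so this is how many requests fit in an hour.
    requests_per_hour = (3_600_000.0 / latency_ms) * concurrency
    return hourly_rate_usd / requests_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical figures: $10/hour, 8 concurrent requests.
    slow = cost_per_million_requests(10.0, latency_ms=200.0, concurrency=8)
    fast = cost_per_million_requests(10.0, latency_ms=100.0, concurrency=8)
    print(f"200 ms: ${slow:.2f} per million requests")
    print(f"100 ms: ${fast:.2f} per million requests")
```

Under these assumptions, halving latency halves the cost per request. Real deployments rarely run at full utilization, so the actual saving depends on traffic patterns and on how the provider bills.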

By improving the performance capabilities of their AI systems, organizations will reduce the total cost of ownership of their AI deployments. The efficiency gains achieved through Google TPU 8i will make it a compelling choice for any organization seeking to scale its operations without unduly impacting its budget.

More efficient AI infrastructure also lets organizations allocate budget and staff more effectively, which ultimately raises the return on their investment.

Moving to Different Systems

Moving from one system to another can be difficult. To make the transition, businesses will need to:

- Adjust the way they operate.

- Make sure the new system is compatible with their current systems.

- Train their employees to use the new system.

Businesses should plan these changes carefully, especially when switching to a different class of hardware (for example, moving between GPU-based and TPU-based machines).

However, the long-term benefits of using a more advanced system (in terms of productivity and lower costs) are often worth the initial investment or time to make the conversion.

Overall Impact on Industry

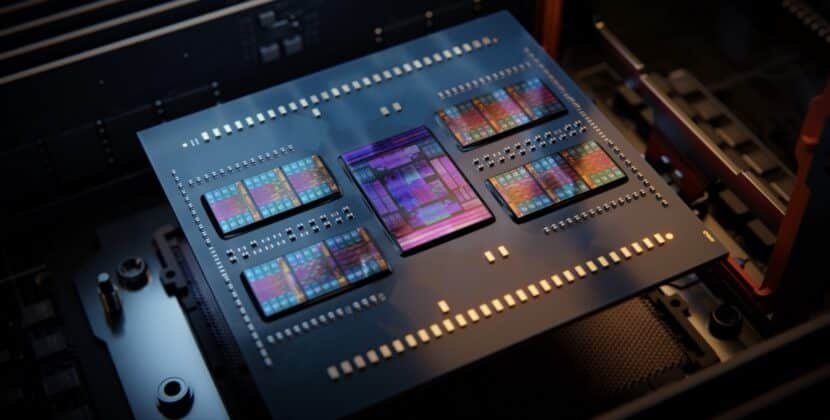

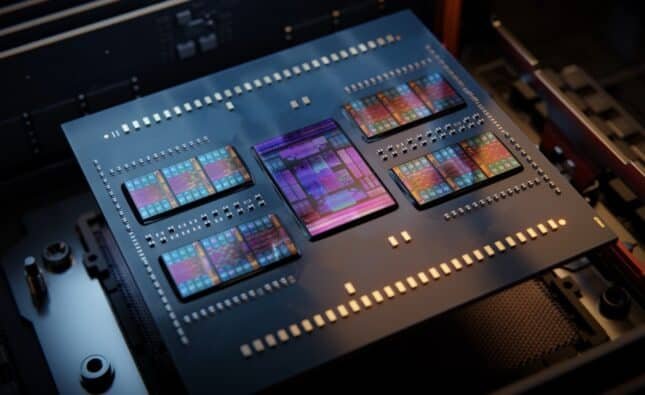

The introduction of the new Google TPU 8i marks a significant step toward specialized systems for AI. The continual increase in the complexity of AI-related workloads is driving a stronger demand for optimized systems.

This increase in specialization will promote new ideas and products across the technology industry, from hardware design to software systems. It will also push companies to plan their infrastructure more strategically.

Conclusion

AI systems will likely adopt a mixed approach in the future, with different hardware configurations working together for training and production. Investing in adaptable AI infrastructure today will make it easier for organizations to scale up or adjust their operations as technology advances.

Google’s TPU 8i shows that AI is not just changing incrementally. The way we build, deploy, and optimize AI systems for performance and cost is changing too.

Source: News, tips, and inspiration to accelerate your digital transformation