The age of single massive AI supercomputers is running into physical and thermal barriers. For years, the industry tried to boost performance by adding more transistors to each chip, but moving data across a motherboard now uses too much energy and slows everything down. A recent developer leak appears to confirm what many expected: NVIDIA’s upcoming Rubin architecture is shifting focus from single-chip power to smooth multi-cluster performance. The discovery of a multi-cluster scaling flag suggests that Jensen Huang’s next platform is more than just a faster GPU. It is a plan for a distributed system that works together like one giant processor across continents.

The Rubin Leak: Decoding the Multi-Cluster Flag

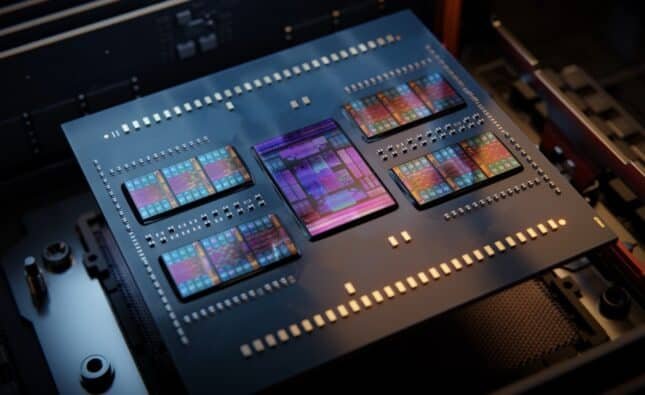

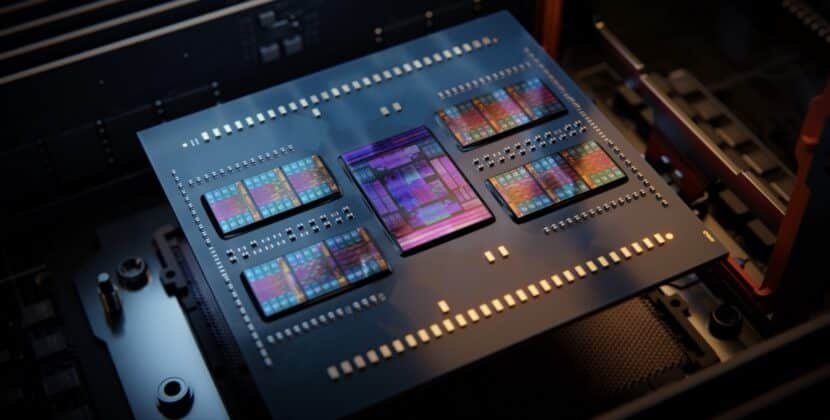

Recently, tech enthusiasts spotted a line of code in an early software build that mentions an automated load-balancing system for shared memory. This matters because, in the past, running AI workloads across several clusters (groups of servers) caused major delays as data moved across network switches. The Rubin scaling flag suggests that NVIDIA is building in hardware support so developers can manage 32,000 GPUs as simply as they would handle eight.

This breakthrough is all about improving multi-cluster performance. By putting the scaling logic right into the hardware and network, NVIDIA is cutting out the software overhead that typically costs about 20% of training efficiency. For a company spending five billion dollars on a cluster, recovering that 20% means a saving of one billion dollars. The Rubin architecture aims to make communication between clusters as fast as communication between chips was just two generations back.
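The savings figure is simple proportional arithmetic. Here is a minimal sketch, assuming (as the paragraph does) that recovered training efficiency translates one-to-one into dollars of cluster spend. The function name is illustrative and does not come from any NVIDIA tool.

```python
def overhead_savings(cluster_cost_usd: float, overhead_fraction: float) -> float:
    """Dollar value of cluster spend currently lost to software overhead.

    Assumes efficiency maps linearly onto cost, which is a simplification.
    """
    if not 0.0 <= overhead_fraction <= 1.0:
        raise ValueError("overhead_fraction must be between 0 and 1")
    return cluster_cost_usd * overhead_fraction


# Figures from the text: a $5 billion cluster and roughly 20% overhead.
savings = overhead_savings(5_000_000_000, 0.20)
print(f"${savings / 1e9:.1f} billion")  # prints $1.0 billion
```

In practice the relationship between efficiency and cost is unlikely to be this clean, so treat the result as an upper-level estimate rather than a forecast.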

Why GPU Scaling Is the New Performance Frontier

Single-chip performance improvements are slowing down. By 2026, the real challenge will be how well a system can handle huge workloads with trillions of parameters without failing. NVIDIA Rubin pairs this scaling approach with HBM4 memory and new optical connections. If the multi-cluster scaling flag works as expected, it will enable elastic compute, letting models grow across many clusters to handle spikes in demand and shrink when finished.
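To make the idea of elastic compute concrete, here is a small sketch of the sizing decision such a system would have to make: how many clusters a job needs as demand rises and falls. The cluster size of 8,000 GPUs and the demand trace are hypothetical, and nothing here reflects how the leaked flag actually behaves.

```python
import math


def clusters_needed(demand_gpus: int, gpus_per_cluster: int, min_clusters: int = 1) -> int:
    """Smallest number of clusters that covers the current GPU demand."""
    if gpus_per_cluster <= 0:
        raise ValueError("gpus_per_cluster must be positive")
    return max(min_clusters, math.ceil(demand_gpus / gpus_per_cluster))


# Hypothetical demand trace (in GPUs) for a job that spikes and then winds down.
demand_trace = [6_000, 20_000, 32_000, 9_000, 0]
allocation = [clusters_needed(d, gpus_per_cluster=8_000) for d in demand_trace]
print(allocation)  # prints [1, 3, 4, 2, 1]
```

The allocation grows to four clusters at the 32,000-GPU peak and falls back to the one-cluster floor once demand disappears.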

The implications for AI compute are profound. Today’s Blackwell systems already stretch the limits of cooling and power. By focusing on multi-cluster scaling, NVIDIA gives data center designers a new option. Instead of building one huge, hard-to-cool 100-megawatt facility, companies can connect several smaller 20-megawatt centers with almost no loss in performance.

Key Advantages of Rubin’s Distributed Architecture

- Near-linear scaling: You can add 1,000 more GPUs and get almost 1,000 units of extra performance, instead of the roughly 700 units that are typical once networking limits take their share.

- Unified memory fabric: The system creates a shared memory space so an AI agent can quickly access data even if it’s stored on another drive.

- Fault tolerance: In a multi-cluster setup, if hardware fails in one area, the Rubin fabric reroutes traffic, so training continues without interruption.

- Reduced token latency: Improving how data flows through the multi-cluster setup sharply cuts the time to generate the first token of an AI response.
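The near-linear scaling claim in the list above can be expressed as a scaling efficiency: extra performance delivered divided by GPUs added. This sketch reads the 700 figure as the performance typically gained from 1,000 added GPUs, and it uses 980 as an assumed stand-in for "almost 1,000".

```python
def scaling_efficiency(added_gpus: int, added_performance_units: float) -> float:
    """Fraction of ideal (linear) speedup actually delivered by the added GPUs."""
    if added_gpus <= 0:
        raise ValueError("added_gpus must be positive")
    return added_performance_units / added_gpus


typical = scaling_efficiency(1_000, 700)      # networking-limited case from the text
near_linear = scaling_efficiency(1_000, 980)  # 980 is an assumed value, not a leaked figure
print(f"typical: {typical:.0%}, near-linear: {near_linear:.0%}")
```

An efficiency of 1.0 would mean perfectly linear scaling. The gap between the two numbers is the share of new hardware that networking overhead would otherwise consume.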

The Move Toward Optical Interconnects

To handle this kind of GPU scaling, the Rubin platform reportedly uses silicon photonics. Regular copper wires can’t carry enough data for multi-cluster operations over long distances without overheating. Optical links use light to move data, which is faster and uses less power. The scaling flag in the development build likely acts as a traffic controller for this optical network, ensuring trillions of bits of data flow smoothly between server pods.

This new architecture directly addresses the data gravity problem. As data sets reach petabyte sizes, it’s no longer practical to move data to the compute. Instead, you need to bring the compute to the data. With Rubin’s multi-cluster setup, a company can keep its sensitive data in one secure area and use the computing power of another cluster to process it, all at the speed of a local connection.
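A rough calculation shows why petabyte-scale data is hard to move. The sketch below estimates bulk transfer time over a single link and ignores protocol overhead. The 100 Gb/s link speed is an assumption chosen for illustration, not a Rubin specification.

```python
def transfer_time_hours(dataset_bytes: float, link_gbps: float) -> float:
    """Hours needed to move a dataset over a link, ignoring protocol overhead."""
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    bits = dataset_bytes * 8
    seconds = bits / (link_gbps * 1e9)
    return seconds / 3600


PETABYTE = 10**15  # decimal petabyte, in bytes
hours = transfer_time_hours(PETABYTE, link_gbps=100)  # 100 Gb/s is an assumed link speed
print(f"{hours:.1f} hours")  # prints 22.2 hours
```

Even under these idealized conditions, one petabyte ties up the link for most of a day, which is the practical meaning of data gravity.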

Infrastructure as a Competitive Advantage

For C-suite executives, the Rubin leak shows that the cycle of buying and building AI hardware is speeding up. Companies are moving from buying individual servers to subscribing to a flexible computing fabric. Those that succeed in the next decade will treat their AI compute as a scalable utility, not just a set of servers. The Rubin leak suggests that NVIDIA is now building the world’s first truly distributed operating system, not just making chips.

Finding the Rubin scaling flag signals the end of the standalone server era. Looking ahead to the 2027 release, the main challenge will be how these clusters connect with local power grids and global fiber networks. The biggest technical problem for advanced AI is no longer the model code but managing heat and energy in a multi-cluster world. Those who master this scale will set the limits for what machine intelligence can do.