Benchmark disclosures are set to reveal a new wave of performance insights, and one is already clear: scaling AI system performance is no longer as simple as adding more hardware. Current benchmark data for NVIDIA Rubin reveals serious constraints on system performance in large-scale deployments. These constraints become harder to manage as organizations’ AI systems grow more complex.

Historically, the assumption was that adding GPUs (graphics processing units) would yield proportional performance improvements. However, benchmarks suggest that this linear-scaling model is reaching its limits, and organizations may need to revisit how they design and deploy AI systems.

The Limits of Linear Scaling

Modern AI workloads have grown exponentially, while the efficiency of the infrastructure supporting them has not kept pace. As systems scale, so does the overhead from coordination, communication delays, and contention for shared resources, which ultimately caps further performance gains.

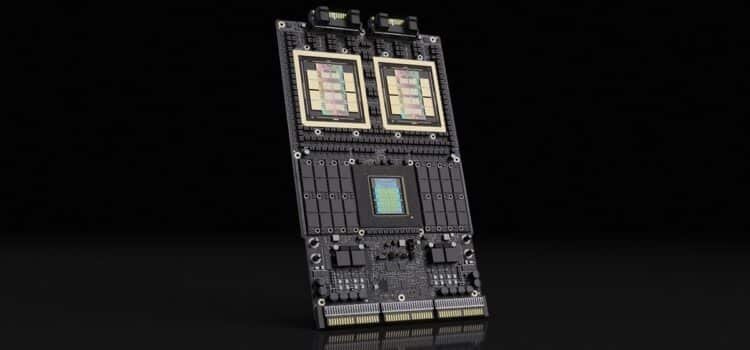

Insights from NVIDIA Rubin indicate that there is a limit to how many GPUs can be added to a large-scale deployment while still increasing performance in proportion to the added hardware. In other words, once a system crosses a certain threshold, each additional GPU yields smaller gains, and beyond that point adding more GPUs can actually reduce overall performance.
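The threshold effect described above can be illustrated with a toy model. The sketch below extends Amdahl's law with a coordination-overhead term that grows with the number of GPUs. The serial fraction and per-GPU overhead are hypothetical values chosen for illustration; they are not figures from the Rubin benchmarks.

```python
def modeled_speedup(n_gpus: int, serial_fraction: float = 0.05,
                    overhead_per_gpu: float = 0.001) -> float:
    """Speedup over one GPU under Amdahl's law plus a linear overhead term.

    The one-GPU step time is normalized to 1.0. Both parameters are
    hypothetical illustration values, not measured data.
    """
    parallel_fraction = 1.0 - serial_fraction
    step_time = (serial_fraction                      # work that cannot be parallelized
                 + parallel_fraction / n_gpus         # work split evenly across GPUs
                 + overhead_per_gpu * (n_gpus - 1))   # coordination cost that grows with N
    return 1.0 / step_time


if __name__ == "__main__":
    for n in (1, 8, 16, 31, 64, 128, 256):
        s = modeled_speedup(n)
        print(f"{n:4d} GPUs: speedup {s:5.2f}, efficiency {s / n:6.1%}")
```

With these assumed parameters, speedup peaks at 31 GPUs and then declines. Where the peak falls depends entirely on the assumed overhead, so the model shows the shape of the effect, not its location on real hardware.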

The Importance of GPU Clusters

For many years, large GPU clusters have served as the primary infrastructure for AI training and deployment. They allow for parallel processing of large datasets and complex computations within models.

As cluster sizes increase, however, new problems arise. Managing communication across thousands of GPUs becomes increasingly difficult, and delays and efficiency losses grow along with it.

The data indicates that simply enlarging GPU clusters, without optimizing interconnects or overall system design, yields diminishing returns. This has serious implications for enterprises that invest heavily in compute infrastructure.
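One way to see why communication becomes harder at scale is the standard latency-bandwidth (alpha-beta) cost model for a ring all-reduce, a common collective for synchronizing gradients across GPUs. In the sketch below, the per-hop latency, link bandwidth, and message size are hypothetical values, not NVLink or Rubin specifications.

```python
def ring_allreduce_time(n_gpus: int, message_bytes: float,
                        bandwidth_bytes_per_s: float,
                        latency_s: float) -> tuple[float, float]:
    """Return (latency_term, bandwidth_term) in seconds for a ring all-reduce.

    A ring all-reduce runs 2 * (N - 1) steps (reduce-scatter, then
    all-gather), and in each step every GPU sends message_bytes / N
    to its neighbor.
    """
    if n_gpus < 2:
        return 0.0, 0.0
    steps = 2 * (n_gpus - 1)
    latency_term = steps * latency_s
    bandwidth_term = steps * (message_bytes / n_gpus) / bandwidth_bytes_per_s
    return latency_term, bandwidth_term


if __name__ == "__main__":
    # Hypothetical parameters: 1 GB of gradients, 100 GB/s links, 5 us per hop.
    for n in (8, 64, 512, 4096):
        lat, bw = ring_allreduce_time(n, 1e9, 100e9, 5e-6)
        print(f"{n:5d} GPUs: latency {lat * 1e3:7.2f} ms, "
              f"bandwidth {bw * 1e3:6.2f} ms")
```

The bandwidth term levels off just below 2·S/B as N grows, while the latency term grows linearly with N, so at thousands of GPUs per-hop delays can dominate. Production communication libraries mitigate this with other algorithms (for example, tree-based collectives), which this sketch does not model.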

The Role of NVLink

The communication layer between GPUs largely determines how well a system scales. NVLink, among other technologies, plays a crucial role by enabling high-speed data transfer between GPUs in a cluster. Benchmark results indicate that NVLink offers a clear performance advantage over traditional interconnects, but it cannot overcome the limits imposed by the growing size and complexity of the workloads that GPU clusters process.

Ultimately, compute capacity must be carefully balanced against the ability to move data efficiently within a GPU cluster. Otherwise, continued unoptimized scaling can leave systems increasingly inefficient and excessively costly.
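The balance between compute and data movement can be sketched by splitting a fixed amount of compute across GPUs and adding the ring all-reduce cost of synchronizing gradients each step. The "slow" and "fast" bandwidths below are hypothetical stand-ins for a traditional interconnect and a faster one such as NVLink; none of the numbers are vendor specifications.

```python
def step_time(n_gpus: int, bandwidth_bytes_per_s: float,
              compute_s: float = 10.0, grad_bytes: float = 2e9,
              latency_s: float = 10e-6) -> float:
    """Seconds per step: compute split N ways plus a ring all-reduce.

    All defaults are hypothetical illustration values.
    """
    compute = compute_s / n_gpus
    steps = 2 * (n_gpus - 1)  # ring all-reduce step count
    comm = (steps * latency_s
            + steps * (grad_bytes / n_gpus) / bandwidth_bytes_per_s)
    return compute + comm


def best_scale(bandwidth_bytes_per_s: float,
               max_gpus: int = 4096) -> tuple[int, float]:
    """Return (GPU count, speedup over one GPU) that minimizes step time."""
    baseline = step_time(1, bandwidth_bytes_per_s)
    n_best = min(range(1, max_gpus + 1),
                 key=lambda n: step_time(n, bandwidth_bytes_per_s))
    return n_best, baseline / step_time(n_best, bandwidth_bytes_per_s)


if __name__ == "__main__":
    for label, bw in (("slow link", 50e9), ("fast link", 500e9)):
        n, speedup = best_scale(bw)
        print(f"{label}: best at {n} GPUs, max speedup {speedup:.0f}x")
```

Under these assumptions the faster link roughly triples the achievable speedup, yet both configurations still peak well inside the 4,096-GPU range searched: a faster interconnect raises the ceiling but does not remove it.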

Understanding AI Scaling Challenges

The definition of AI scaling is broadening. Companies can no longer focus solely on scaling compute resources; when implementing an AI solution, they must also consider factors such as:

- Data transfer performance

- System architecture

- Workload distribution

- Latency between nodes

NVIDIA Rubin’s latest findings demonstrate how vital each of these areas is to achieving substantial performance improvements.

As AI models continue to grow in size and complexity, so will the challenges of scaling them.
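Workload distribution matters because, in synchronous training, every step waits for the slowest worker. The sketch below computes parallel efficiency from per-worker compute times; the timings are hypothetical.

```python
from statistics import mean


def sync_step_efficiency(worker_times_s: list[float]) -> float:
    """Fraction of aggregate GPU time spent on useful work in a synchronous step.

    The step finishes only when the slowest worker does, so every faster
    worker sits idle for (slowest - its own time).
    """
    slowest = max(worker_times_s)
    return mean(worker_times_s) / slowest


if __name__ == "__main__":
    balanced = [1.0] * 8
    skewed = [1.0] * 7 + [2.0]  # hypothetical: one straggler takes twice as long
    print(f"balanced: {sync_step_efficiency(balanced):.0%}")
    print(f"skewed:   {sync_step_efficiency(skewed):.0%}")
```

In the skewed case a single straggler leaves the other seven GPUs idle for half of every step, which is why balancing work across nodes matters as much as adding nodes.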

Enterprise Cost Impacts

These benchmarks also show that scaling limitations directly affect an organization’s cost structure. If an organization invests capital in additional infrastructure that fails to deliver a proportional performance increase, its total cost of ownership rises sharply relative to the performance it gains.

Organizations funding multi-GPU clusters must now scrutinize the return on the capital spent to build them. Inefficient scaling drives up operational costs and lengthens the time it takes to build and deliver AI solutions.
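The cost effect comes down to simple arithmetic. Assume a job needs a fixed amount of useful work, measured in GPU-hours at perfect scaling, and that a cluster achieves some scaling efficiency below 100%; the job then consumes work / efficiency GPU-hours, all of which are paid for. The job size, price, and efficiency values below are hypothetical.

```python
def job_cost(useful_gpu_hours: float, n_gpus: int, efficiency: float,
             price_per_gpu_hour: float) -> tuple[float, float]:
    """Return (wall-clock hours, total cost) for a job at a given scaling efficiency.

    efficiency is the fraction of ideal linear speedup actually achieved.
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    billed_gpu_hours = useful_gpu_hours / efficiency  # idle and overhead time is still billed
    wall_clock_hours = billed_gpu_hours / n_gpus
    return wall_clock_hours, billed_gpu_hours * price_per_gpu_hour


if __name__ == "__main__":
    # Hypothetical job: 10,000 useful GPU-hours at $3.00 per GPU-hour.
    for n, eff in ((64, 0.9), (1024, 0.5)):
        hours, cost = job_cost(10_000, n, eff, 3.0)
        print(f"{n:5d} GPUs @ {eff:.0%} efficiency: {hours:7.1f} h, ${cost:,.0f}")
```

In this example the larger cluster finishes almost nine times sooner but costs 1.8 times as much for the same useful work. The efficiency figures are assumptions; in practice they would come from measured scaling curves.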

This gives organizations a compelling reason to adopt better architectural designs and improve their existing infrastructure rather than continually adding hardware.

Moving Towards Intelligent Infrastructure Design

The results indicate that the industry is shifting from brute-force scaling to more intelligent infrastructure design. Companies are now investing time and resources in:

- Optimizing workloads for the hardware they run on

- Improving data transfer efficiency

- Combining different architectures to distribute workloads more efficiently
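One widely used way to improve transfer efficiency is to overlap communication with computation, for example by sending gradients for layers that have finished their backward pass while the remaining layers are still computing. The sketch below compares an idealized, fully overlapped step with a sequential one; it assumes perfect overlap and hypothetical timings, so real gains will be smaller.

```python
def step_time_with_overlap(compute_s: float, comm_s: float, overlap: bool) -> float:
    """Per-step time with communication either serialized or fully overlapped.

    Full overlap is an idealization: the step takes as long as the longer
    of the two activities, and any excess communication remains exposed.
    """
    return max(compute_s, comm_s) if overlap else compute_s + comm_s


if __name__ == "__main__":
    compute_s, comm_s = 0.80, 0.30  # hypothetical per-step timings in seconds
    serial = step_time_with_overlap(compute_s, comm_s, overlap=False)
    overlapped = step_time_with_overlap(compute_s, comm_s, overlap=True)
    print(f"sequential: {serial:.2f} s, overlapped: {overlapped:.2f} s, "
          f"saving {1 - overlapped / serial:.0%}")
```

When communication takes longer than computation, overlap can hide only part of it, so faster transfers and overlap complement each other rather than substitute for one another.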

The emergence of technologies such as NVLink will accelerate this shift.

Conclusion

In the future, AI infrastructure will likely rely heavily on a mix of high-quality hardware, improved architecture, and smarter resource management.

Instead of simply enlarging GPU clusters, businesses will need a deliberate strategy for scaling efficiently. Examples include:

- Distributing work across processors using appropriate distributed computing models

- Deploying specialized hardware for the different tasks an organization runs

- Improving communication and networking capabilities

NVIDIA Rubin’s insights into AI scaling highlight an urgent issue for the industry. AI scaling can no longer be treated purely as a development challenge; it must be approached strategically, with innovation playing a central role.