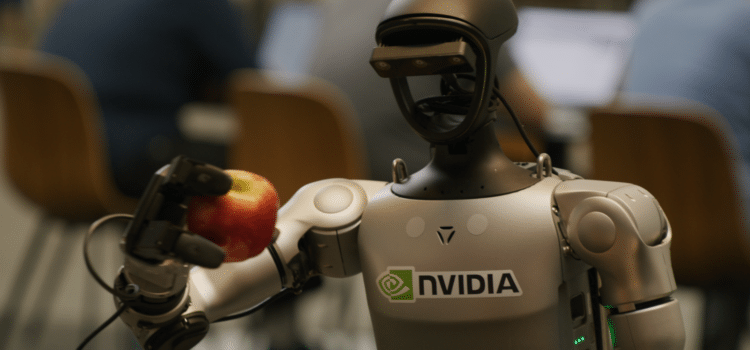

NVIDIA is launching a unified platform for building intelligent home and humanoid robots.

At the center of this platform is the Isaac GR00T N1 foundation model, which is designed to help robots reason, learn, and act more like humans.

Announced across GTC and CES events in 2025 and 2026, these updates are meant to help robots go beyond basic tasks and manage complex, changing household chores.

Main Features of NVIDIA’s Robot AI System

- The Isaac GR00T N1 model is the first open, fully customizable foundation model for general humanoid reasoning and skills, and it serves as the robot’s primary control system.

- The robot AI features two subsystems: one for fast, instinctive responses similar to immediate human reflexes and another for more considered, deliberate decision-making, akin to human logical thinking.

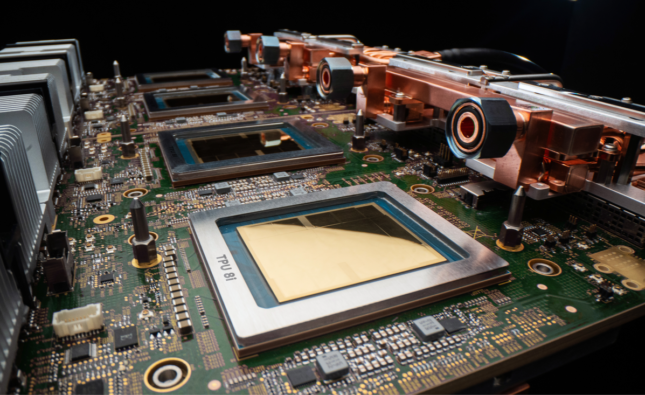

- The Jetson Thor Computing Platform is dedicated to powering humanoid robots; it leverages the Blackwell GPU to deliver the processing power needed to run complex AI models on the robot itself, enabling real-time interaction and decision-making.

- Isaac Lab (built on the Isaac Sim simulator) is a virtual training environment where robots practice new skills in simulated digital worlds before using them in real life, helping ensure better performance and safety.

- The Newton Physics Engine is designed to improve physical realism; it is being developed with Google DeepMind and Disney Research to improve a robot’s ability to accurately interact with and manipulate objects in its environment.

With these features established, we can now consider what future home robots will be able to accomplish.

With the new AI system, home robots will be able to:

- Understand and act: follow complex instructions like “tidy the house” and work out the steps needed to complete the task without being programmed for every single movement, using behaviors learned from humans through the safety-focused GR00T Blueprint.

- Learn by observation: acquire new skills by watching humans perform tasks, and use large amounts of generated training data to speed up learning and adapt to new environments.

- Generalize: cope with new situations such as handling unfamiliar objects, navigating unknown areas, and managing multi-step tasks.

Industry Adoption

NVIDIA is partnering with numerous firms to deploy these technologies, including 1X Technologies, Figure AI, Boston Dynamics, Agility Robotics, and LG Electronics. These partners are using Jetson Thor and Isaac GR00T to develop robots for both consumer and industrial applications.

Through this broader collaboration, the goal is to move from specialized robotic arms to general-purpose humanoid robots that can work safely alongside humans in homes and factories.

NVIDIA has introduced a range of new technologies to boost the development of humanoid robots. This includes NVIDIA Isaac GR00T N1, the world’s first open, fully customizable foundation model for general-purpose humanoid reasoning and skills.

Other technologies in the lineup include simulation frameworks and blueprints, such as the NVIDIA Isaac GR00T Blueprint, which helps generate synthetic data. There is also Newton, an open-source physics engine being developed with Google DeepMind and Disney Research specifically for building robots. GR00T N1 is the first in a series of customizable models that NVIDIA will pretrain and make available to developers, with the aim of helping industries affected by global labor shortages.

“The age of generalist robotics is here,” said Jensen Huang, founder and CEO of NVIDIA. “With NVIDIA Isaac GR00T N1 and new data-generation and robot learning frameworks, robotics developers everywhere will open the next frontier in the age of AI.”

Looking closer at how GR00T N1 supports the developer ecosystem, we see distinct advances for the community.

The GR00T N1 foundation model uses a dual-system design inspired by how people think. System 1 acts quickly, like human reflexes or intuition. System 2 takes a slower, more careful approach for deliberate decision-making.

System 2 uses a vision-language model, an AI model that connects images and text, to understand its surroundings and instructions and then plan actions. System 1 takes these plans and turns them into robot movements. It learns from both human demonstration data and synthetic data generated with NVIDIA Omniverse (a simulation software suite).
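The division of labor between the two systems can be illustrated with a small toy sketch. This is not NVIDIA's implementation or API: the function names `system2_plan` and `system1_act`, the string-based "plan", and the fixed number of motor commands per step are all hypothetical stand-ins chosen for illustration. The only thing taken from the description above is the structure: a slow planner produces steps, and a fast policy expands each step into low-level actions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Plan:
    """High-level plan produced by the slow, deliberate 'System 2' stage."""
    steps: List[str]


def system2_plan(instruction: str, scene_objects: List[str]) -> Plan:
    """Hypothetical stand-in for the vision-language model.

    A real System 2 would interpret camera images and text; here we only
    pattern-match the instruction and list the objects that were 'seen'.
    """
    if "tidy" in instruction.lower():
        steps: List[str] = []
        for obj in scene_objects:
            steps.append(f"pick up {obj}")
            steps.append(f"put away {obj}")
        return Plan(steps)
    # Fallback: treat the whole instruction as a single step.
    return Plan([instruction])


def system1_act(step: str, n_ticks: int = 3) -> List[str]:
    """Hypothetical stand-in for the fast action model.

    Expands one plan step into several low-level motor commands,
    one per control tick.
    """
    return [f"{step} :: motor_cmd_{t}" for t in range(n_ticks)]


def run(instruction: str, scene_objects: List[str]) -> List[str]:
    """Plan once with System 2, then execute each step with System 1."""
    plan = system2_plan(instruction, scene_objects)
    commands: List[str] = []
    for step in plan.steps:
        commands.extend(system1_act(step))
    return commands


commands = run("Tidy the table", ["cup", "book"])
for cmd in commands:
    print(cmd)
```

In this toy version the planner runs once per instruction while the action stage runs once per control tick, which mirrors the slow/fast split described above in a very simplified way.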

GR00T N1 can handle a variety of common tasks, such as grasping, moving objects with one or both arms, and passing items from one arm to the other. It can also manage complex multi-step tasks that need a longer context and a mix of skills. These abilities are useful for tasks such as material handling, packaging, and inspection.

Developers and researchers can further adapt GR00T N1 for specific robots or tasks using real or synthetic data.

During his GTC keynote, Huang showed 1X’s humanoid robot performing household tidying tasks autonomously using a policy trained with GR00T N1. This demonstration resulted from an AI training partnership between 1X and NVIDIA, highlighting how GR00T N1 enables 1X robots to gain autonomy in complex tasks.

“The future of humanoids is about adaptability and learning,” said Bernt Børnich, CEO of 1X Technologies. “While we develop our own models, NVIDIA’s GR00T N1 provides a significant boost to robot reasoning and skills. With minimal post-training data, we fully deployed on NEO Gamma, advancing our mission of creating robots that are more than tools: companions capable of assisting humans in valuable, immeasurable ways.”

Other top humanoid developers with early access to GR00T N1 include Agility Robotics, Boston Dynamics, Mentee Robotics, and NEURA Robotics. Early access lets these companies accelerate the development and testing of humanoid capabilities using NVIDIA’s technology.

NVIDIA, Google DeepMind, and Disney Research Focus on Physics

NVIDIA is working with Google DeepMind and Disney Research to create Newton, an open-source physics engine. Newton will help robots learn to handle complex tasks more accurately.

Newton is built on the NVIDIA Warp framework and will be optimized for robot learning. It is intended to work with simulation tools such as Google DeepMind’s MuJoCo and NVIDIA Isaac Sim. The companies also plan to let Newton use Disney’s physics engine, which should advance robotic simulation accuracy across partner platforms.

Google DeepMind and NVIDIA are also working together on MuJoCo-Warp, which is expected to speed up robotics machine learning workloads by more than 70 times. It will be available to developers through Google DeepMind’s open-source MJX library, giving them faster access to advanced simulation for robot training.

Disney Research will be among the first to use Newton to advance its robotic character platform, powering next‑generation entertainment robots such as the expressive Star Wars–inspired BDX droids that joined Huang on stage during his GTC keynote.

“The BDX droids are just the beginning. We’re committed to bringing more characters alive in ways the world hasn’t seen before, and this cooperation with Disney Research, NVIDIA, and Google DeepMind is a key part of that vision,” said Kyle Laughlin, senior vice president at Walt Disney Imagineering Research and Development. “This alliance will allow us to create a new generation of robotic characters that are more expressive and engaging than ever before and connect with our guests in ways that only Disney can.”

NVIDIA, Disney Research, and Intrinsic have also announced a new partnership to develop OpenUSD pipelines and best practices for robotics data workflows, intended to make data management among robotics partners more efficient and interoperable.

More Data to Advance Robotics Post-Training

Robots need large, varied, and high-quality datasets to learn from, but collecting this data is expensive for humanoid robots. Real human demonstration data is limited by how much a person can do in a day.

To help solve this problem, NVIDIA announced the Isaac GR00T Blueprint for synthetic manipulation motion generation, built on Omniverse and NVIDIA Cosmos Transfer world foundation models. This blueprint enables developers to generate large amounts of synthetic motion data for manipulation tasks using only a few human examples.

With the first parts of the blueprint, NVIDIA created 780,000 synthetic trajectories in just 11 hours. That is equivalent to 6,500 hours, or about nine consecutive months, of human demonstration data. By mixing this synthetic data with real data, NVIDIA improved GR00T N1’s performance by 40% compared to using only real data.
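The figures above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the three numbers quoted in the text (780,000 trajectories, 6,500 equivalent hours, 11 hours of generation). The conversion to months assumes round-the-clock demonstration and a 30-day month, which is an assumption made here for illustration rather than something the source states.

```python
# Figures quoted in the article.
trajectories = 780_000
equivalent_human_hours = 6_500
generation_hours = 11

# Implied average length of one demonstration trajectory, in seconds.
seconds_per_trajectory = equivalent_human_hours * 3600 / trajectories

# Convert the human-equivalent time to months.
# Assumption: continuous 24-hour days and 30-day months.
equivalent_days = equivalent_human_hours / 24
equivalent_months = equivalent_days / 30

# How much faster synthetic generation was than the equivalent human effort.
speedup = equivalent_human_hours / generation_hours

print(f"seconds per trajectory: {seconds_per_trajectory:.1f}")
print(f"equivalent months:      {equivalent_months:.2f}")
print(f"speedup vs. human time: {speedup:.0f}x")
```

Under these assumptions each trajectory averages about 30 seconds, the 6,500 hours come to roughly nine months of nonstop demonstration, and the synthetic pipeline produced data several hundred times faster than real time.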

To provide better training data, NVIDIA is releasing the GR00T N1 dataset as part of a larger open-source physical AI dataset now available on Hugging Face.

Availability

The NVIDIA GR00T N1 training data (robot learning data) and evaluation scenarios can now be accessed on Hugging Face and GitHub, two online platforms for sharing software and datasets. The NVIDIA Isaac GR00T Blueprint is available as an interactive demonstration on build.nvidia.com and as a downloadable resource on GitHub.