Houston, TX

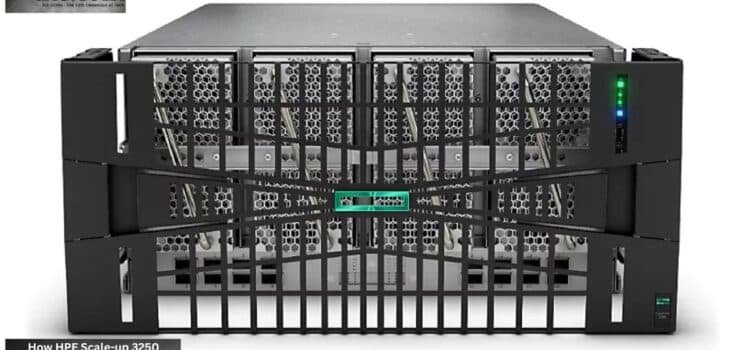

Atomic answer: The Hewlett Packard Enterprise (HPE) Compute Scale-up Server 3250 is the first server system validated for SAP HANA with a 48 TB memory floor. It lets corporations run heavy-duty agent-based AI applications on-premises entirely in memory, bypassing the 10,000-nanosecond lag present in conventional scale-out architectures.

AI competition no longer revolves solely around better GPUs or bigger language models. The battle is now about memory architecture, and Hewlett Packard Enterprise is taking direct aim at this shift. The HPE Compute Scale-up Server 3250, announced at the end of last year, is a new server architecture tailored to the demands of extremely fast real-time AI analytics.

The product arrives as enterprises struggle with existing scale-out infrastructure. Traditional architectures built on standard Ethernet are often plagued by latency that degrades AI inference, real-time analytics, and autonomous enterprise processes. The HPE Compute Scale-up 3250 is instead designed to keep everything in memory, avoiding many of the latency problems these workloads encounter.

The significance of the new offering lies in particular in its certification for SAP HANA deployments with a 48 TB memory floor. This 48 TB in-memory server architecture for agentic AI workloads is prompting enterprises to rethink how they deploy large-scale AI environments.

The development of autonomous AI solutions, digital twins, predictive analytics, and enterprise copilots demands low-latency infrastructure.

Why In-Memory AI Databases Are Important

Today's organizations generate massive amounts of operational data every moment. Traditional storage-based systems cannot always analyze that data quickly enough to support instantaneous decision-making, which is why in-memory AI databases matter.

This is where the 48 TB in-memory server for agentic AI workloads becomes especially valuable for enterprise-scale AI tasks. By keeping data in memory, it minimizes the time required to analyze that data and thus speeds up the inference cycle.

Advantages include:

- Increased speed in enterprise analytics

- Lower latency in AI workflows

- Enhanced operational forecasting capabilities

- Instantaneous decision-making

- Greater scalability with AI implementation

In fields such as finance, logistics, health care, and manufacturing, response time can make a significant difference. That makes the HPE 3250 launch relevant to any discussion of enterprise AI ROI, since organizations increasingly judge AI investments by the productivity gains they deliver.

SAP HANA Validation Transforms Enterprise Procurement Operations

One of the most significant aspects of the release is the system’s validation for SAP HANA. Many global enterprises already rely on the SAP ecosystem for vital operations, and an established scale-up platform makes it much simpler to bring AI capabilities into those workflows.

This matters because businesses are increasingly integrating AI into their ERP, logistics, procurement, and finance platforms. In-memory execution eliminates the delays associated with transferring data between different computing environments.

The platform can also be combined with RISE with SAP deployments, making it more convenient to move between cloud and on-premises environments.

Advantages for enterprises using this platform include:

- Easier hybrid cloud deployment

- Lower latency of operation

- Superior AI workload orchestration

- Less complex data handling

- Enhanced transactional analysis at scale

Switching over to this solution is significantly simpler for businesses that already use HPE infrastructure.

Intel Xeon 6 Boosts Scale-Up Performance

The inclusion of Intel Xeon 6 processors is another factor in the system’s performance. Modern enterprise-scale AI applications require balanced optimization of compute and memory rather than reliance on GPUs alone.

The new generation of processors is aimed at enterprise workloads that prioritize density, memory performance, and robustness. In a 16-socket configuration, the system can reach 64 TB of DDR5 memory capacity.

As a result, enterprises will be able to:

- Operate large AI models in memory

- Boost AI inferencing performance

- Work in complex simulation environments

- Avoid infrastructure fragmentation

- Optimize enterprise data throughput

Moreover, the 100 ns latency cited for the HPE 3250 allows enterprises to process large datasets for real-time AI analytics more efficiently while reducing the network delays that typically slow down AI-driven decisions.
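The latency and memory figures quoted in this article can be put side by side with some simple arithmetic. The sketch below is an illustration only: the 10,000 ns, 100 ns, 16-socket, and 64 TB values come from the text, while `ACCESSES_PER_DECISION` and the helper `total_latency_ms` are hypothetical. The model also assumes every data access is sequential and pays the full quoted latency, which real workloads will not match exactly.

```python
# Figures quoted in the article.
SCALE_OUT_LATENCY_NS = 10_000  # lag attributed to Ethernet scale-out designs
SCALE_UP_LATENCY_NS = 100      # latency attributed to the scale-up platform
TOTAL_MEMORY_TB = 64           # full DDR5 capacity
SOCKETS = 16                   # sockets needed to reach that capacity

# Assumption for illustration only: the number of dependent data accesses
# an AI agent makes for one decision. This is not a measured value.
ACCESSES_PER_DECISION = 1_000_000


def total_latency_ms(per_access_ns: int, accesses: int) -> float:
    """Total time in milliseconds if each access waits for the previous one."""
    return per_access_ns * accesses / 1_000_000


speedup = SCALE_OUT_LATENCY_NS / SCALE_UP_LATENCY_NS
scale_out_ms = total_latency_ms(SCALE_OUT_LATENCY_NS, ACCESSES_PER_DECISION)
scale_up_ms = total_latency_ms(SCALE_UP_LATENCY_NS, ACCESSES_PER_DECISION)
memory_per_socket_tb = TOTAL_MEMORY_TB / SOCKETS

print(f"Per-access latency ratio: {speedup:.0f}x")
print(f"Scale-out time per decision: {scale_out_ms:.0f} ms")
print(f"Scale-up time per decision: {scale_up_ms:.0f} ms")
print(f"Memory per socket: {memory_per_socket_tb:.0f} TB")
```

Under these assumptions, the quoted figures imply a 100x difference per access and 4 TB of memory per socket. The per-decision totals scale linearly with whatever access count is assumed.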

Enterprise Data Center Density as Competitive Indicator

The rise in artificial intelligence has placed unprecedented demands on corporate infrastructure for cooling, rack density, and electricity use. Enterprise data center density is increasingly becoming a key criterion as companies implement AI at scale.

While a traditional setup typically requires many distributed nodes to handle large-scale AI operations, scale-up solutions such as the HPE platform let companies consolidate large memory requirements onto fewer machines. The 48 TB in-memory server model significantly reduces the need for network communication between separate systems.

Some of the advantages of this include:

- Simpler network setup

- Lower latency across nodes

- Better rack efficiency

- Effective heat dissipation

- Ease of maintenance

As more companies adopt enterprise agentic AI systems, it seems inevitable that processing large datasets through in-memory computation will be a key competitive advantage.

Security and Financial Strategy

HPE has also focused on security, deploying silicon root-of-trust technology and implementing post-quantum cryptography. With cyber risks constantly emerging, platforms increasingly need to offer long-term cryptographic support.

Cost, on the other hand, remains a significant challenge: the upfront investment in infrastructure for large-scale AI is a barrier to implementation.

To mitigate this problem, HPE offers the “90/9 Advantage,” which allows delayed payments in exchange for faster deployment times. At the same time, businesses using RISE with SAP hybrid cloud deployments of the 3250 can scale AI workloads more flexibly across hybrid environments.

Conclusion

The introduction of the HPE Compute Scale-up 3250 marks a shift in the approach to designing enterprise AI infrastructure. Rather than relying solely on distributed systems, organizations increasingly prefer scale-up systems for effective in-memory AI computation.

With its support for in-memory AI databases, SAP certification, Intel Xeon 6 capabilities, and high-density data center design, the platform is a strong candidate for future enterprise AI computing. Enterprises evaluating infrastructure investments are also increasingly asking how the 48 TB memory floor of the HPE Compute Scale-up 3250 eliminates 10,000-nanosecond Ethernet latency for enterprise agentic AI workloads on SAP HANA.

With the rapid adoption of AI across industries, future success will depend on proper infrastructure planning.

Enterprise Procurement Checklist

- Procurement Logic: Prioritize scale-up architecture for real-time AI analytics where 100ns latency is a competitive requirement.

- Operational Advantage: Post-quantum cryptography is natively embedded in the silicon root of trust for all 3250 nodes.

- Infrastructure Constraint: Requires a 16-socket configuration to achieve the full 64TB DDR5 memory capacity.

- Deployment Impact: Optimized for “RISE with SAP” deployments, streamlining hybrid transitions between cloud and on-premises environments.

- Financial Consequence: High upfront CapEx is mitigated by HPE’s “90/9 Advantage” financing, offering 0 payments for 90 days.

Source: HP News