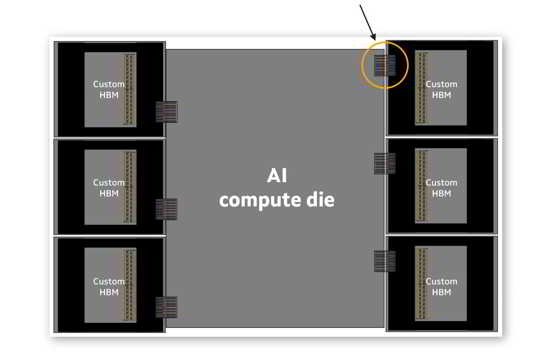

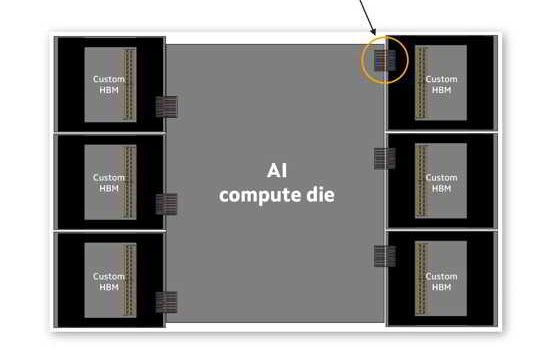

SANTA CLARA, Calif.: A quiet shift appears to be under way in the US cloud ecosystem, centered on an efficiency-focused engineering update to Marvell's 2026 AI accelerator chip that may change how hyperscalers architect their computing systems. With competition intensifying over both performance and cost, a move towards efficiency appears inevitable. Here, the efficiency comes from purpose-built AI accelerators that can complete inference workloads faster and with less energy than general-purpose GPUs. The approach lets cloud providers tune hardware to specific workloads without wasting compute.

Efficiency Takes the Lead

With the current engineering update, cloud infrastructure built on custom AI chips can run specific workloads more efficiently. Rather than simply scaling out, cloud providers are learning to scale efficiently.

Among the most notable updates are:

- Enhanced inference acceleration in data center chips

- Energy-efficient chip deployment per task

- Real-time processing at lower latency levels

- Cost-effective deployments on massive scales

Influence on Hyperscaler Strategy

Currently, the big cloud players are shifting their AI inference strategy towards domain-specific silicon as an alternative to GPUs. The update reflects a broader industry trend: custom silicon is increasingly seen not only as a complement to GPU clusters but also as an alternative to them.

- Higher adoption of custom silicon among US-based cloud vendors

- Decreased dependence on GPU-focused architecture

- Increased adaptability of load distribution

In doing so, Marvell's 2026 AI accelerator chip offers another option in a market dominated by GPU suppliers.

Industry-wide Competitive Impact

The ripple effect of this update has already begun to manifest. While NVIDIA continues to lead in training applications, inference optimization is emerging as the next frontier of efficient cloud infrastructures.

- US cloud vendors are fast-tracking the adoption of customized silicon solutions

- GPU suppliers face challenges in inference-focused workloads

- AI-accelerated hardware is becoming more popular for budget-oriented applications

As cloud providers diversify their AI chips in pursuit of cost efficiency, performance per dollar is likely to become a key competitive metric.
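To make the performance-per-dollar idea concrete, here is a minimal sketch of how such a metric might be computed and used to rank deployments. The `Deployment` type, its fields, and the helper names are hypothetical, introduced only for illustration; they do not come from Marvell or any cloud vendor, and any figures fed into them would need to come from real measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """Hypothetical description of one inference deployment option."""
    name: str
    inferences_per_second: float  # measured throughput (assumed input)
    hourly_cost_usd: float        # all-in hourly cost, e.g. hardware plus power (assumed input)


def perf_per_dollar(d: Deployment) -> float:
    """Return the number of inferences completed per US dollar spent."""
    if d.hourly_cost_usd <= 0:
        raise ValueError("hourly cost must be positive")
    # Throughput per hour divided by cost per hour.
    return d.inferences_per_second * 3600 / d.hourly_cost_usd


def rank_by_perf_per_dollar(deployments: list[Deployment]) -> list[Deployment]:
    """Sort deployments from best to worst performance per dollar."""
    return sorted(deployments, key=perf_per_dollar, reverse=True)
```

Under this definition, an option with lower raw throughput can still rank first if its hourly cost is proportionally lower, which is the trade-off the metric is meant to expose.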

Cost Efficiency Takes Center Stage

Among the most important consequences of this development is its impact on costs. AI inference tasks require solutions that are scalable yet cost-efficient. Integrating Marvell's 2026 AI accelerator chip could let cloud providers reduce costs without sacrificing performance.

In terms of cost efficiency:

- Reduced power usage increases margins.

- Silicon optimization eliminates unnecessary hardware.

- Efficient AI scaling avoids costly infrastructure redundancy.

This sets the stage for specialized processors to become a major factor in the economics of next-generation cloud infrastructure.

The Future Direction of Cloud Infrastructure Design

Going forward, cloud computing is expected to migrate towards hybrid compute architectures that combine GPU and accelerator hardware. This would allow cloud providers to select the most efficient compute unit for each workload's requirements.
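As a rough illustration of that selection step, the sketch below picks the cheapest compute unit that satisfies a workload's constraints. All types, fields, and selection criteria here are assumptions made for the example; the source does not describe how any provider actually schedules workloads.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ComputeUnit:
    """Hypothetical compute option in a hybrid GPU/accelerator pool."""
    name: str
    supports_training: bool
    latency_ms: float                 # typical per-request latency (assumed input)
    cost_per_1k_requests_usd: float   # assumed cost figure


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload description."""
    name: str
    is_training: bool
    max_latency_ms: float


def select_unit(workload: Workload, units: list[ComputeUnit]) -> Optional[ComputeUnit]:
    """Return the cheapest unit that meets the workload's needs, or None."""
    candidates = [
        u for u in units
        # Training workloads need a training-capable unit; inference can run anywhere.
        if (u.supports_training or not workload.is_training)
        and u.latency_ms <= workload.max_latency_ms
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda u: u.cost_per_1k_requests_usd)
```

In this sketch, an inference workload would land on an accelerator whenever it is both fast enough and cheaper, while training would fall back to the GPU pool.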

Projected future trends include:

- Increased use of custom processors in data centers across hyperscale networks

- Experimentation with hybrid computing stacks

- Fast-evolving design philosophies among hyperscalers seeking GPU alternatives for AI inference

With the growing role of AI accelerators, cloud infrastructure is likely to be defined by cost efficiency rather than scale alone in the future.

Cross-Manufacturer Ripple Effects

In addition to the direct consequences for Marvell’s own offerings, we should consider the implications of the update for competitors and other market actors.

- NVIDIA encounters increasing competition for its inference-oriented deployments

- Hyperscale clouds continue developing their custom silicon strategies

- AWS and Google broaden their efforts in in-house chip design

- Manufacturers drive developments in cloud hardware performance benchmarks

In light of such initiatives to rethink hyperscale infrastructure, the availability of Marvell AI chips allows companies to build flexible hybrid architectures.

Economics of Cloud Infrastructure

One of the most prominent implications of the update is cost-efficiency. To effectively support AI computations, cloud operators require a scalable, yet highly optimized, architecture. The introduction of a specialized processor from Marvell allows for a different approach to the economics of cloud buildout.

These advantages can be listed as follows:

- Minimized operational expenses due to efficient computing

- Energy savings by optimizing the power consumption of data center processors

- Efficient use of the computing resource without over-provisioning

- Improved scaling strategies for cloud AI applications

Conclusion

The recent update to Marvell's AI chip technology highlights an emerging trend in cloud infrastructure development. Thanks to AI accelerators, infrastructure design is focusing less on the raw power of its components and much more on specialization for specific workloads.

Following this trend, hyperscalers should expect increasing demand for AI scaling and optimal cloud hardware. The impact of these developments on innovation in data center chips and market competition suggests this change may become permanent.

Overall, this technology represents an early step towards a more productive and efficient future for US cloud infrastructure.

Source: Marvell Newsroom