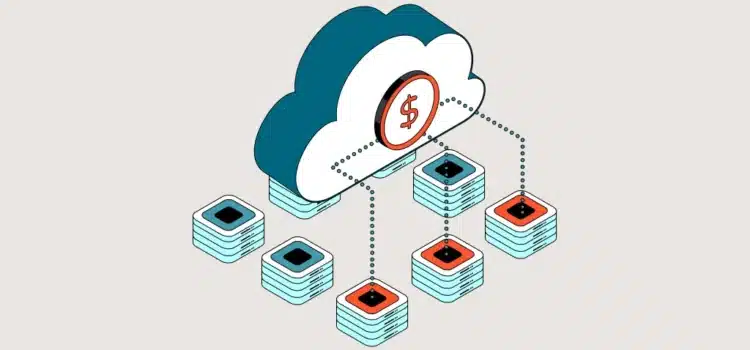

Mountain View, Calif.: A software provider handling 10,000 user requests each month suddenly sees operational costs jump by 40%. This sharp increase shows how Google Gemini pricing affects US SaaS companies that use large language models. With new spending caps and changing token prices, founders have to rethink their basic cost assumptions. Companies building features on the Google Gemini API now face the risk that even small changes in token usage can affect their profits. As teams grow their user base, adapting to this new AI SaaS pricing model is essential for long-term success.

The Economics of Token Consumption

The shift in billing tiers and token-based charges forces a complete rethink of AI SaaS pricing. Previously, development teams relied on predictable flat-rate tiers for their infrastructure needs. Now, they must track the exact number of reasoning tokens and the cache sizes consumed by every user prompt. When software architects link their platforms to the Google Gemini API, they see significant cost swings, because the new system charges for both internal reasoning tokens and the output users see. For example, a customer support platform using advanced reasoning models may find its AI API costs almost double when handling long conversations.
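To see how reasoning tokens can nearly double a bill, here is a minimal sketch of the per-request arithmetic. The rates are placeholder numbers chosen for illustration, not Google's price list, and the sketch assumes reasoning tokens are billed at the same rate as visible output. The names `Rates` and `request_cost` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Hypothetical USD prices per one million tokens (not real Gemini rates)."""
    input_per_m: float
    output_per_m: float  # assumed to apply to reasoning tokens as well


def request_cost(rates: Rates, input_tokens: int,
                 thinking_tokens: int, visible_output_tokens: int) -> float:
    """Cost of one request when reasoning tokens are billed like output."""
    billed_output = thinking_tokens + visible_output_tokens
    total = (input_tokens * rates.input_per_m
             + billed_output * rates.output_per_m)
    return total / 1_000_000


# Placeholder rates: $1 per million input tokens, $10 per million output tokens.
rates = Rates(input_per_m=1.0, output_per_m=10.0)

without_thinking = request_cost(rates, 2_000, 0, 500)
with_thinking = request_cost(rates, 2_000, 600, 500)
increase = with_thinking / without_thinking  # roughly 1.86x under these numbers
```

Under these assumed numbers, 600 hidden reasoning tokens raise the cost of an otherwise identical request by about 86%, even though the user sees the same 500-token answer.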

Managing Variable Expenditures

Consider a project management tool that summarizes weekly meeting transcripts. The platform processes approximately two million input tokens daily. Because standard inference and thinking tokens are priced differently, its expenses swing from day to day. To maintain a profitable AI SaaS pricing model, product managers must pass these fluctuating AI API costs on to end users through tiered subscription packages.
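The pass-through calculation can be sketched in a few lines. The two million daily tokens come from the example above; the per-token price and the target gross margin are assumptions picked only to keep the arithmetic easy to follow, and both function names are hypothetical.

```python
def monthly_token_cost(tokens_per_day: int, price_per_m: float,
                       days: int = 30) -> float:
    """Monthly spend in USD for a steady daily token volume."""
    return tokens_per_day * days * price_per_m / 1_000_000


def price_for_margin(cost: float, target_gross_margin: float) -> float:
    """Revenue needed so that `cost` leaves the target gross margin."""
    if not 0 <= target_gross_margin < 1:
        raise ValueError("target_gross_margin must be in [0, 1)")
    return cost / (1 - target_gross_margin)


# Assumed price: $1.25 per million input tokens (placeholder, not a quoted rate).
api_cost = monthly_token_cost(2_000_000, price_per_m=1.25)

# Assumed target: 75% gross margin on the AI feature.
required_revenue = price_for_margin(api_cost, target_gross_margin=0.75)
```

With these placeholder inputs the feature costs $75 a month to run and needs $300 in subscription revenue to hold the margin. Rerunning the calculation with a higher token price shows how quickly a tier's price has to move.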

The Architecture Of Scaled Features

Using advanced models means companies have to control costs for every query. They can’t afford to send unoptimized data. For example, a SaaS platform that analyzes the same documents repeatedly should cache them instead of resending the full text with each request.

When implementing SaaS AI integration, developers often forget to separate the context storage from the input payload. Storing large documents can easily push usage beyond the tier-one limit of $250 per month, triggering account locks during high-traffic periods.

Balancing Performance And Cost

Imagine an accounting software company that scans quarterly tax reports, processing hundreds of pages for each client. If it sticks to the tier one limits of the Google Gemini API, a spike in user queries can use up the monthly budget before the month ends. The team then has to use context caching to avoid paying full input prices for the same documents on every query. While caching reduces ongoing input costs, the upfront storage costs still mean the company needs a steady flow of customers to remain profitable. Moreover, the need for robust enterprise AI pricing models requires a different approach to margins. When B2B clients demand high-volume data processing, the SaaS provider must establish usage-based add-ons. Without these add-ons, SaaS AI integration erodes the host company’s gross margins.
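The trade-off between storage fees and cheaper repeated input can be estimated with a break-even calculation. The sketch below assumes a billing structure with three rates: full-price input, a discounted rate for cached input, and an hourly storage fee per million cached tokens. All three figures are placeholders, and the function names are hypothetical.

```python
import math


def cost_without_cache(doc_tokens: int, queries: int, input_per_m: float) -> float:
    """Every query resends the whole document at the full input rate."""
    return doc_tokens * queries * input_per_m / 1_000_000


def cost_with_cache(doc_tokens: int, queries: int, hours: float,
                    input_per_m: float, cached_per_m: float,
                    storage_per_m_hour: float) -> float:
    """One full-price upload, discounted reads, plus hourly storage."""
    upload = doc_tokens * input_per_m
    reads = doc_tokens * queries * cached_per_m
    storage = doc_tokens * hours * storage_per_m_hour
    return (upload + reads + storage) / 1_000_000


def break_even_queries(hours: float, input_per_m: float,
                       cached_per_m: float, storage_per_m_hour: float) -> int:
    """Smallest query count at which caching is strictly cheaper."""
    threshold = (input_per_m + hours * storage_per_m_hour) / (input_per_m - cached_per_m)
    return math.floor(threshold) + 1


# Placeholder rates per million tokens: $1.00 input, $0.25 cached, $1.00 per hour stored.
needed = break_even_queries(hours=24, input_per_m=1.0,
                            cached_per_m=0.25, storage_per_m_hour=1.0)
```

Under these assumptions a document cached for 24 hours has to be queried 34 times before caching pays for itself, which is why a thin or irregular stream of customers can make the cache a net cost.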

Market Dynamics And Tool Selection

As models change, companies have to look at other infrastructure options. With older preview models now retired, developers need to update parts of their code to use the new endpoints.

Using the latest Google AI tools allows developers to reduce inference latency. However, these new options come with higher price tags for complex reasoning. When evaluating AI competitors, SaaS companies must balance model capabilities with operational expenditures.

Strategic Infrastructure Adjustments

Take a legal tech startup comparing model providers. It finds that Google AI tools work very well with long documents. However, the high cost per million output tokens makes them hard to use in cheaper consumer plans. Developers need to set rate limits to prevent unexpected spikes in monthly bills during busy periods. This means they constantly have to balance response quality with costs.
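One common way to enforce such a limit is a token bucket placed in front of the model call. The sketch below is a generic, single-threaded illustration, not part of any Google SDK; the class name `TokenBucket` and its parameters are hypothetical.

```python
import time


class TokenBucket:
    """Allows short bursts up to `capacity`, then `refill_per_second` on average."""

    def __init__(self, capacity: float, refill_per_second: float,
                 clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock          # injectable so the limiter can be tested
        self._level = float(capacity)
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` units if the bucket holds enough."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never above capacity.
        self._level = min(self.capacity,
                          self._level + elapsed * self.refill_per_second)
        if cost <= self._level:
            self._level -= cost
            return True
        return False


# Usage: at most 2 requests in a burst, refilling one request per second.
limiter = TokenBucket(capacity=2, refill_per_second=1.0)
if limiter.allow():
    pass  # forward the request to the model here
```

Passing an estimated token count as `cost` instead of 1 would make the same limiter cap spend rather than request count, at the price of needing a token estimate before each call.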

To manage costs, product teams use batch processing for tasks that aren’t urgent, such as nightly database checks. Sending these tasks through the batch endpoint can cut their cost by half. This way, the startup keeps its AI SaaS pricing on track and operations steady.
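The routing decision can be sketched as a simple split between urgent and deferrable work. The 50% discount reflects the "by half" figure above; the task names, cost estimates, and the `Task` and `plan_routing` identifiers are hypothetical, and no actual API call is shown.

```python
from dataclasses import dataclass

BATCH_DISCOUNT = 0.5  # taken from the article's "cut their cost by half"


@dataclass(frozen=True)
class Task:
    name: str
    estimated_cost: float  # USD at interactive (non-batch) pricing
    urgent: bool


def plan_routing(tasks: list[Task]) -> tuple[list[Task], list[Task], float]:
    """Split tasks into interactive and batch queues and estimate total spend."""
    interactive = [t for t in tasks if t.urgent]
    batch = [t for t in tasks if not t.urgent]
    total = (sum(t.estimated_cost for t in interactive)
             + sum(t.estimated_cost for t in batch) * (1 - BATCH_DISCOUNT))
    return interactive, batch, total


tasks = [
    Task("live contract Q&A", estimated_cost=4.0, urgent=True),
    Task("nightly database check", estimated_cost=10.0, urgent=False),
]
interactive, batch, total = plan_routing(tasks)
```

In this example the nightly check drops from $10 to $5, so the day's estimated spend is $9 instead of $14.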

Market Realities And Future Horizons

Enterprise clients want steady software subscription rates. If infrastructure costs rise and fall with user activity, the SaaS provider assumes the risk of reaching tier three status, with its $20,000 monthly cap. Providers need many enterprise customers just to break even.

AI competition pushes companies to keep user fees low. At the same time, the $10 minimum prepayment tiers mean small development teams have to watch their cash flow closely.

Think of a marketing automation firm that creates email copy. During busy marketing seasons, it faces unpredictable token costs. To handle enterprise AI pricing, the firm sets dynamic spending limits for each customer. This helps protect profits from sudden spikes in token use.
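A per-customer spending limit of this kind can be sketched as a small in-memory ledger. This is an illustrative design under stated assumptions: the `SpendLimiter` class is hypothetical, amounts are in USD, and a production system would also need persistent storage and a monthly reset.

```python
class SpendLimiter:
    """Tracks spend per customer and rejects charges that exceed the limit."""

    def __init__(self, default_limit: float):
        self.default_limit = default_limit
        self._limits: dict[str, float] = {}
        self._spent: dict[str, float] = {}

    def set_limit(self, customer: str, limit: float) -> None:
        """Adjust one customer's cap, for example ahead of a busy season."""
        self._limits[customer] = limit

    def try_charge(self, customer: str, amount: float) -> bool:
        """Record the charge and return True only if it fits under the cap."""
        limit = self._limits.get(customer, self.default_limit)
        used = self._spent.get(customer, 0.0)
        if used + amount > limit:
            return False
        self._spent[customer] = used + amount
        return True


limiter = SpendLimiter(default_limit=10.0)
first = limiter.try_charge("acme", 6.0)    # fits under the $10 default
second = limiter.try_charge("acme", 6.0)   # would reach $12, so it is rejected
limiter.set_limit("acme", 20.0)            # cap raised for the campaign period
third = limiter.try_charge("acme", 6.0)    # now accepted
```

Checking the limit before the model call, using an estimated cost, keeps a single customer's campaign spike from consuming the margin earned on everyone else.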

Looking ahead, successful SaaS providers will use both caching and lightweight models. The main goal will shift from pure intelligence to maximizing value per token. Companies that fine-tune their context windows will win the biggest share of the market.

Source: Cloud Next ‘26: Momentum and innovation at Google scale