AI cost advantage now depends on scaling efficiency, not model size. That shift is visible in recent NVLink benchmarks and NVIDIA AI scaling results, where improvements to the interconnect drive real gains. Instead of focusing only on model settings, organizations are now evaluating how effectively GPUs communicate. The latest upgrades from NVIDIA highlight how infrastructure decisions directly influence performance outcomes.

Once Bandwidth Becomes the Real Bottleneck

The Quiet Constraint Inside Modern Clusters

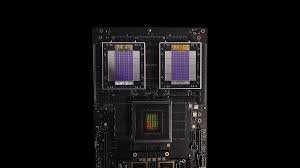

Large models need fast communication between GPUs to work well. Even with strong processors, limited bandwidth can slow down distributed training. NVIDIA’s NVLink architecture helps solve this problem.

Traditional interconnects can introduce synchronization delays. NVLink reduces these delays by enabling fast, direct transfers between GPUs. As clusters get bigger, this benefit becomes even more important.

This leads to better GPU cluster performance, especially with heavy workloads. Training tasks that used to slow down because of network delays now run more smoothly.
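To make the bandwidth effect concrete, here is a minimal sketch of the standard bandwidth-only cost model for a ring all-reduce, a common way to synchronize gradients in data-parallel training. The function name, payload size, and link speeds are illustrative assumptions, not measured NVLink figures, and the model ignores latency and protocol overhead.

```python
def ring_allreduce_seconds(num_gpus: int, payload_bytes: float,
                           bandwidth_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each GPU sends and receives 2 * (N - 1) / N times the payload,
    so the time is that volume divided by the per-GPU link bandwidth.
    """
    if num_gpus < 2:
        return 0.0  # a single GPU has nothing to synchronize
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    volume = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return volume / bandwidth_bytes_per_s


if __name__ == "__main__":
    payload = 1e9  # hypothetical: 1 GB of gradients per step
    # Hypothetical link speeds, chosen only to show a 10x gap.
    for label, bandwidth in [("slower link", 50e9), ("faster link", 500e9)]:
        t = ring_allreduce_seconds(8, payload, bandwidth)
        print(f"{label}: {t * 1000:.2f} ms per all-reduce")
```

In this model the synchronization time falls in direct proportion to link bandwidth, which is why the interconnect matters more as the gradient payload grows.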

Measuring What Actually Matters

Why NVLink Benchmark and NVIDIA AI Scaling Data Tell a Deeper Story

Benchmarks now focus more on scaling efficiency than on raw computing power alone. The latest NVLink benchmark and NVIDIA AI scaling data show how well throughput holds up as more nodes are added. This gives a better idea of real-world performance.

Scaling problems often don’t show up in small setups, but as clusters grow, network delays can offset the benefits of increased computing power. NVLink helps by keeping bandwidth steady across all GPUs.

This directly supports AI training scale optimization. Faster synchronization means fewer idle cycles and more efficient resource utilization.
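Scaling efficiency can be expressed as measured throughput divided by the throughput that perfect linear scaling would give. The sketch below shows one common way to compute it; the function name and the sample throughput numbers are hypothetical and are not taken from any published benchmark.

```python
def scaling_efficiency(base_gpus: int, base_throughput: float,
                       gpus: int, throughput: float) -> float:
    """Fraction of ideal linear scaling achieved, relative to a baseline run.

    A value of 1.0 means throughput grew exactly in proportion to GPU count.
    """
    if base_gpus <= 0 or gpus <= 0 or base_throughput <= 0:
        raise ValueError("GPU counts and baseline throughput must be positive")
    ideal = base_throughput * gpus / base_gpus
    return throughput / ideal


if __name__ == "__main__":
    # Hypothetical measurements: (GPU count, samples per second).
    runs = [(8, 1000.0), (16, 1900.0), (64, 6400.0)]
    base_gpus, base_tp = runs[0]
    for gpus, tp in runs:
        eff = scaling_efficiency(base_gpus, base_tp, gpus, tp)
        print(f"{gpus:>3} GPUs: {eff:.0%} of linear scaling")
```

Tracking this ratio across cluster sizes exposes problems that a single small-scale run hides.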

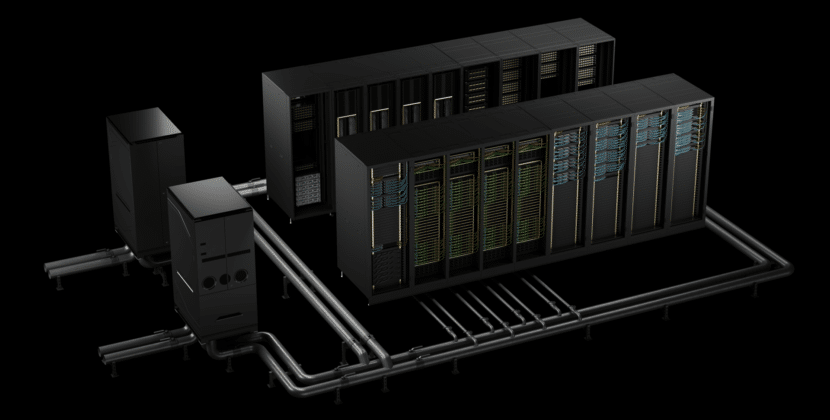

Rethinking Data Center Architectures

From Isolated GPUs to Unified Systems

Infrastructure decisions now directly alter operational budgets. Efficient scaling reduces the number of GPUs needed for a given workload. This is where AI compute cost reduction becomes achievable.

NVLink improves communication efficiency, reducing training time. Shorter training cycles mean using less energy and spending less on infrastructure.
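The link between scaling efficiency and budget can be sketched with simple arithmetic. The example below assumes a job that would take a fixed number of hours on one GPU, a flat hourly price per GPU, and a single efficiency factor for the whole run. All names and numbers are hypothetical simplifications.

```python
def training_cost(single_gpu_hours: float, num_gpus: int,
                  efficiency: float, price_per_gpu_hour: float):
    """Return (wall_clock_hours, total_cost) for a distributed run.

    efficiency is the fraction of ideal linear scaling (0 < efficiency <= 1).
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    wall_clock_hours = single_gpu_hours / (num_gpus * efficiency)
    gpu_hours = wall_clock_hours * num_gpus  # every GPU is billed for the full run
    return wall_clock_hours, gpu_hours * price_per_gpu_hour


if __name__ == "__main__":
    # Hypothetical job: 10,000 single-GPU hours, 100 GPUs, 2.00 per GPU-hour.
    for eff in (0.5, 0.8):
        hours, cost = training_cost(10_000, 100, eff, 2.0)
        print(f"efficiency {eff:.0%}: {hours:.0f} h wall clock, cost {cost:,.0f}")
```

Under these assumptions, total cost is inversely proportional to scaling efficiency, so any efficiency gained from faster communication shows up directly as fewer billed GPU-hours.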

The benefits go beyond just training. Inference tasks also benefit from faster data exchange, especially in live applications.

Training Speed Is No Longer Just About Computing

Balancing Compute Power with Communication Speed

High-performance GPUs alone cannot guarantee faster training. Communication lags often limit overall throughput. This makes AI training speed optimization a multi-dimensional challenge.

NVLink helps by matching computing power with communication speed. GPUs can share data as fast as they process it. This harmony is key for large models.

This improvement is easy to see in distributed training. Synchronization happens faster, so you can run more training cycles in the same amount of time.
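One rough way to reason about this balance is to treat each training step as compute time plus whatever communication time is not hidden behind compute. The sketch below uses that simplified model; the `overlap` parameter and the timings are hypothetical, and real frameworks overlap communication in more complex ways.

```python
def step_seconds(compute_s: float, comm_s: float, overlap: float = 0.0) -> float:
    """Step time when a fraction `overlap` of communication hides behind compute."""
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0 and 1")
    return compute_s + (1.0 - overlap) * comm_s


def steps_per_hour(compute_s: float, comm_s: float, overlap: float = 0.0) -> float:
    """Number of training steps that fit in one hour under the model above."""
    return 3600.0 / step_seconds(compute_s, comm_s, overlap)


if __name__ == "__main__":
    compute = 0.30  # hypothetical seconds of compute per step
    for comm in (0.20, 0.02):  # hypothetical slower vs faster synchronization
        rate = steps_per_hour(compute, comm)
        print(f"comm {comm:.2f} s: {rate:.0f} steps per hour")
```

Once communication time is small relative to compute, further bandwidth gains matter less, which is the sense in which compute and communication need to be matched.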

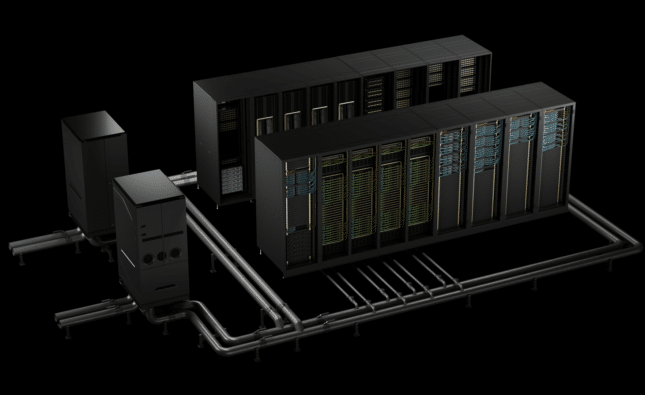

Scaling Without Losing Efficiency

The Promise of NVLink Benchmark and NVIDIA AI Scaling Results

A major challenge in AI infrastructure is maintaining efficiency as you scale up. Many systems start strong but slow down as more nodes are added. The latest NVLink benchmark and NVIDIA AI scaling results show a better path.

NVLink keeps bandwidth steady even as clusters grow. This lets organizations scale up without losing efficiency. It also makes it easier to plan for future growth.

Such consistency is essential for GPU scaling in data centers. Predictable performance enables better resource planning and long-term strategic development.

Architecture Choices That Shape Outcomes

Why Interconnect Design Matters More Than Ever

The type of interconnect you choose affects every part of AI deployment. It matters for both training speed and cost efficiency. NVIDIA’s NVLink architecture stands out in high-performance settings.

Other interconnects might work for smaller jobs, but large-scale AI needs more bandwidth and less delay. NVLink handles these needs well.

This advantage is even more important as workloads get more complex. Multi-model pipelines and real-time computation need smooth data flow between GPUs.

Cost, Scale, and the New Competitive Edge

Where AI Compute Cost Reduction Aligns With Performance

Organizations are under pressure to deliver results while controlling costs. Efficient scaling provides a path to achieve both. NVLink enables faster training with fewer resources, directly supporting AI compute cost reduction.

This gives companies a competitive edge. They can test ideas faster and deploy models more efficiently. Being able to scale without high costs sets them apart.

The benefits aren’t just for big companies. Smaller teams also gain from needing less infrastructure and getting more uniform performance.

Final Perspective: Scaling Intelligence, Not Just Models

The latest NVLink upgrade signals a major shift in AI strategy. Now, success depends on how well systems scale, not just on the power of each part. Interconnect technology is now a key factor for both performance and cost.

Organizations should think about long-term scalability when choosing infrastructure. NVLink shows that smart design can lead to real improvements. As AI workloads grow, efficient scaling will shape the next stage of progress.

Source: Technical Blog