Washington, DC: One disputed inventor’s name can halt a federal contract worth hundreds of millions of dollars. This risk is now central to how companies assess artificial intelligence vendors as guidance on AI inventorship becomes clearer, especially in its use of the Pannu factors for joint inventorship. Government procurement teams are changing the rules that decide who wins, who qualifies, and who is left out.

The Legal Fault Line Behind AI Inventorship

Federal agencies have always relied on clear ownership structures when awarding contracts, but that clarity breaks down when AI systems help create new inventions. This is no longer just a theoretical issue. If an algorithm creates a new solution used in defense software, who counts as the inventor under US law?

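The answer now frequently depends on the Pannu factors, the test for joint inventorship drawn from the Federal Circuit’s decision in Pannu v. Iolab Corp. The test asks whether each person contributed significantly to the conception or reduction to practice of the invention, made a contribution that is not insignificant in quality when measured against the full invention, and did more than explain well-known concepts or the current state of the art. These rules, once applied only to human inventors working together, are now being tested with AI-assisted development. A contractor using advanced systems, such as those found in current-tier AI environments or on AWS GovCloud, must demonstrate that human contributors meet the inventorship requirements.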

If they cannot do this, problems arise quickly in federal procurement. Agencies are unwilling to award contracts when intellectual property rights are in doubt. This creates a new compliance burden that goes well beyond traditional patent law.

Procurement Meets Patent Doctrine

The overlap between AI, inventorship, and federal procurement is causing a major change. Contracting officers now look beyond technical skills: they also consider where the innovation originated. This means reviewing how the code was created, who led the process, and whether human contributors meet the Pannu factors.

Take the example of a defense contractor developing an AI-powered threat detection platform. If parts of the system were produced by generative models, auditors will want to know whether the human contributions to those outputs meet joint inventorship standards. If this is unclear, the contract could be delayed or even rejected.

This careful review also applies to platforms running in secure environments like AWS GovCloud, where compliance rules are already strict. Vendors now need to use legal tech tools that can track authorship in detail. Without these tools, they cannot provide the needed documentation to meet procurement guidelines.

The Rising Stakes Of AI-Assisted Development

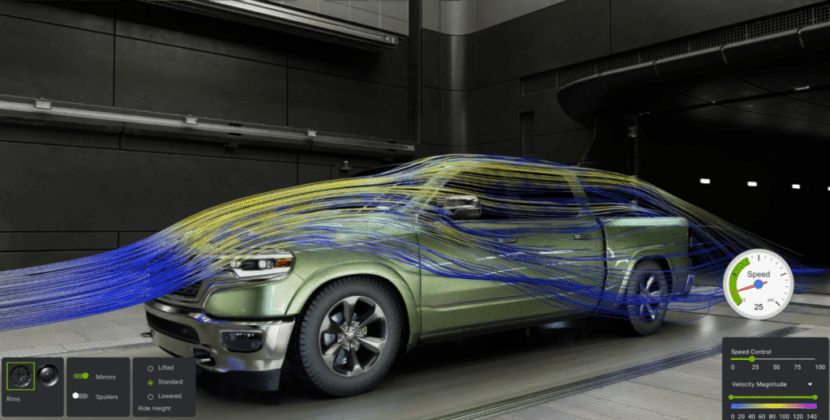

The risks become clearer when you consider the regulatory risks of AI-generated code in US defense contracts. AI systems can generate useful and even complex code with little human help. While this productivity adds value, it also brings uncertainty.

If a system creates a key algorithm for a missile defense application and no human can claim inventorship under the Pannu factors, the intellectual property might not be protected. For government agencies, this is an unacceptable risk. For contractors, it could threaten revenue from long-term contracts.

Companies using generative AI tools need to set up clear administrative frameworks. These frameworks should document how human engineers guide AI outputs and show that their contributions meet joint inventorship standards. Without these controls, even the best technical solutions might not pass procurement review.

Legal Tech As A Compliance Backbone

Industry has responded quickly. Companies are investing in legal tech systems that document the development process for AI-assisted inventions. These platforms record who initiated each prompt, how outputs changed, and where humans influenced the final results.
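To make the idea concrete, here is a minimal sketch of what such a record might look like. It is an illustration only, not a description of any vendor's product: the `ProvenanceEvent` and `ProvenanceLog` names, the event kinds, and the hash-chaining approach are all assumptions made for this example. A log like this would support an inventorship analysis by showing who did what and when; it would not settle the legal question on its own.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class ProvenanceEvent:
    """One step in an AI-assisted development session (hypothetical schema)."""
    actor: str    # assumed: an engineer ID or a model name
    kind: str     # assumed labels: "prompt", "model_output", "human_edit"
    summary: str  # short description of what was done
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class ProvenanceLog:
    """Append-only log; each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash, event_dict):
        payload = json.dumps(event_dict, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        event_dict = asdict(event)
        digest = self._digest(prev_hash, event_dict)
        self.entries.append(
            {"event": event_dict, "prev_hash": prev_hash, "hash": digest}
        )
        return digest

    def verify(self):
        """Return True if no stored entry was altered or reordered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            expected = self._digest(prev_hash, entry["event"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True

    def human_events(self):
        """Events initiated by people: the raw material for an inventorship review."""
        return [
            e["event"] for e in self.entries
            if e["event"]["kind"] in ("prompt", "human_edit")
        ]


log = ProvenanceLog()
log.append(ProvenanceEvent("engineer-17", "prompt", "Asked for anomaly scoring routine"))
log.append(ProvenanceEvent("model-a", "model_output", "Returned draft scoring function"))
log.append(ProvenanceEvent("engineer-17", "human_edit", "Replaced threshold logic"))
print(log.verify(), len(log.human_events()))
```

Chaining each entry to the one before it means a later change to any stored event causes `verify()` to fail, which is the property an auditor would care about when relying on the record.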

This detailed documentation serves two main purposes. It supports patent filings by aligning with AI inventorship standards, and it strengthens procurement bids by demonstrating compliance with federal procurement rules. In this way, legal tech connects innovation with eligibility.

Infrastructure providers now play a bigger role. Services such as AWS GovCloud are no longer just secure hosting environments; they also store comprehensive logs of development. Contractors must make sure that every stage of AI-assisted work in these environments can pass review under the Pannu factors.

Competitive Pressure and Market Realignment

Stricter AI inventorship guidance is changing the competitive landscape in the defense technology sector. Companies that can clearly show compliance gain an immediate edge. Those that cannot may face delays, higher costs, or outright exclusion.

This is happening right now. A contractor using advanced analytics with systems like Palantir AI might perform better technically, but without a clear showing that its human contributors meet joint inventorship standards, that advantage might not translate into winning contracts.

At the same time, procurement teams are getting more advanced. They now use organized evaluation methods that combine patent law with acquisition rules. The Pannu factors affect not only legal decisions, but also a company’s position in federal procurement.

A New Baseline for Government Contracts

Moving to stricter AI inventorship standards does not slow down innovation; it makes it more disciplined. Companies need to match their technical processes with legal requirements from the start. This coordination involves legal and compliance teams, often with help from advanced legal tech tools.

The stakes will keep rising as agencies depend more on AI-powered systems. The regulatory risks of AI-generated code in US defense contracts will remain significant, shaping contract structures and award decisions. Companies that plan for these risks and build compliance into their development will move faster and compete better.

This creates a procurement environment where clear authorship is just as important as technical skill. The Pannu factors set the rules, but results depend on how well companies follow them. Those who make AI inventorship a strategic priority rather than a mere legal detail will lead the next phase of government technology.

Source: USPTO News and Updates