Redmond, Wash.: A Fortune 500 financial services company recently discovered that an automated workflow had been collecting sensitive client data for weeks because its permissions were too broad. The incident illustrates the serious security risks that autonomous AI agents can pose in enterprise Microsoft 365 environments. As these systems become part of daily work, IT teams must be able to prove that their digital workers follow strict rules, and the third-party audit has become the main way to test this. Organizations therefore need to balance their early adoption of AI tools against the requirements of Agent 365 | AI identity protocols.

When executives approve new automation, they often treat it as just another software upgrade rather than a major change in how work gets done. That mistake can leave gaps in oversight. The Agent 365 | AI identity framework helps close them by issuing unique, verifiable credentials to non-human entities. Without such credentials, IT teams cannot easily trace a problem to its source or block unauthorized access to legacy data.

The Identity Crisis Within Modern Infrastructure

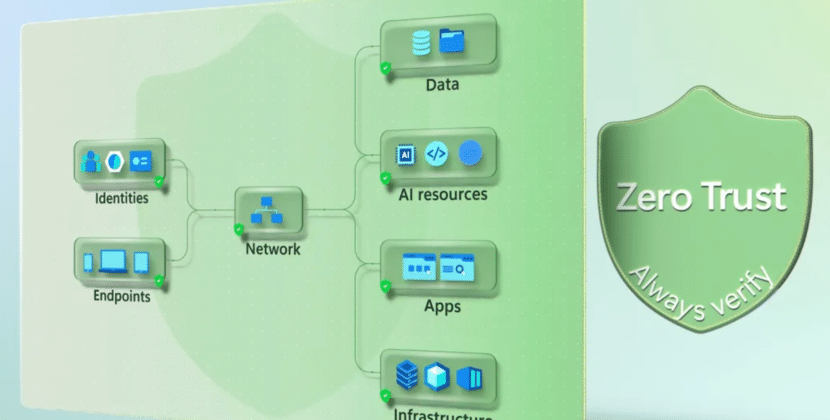

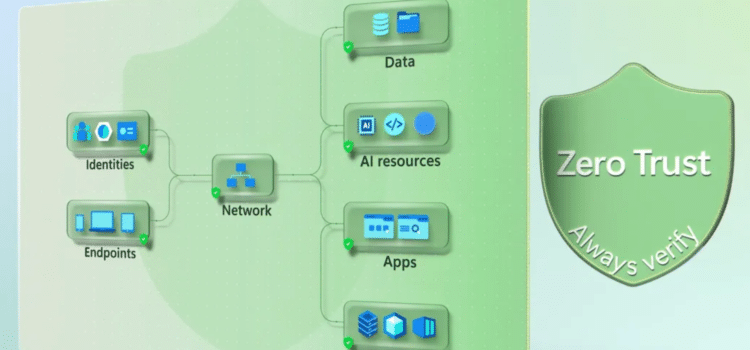

Moving to agentic computing changes how we think about network security. In the past, security focused on protecting people; now it must cover machines as well. If organizations do not manage the identities of automated systems as carefully as they manage employee identities, agents can accumulate access that no one tracks or reviews. Microsoft's security model requires every interaction to be authenticated, but many companies do not apply this rule to non-human workflows.

Administrators should use Entra ID to establish a clear access structure for these automated entities. By treating agents as first-class identities rather than add-ons, companies can apply conditional access policies that adapt in real time. For example, if an agent tries to reach a resource it has not accessed before, the policy should require an additional verification step. This helps contain the damage if an agent is compromised or behaves unexpectedly.
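To make that step-up idea concrete, here is a minimal Python sketch of the decision logic. It is a toy model, not Entra ID's API: the `AgentIdentity` class, the `evaluate_access` and `record_verified_access` functions, and the scope strings are all hypothetical. It assumes an agent is denied anything outside its granted scopes and must pass an extra check the first time it reaches a new resource.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_REVERIFICATION = "require_reverification"
    DENY = "deny"


@dataclass
class AgentIdentity:
    # Hypothetical stand-in for an agent's identity record; not an Entra ID object.
    agent_id: str
    granted_scopes: set[str]
    known_resources: set[str] = field(default_factory=set)


def evaluate_access(agent: AgentIdentity, resource: str, scope: str) -> Decision:
    """Toy conditional-access rule: deny anything outside the granted scopes,
    and require a fresh check the first time the agent reaches a resource."""
    if scope not in agent.granted_scopes:
        return Decision.DENY
    if resource not in agent.known_resources:
        return Decision.REQUIRE_REVERIFICATION
    return Decision.ALLOW


def record_verified_access(agent: AgentIdentity, resource: str) -> None:
    # Call only after the extra check succeeds; the resource is then "known".
    agent.known_resources.add(resource)


if __name__ == "__main__":
    bot = AgentIdentity("invoice-bot", {"files.read"})
    print(evaluate_access(bot, "finance/q3.xlsx", "files.read").value)  # require_reverification
    record_verified_access(bot, "finance/q3.xlsx")
    print(evaluate_access(bot, "finance/q3.xlsx", "files.read").value)  # allow
    print(evaluate_access(bot, "hr/salaries.xlsx", "files.write").value)  # deny
```

A production policy would weigh many more signals, such as sign-in risk or location; the "first visit to a resource" trigger shown here is only one possible rule.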

The Governance Gap

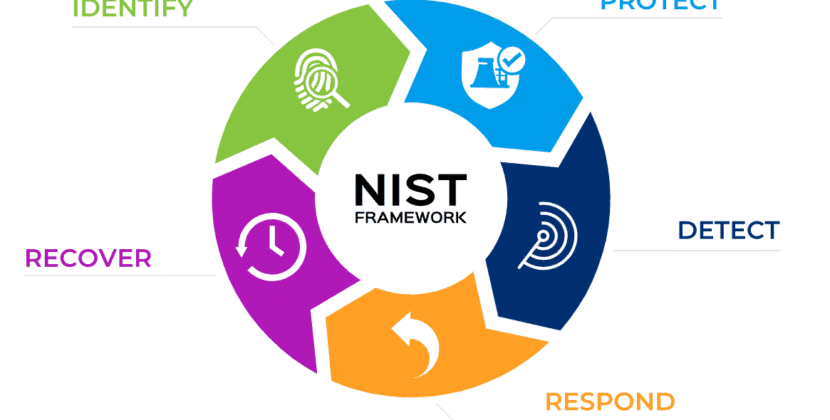

Governance remains the biggest hurdle for companies moving AI agents from pilot to production. Many teams deploy software without a clear understanding of the data pipelines it touches. When autonomous agents make decisions, they leave a trail of intent that requires constant monitoring. If the audit records are incomplete, compliance officers have no way to verify the rationale behind a major transaction.
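One way to picture "incomplete" is a record that omits the reason for an action. The Python sketch below assumes a hypothetical audit schema in which the agent's rationale is a required field; the `AuditRecord` class and its field names are illustrative only and do not reflect any Microsoft 365 log format.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AuditRecord:
    # Hypothetical audit-record schema; field names are illustrative,
    # not taken from any Microsoft 365 log format.
    agent_id: str
    action: str
    resource: str
    timestamp: str
    rationale: Optional[str] = None  # why the agent took the action


def missing_fields(record: AuditRecord) -> list[str]:
    """Return the names of fields that are None or empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def is_reviewable(record: AuditRecord) -> bool:
    # A reviewer can only check the reasoning behind an action
    # if every field, including the rationale, was captured.
    return not missing_fields(record)


if __name__ == "__main__":
    rec = AuditRecord("payments-agent", "transfer", "account/123", "2025-01-15T10:00:00Z")
    print(missing_fields(rec))  # ['rationale']
    print(is_reviewable(rec))   # False
```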

Real governance is more than keeping logs. It means oversight at every stage of an agent's lifecycle. Each automated entity needs a human sponsor who is accountable for what it does and what it can access. Without that accountability, no one clearly owns the fix when problems occur.
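The sponsor requirement can be sketched as a registry that refuses to create an agent without a named human owner and removes the agent when it is retired. This is a hypothetical Python model under that assumption; `AgentRegistry`, `AgentRegistration` and their methods are invented for illustration and are not an Entra ID or Agent 365 schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRegistration:
    # Hypothetical registry entry; not an Entra ID or Agent 365 schema.
    agent_id: str
    sponsor: str                   # human accountable for the agent
    allowed_scopes: frozenset[str]


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, AgentRegistration] = {}

    def register(self, agent_id: str, sponsor: str, scopes: set[str]) -> AgentRegistration:
        # Refuse to create an agent that no human is accountable for.
        if not sponsor.strip():
            raise ValueError(f"agent {agent_id!r} must have a human sponsor")
        entry = AgentRegistration(agent_id, sponsor, frozenset(scopes))
        self._agents[agent_id] = entry
        return entry

    def sponsor_of(self, agent_id: str) -> str:
        # The person to contact when this agent misbehaves.
        return self._agents[agent_id].sponsor

    def retire(self, agent_id: str) -> None:
        # End of lifecycle: drop the entry so the agent no longer counts as active.
        self._agents.pop(agent_id, None)

    def is_active(self, agent_id: str) -> bool:
        return agent_id in self._agents


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register("invoice-bot", "j.doe@example.com", {"files.read"})
    print(registry.sponsor_of("invoice-bot"))  # j.doe@example.com
    registry.retire("invoice-bot")
    print(registry.is_active("invoice-bot"))   # False
```

Rejecting sponsorless agents at creation time, rather than auditing for them later, means an orphaned agent never exists in the first place.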

Securing Compliance Under Pressure

The 30-day audit period forces IT teams to confront tough questions about their configurations. Are autonomous agents accessing files they should not? Does the Entra ID configuration follow the principle of least privilege? These questions are hard to answer because developers can add new features so quickly.

Microsoft Security provides the tools needed to see these connections clearly. By using built-in features to map the dependencies between agents and data, teams can spot over-privileged entities before they cause problems. This is not only about meeting compliance requirements; it is also an important step toward a more resilient system that supports safer, faster innovation.
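At its simplest, spotting an over-privileged agent means comparing what it has been granted with what it actually uses. The Python sketch below does that set difference. It is not the Microsoft Security feature: it assumes you already have a hypothetical inventory of granted permissions and of permissions observed in activity logs, and the strings in the example merely mirror Microsoft Graph permission names.

```python
def find_over_privileged(
    granted: dict[str, set[str]],
    used: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Map each agent to the permissions it holds but has not exercised."""
    report: dict[str, set[str]] = {}
    for agent, perms in granted.items():
        # Anything granted but never seen in use is a least-privilege candidate.
        unused = perms - used.get(agent, set())
        if unused:
            report[agent] = unused
    return report


if __name__ == "__main__":
    # Hypothetical inventory; the strings mirror Microsoft Graph permission names.
    granted = {
        "invoice-bot": {"Files.Read.All", "Mail.Send", "Sites.FullControl.All"},
        "hr-bot": {"Files.Read.All"},
    }
    used = {"invoice-bot": {"Files.Read.All"}, "hr-bot": {"Files.Read.All"}}
    for agent, unused in find_over_privileged(granted, used).items():
        print(agent, sorted(unused))
    # invoice-bot ['Mail.Send', 'Sites.FullControl.All']
```

An unused permission is a signal to review, not proof of misuse; an agent may need a rarely exercised right, which is why a human sponsor should make the final call.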

The Future of Trusted Automation

As agentic computing matures, trust will shape how people and machines work together. The most successful organizations will treat identity as the core of their operations, not as an afterthought. The Agent 365 | AI identity model gives companies the structure they need to grow safely.

These organizations will apply the same scrutiny to human and machine access when assessing security risk, treating every request as untrusted until it is verified. Leaders who embrace this approach will find that strong security builds trust into their systems and creates an environment where innovation and protection go hand in hand.

Source: Microsoft Source