Santa Clara, Calif.: Quantum processors typically make an error about once every thousand operations. Compensating for this unreliability requires significant resources, and IT teams often spend days manually tuning systems just to run basic experiments. The NVIDIA Ising | Quantum AI framework changes this by automating calibration and error correction. As a result, chief financial officers reviewing data center costs see calibration times drop from days to hours.
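To put the one-in-a-thousand figure in perspective, the short Python sketch below estimates how likely a run is to finish without any error. It assumes every operation fails independently with the same probability, which is a simplification made for illustration and not a claim from the announcement; the function name is hypothetical.

```python
# Illustrative arithmetic only: assumes independent, identical error rates.

def success_probability(error_rate: float, operations: int) -> float:
    """Chance that `operations` independent operations all succeed."""
    return (1.0 - error_rate) ** operations


ERROR_RATE = 1e-3  # "about once every thousand operations", per the article

for ops in (100, 1_000, 10_000):
    chance = success_probability(ERROR_RATE, ops)
    print(f"{ops:>6} operations: {chance:.3%} chance of an error-free run")
```

Under these assumptions, a run of a thousand operations finishes cleanly only about a third of the time, which is why uncorrected hardware struggles with longer computations.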

The Cost of Traditional Calibration

Manual calibration is a major cost for research institutions and tech companies. Technicians must continually measure and adjust physical qubits to preserve coherence. This ongoing work raises expenses and slows down research.

The new software changes the capital allocation strategy for enterprise quantum programs. Instead of dedicating large teams of physicists to manual adjustments, firms can deploy multimodal models to automate them. This change lowers the day-to-day operational burden and shifts the focus toward hybrid computing environments.

Lowering the Financial Burden

Procurement teams now need to consider how the NVIDIA Ising open models affect their hardware budgets. When labs use these tools, less manual work is required, so managers can allocate more resources to high-performance computing clusters. Lower maintenance costs for delicate hardware make research and development more affordable.

The new framework also uses a 3D convolutional neural network to decode errors as they happen. This lets current hardware handle bigger computations with fewer mistakes. Companies no longer need to buy additional processors to compensate for low accuracy.
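The benefit of decoding can be illustrated with a far simpler technique than a neural network. The sketch below is not the framework's decoder: it swaps in a classical majority vote over an odd number of redundant copies, and it assumes each copy flips independently. The function name and the choice of three to seven copies are hypothetical.

```python
from math import comb

# Stand-in technique: majority-vote decoding of a classical repetition code.
# This is NOT the 3D convolutional decoder described in the article.

def logical_error_rate(physical_error_rate: float, copies: int) -> float:
    """Probability that a majority of `copies` flip, so the vote gives the wrong answer."""
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    p = physical_error_rate
    majority = copies // 2 + 1
    return sum(
        comb(copies, k) * p**k * (1 - p) ** (copies - k)
        for k in range(majority, copies + 1)
    )


for n in (1, 3, 5, 7):
    print(f"{n} copies: logical error rate {logical_error_rate(1e-3, n):.2e}")
```

Even this toy scheme shows the principle: redundancy plus a decoder pushes the effective error rate well below the raw hardware rate, so the same processors can run longer computations.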

The Role of Software-Driven Infrastructure

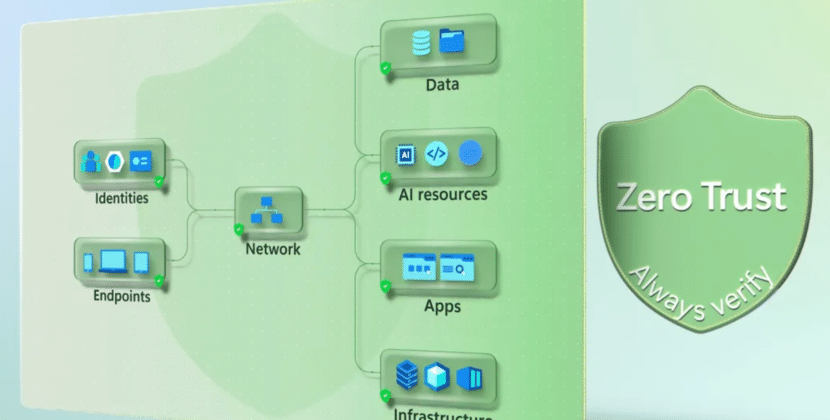

The move to software-driven calibration tools depends on the system’s architecture. The cuQuantum platform and its libraries run on standard GPUs and connect to quantum processors through fast interconnects. This setup lets developers use open-source AI to fix local errors before they become bigger problems.

When companies use this architecture, they add classical GPUs to their current systems. This means some upfront spending on computing resources, but it saves money over time by extending processor lifespans. Using open-source AI also means researchers avoid paying for expensive software licenses, helping control costs.

Integrating the Control Plane

Modern machines need close coordination between different computing resources. Developers use CUDA-Q to write hybrid algorithms that run on CPUs, GPUs, and quantum processing units (QPUs) together. This flexibility allows research teams to adapt to different types of qubits without having to buy new controllers.

For example, a national lab running large calculations on trapped-ion hardware needs its control loop to keep up with the processor’s speed. By using CUDA-Q, the lab doesn’t have to build custom control hardware for every experiment. This standardized approach lowers costs and enables faster evaluation of new designs.

Managing the Shift in Infrastructure Budgets

Switching to software-assisted control changes how labs are built. In the past, organizations bought more physical qubits to boost computing power. Now they focus on balancing investments between classical GPUs and quantum processing units.

Successful QPU integration requires laboratories to upgrade their interconnects to enable rapid communication between the GPU and the quantum chip. The NVIDIA Ising | Quantum AI framework needs these high-bandwidth connections to process error syndromes quickly.
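A rough sizing exercise shows why the interconnect matters. The sketch below assumes a rotated surface code, where a patch of distance d produces d² − 1 stabilizer measurements per round, one bit per measurement, and no protocol overhead. The code family, the one-microsecond round time, and the patch count are illustrative assumptions, not figures from the announcement, and the function names are hypothetical.

```python
# Back-of-the-envelope estimate of the raw syndrome data rate a decoder must ingest.
# All parameters below are assumptions chosen for illustration.

def syndrome_bits_per_round(distance: int) -> int:
    """Stabilizer measurements per round for one rotated surface-code patch."""
    return distance * distance - 1


def required_bandwidth_gbps(distance: int, round_time_us: float, logical_qubits: int) -> float:
    """Raw syndrome data rate in gigabits per second, ignoring framing overhead."""
    bits_per_round = syndrome_bits_per_round(distance) * logical_qubits
    bits_per_second = bits_per_round / (round_time_us * 1e-6)
    return bits_per_second / 1e9


# Hypothetical system: 100 logical qubits, distance 11, one round per microsecond.
print(f"{required_bandwidth_gbps(11, 1.0, 100):.1f} Gbit/s of raw syndrome data")
```

Finance teams can rerun the estimate with their own vendor's figures; the point is that the data rate grows with both code distance and qubit count, so interconnect capacity has to be budgeted alongside the GPUs.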

When upgrading, finance teams need to plan for the cost of faster interconnects. The upfront expense is offset by gains in data accuracy. With these tools, research institutions lower their error rates and make their equipment more useful for applied optimization.

Operational Modifications

Enterprise infrastructure managers now face different choices when planning their annual budgets. The shift toward hybrid computing means that purchasing decisions emphasize data bandwidth over raw qubit count. The future of enterprise quantum infrastructure depends on this integration. Organizations that adopt the new AI models reduce their overall equipment spending while increasing the reliability of their systems.

With the NVIDIA Ising | Quantum AI framework, companies can train their models on-site and keep their proprietary data secure. This local training lowers security risks and avoids the high costs of using external cloud services.

Forward-Looking Horizons

AI-driven control systems are changing how labs manage their computing infrastructure. Companies that upgrade without planning for a software-first approach risk losing profit margins. The key measure for large hardware investments is the ability to scale processes while keeping costs steady.

Source: Nvidia Newsroom