Columbus, OH

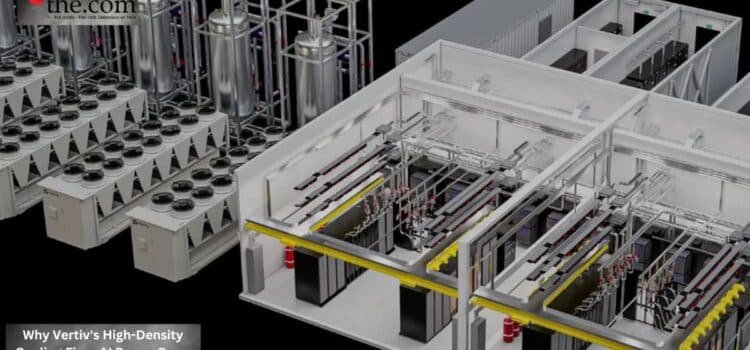

Atomic Answer: Vertiv (VRT) is seeing a massive surge in demand for liquid-to-chip cooling and rear-door heat exchangers as AI rack densities exceed 100 kW. This technical shift is mandatory for enterprises deploying Nvidia Blackwell systems, which cannot be cooled by traditional air conditioning methods.

Today, a single AI training rack can use more electricity than a small commercial building. NVIDIA’s newest GPU clusters often need, and often exceed, 100 kilowatts per rack, and some large operators are already planning for 200 kW setups. The main challenge is no longer having enough computing power. It’s mana ging the heat these systems produce. Traditional air 1`cooling can’t remove heat quickly enough without increasing energy waste and construction costs.

This is why Vertiv liquid cooling is now a key topic in AI data center power planning for executives, for co-location providers, and infrastructure architects.

The Physics Problem Behind AI Expansion

AI infrastructure has changed how data centers operate. Ten years ago, most enterprise facilities ran at 5 to 10 kW per rack. Only high-performance computing setups went higher, and those were rare.

AI erased that boundary.

Modern GPU systems pack a lot of processing power into small spaces. Eight GPU servers used for training large language models create constant heat that older cooling systems can’t handle. Trying to cool these setups with standard air conditioning results in higher fan speeds, increased energy use, uneven airflow, and a greater risk of equipment failure.

This is why Vertiv liquid cooling has become an urgent solution.

Liquid cooling moves heat about three thousand times more efficiently than air. This matters because GPU clusters do not produce steady workloads. They spike aggressively during both training and inference cycles, creating volatile GPU power envelopes that stress both cooling and electrical systems.

Traditional air cooling can’t keep up with these rapid changes, but liquid cooling systems can respond much more accurately.

Why Air Cooling Hits Economic Limits

Many companies initially tried small upgrades rather than redesigning their facilities. They added containment systems, extra chillers, or tested higher aisle temperatures.

For moderate AI adoption, these dose measures helped.

But when workloads exceeded 70-100 kW per rack, the economics changed quickly. Operators found that air cooling alone needed more space, bigger equipment, and more energy. The resulting thermal CapEx often exceeded the cost of the compute hardware itself over multi-year deployments.

This creates a serious bottleneck for companies investing heavily in AI infrastructure.

For example, a company installing 500 high-density GPU racks could face delays of 18 to 24 months if its current facilities cannot handle the heat and power needs. While they wait, the requirements and resources sit unused, and competitors get ahead.

Now, the market sees cooling design as a key business factor, not just a facility issue.

How Rear Door Cooling Changes The Equation

Rear door heat exchangers have become popular because they make it easier to upgrade existing facilities. Instead of rebuilding the whole data hall, operators can add liquid cooling directly to high-density racks.

That approach matters for organizations pursuing a datacenter infrastructure retrofit rather than a greenfield of construction.

A rear-door heat exchanger collects heat from servers before it enters the room. It uses chilled liquid to absorb and remove heat directly at the rack, taking pressure off the main air systems. This lets facilities handle denser AI setups without having to replace all their mechanical equipment right away.

For companies with older infrastructure, this offers a gradual transition.

Instead of halting operations for years to rebuild, companies can upgrade incrementally while growing their AI capacity. This is important financially since downtime and delays often cost more than buying new hardware.

The Real Cost of Supporting 100 kW AI Racks

A less obvious challenge in AI expansion is not just cooling efficiency but also planning uncertainty.

Executives frequently underestimate the infrastructure redesign costs for 100 kW+ per-rack AI clusters because they focus narrowly on GPU acquisition budgets, the supporting ecosystem, power delivery, heat rejection, floor loading, and piping systems. These costs often multiply project costs far beyond initial projections, and backup redundancy further increases them.

A facility built for 10 kW workloads can’t just scale up with software tweaks.

It needs real structural changes.

That includes upgraded busways, liquid distribution systems, advanced monitoring platforms, and integrated thermal controls capable of handling volatile AI data center power demand patterns. Without those investments, even state-of-the-art GPUs risk throttling performance to avoid thermal overload.

That’s why Vertiv’s liquid-cooling solutions are increasingly featured in large-scale expansion plans and enterprise AI upgrades.

The goal isn’t just to lower temperatures; it’s to ensure computing resources are used predictably.

Why Cooling Has Become an Executive-Level Decision

In the past, infrastructure leaders talked about cooling in terms of efficiency and facilities management. AI has changed that. Now, boards assess whether infrastructure constraints could slow product development, delay training, or hurt competitiveness.

This shift brings cooling vendors into important discussions about revenue growth and business resilience.

Companies rolling out AI at scale need infrastructure that can handle unpredictable compute demands without incurring high operating costs. Solutions such as rear-door heat exchangers, liquid cooling, and smart power management help maintain steady performance and energy use, even in dense environments.

The next stage of AI growth won’t just be about who has the fastest chips. It will favor those who can run dense computing environments efficiently, reliably, and at scale. In this setting, votive liquid cooling is becoming essential infrastructure for the future of AI data centers.

Enterprise Procurement Checklist

- Procurement Effect: Vertiv (VRT) infrastructure now a “pre-requisite” buy for Blackwell GPU clusters.

- Infrastructure Risk: Facility floor weight limits may be exceeded by heavy liquid-cooling equipment.

- Deployment Impact: 6-12 month lead times for high-density cooling retrofits.

- ROI Implications: Increased upfront CapEx offset by 30% lower cooling-related energy bills.

- Operational Action: Perform a thermal audit of existing data centers before ordering H100/B200 upgrades.

Source: NVIDIA Launches Cosmos World Foundation Model Platform to Accelerate Physical AI Development