A delay of just 200 milliseconds can cost a retail platform millions during busy periods. Even small latency spikes can spread through distributed systems. AI-driven latency prediction and predictive scaling on AWS are starting to change how infrastructure handles these challenges. Rather than waiting for slowdowns, AWS is testing systems that can predict and prevent them.

Why Latency Prediction Has Become a Strategic Priority

Latency is no longer just a technical issue. It has a direct impact on revenue, customer loyalty, and system reliability. Companies operating across different regions often face unexpected delays due to traffic spikes, network congestion, and sudden shifts in workload.

Traditional scaling models respond only after certain limits are reached: CPU usage goes up, alarms go off, and more resources are added. This approach often falls behind actual demand.
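
To make the lag concrete, here is a minimal Python sketch of a threshold rule. It is an illustration only: the function name `reactive_scale`, the 70 percent CPU threshold, and the sample values are assumptions, not AWS defaults or an AWS API.

```python
def reactive_scale(cpu_samples, instances=2, threshold=70.0):
    """Return the instance count after each CPU sample under a threshold rule.

    Hypothetical rule: add one instance whenever observed CPU utilization
    (in percent) exceeds the threshold. Nothing happens before that point.
    """
    history = []
    for cpu in cpu_samples:
        if cpu > threshold:
            instances += 1  # capacity is added only after the limit is breached
        history.append(instances)
    return history


# CPU climbs steadily, but the rule does nothing until the fourth sample.
print(reactive_scale([40, 55, 68, 82, 90]))
```

The first three samples already show a clear upward trend, yet the instance count stays flat until utilization has passed the limit.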

AWS compute optimization changes this process. Now, systems analyze patterns over time to spot signs that often precede latency spikes. This helps infrastructure get ready before performance drops.

Inside the Predictive Latency Engine

Real-Time Modeling for AI-Driven Predictive Scaling on AWS

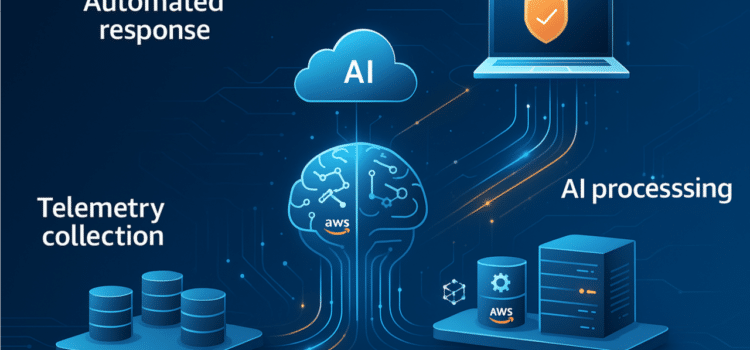

The AWS Latency Engine uses continuous telemetry to gather data from compute nodes, storage, and network paths. This information feeds machine learning models trained to spot early signs of congestion.

Rather than waiting for clear signs of failure, the system watches for small changes. Even a slight rise in packet retransmissions or queue depth can prompt it to act early.
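
One simple way to watch for small changes like these is to compare each new reading against a moving baseline. The sketch below uses an exponentially weighted moving average as a stand-in for the trained models the article describes. The function name `early_warning`, the smoothing factor, and the 25 percent tolerance are assumptions chosen for illustration.

```python
def early_warning(samples, alpha=0.3, tolerance=0.25):
    """Flag readings that rise well above a moving baseline.

    samples:   metric readings over time, e.g. queue depth or retransmissions
    alpha:     smoothing factor for the exponentially weighted moving average
    tolerance: fractional rise above the baseline that counts as a warning
    Returns one boolean per reading after the first.
    """
    baseline = samples[0]
    flags = []
    for value in samples[1:]:
        # Compare against the baseline built from earlier readings only.
        flags.append(value > baseline * (1 + tolerance))
        # Fold the new reading into the baseline afterwards.
        baseline = alpha * value + (1 - alpha) * baseline
    return flags


queue_depth = [10, 10, 11, 10, 14, 18]
print(early_warning(queue_depth))
```

A reading of 14 is far from an outage, but it is flagged because it sits well above the recent baseline of roughly 10.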

At this point, multi-region routing AI becomes crucial. The system not only adds resources but also moves workloads to different locations to maintain steady performance.

How Predictive Load Shifting Works

The engine evaluates multiple variables simultaneously, including current workload distribution, historical traffic patterns, regional latency benchmarks, and network health indicators.

When the model expects a latency spike, it begins shifting the load. Traffic is routed to regions with greater capacity and lower risk of delays.

For example, if an e-commerce platform sees demand growing in Asia, some traffic might be sent to nearby regions before servers get overloaded. This helps avoid bottlenecks instead of just responding to them.
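
The idea can be sketched in a few lines of Python. This is a toy model under stated assumptions: the region labels, request counts, capacities, and the 20 percent shift are invented for illustration, and `shift_load` is a hypothetical helper, not an AWS function.

```python
def shift_load(traffic, capacity, source, percent=20):
    """Move a share of one region's traffic to the region with the most headroom.

    traffic:  requests per second currently served by each region
    capacity: requests per second each region can serve
    source:   region the model expects to become congested
    percent:  share of the source region's traffic to move
    Returns the chosen target region and the updated traffic map.
    """
    headroom = {r: capacity[r] - traffic[r] for r in traffic if r != source}
    target = max(headroom, key=headroom.get)
    # Never move more than the target region can absorb.
    moved = min(traffic[source] * percent // 100, max(headroom[target], 0))
    updated = dict(traffic)
    updated[source] -= moved
    updated[target] += moved
    return target, updated


traffic = {"asia-a": 900, "asia-b": 400, "asia-c": 600}
capacity = {"asia-a": 1000, "asia-b": 1000, "asia-c": 1000}
print(shift_load(traffic, capacity, source="asia-a"))
```

Here `asia-a` is close to its limit, so part of its traffic goes to `asia-b`, the neighbor with the most spare capacity, before any server is overloaded.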

Moving Beyond Reactive Scaling

Limitations of Traditional Autoscaling

Reactive scaling uses set rules. These rules work well when demand is predictable, but they struggle to handle sudden spikes.

Take a streaming platform during a big live event. Traffic can jump in just seconds. By the time scaling rules kick in, users might already notice buffering.

With AI-driven latency prediction and predictive scaling on AWS, the system anticipates these surges. It allocates resources and adjusts routing before thresholds are crossed.
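
As a rough illustration of acting before a threshold is crossed, the sketch below extrapolates the recent request rate and sizes capacity for the predicted value rather than the current one. Linear extrapolation is a deliberately simple stand-in for a real forecasting model, and the names `forecast_next` and `instances_needed`, along with the figure of 100 requests per second per instance, are assumptions.

```python
import math


def forecast_next(rates, window=3):
    """Extrapolate the next request rate from the average step over recent samples.

    Requires at least two samples and a window of at least 2.
    """
    recent = rates[-window:]
    step = (recent[-1] - recent[0]) / (len(recent) - 1)
    return recent[-1] + step


def instances_needed(rate, per_instance=100):
    """Number of instances required to serve `rate` requests per second."""
    return math.ceil(rate / per_instance)


rates = [200, 260, 340, 440]          # requests per second, most recent last
predicted = forecast_next(rates)

print("capacity for current load:  ", instances_needed(rates[-1]))
print("capacity for predicted load:", instances_needed(predicted))
```

Sizing for the predicted rate adds an instance one interval earlier than a rule that looks only at the current load.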

Integrating Compute and Network Intelligence

Predictive scaling involves more than just adding servers. It means coordination between the compute and network layers.

Here, AWS compute optimization is key. The system decides which instances to scale, where to put them, and how to balance workloads effectively.

Meanwhile, multi-region routing AI makes sure traffic takes the best possible paths. It looks at latency, cost, and regional availability as things happen.
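
A routing decision that weighs latency, cost, and availability can be sketched as a scoring function. Everything in this example is hypothetical: the `Region` fields, the weights, and the sample figures are illustrative assumptions, not values or interfaces published by AWS.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    latency_ms: float    # current measured latency to the user
    cost_per_gb: float   # data transfer cost in dollars
    available: bool      # whether the region can take traffic right now


def pick_region(regions, latency_weight=1.0, cost_weight=100.0):
    """Return the available region with the lowest weighted score (lower is better)."""
    candidates = [r for r in regions if r.available]
    if not candidates:
        raise ValueError("no available region")
    return min(
        candidates,
        key=lambda r: latency_weight * r.latency_ms + cost_weight * r.cost_per_gb,
    )


regions = [
    Region("region-a", latency_ms=40, cost_per_gb=0.09, available=True),
    Region("region-b", latency_ms=25, cost_per_gb=0.12, available=False),
    Region("region-c", latency_ms=45, cost_per_gb=0.02, available=True),
]
print(pick_region(regions).name)
```

The fastest region is skipped because it is unavailable, and the cheaper of the remaining two wins despite slightly higher latency. Changing the weights shifts that balance.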

Operational Impact on Enterprise Workloads

Organizations that use predictive latency systems can see real improvements. There is less downtime, and performance stays more consistent.

In financial services, where every millisecond counts, predictive routing can cut down transactional delays. In gaming, it helps keep real-time play stable for players around the world.

When AWS compute optimization and smart routing work together, infrastructure becomes stronger. Systems adjust all the time instead of only in set steps.

Risks and Trade-Offs

Reduced Visibility Into Decision-Making

As systems get more automated, they become less transparent. Engineers might not always know why workloads are moved or resources are assigned in certain ways.

The lack of visibility can make troubleshooting harder. If there are performance problems, finding the root cause is more challenging.

Cost is the other trade-off. Predictive scaling can use more resources, because capacity allocated in advance is paid for even if the expected demand never arrives.

Organizations need to weigh performance improvements against the cost. Not every workload needs advanced prediction models.
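
A back-of-the-envelope comparison can help with that decision. The sketch below sets the cost of pre-provisioned capacity against the expected loss from an unhandled spike. All figures and the helper name `prewarm_is_worth_it` are illustrative assumptions. Real numbers depend on the workload.

```python
def prewarm_is_worth_it(extra_instances, hours, hourly_price,
                        spike_probability, loss_if_unprepared):
    """Compare the cost of pre-provisioning with the expected loss from a spike.

    Returns (worth_it, prewarm_cost, expected_loss), all costs in dollars.
    """
    prewarm_cost = extra_instances * hours * hourly_price
    expected_loss = spike_probability * loss_if_unprepared
    return prewarm_cost < expected_loss, prewarm_cost, expected_loss


# Ten extra instances for two hours, against a 10% chance of a costly slowdown.
worth_it, cost, loss = prewarm_is_worth_it(
    extra_instances=10, hours=2, hourly_price=0.5,
    spike_probability=0.1, loss_if_unprepared=500,
)
print(worth_it, cost, loss)
```

When the expected loss is small, as with internal batch jobs, the same arithmetic argues for plain reactive scaling.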

Strategic Implications for Cloud Leaders

For executives, predictive latency systems mark a change in infrastructure strategy. The focus shifts from just planning capacity to using smarter orchestration.

Investments will focus more on systems that blend data analysis with automation. This covers both multi-region routing AI and advanced scaling tools.

Companies that use these features early could get ahead of the competition. Faster response and steady performance can help them stand out in busy markets.

The Future of Predictive Cloud Infrastructure

AWS’s work on latency prediction reflects a broader industry trend. Cloud providers are starting to build systems that act before problems show up.

The integration of AI-driven latency prediction, predictive scaling and routing, and compute intelligence points to a more autonomous infrastructure model, one where decisions happen continuously, not in response to alerts.

As these systems mature, the role of engineers will evolve. They will define policies and constraints while AI handles execution. The result is a cloud environment that anticipates demand, adapts in real time, and reduces the gap between expectation and performance.

Source: AWS News Blog