Companies that control computing capacity today are positioned to lead in AI tomorrow. This trend is becoming clear as NVIDIA Blackwell data centers influence infrastructure choices across the US. With NVIDIA’s recent deployment news, the discussion is shifting from AI models to the need for more computing capacity. The main question now is who can build, expand, and maintain the largest AI systems.

A New Map of Power Is Being Drawn

Where compute ownership defines influence.

Blackwell is more than a hardware update. It signals a broader shift toward competing on infrastructure. Organizations that control computing resources are gaining influence in the AI world.

This shift is accelerating investments in hyperscale AI infrastructure. Large providers are expanding facilities to meet the rising demand for training and inference workloads. The scale of these investments reflects long-term strategic positioning.

At the same time, US AI compute priorities are becoming more visible. National capacity is now a competitive factor, not only a technical one.

The Frontline of Deployment

Why NVIDIA Blackwell data centers are setting the pace.

Organizations that are ready to adopt Blackwell are quickly adding these new systems. Since they already run major operations, they have an advantage. Their speed in deploying new technology is changing the competitive field.

Such momentum is pushing the AI data center race ahead. Companies now compete on both performance and speed of action. Any delay in rolling out new systems can mean missed chances.

To support this growth, US GPU deployment strategies are expanding aggressively. Securing hardware and optimizing its placement have become urgent priorities.

Inside The Cluster: Where Performance Is Won

The Mechanics of Scaling Intelligence.

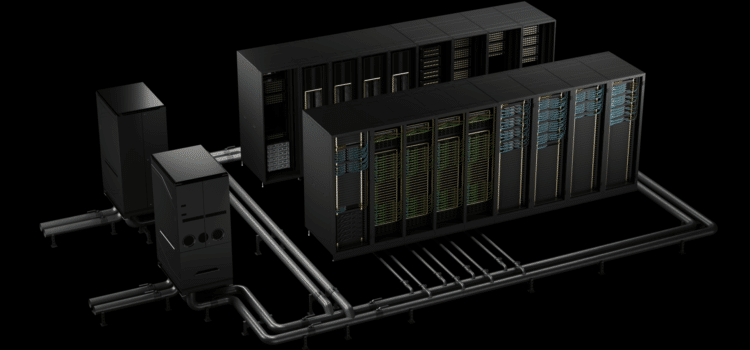

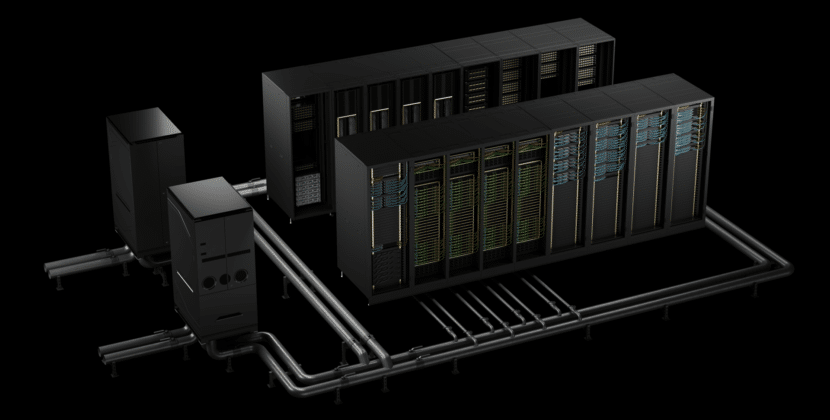

Modern AI systems use tightly connected groups of GPUs. These clusters can process many tasks at once, which was previously difficult. How well they perform depends on both the hardware and how the system is managed.

That is why NVIDIA clusters are so important. They let thousands of GPUs work together as one system. This leads to faster training and more effective task handling.

Supporting this scale requires strong investment in US compute infrastructure. Data centers must adapt to higher power demands and more sophisticated cooling requirements.
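To make the power point concrete, here is a minimal sketch of how facility power grows with cluster size. It assumes the common PUE (power usage effectiveness) convention, where total facility power equals IT power multiplied by PUE. The function name, the `overhead_factor` parameter, and every number in the usage lines are hypothetical illustrations, not figures for Blackwell or any real facility.

```python
def facility_power_mw(gpu_count, watts_per_gpu, overhead_factor=1.0, pue=1.0):
    """Estimate total facility power in megawatts.

    gpu_count       -- number of GPUs in the cluster
    watts_per_gpu   -- assumed power draw per GPU, in watts
    overhead_factor -- multiplier for CPUs, networking, and storage (assumption)
    pue             -- power usage effectiveness; 1.0 means no cooling overhead
    """
    if gpu_count < 0 or watts_per_gpu < 0:
        raise ValueError("gpu_count and watts_per_gpu must be non-negative")
    if overhead_factor < 1.0 or pue < 1.0:
        raise ValueError("overhead_factor and pue must be at least 1.0")

    # IT load: the GPUs plus the supporting hardware around them.
    it_watts = gpu_count * watts_per_gpu * overhead_factor
    # Facility load: IT load scaled by cooling and power-delivery overhead.
    return it_watts * pue / 1_000_000


# Hypothetical comparison: the same cluster with better and worse cooling.
efficient = facility_power_mw(10_000, 1_000, overhead_factor=1.2, pue=1.2)
inefficient = facility_power_mw(10_000, 1_000, overhead_factor=1.2, pue=1.6)
print(f"efficient site: {efficient:.1f} MW, inefficient site: {inefficient:.1f} MW")
```

Under these made-up inputs, the gap between the two results is energy spent on cooling and power delivery rather than computation, which is why cooling design matters more as clusters grow.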

The Cost Equation Behind Scale

Why bigger is not always simpler

Growing AI infrastructure clearly improves performance, but it also brings financial and operational problems. Organizations need to closely evaluate these factors.

The expansion of hyperscale AI infrastructure is driven by long-term performance goals. Larger systems reduce cost per unit of compute over time, yet they require considerable upfront investment and careful planning.
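The trade-off between upfront investment and long-run unit cost can be sketched with simple arithmetic. The model below is an assumption made for illustration: it splits cost into a fixed site cost shared by all GPUs, a per-GPU purchase cost, and a per-GPU hourly operating cost, then divides by the GPU-hours actually used. All names and numbers are hypothetical and do not describe any real deployment.

```python
HOURS_PER_YEAR = 365 * 24


def cost_per_gpu_hour(gpu_count, years, fixed_site_cost, capex_per_gpu,
                      opex_per_gpu_hour, utilization=0.7):
    """Amortized cost of one utilized GPU-hour over the planning horizon."""
    if gpu_count <= 0 or years <= 0:
        raise ValueError("gpu_count and years must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")

    hours = years * HOURS_PER_YEAR
    # Upfront spend: shared site cost plus hardware for every GPU.
    upfront = fixed_site_cost + capex_per_gpu * gpu_count
    # Running spend: assumed to accrue for every hour, busy or idle.
    running = opex_per_gpu_hour * gpu_count * hours
    # Only utilized hours count as delivered compute.
    useful_gpu_hours = gpu_count * hours * utilization
    return (upfront + running) / useful_gpu_hours


# Hypothetical inputs: same site cost, two cluster sizes, four-year horizon.
small = cost_per_gpu_hour(1_000, 4, 50_000_000, 30_000, 0.50)
large = cost_per_gpu_hour(10_000, 4, 50_000_000, 30_000, 0.50)
print(f"small cluster: {small:.2f} per GPU-hour, large cluster: {large:.2f} per GPU-hour")
```

In this sketch the larger cluster comes out cheaper per GPU-hour only because the fixed site cost is spread over more hardware. The total upfront spend is still far higher, and low utilization raises the unit cost again, which is why scaling plans need to be checked against expected demand.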

That is why US AI compute strategies are closely tied to economic considerations. Companies must ensure that their scaling efforts align with expected returns.

Geography as a Competitive Advantage

Where infrastructure meets location strategy

The location of data centers now plays a crucial role. Factors such as energy availability, network connectivity, and local laws influence decisions. These considerations are central to US GPU deployment planning.

Different regions offer distinct advantages. Some have lower operating costs, while others offer better access to talent and infrastructure. Choosing the right location can greatly affect cost and efficiency.

At a wider level, US compute infrastructure development is being shaped by national priorities. Policies and incentives are guiding where investments are made.

Competition Beyond Hardware

The evolving dynamics of the AI data center race

The AI data center race is no longer limited to technology. It involves supply chains, partnerships, and operational capabilities. Companies must manage all these parts effectively to stay competitive.

Having access to hardware is still essential. Even well-prepared companies can face delays when supplies are limited. That’s why planning ahead is so important.

In this setting, NVIDIA clusters give companies a real advantage. They help roll out new systems faster and make scaling up more efficient.

The Reality of Operating at Scale

When growth introduces new challenges

Managing large AI systems is complicated. They need continuous monitoring, regular maintenance, and ongoing improvements. Without these steps, performance can drop quickly.

Organizations need to tackle problems such as high energy use and system reliability. These problems grow as more infrastructure is added. Managing them well sets companies apart.

Despite these hurdles, the benefits of scaling remain significant. Properly managed systems deliver consistent performance improvements.

Strategic Inflection Point

Why NVIDIA Blackwell Data Centers Signal a New Era

The launch of Blackwell marks a major shift in AI infrastructure. The focus is moving from experimentation to large-scale operations. Companies need to adapt fast to stay competitive.

Companies that invest early are setting themselves up for long-term success. They learn more, improve their processes, and become more efficient. Those who wait could struggle to keep up.

This shift highlights why planning ahead matters. The choices companies make about infrastructure today will affect what they can do in the future.

Final Outlook: Compute Power Writes the Future

The arrival of Blackwell systems marks a major change in the AI world. Control over computing resources is now the key to success. Organizations that can scale effectively will lead the next wave of innovation.

But just growing bigger isn’t enough. Companies also need to run their operations efficiently and plan carefully. Success will depend on balancing growth with good management.

As competition heats up, being able to build and manage infrastructure will set leaders apart. The next stage of AI progress will depend on the foundation that companies are putting in place now.

Source: NVIDIA in Brief