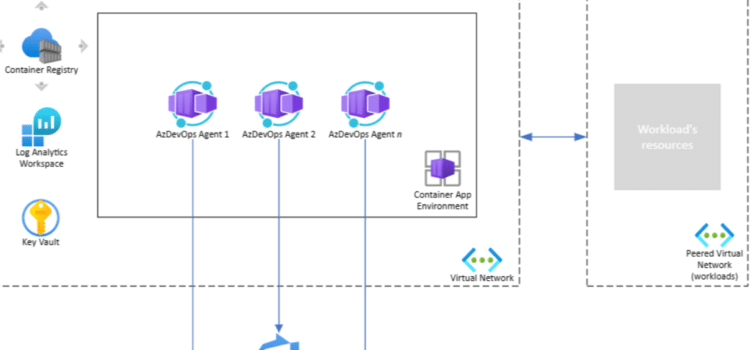

Just one conflicting instruction can disrupt an entire AI workflow. For example, in a test environment, two agents attempted to overwrite the same dataset simultaneously, resulting in a complete rollback. Azure AI agents, agent orchestration, and multi-agent systems are now being built to prevent these kinds of problems. Microsoft’s new supervisor layer brings structured control to areas where autonomous agents once worked with little coordination.

Why Agent Conflicts Are Becoming a Board-Level Concern

Autonomous agents now work together rather than alone. Companies often use dozens, or even hundreds, of agents across finance, operations, and customer service. Without oversight, these agents can end up making decisions that conflict with each other.

This is where Microsoft AI governance moves from theory to practice. Governance is no longer just about policy documents. It is now built into the systems themselves, guiding agents’ behavior in real time.

Take a procurement system as an example: one agent focuses on cutting costs, while another aims to deliver faster. If they do not coordinate, their actions with suppliers can clash. This can lead to inefficiency or even financial risk.

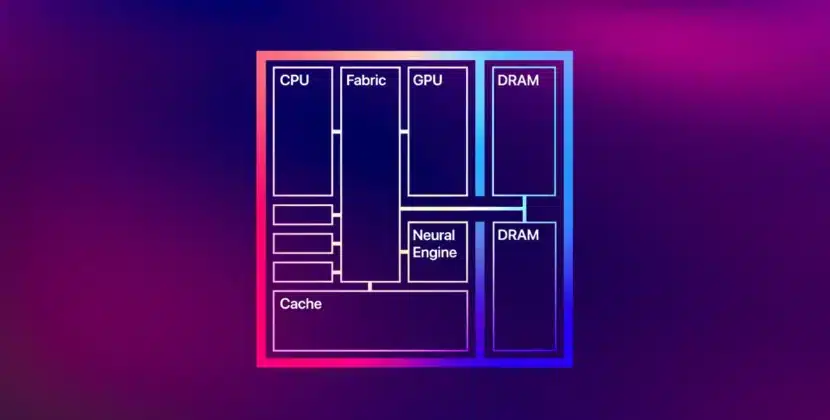

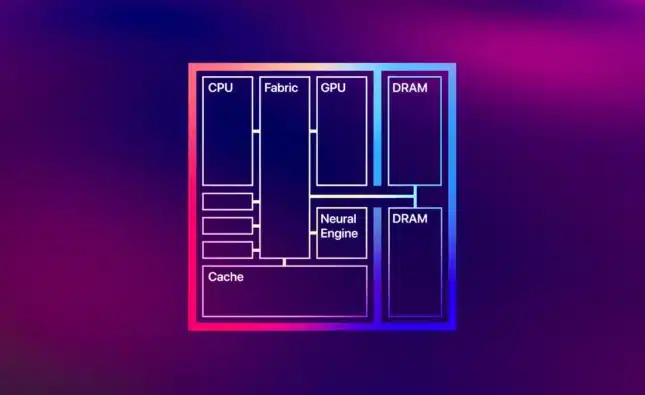

Inside the Supervisor Layer Architecture

Embedding control in Azure AI agents, agent orchestration, and multi-agent systems

Microsoft’s supervisor layer acts as an arbitration engine. It checks agent decisions before they are carried out and compares them to set rules.

Rather than letting agents act on their own, the system adds checkpoints. Every action request is validated against rules, priority levels, and context checks.

This method improves conflict resolution by adding structured steps. It catches conflicts before actions are taken, rather than after something goes wrong.
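The checkpoint idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Microsoft's implementation: the `ActionRequest` and `Supervisor` classes, the agent names, and the single "one writer per resource" rule are all hypothetical and chosen only to show a request being checked against rules, context, and priority before it runs.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ActionRequest:
    agent: str      # hypothetical agent name
    resource: str   # what the agent wants to act on
    operation: str  # "read" or "write" in this sketch
    priority: int   # higher wins when two requests collide


@dataclass
class Supervisor:
    # Approved write requests, keyed by the resource they claim.
    claims: dict = field(default_factory=dict)
    # Operations the assumed rule set permits at all.
    allowed_operations: frozenset = frozenset({"read", "write"})

    def review(self, request: ActionRequest) -> str:
        """Return "approved" or a rejection reason before anything executes."""
        # Rule check: is this kind of operation permitted?
        if request.operation not in self.allowed_operations:
            return "rejected: operation not permitted"
        # Reads never conflict in this sketch.
        if request.operation == "read":
            return "approved"
        # Context check: has another agent already claimed the resource?
        holder = self.claims.get(request.resource)
        if holder is not None and holder.agent != request.agent:
            # Priority check: only a strictly higher priority displaces the holder.
            if request.priority <= holder.priority:
                return f"rejected: {holder.agent} holds {request.resource}"
        self.claims[request.resource] = request
        return "approved"


supervisor = Supervisor()
first = supervisor.review(ActionRequest("cost_agent", "orders_dataset", "write", 2))
second = supervisor.review(ActionRequest("speed_agent", "orders_dataset", "write", 1))
print(first)   # approved
print(second)  # rejected: cost_agent holds orders_dataset
```

The second write is refused before it touches the dataset, which is the difference between catching a conflict at the checkpoint and rolling back after the fact.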

Decision Mediation and Priority Alignment

The supervisor layer gives agents different priority levels. These priorities can change depending on the situation, business rules, and past results.

For example, in a logistics environment:

- A delivery optimization agent may dominate during peak hours.

- A cost control agent may take precedence during periods when costs peak.

This flexible system helps reduce conflicts between different goals. It also makes sure agent decisions support the company’s overall objectives.
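One way to picture context-dependent priorities is a lookup that changes with the time of day. The sketch below is illustrative only: the peak window, the agent names, and the `priorities_at` and `resolve` functions are assumptions made for the example, and it simplifies the cost side by letting the cost controller lead whenever the delivery peak is over.

```python
from datetime import time

# Hypothetical peak window; a real schedule would come from business rules.
PEAK_START = time(8, 0)
PEAK_END = time(18, 0)


def priorities_at(now: time) -> dict:
    """Return each agent's priority for the given time of day (higher wins)."""
    if PEAK_START <= now < PEAK_END:
        return {"delivery_optimizer": 2, "cost_controller": 1}
    return {"delivery_optimizer": 1, "cost_controller": 2}


def resolve(proposals: dict, now: time) -> str:
    """Pick the proposal from the agent with the highest current priority."""
    ranks = priorities_at(now)
    winner = max(proposals, key=lambda agent: ranks[agent])
    return proposals[winner]


proposals = {
    "delivery_optimizer": "use express carrier",
    "cost_controller": "use economy carrier",
}
print(resolve(proposals, time(10, 30)))  # use express carrier
print(resolve(proposals, time(22, 0)))   # use economy carrier
```

The same two proposals produce different outcomes depending on context, so neither agent has to know about the other's goal.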

Operational Impact On Enterprise Systems

Adding supervisory control changes how organizations use automation. Systems now focus on preventing problems before they occur rather than just fixing them after the fact.

In large-scale multi-agent systems, this shift reduces error propagation. A single flawed decision no longer cascades across dependent processes.

Companies also get better audit visibility. Every decision goes through a central layer, which creates records that can be traced. This supports Microsoft’s AI governance, especially in industries such as banking and healthcare that have strict rules.
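Because every decision passes through one layer, a traceable record can be as simple as an append-only log. The following is a rough sketch, assuming a hypothetical `AuditLog` class and record fields; it does not reflect any specific Microsoft logging API.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only list of supervisor decisions (illustrative only)."""

    def __init__(self):
        self._entries = []

    def record(self, agent: str, action: str, verdict: str, reason: str) -> dict:
        """Store one decision with a sequence number and UTC timestamp."""
        entry = {
            "sequence": len(self._entries) + 1,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "verdict": verdict,
            "reason": reason,
        }
        self._entries.append(entry)
        return entry

    def trace(self, agent: str) -> list:
        """Return every recorded decision for one agent, in order."""
        return [e for e in self._entries if e["agent"] == agent]

    def export(self) -> str:
        """Serialize the log as JSON Lines for an external reviewer."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


log = AuditLog()
log.record("cost_agent", "write orders_dataset", "approved", "no conflict")
log.record("speed_agent", "write orders_dataset", "rejected", "resource held")
history = log.trace("speed_agent")
print(history[0]["verdict"])  # rejected
```

Keeping the reason alongside the verdict is what lets an auditor reconstruct why a given action was blocked, not just that it was.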

Balancing Autonomy With Oversight

The Limits Of Full Independence

Total autonomy may seem efficient in theory, but in reality, it can lead to unpredictable results. Agents trained on different datasets often interpret goals differently.

This is where agent conflict resolution becomes essential. Without it, systems may appear functional while quietly generating inconsistencies.

The supervisory layer does not remove autonomy. Instead, it sets clear limits. Agents still work on their own, but within a system that keeps an eye on them.

Latency vs Control Trade-Off

Adding a supervisory layer requires more processing. Each decision must be checked before it executes, which adds some latency to every action.

However, Microsoft’s design keeps this delay small. The system uses quick validation models that work almost instantly.

For most enterprise use cases, the trade-off favors control. A slight delay is acceptable if it prevents systemic errors.

Strategic Implications for C-Suite Leaders

For executives, this change means they need to rethink AI investments. It is not just about performance anymore. Control, traceability, and alignment are just as important.

Organizations deploying Azure AI agents, agent orchestration, and multi-agent systems must rethink their architecture. The focus moves from scaling agents to managing interactions between them.

This also changes how companies handle risk. Autonomous systems without supervision can create hidden problems. With a supervisor layer, these risks can be seen and managed.

Competitive Landscape and Industry Direction

Microsoft’s new approach puts pressure on other cloud providers. Businesses will start to expect the same level of control from all platforms.

The industry as a whole is moving toward layered AI systems. Basic agents handle tasks; supervisor layers control and coordinate; and governance frameworks ensure everything follows the rules.

This layered setup shows how complex enterprise AI is becoming. It recognizes that autonomy needs structure to work at scale.

The Next Phase of Controlled Autonomy

Microsoft’s supervisor layer sets a new standard for managing smart systems. It shows that autonomy should be guided, not left to its own devices.

As enterprises expand their use of multi-agent systems, the need for coordination will only intensify. Systems that can resolve conflicts before they surface will define the next phase of AI infrastructure.

The real change is in how organizations view control. It is not a limitation, but a way to achieve reliable growth.

Source: Microsoft Foundry documentation