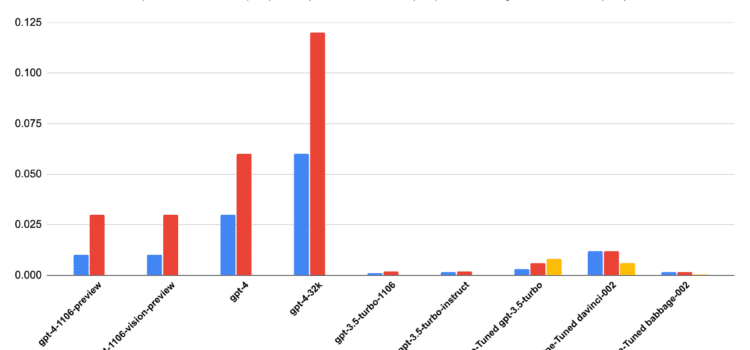

Recent platform logs show a small but important change in how developers use large language models. Developers are using fewer tokens per request, but overall demand is growing quickly. This pattern matters for anyone reasoning about OpenAI pricing and token efficiency, especially teams running large deployments. Greater efficiency per request does not always mean lower total costs.

Understanding the Token Efficiency Trend

Token efficiency means getting the most out of each API call while using fewer input and output tokens. Fewer tokens per request often reflects clearer prompts or more focused model answers. Developers are refining how they write queries to avoid wasting tokens, which leads to shorter, more targeted interactions.

But efficiency on each request does not automatically lead to overall savings. The logs show that developers are making more API calls in total, and this growth in volume more than offsets the savings from using fewer tokens per request. As a result, total usage keeps rising.
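To make the arithmetic concrete, here is a minimal Python sketch comparing two months of traffic. The request counts, tokens per request, and the single blended price of $2.00 per million tokens are hypothetical placeholders, not real OpenAI rates; actual pricing varies by model and usually bills input and output tokens at different rates.

```python
# Minimal sketch: per-request efficiency gains vs. growth in request volume.
# All numbers below are hypothetical placeholders, not real OpenAI prices.

PRICE_PER_MILLION_TOKENS = 2.00  # hypothetical blended USD rate (input + output)


def monthly_cost(requests: int, tokens_per_request: int,
                 price_per_million: float = PRICE_PER_MILLION_TOKENS) -> float:
    """Return spend in USD for a month of traffic at a single blended rate."""
    total_tokens = requests * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million


# Baseline: 1M requests averaging 1,500 tokens each.
before = monthly_cost(requests=1_000_000, tokens_per_request=1_500)

# Later: prompts are 30% leaner (1,050 tokens), but request volume grew 60%.
after = monthly_cost(requests=1_600_000, tokens_per_request=1_050)

print(f"before: ${before:,.2f}")            # before: $3,000.00
print(f"after:  ${after:,.2f}")             # after:  $3,360.00
print(f"change: {after / before - 1:+.0%}") # change: +12%
```

Even though each request uses 30% fewer tokens, spend rises by 12% because request volume grew faster than per-request usage fell.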

Why is Usage Volume Increasing?

The increase in usage comes from more industries adopting AI. More apps are using AI in daily work, from customer support to data analysis. Making API calls is now a regular part of many tasks. This growth leads to higher overall demand.

Another reason is that developers now break tasks into smaller, more frequent requests. This helps improve accuracy, but also means more total API calls. As a result, usage continues to rise.

The Cost Paradox: Efficiency Versus Spend

For finance teams, this trend adds uncertainty. Older cost models assumed that spending rose in a straight line with the number of requests, but when tokens per request fall while request counts climb, that assumption no longer holds. Forecasts now have to account for both efficiency and volume.
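One way to see why, assuming a single blended per-token price $p$ (a simplification, since real pricing usually separates input and output tokens): total spend $C$ is the product of the request count $N$, the average tokens per request $\bar{t}$, and the price.

```latex
C = N \cdot \bar{t} \cdot p
\qquad\Longrightarrow\qquad
\frac{C_1}{C_0} = \frac{N_1}{N_0} \cdot \frac{\bar{t}_1}{\bar{t}_0}
\quad \text{(when } p \text{ is unchanged)}
```

For example, 20% fewer tokens per request combined with 50% more requests gives $1.5 \times 0.8 = 1.2$, so spend rises by 20% despite the efficiency gain.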

This is why total API cost for AI workloads becomes a critical metric. It depends not only on the token price but also on how usage patterns evolve, so monitoring both dimensions is essential for accurate budgeting.

Developer Behavior is Evolving

Developers are quickly changing how they work to get better results. They are getting better at writing prompts, making them shorter and clearer. This helps reduce excess token use.

At the same time, apps are becoming more interactive. Users now expect quick responses and ongoing feedback, leading to more API calls per session. So even with better efficiency, total usage is still rising.

Tools And Automation

Automation tools are also changing how APIs are used. Systems now make API calls automatically, with no person involved. Background tasks, monitoring, and integrations all generate calls of their own, adding to overall demand.

This change shows why it is important to keep a close eye on usage growth. Without good tracking, costs can rise fast. Developers need better tools to watch and manage their usage.
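As a starting point for that kind of tracking, the sketch below keeps running totals per traffic source, so a team can see whether growth comes from interactive users or from automated jobs. It is a hypothetical in-memory helper: it makes no API calls, the source labels are chosen by the caller, and the input and output token counts are assumed to come from the usage data the API reports for each response.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SourceStats:
    """Running totals for one traffic source (e.g. interactive vs. automated)."""
    requests: int = 0
    input_tokens: int = 0
    output_tokens: int = 0

    @property
    def total_tokens(self) -> int:
        return self.input_tokens + self.output_tokens

    @property
    def tokens_per_request(self) -> float:
        # Average size of a request; 0.0 if nothing has been recorded yet.
        return self.total_tokens / self.requests if self.requests else 0.0


class UsageTracker:
    """Hypothetical tracker that aggregates token usage by source label."""

    def __init__(self) -> None:
        self._stats: "defaultdict[str, SourceStats]" = defaultdict(SourceStats)

    def record(self, source: str, input_tokens: int, output_tokens: int) -> None:
        """Add one completed request to the totals for `source`."""
        stats = self._stats[source]
        stats.requests += 1
        stats.input_tokens += input_tokens
        stats.output_tokens += output_tokens

    def report(self) -> dict:
        """Return a snapshot mapping source label -> SourceStats."""
        return dict(self._stats)


tracker = UsageTracker()
tracker.record("interactive", input_tokens=800, output_tokens=200)
tracker.record("automated", input_tokens=300, output_tokens=50)
tracker.record("automated", input_tokens=250, output_tokens=40)

for source, s in tracker.report().items():
    print(source, s.requests, s.total_tokens, round(s.tokens_per_request, 1))
```

Reporting request count and tokens per request side by side keeps both dimensions visible: a source whose requests are shrinking but multiplying shows up immediately.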

Pricing Models Under Pressure

These trends are putting pressure on current pricing models and making them harder to maintain. Providers usually charge per token, which works well when usage is predictable, but changing user habits make usage harder to predict. One possible response is pricing that adjusts with usage volume. Another is subscription-based access for predictable workloads. Both approaches aim to balance efficiency with scalability and to align costs with real-world usage patterns.
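As an illustration of volume-based pricing, the sketch below bills a month's tokens against a tier schedule in which the per-million rate drops as volume grows, and compares it with plain per-token billing. The tier sizes and prices are invented for the example and do not describe any provider's actual plan.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical tier schedule: (tokens in this tier, USD per million tokens).
# A size of None means "all remaining tokens".
Tier = Tuple[Optional[int], float]
TIERS: Sequence[Tier] = (
    (100_000_000, 2.00),  # first 100M tokens
    (400_000_000, 1.50),  # next 400M tokens
    (None, 1.00),         # everything beyond 500M
)


def tiered_cost(total_tokens: int, tiers: Sequence[Tier] = TIERS) -> float:
    """Bill tokens tier by tier, like a marginal tax schedule."""
    remaining = total_tokens
    cost = 0.0
    for size, price_per_million in tiers:
        if remaining <= 0:
            break
        billed = remaining if size is None else min(remaining, size)
        cost += billed / 1_000_000 * price_per_million
        remaining -= billed
    if remaining > 0:
        raise ValueError("tier schedule does not cover all tokens")
    return cost


def flat_cost(total_tokens: int, price_per_million: float = 2.00) -> float:
    """Plain per-token billing at a single hypothetical rate."""
    return total_tokens / 1_000_000 * price_per_million


tokens = 1_680_000_000
print(f"flat:   ${flat_cost(tokens):,.2f}")    # flat:   $3,360.00
print(f"tiered: ${tiered_cost(tokens):,.2f}")  # tiered: $1,980.00
```

Billing each tier at its own rate, rather than repricing the whole month once a threshold is crossed, avoids a cliff where using slightly more tokens could lower the total bill.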

Strategic Considerations For Businesses

Businesses need to rethink how they use AI. Efficiency alone is not enough to keep costs down; they must also manage how much they use the service overall. This calls for a broader strategy.

Knowing how OpenAI pricing and token efficiency work is now a key priority. Companies should look at both how efficient each request is and how much they use overall. This helps them make better choices about scaling.

Balancing Efficiency And Growth

The main challenge is balancing efficiency and growth. Cutting tokens per request is helpful, but the savings only hold if request volume does not grow fast enough to cancel them out. Organizations need to weigh these trade-offs carefully.

This is why tracking total API cost for AI workloads is so important. It shows how costs add up across all requests and helps teams spot where their usage is inefficient.

Managing how usage grows also takes discipline. Teams need to set limits, watch trends, and improve their workflows. Without these steps, any efficiency gains can be lost to higher demand.
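Setting limits can start as simply as the sketch below: a hypothetical in-process guard that checks an estimated request size against a monthly token budget and flags when usage passes a soft threshold. The class name, the 80% default, and the idea of estimating tokens before each call are assumptions for illustration; a real deployment would need shared state across processes and a reliable way to estimate request size.

```python
class TokenBudget:
    """Hypothetical monthly token budget with a soft warning threshold."""

    def __init__(self, monthly_limit: int, warn_fraction: float = 0.8) -> None:
        if monthly_limit <= 0:
            raise ValueError("monthly_limit must be positive")
        self.monthly_limit = monthly_limit
        self.warn_fraction = warn_fraction
        self.used = 0

    def allow(self, estimated_tokens: int) -> bool:
        """Return True if a request of this estimated size fits in the budget."""
        return self.used + estimated_tokens <= self.monthly_limit

    def record(self, actual_tokens: int) -> None:
        """Add the tokens a completed request actually consumed."""
        self.used += actual_tokens

    def should_warn(self) -> bool:
        """True once usage reaches the soft threshold (80% of the limit by default)."""
        return self.used >= self.warn_fraction * self.monthly_limit

    def reset(self) -> None:
        """Call at the start of each billing period."""
        self.used = 0


budget = TokenBudget(monthly_limit=1_000_000)
budget.record(700_000)
print(budget.allow(200_000))  # True: 900,000 fits under the limit
print(budget.allow(400_000))  # False: 1,100,000 would exceed it
print(budget.should_warn())   # False: 700,000 is below the 800,000 threshold
budget.record(150_000)
print(budget.should_warn())   # True: 850,000 has crossed the threshold
```

Separating the soft warning from the hard limit gives teams time to review a workflow before requests start being refused.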

Final Thoughts on Cost Dynamics and Scaling

Changes in API usage indicate that the ecosystem is maturing. Developers are becoming more efficient, but they are also relying more on AI. This combination poses new challenges for cost management.

To succeed, businesses need to understand OpenAI pricing and token efficiency. It is not only about using fewer tokens, but also about managing total usage. Companies that adapt to this will be able to grow sustainably.

In the end, efficiency and growth need to work together. Focusing on just one can cause problems. Taking a complete approach helps businesses innovate without facing surprise costs.